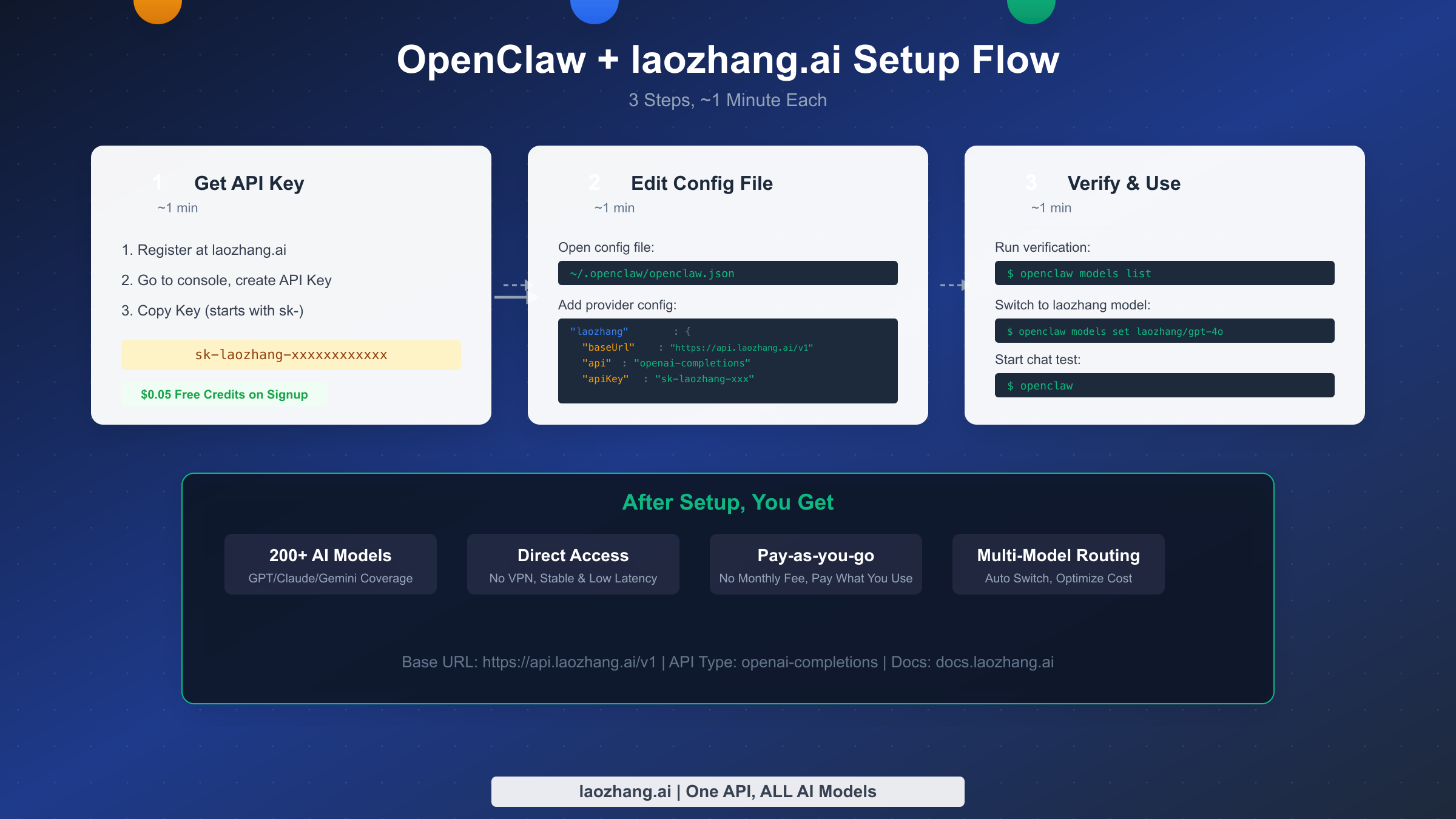

Connecting OpenClaw to laozhang.ai takes one step: add a provider configuration block to the models.providers section of your openclaw.json file. Fill in the baseUrl (https://api.laozhang.ai/v1), your API Key, and the api type (openai-completions), and you'll have access to over 200 AI models including GPT-4o, Claude Sonnet 4, and Gemini. The entire process takes less than 3 minutes, and once configured, you can switch freely among models within OpenClaw's terminal environment, with pay-as-you-go pricing for your AI-powered coding workflow.

Why Choose laozhang.ai as Your OpenClaw API Provider

OpenClaw is currently one of the most popular open-source AI coding agents (GitHub 199K Stars, March 2026 data), with built-in support for fourteen providers including OpenAI, Anthropic, and Google Gemini. However, for many developers, using these official APIs directly presents three practical obstacles: unstable network connections causing request timeouts, the need to bind an international credit card to register and pay, and no single provider covering all the models you want to use. This is precisely where API aggregation platforms like laozhang.ai deliver their core value.

laozhang.ai aggregates over 200 mainstream AI models under a single OpenAI-compatible endpoint (docs.laozhang.ai, verified March 2026). With just one API Key, you can access GPT-4o, Claude Sonnet 4, Gemini 2.5 Pro, DeepSeek R1, Kimi K2.5, and models from many other providers. For OpenClaw users, this means you don't need to register separate accounts and keys with OpenAI, Anthropic, Google, and other platforms -- a single laozhang.ai configuration replaces them all. More importantly, laozhang.ai provides direct access without requiring a VPN or proxy, which is critical for the frequent AI interactions that occur during day-to-day programming.

From a cost perspective, laozhang.ai uses a pay-as-you-go model with no monthly fees. You receive $0.05 in free credits upon registration. Compared to using official APIs directly (which require prepaying $5-20), or purchasing a $20/month ChatGPT Plus subscription, laozhang.ai lets you test with a small amount first and then decide how much to top up based on actual usage. For developers just starting to explore AI coding assistants, this risk-free entry point is clearly more approachable. If you haven't installed OpenClaw yet, check out the OpenClaw Installation & Deployment Guide to set up your environment first, then come back to continue with the API configuration in this article.

Pre-Configuration Checklist

Before you start editing configuration files, you need two things ready: a laozhang.ai API Key, and confirmation that OpenClaw is correctly installed on your system. Each of these preparation steps takes about 1 minute, and once done, you can move on to the core configuration.

Getting Your laozhang.ai API Key

Visit the laozhang.ai website and complete registration, then navigate to the API Key management page in the console and click "Create New Key" to generate an API Key starting with sk-. This key is your sole credential for communicating with the laozhang.ai service, so be sure to copy and save it to a secure location immediately after creation -- you won't be able to view the full key again after closing the page. The registration process doesn't require a credit card, and the system automatically grants $0.05 in free credits, which is enough to complete all the configuration verification steps in this article. If you've previously encountered API Key issues while using OpenClaw, the OpenClaw API Key Error Troubleshooting Guide provides detailed diagnostic methods.

Verifying Your OpenClaw Installation

Open your terminal and run openclaw --version to confirm that OpenClaw is properly installed. If the command returns a version number (we recommend using the latest version for best compatibility), the installation is fine. Next, run openclaw doctor to check the overall health status -- this diagnostic command inspects your config file path, configured providers, authentication status, and other key information. If you see any red warnings, resolve them before proceeding. OpenClaw's configuration file is located at ~/.openclaw/openclaw.json (some systems use ~/.config/openclaw/openclaw.json5), and you can open it with any text editor. If this file doesn't exist yet, running openclaw once will automatically create a default configuration.

3-Minute Core Configuration

The core configuration is fundamentally straightforward: add a provider definition named laozhang to the models.providers section of openclaw.json, telling OpenClaw where this provider's API endpoint is, what key to use for authentication, and which communication protocol to follow. If you've read our OpenClaw Custom Model Guide before, you'll notice the configuration structure is exactly the same -- laozhang.ai is simply a standard custom provider.

Minimal Configuration (Recommended for Beginners)

Open ~/.openclaw/openclaw.json, find (or create) the models section, and add the following configuration:

```json
{
  "models": {
    "mode": "merge",
    "providers": {
      "laozhang": {
        "baseUrl": "https://api.laozhang.ai/v1",
        "apiKey": "sk-laozhang-your-key-here",
        "api": "openai-completions",
        "models": [
          { "id": "gpt-4o-mini", "name": "GPT-4o Mini" },
          { "id": "gpt-4o", "name": "GPT-4o" },
          { "id": "claude-sonnet-4-20250514", "name": "Claude Sonnet 4" },
          { "id": "claude-opus-4", "name": "Claude Opus 4" },
          { "id": "deepseek-chat", "name": "DeepSeek V3" }
        ]
      }
    }
  }
}
```

There are several critical points in this configuration to pay attention to. mode: "merge" ensures that the laozhang provider you're adding will be merged with OpenClaw's 14 built-in providers rather than replacing them -- if you omit this line, all your previously configured built-in providers (such as direct OpenAI access) will stop working. The baseUrl must include the /v1 suffix, which is the standard path prefix for OpenAI-compatible APIs; omitting it will cause 404 errors. The api field is set to openai-completions because laozhang.ai implements the full OpenAI Chat Completions API format -- even when you call Claude or Gemini models through it, the request format remains OpenAI-compatible, with laozhang.ai handling the protocol translation on the backend.
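To make the role of the /v1 suffix concrete, here is a minimal Python sketch of the request an OpenAI-compatible client would build from these three fields. It assumes laozhang.ai follows the standard OpenAI conventions (a /chat/completions path under baseUrl and Bearer-token authentication); the helper name build_chat_request and the key value are hypothetical, and this is not OpenClaw's own code.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style Chat Completions request."""
    # The endpoint path is appended to baseUrl, so a baseUrl without /v1
    # would produce a URL the server does not recognise.
    url = base_url.rstrip("/") + "/chat/completions"
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key: replace with your own before sending anything.
req = build_chat_request("https://api.laozhang.ai/v1", "sk-your-key-here", "gpt-4o-mini", "ping")
print(req.get_method(), req.full_url)

# Sending the request consumes a small amount of credit, so it is left commented out:
# with urllib.request.urlopen(req, timeout=30) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Building the request separately from sending it lets you inspect the final URL and headers before spending any credit.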

Protecting Your Key with Environment Variables

While writing your API Key directly in the config file is convenient, it's not secure -- especially if your dotfiles are hosted in a Git repository. A safer approach is to use environment variable references. Change the apiKey field in your config to an environment variable reference:

```json
"apiKey": "${LAOZHANG_API_KEY}"
```

Then add the following to your shell configuration file (~/.zshrc or ~/.bashrc):

```bash
export LAOZHANG_API_KEY="sk-laozhang-your-key-here"
```

Run source ~/.zshrc (or restart your terminal) to activate the environment variable. This way, your API Key won't appear in the configuration file, and even if someone sees your openclaw.json, they won't be able to retrieve the key. OpenClaw automatically resolves the ${VAR_NAME} syntax when loading the configuration, reading the actual value from the corresponding environment variable.
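The substitution itself is simple to picture. The sketch below shows one way a ${VAR_NAME} reference could be resolved; it illustrates the idea only, and OpenClaw's actual resolver may behave differently (for example, in how it treats unset variables). The function name expand_env is hypothetical.

```python
import os
import re

# Matches ${NAME} where NAME is a typical shell-style identifier.
_VAR = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_env(value: str, env=None) -> str:
    """Replace every ${NAME} in value with env[NAME]; fail loudly if NAME is unset."""
    env = os.environ if env is None else env

    def _sub(match):
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return _VAR.sub(_sub, value)


# A dict stands in for the real environment so the example is reproducible.
print(expand_env("${LAOZHANG_API_KEY}", {"LAOZHANG_API_KEY": "sk-example"}))  # prints sk-example
```

Failing on an unset variable, rather than substituting an empty string, surfaces a forgotten export immediately instead of as a later 401 error.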

Quick Verification After Configuration

After saving the configuration file, run the following commands to verify everything is working:

```bash
# List the models defined for the laozhang provider
openclaw models list --provider laozhang

# Switch to a laozhang model
openclaw models set laozhang/gpt-4o-mini

# Start a chat session to test
openclaw
```

If openclaw models list correctly displays the 5 models you defined in the configuration, the provider has been successfully parsed by OpenClaw. Next, use openclaw models set to switch to a model from the laozhang provider, then launch openclaw and send a simple message to test connectivity. If you receive a normal response, congratulations -- laozhang.ai has been successfully connected to OpenClaw.

Recommended Model Configurations

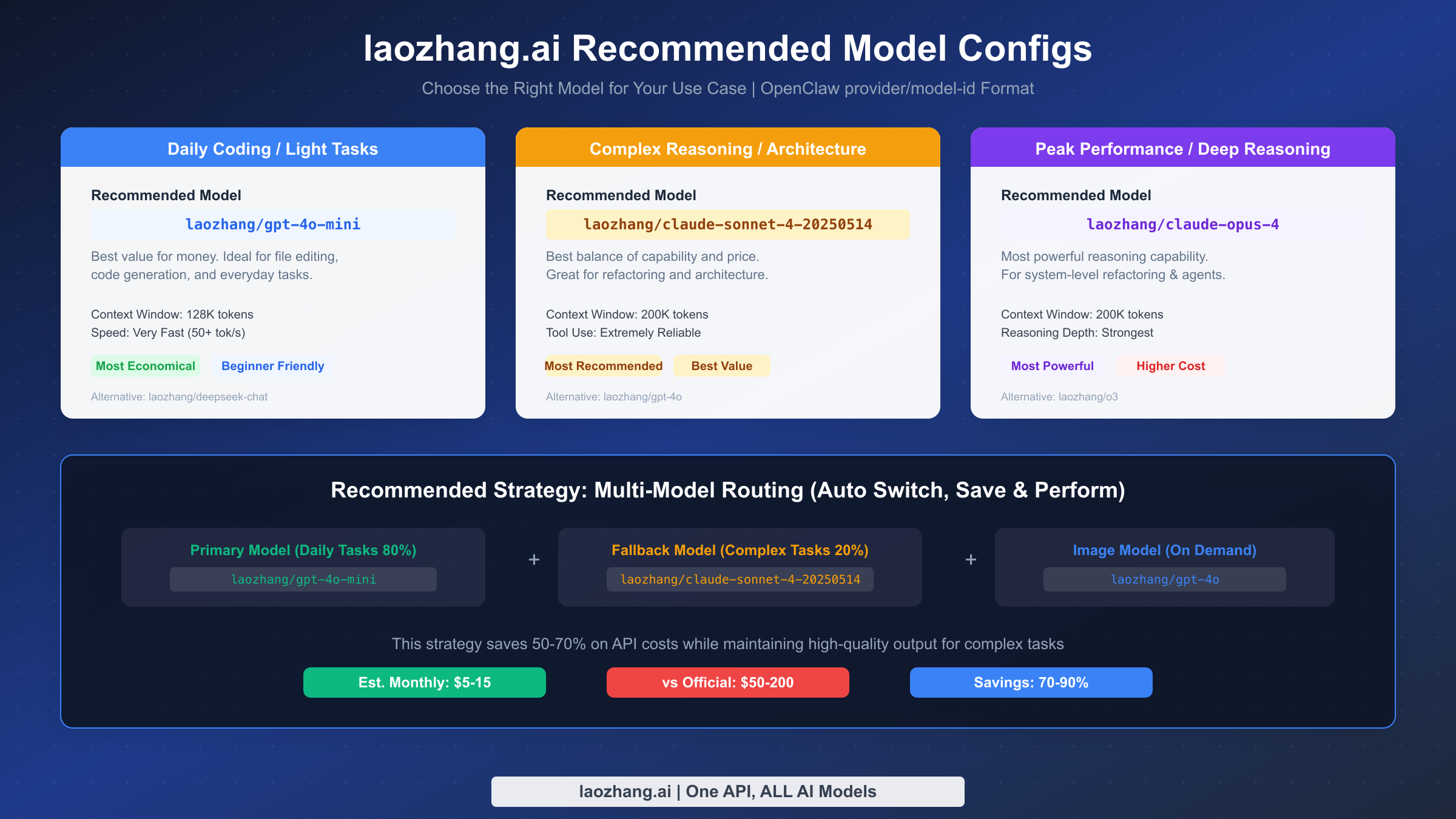

laozhang.ai provides access to over 200 models, but for OpenClaw's AI coding use cases, you don't need to configure all of them -- selecting 3-5 core models based on task type is sufficient. Below are battle-tested model recommendations arranged from lightweight to heavy-duty usage scenarios.

Daily Coding Tasks: laozhang/gpt-4o-mini

GPT-4o Mini offers the best value for money, with a 128K context window and extremely fast response speeds (50+ tokens/s). For file editing, simple code generation, code formatting, and quick Q&A -- the everyday operations -- its performance is more than adequate, while costing roughly one-tenth of GPT-4o. If your daily interactions with OpenClaw are primarily these lightweight tasks (as they are for most developers), setting GPT-4o Mini as your primary model can significantly reduce your API expenses. An alternative is laozhang/deepseek-chat (DeepSeek V3), which performs equally well for Chinese-language coding scenarios and is even more affordable.

Complex Reasoning & Architecture Design: laozhang/claude-sonnet-4-20250514

When you need to perform multi-file refactoring, complex algorithm design, architectural analysis, or deep code reviews, Claude Sonnet 4 strikes the best balance between capability and cost. It features a 200K context window, extremely reliable tool calling, and excels at understanding contextual relationships across large codebases. Compared to GPT-4o at a similar tier, Claude Sonnet 4 typically delivers superior accuracy and consistency in code generation, which is why it's OpenClaw's officially recommended default model. An alternative is laozhang/gpt-4o, which can serve as your image model when you need multimodal capabilities (such as analyzing UI issues from screenshots).

Peak Performance & Deep Reasoning: laozhang/claude-opus-4

Claude Opus 4 is one of the most powerful reasoning models available, suitable for system-level refactoring, complex Agent orchestration, and architecture design tasks that require deep thinking. It has the highest capability ceiling, but its cost is correspondingly higher. We recommend configuring it as a fallback model, manually switching to it only when your primary model can't handle a complex task, rather than using it as your everyday default. An alternative is laozhang/o3 (OpenAI's reasoning model), which has unique strengths in math-heavy and logic-intensive scenarios.

Here's how to configure primary and fallback models in openclaw.json. OpenClaw will use GPT-4o Mini by default for daily tasks, and only activate fallback models when you manually switch via the /model command or when the primary model is unavailable:

```json
{
  "agents": {
    "defaults": {
      "model": {
        "primary": "laozhang/gpt-4o-mini",
        "fallbacks": ["laozhang/claude-sonnet-4-20250514"]
      },
      "imageModel": {
        "primary": "laozhang/gpt-4o"
      }
    }
  }
}
```

Multi-Model Routing & Cost Optimization

Smart use of multi-model routing strategies is key to controlling API costs. Based on real-world usage, approximately 80% of daily coding interactions can be handled by lightweight models (GPT-4o Mini or DeepSeek V3), with only 20% of complex tasks requiring high-end models (Claude Sonnet 4 or Opus 4). By setting a lightweight model as your primary and keeping high-end models as on-demand fallbacks, you can maintain output quality while keeping monthly API costs in the $5-15 range. Compared to $50-200/month when using official APIs directly, that is a saving of roughly 70-90%. If you have deeper cost management needs for OpenClaw, the OpenClaw Cost Optimization & Token Management Guide provides detailed strategy analysis.
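The arithmetic behind the 80/20 split can be checked with a few lines of Python. This is a rough sketch under two stated assumptions: every task consumes the same number of tokens, and the lightweight model costs one-tenth of the high-end one (borrowing the GPT-4o Mini versus GPT-4o ratio quoted earlier as a stand-in). Real price ratios and token counts will differ.

```python
def blended_cost_ratio(light_share: float, light_to_heavy_price: float) -> float:
    """Cost of a light/heavy mix relative to sending every task to the heavy model."""
    if not 0.0 <= light_share <= 1.0:
        raise ValueError("light_share must be between 0 and 1")
    # Light tasks pay the discounted price; the remainder pay full price.
    return light_share * light_to_heavy_price + (1.0 - light_share)


# Assumed inputs: 80% of tasks on the light model, priced at 0.1x the heavy model.
ratio = blended_cost_ratio(light_share=0.8, light_to_heavy_price=0.1)
print(f"relative cost: {ratio:.2f}, saving: {1 - ratio:.0%}")  # relative cost: 0.28, saving: 72%
```

Under these assumptions the mix costs about 28% of an all-high-end setup. Complex tasks usually consume more tokens than simple ones, so the saving in practice is likely somewhat smaller than this idealized figure.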

OpenClaw's fallback mechanism is practical in real-world use. When the primary model returns an error due to temporary overload or network fluctuation, the system automatically tries the next model in the fallbacks list, and the switch is transparent to the user. You can arrange multiple fallback models by priority in the agents.defaults.model.fallbacks array. We recommend mixing models from different upstream vendors, so that even if one vendor experiences an outage, models from another can take over. For example, use laozhang/gpt-4o-mini as your primary, with fallbacks of laozhang/deepseek-chat and laozhang/claude-sonnet-4-20250514 in that order, creating a progressive chain from cost-efficiency to capability.
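The try-in-order behaviour described above can be sketched as follows. This is an illustration of the general pattern, not OpenClaw's implementation; call_with_fallbacks and fake_send are hypothetical names, and fake_send simulates an overloaded primary model instead of making a network call.

```python
from typing import Callable, Sequence, Tuple


def call_with_fallbacks(models: Sequence[str], send: Callable[[str], str]) -> Tuple[str, str]:
    """Try each model in priority order; return (model_used, reply) from the first success."""
    errors = []
    for model in models:
        try:
            return model, send(model)
        except Exception as exc:  # a real client would catch only transient errors
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def fake_send(model: str) -> str:
    """Stand-in for an API call: the primary model is simulated as overloaded."""
    if model == "laozhang/gpt-4o-mini":
        raise TimeoutError("upstream overloaded")
    return f"reply from {model}"


chain = [
    "laozhang/gpt-4o-mini",               # primary
    "laozhang/deepseek-chat",             # first fallback
    "laozhang/claude-sonnet-4-20250514",  # second fallback
]
print(call_with_fallbacks(chain, fake_send))
# ('laozhang/deepseek-chat', 'reply from laozhang/deepseek-chat')
```

Collecting the per-model errors before raising makes it easier to tell a single-vendor outage from a problem that affects the whole chain, such as an invalid key.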

After configuration, you can use openclaw models fallbacks list to view your current fallback chain, openclaw models fallbacks add laozhang/deepseek-chat to quickly add a fallback model from the command line, or openclaw models fallbacks clear to reset all fallback settings. During daily use, if a particular model's response isn't ideal for the current task, you can use the /model laozhang/claude-sonnet-4-20250514 command in the OpenClaw chat interface to temporarily switch to a more capable model, then switch back to your primary model to continue routine work.

The following table compares estimated monthly costs for different model combination strategies, helping you choose the right configuration based on your usage intensity:

| Usage Pattern | Primary Model | Fallback Model | Est. Monthly Cost | Best For |
|---|---|---|---|---|
| Light Use | gpt-4o-mini | None | $2-5 | Occasional AI assistance |
| Standard Use | gpt-4o-mini | claude-sonnet-4 | $5-15 | Daily developers (recommended) |
| Heavy Use | claude-sonnet-4 | claude-opus-4 | $15-40 | Full-time AI coding |
| Official Direct | claude-sonnet-4 (official) | - | $50-200 | Comparison reference |

Configuration Verification & Troubleshooting

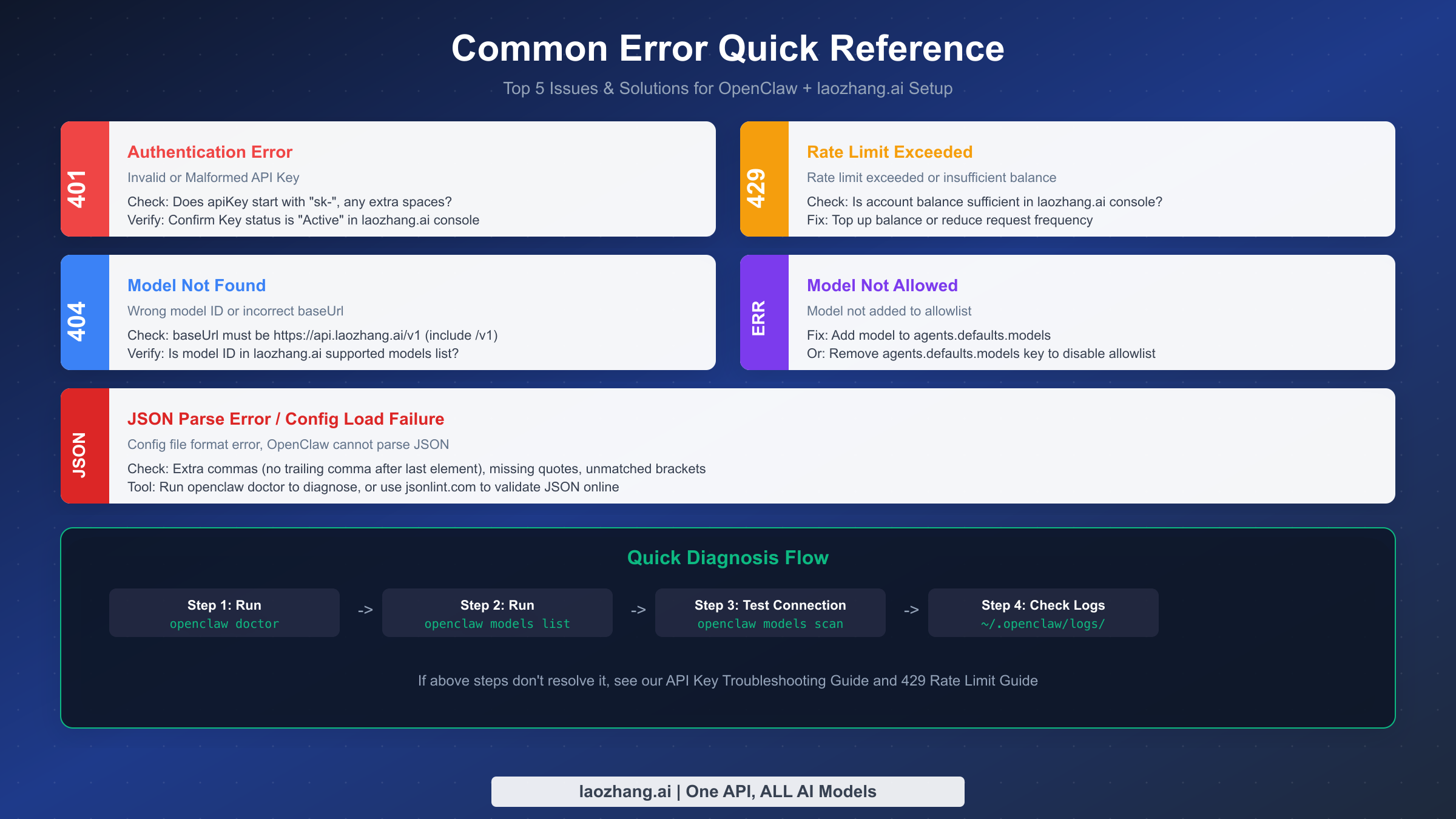

After completing the configuration, a systematic verification process helps you quickly identify potential issues. Below is a recommended four-step diagnostic flow arranged in execution order, with each step helping you narrow down the problem scope.

The first step is to run openclaw doctor. This built-in diagnostic tool checks your config file's JSON syntax, provider definition completeness, and authentication status. If the configuration file has formatting errors (such as trailing commas or missing quotes), doctor will point directly to the problematic line number. The second step is to run openclaw models list --provider laozhang to confirm that all the models you defined are correctly parsed -- if this shows nothing, either the provider name doesn't match or the models array definition has an issue. The third step is to run openclaw models scan, which actually sends requests to laozhang.ai to test connectivity and detects each model's tool calling and image processing capabilities. The fourth step is to check the log files at ~/.openclaw/logs/, which contain complete API request and response records and serve as your last resort for diagnosing tricky issues.

Common Error Quick Reference

401 Authentication Error means your API Key is invalid or malformed. First check whether the key starts with sk- and whether there are any extra spaces or line breaks. If you're using an environment variable reference (${LAOZHANG_API_KEY}), confirm that the variable has been properly exported -- run echo $LAOZHANG_API_KEY to verify. Also confirm in the laozhang.ai console that the key status is "Active" rather than "Disabled."

429 Rate Limit Exceeded means you have exceeded the request rate limit or your account balance is insufficient. First check your account balance in the laozhang.ai console: when the balance is zero, all requests return 429. If your balance is sufficient but you still encounter this error, you are probably sending too many requests in a short period; wait a moment and retry. For an in-depth analysis of 429 errors, see OpenClaw 429 Rate Limit Explained.

404 Model Not Found is typically caused by two things: the baseUrl is missing the /v1 suffix, or the model ID is misspelled. Carefully verify that baseUrl is https://api.laozhang.ai/v1 (note the trailing /v1), then confirm that the id fields in the models array match the exact model IDs supported by laozhang.ai. You can look up all available model IDs on the model list page at docs.laozhang.ai.

Model Not Allowed occurs when you've configured an agents.defaults.models allowlist but haven't added the new model to it. There are two solutions: either add the laozhang models to the allowlist array, or simply delete the agents.defaults.models key entirely to disable the allowlist restriction (the latter is better suited for personal use scenarios).
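Several of the errors above can be caught before any request is sent. The sketch below is a small pre-flight check that applies the rules stated in this article (merge mode, the /v1 suffix, the openai-completions api type, a key that starts with sk- or is an environment variable reference) to the text of a config file. The function lint_provider is a hypothetical helper, not part of OpenClaw, and it does not replace openclaw doctor.

```python
import json


def lint_provider(config_text: str, provider: str = "laozhang") -> list:
    """Return a list of human-readable problems found in one provider block."""
    try:
        config = json.loads(config_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON at line {exc.lineno}, column {exc.colno}: {exc.msg}"]
    if not isinstance(config, dict):
        return ["top level of the config must be a JSON object"]

    models = config.get("models", {})
    block = models.get("providers", {}).get(provider)
    if block is None:
        return [f"provider '{provider}' not found under models.providers"]

    problems = []
    if models.get("mode") != "merge":
        problems.append('models.mode is not "merge"')
    if not block.get("baseUrl", "").rstrip("/").endswith("/v1"):
        problems.append("baseUrl does not end in /v1 (likely cause of 404)")
    if block.get("api") != "openai-completions":
        problems.append('api is not "openai-completions"')
    key = block.get("apiKey", "")
    if key != key.strip() or not key.startswith(("sk-", "${")):
        problems.append("apiKey has stray whitespace or an unexpected prefix (likely cause of 401)")
    if not block.get("models"):
        problems.append("models array is missing or empty")
    return problems


# Example: a config with a missing /v1 suffix and a key with a leading space.
sample = '{"models": {"mode": "merge", "providers": {"laozhang": {"baseUrl": "https://api.laozhang.ai", "apiKey": " sk-abc", "api": "openai-completions", "models": [{"id": "gpt-4o-mini"}]}}}}'
for problem in lint_provider(sample):
    print(problem)
```

To check a real file, pass its contents as the first argument, for example the text read from ~/.openclaw/openclaw.json.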

Advanced Tips & Best Practices

Once you've mastered the basic configuration, the following advanced tips will help you use the OpenClaw + laozhang.ai combination more efficiently.

Use model aliases for faster switching. Frequently typing laozhang/claude-sonnet-4-20250514 is obviously inefficient. OpenClaw supports creating short aliases for models -- you can run openclaw models aliases add sonnet laozhang/claude-sonnet-4-20250514 to create an alias called sonnet, and then simply type /model sonnet in chat to switch instantly. We recommend creating aliases for your 3-5 most-used models, such as mini for GPT-4o Mini, opus for Claude Opus 4, and ds for DeepSeek V3, so switching between models for different tasks takes just a few keystrokes.

Declare model capabilities for smarter routing. Adding optional fields to model definitions in the models array allows OpenClaw to make more intelligent routing decisions. For example, declaring "input": ["text", "image"] for models that support image input tells OpenClaw to select such a model, rather than a text-only one, when it needs to analyze screenshots or images. Similarly, setting "reasoning": true for models with strong reasoning capabilities causes OpenClaw to prioritize them for complex tasks. Setting an accurate contextWindow value prevents unnecessary context truncation: if your model actually supports a 128K context but you don't declare it, OpenClaw may truncate content when sending large files.
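To see why these declarations matter, consider how a router might consume them. The sketch below is purely illustrative and is not OpenClaw's routing logic: pick_model, the DEFAULT_CONTEXT fallback, and the capability values assigned to each model are assumptions chosen for the example. Only the field names input, reasoning, and contextWindow come from the text above.

```python
from typing import Optional

# Hypothetical conservative limit used when a model declares no contextWindow.
DEFAULT_CONTEXT = 8192

# Illustrative declarations; the capability values are assumptions for this example.
MODELS = [
    {"id": "gpt-4o-mini", "input": ["text"], "contextWindow": 128000},
    {"id": "gpt-4o", "input": ["text", "image"], "contextWindow": 128000},
    {"id": "claude-sonnet-4-20250514", "input": ["text"], "reasoning": True, "contextWindow": 200000},
]


def pick_model(models, needs_image=False, needs_reasoning=False, prompt_tokens=0) -> Optional[str]:
    """Return the id of the first model whose declared capabilities fit the task."""
    for model in models:
        if needs_image and "image" not in model.get("input", ["text"]):
            continue
        if needs_reasoning and not model.get("reasoning", False):
            continue
        if prompt_tokens > model.get("contextWindow", DEFAULT_CONTEXT):
            continue
        return model["id"]
    return None


print(pick_model(MODELS))                         # gpt-4o-mini
print(pick_model(MODELS, needs_image=True))       # gpt-4o
print(pick_model(MODELS, prompt_tokens=150_000))  # claude-sonnet-4-20250514
```

A model with no declared contextWindow falls back to the small default here, which mirrors the point above: an undeclared capability leads a router to make a more conservative choice than the model requires.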

Multi-environment configuration strategies. If you work on both personal and company projects, you can use environment variables to differentiate API keys and model strategies. In your personal environment, use LAOZHANG_API_KEY pointing to your personal key and configure the cost-effective GPT-4o Mini as your primary model; in your company environment, use a different environment variable pointing to the company account's key and configure Claude Sonnet 4 as the primary model to ensure code quality. OpenClaw's environment variable reference syntax (${VAR_NAME}) makes this kind of multi-environment switching very natural. To explore more advanced OpenClaw model configuration options, including local models (Ollama, vLLM) and enterprise deployment scenarios, refer to the OpenClaw LLM Setup Complete Guide.

Summary & Next Steps

Through this article's 3-step configuration flow -- get your API Key, edit openclaw.json, verify and start using -- you've successfully connected OpenClaw to laozhang.ai and gained the ability to access 200+ AI models through a single unified endpoint. The core of the entire configuration comes down to three key parameters in models.providers: set baseUrl to https://api.laozhang.ai/v1, set api to openai-completions, and fill in your key for apiKey.

From here, you can continue optimizing your configuration based on your actual needs. To save costs, follow this article's recommended multi-model routing strategy: set GPT-4o Mini as your primary model and Claude Sonnet 4 as your fallback, handling 80% of daily tasks with the low-cost model. For deeper customization of OpenClaw's model behavior, including adding local models, configuring enterprise proxies, or implementing more complex routing strategies, the OpenClaw Custom Model Complete Guide is your next reference.

One final practical tip: before ramping up your usage, use the $0.05 free credits from laozhang.ai to experiment with different models and find the combination that best fits your workflow. Every developer codes differently, and the optimal model choice varies accordingly. Deciding on your go-to model through hands-on experience is more reliable than any recommendation list. You can find detailed usage documentation and the full model list for laozhang.ai at docs.laozhang.ai.