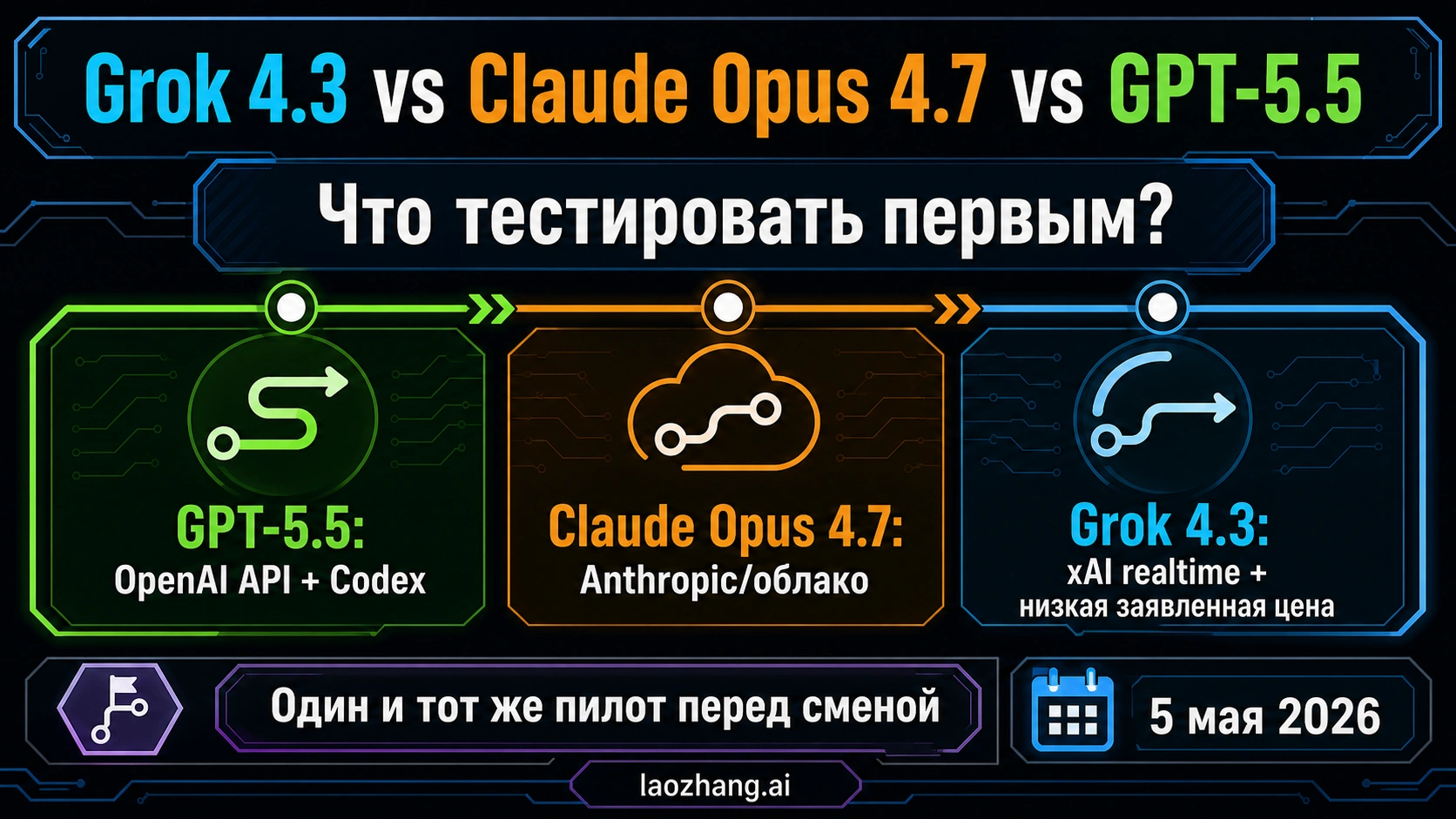

As of May 5, 2026, the safer answer is not a universal winner but a routing decision. Test GPT-5.5 first if your product already lives in the OpenAI API, Responses API, Codex, structured outputs, hosted tools or an OpenAI eval harness. Keep Claude Opus 4.7 as the premium Anthropic or cloud control route if a coding-agent error is expensive. Start with Grok 4.3 if the reason for the comparison is an xAI account, realtime/X search, a lower listed token price or a long-context pilot.

Do not change the production default after a single ranking, video or launch-week impression. The comparison becomes useful only when all three routes run the same prompt, the same files, the same tools, the same budget, the same scoring rubric and the same rollback threshold.

| First route | When it makes sense | Do not automatically assume that |
|---|---|---|
| GPT-5.5 | OpenAI-native API, Responses API, Codex, tool-heavy reasoning, structured outputs, existing OpenAI evals. | API access, the Codex picker, the API key, credits, rate limits and the billing surface are one and the same deal. |
| Claude Opus 4.7 | Anthropic API, Claude products, Bedrock, Vertex AI, Microsoft Foundry or high-risk coding agents. | Premium control is necessarily cheaper once output length, tokenizer behavior, retries and reviewer time are counted. |
| Grok 4.3 | xAI route, realtime/X freshness, lower listed token price or a long-context experiment. | A low token price replaces a same-task check of quality and full cost. |

Start with the route you can actually call

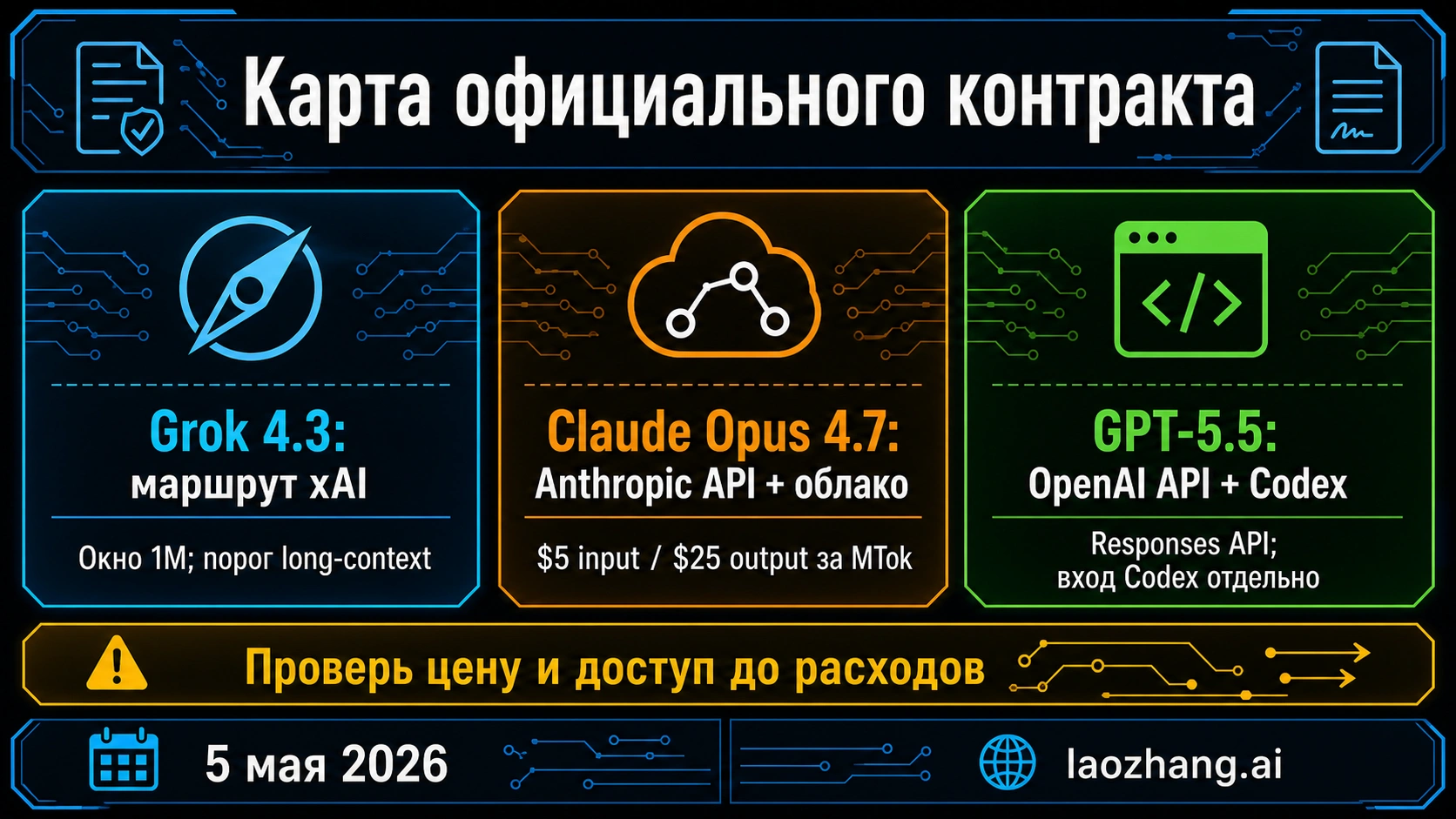

A useful comparison starts with route ownership. OpenAI owns the GPT-5.5 API, the Responses API and the Codex surface. Anthropic owns Claude Opus 4.7 in Claude products, the Anthropic API and cloud-provider routes. xAI owns Grok 4.3, console visibility, aliases, server-side search tools, long-context thresholds and account availability. A third-party review can suggest what to include in the test, but it should not decide the model ID, endpoint behavior, pricing row, context limit or production access.

| Contract item | GPT-5.5 | Claude Opus 4.7 | Grok 4.3 |
|---|---|---|---|
| Route owner | OpenAI developer platform, Responses API, Codex product surface | Anthropic API, Claude products, Bedrock, Vertex AI, Microsoft Foundry | xAI API, xAI Console, Grok model docs, server-side search tools |
| Model label to verify | GPT-5.5 and dated snapshots in the OpenAI developer docs | claude-opus-4-7 and cloud model IDs | grok-4.3, grok-4.3-latest or the current console alias |
| Strongest first-test reason | OpenAI-native tool loops, structured outputs, Codex, existing eval harness | Premium control for high-risk coding agents and cloud deployment | xAI realtime/X search, lower listed price, long-context pilot |
| Cost caveat | Keep OpenAI API pricing and Codex credits separate. | Anthropic lists $5 input and $25 output per MTok, plus a tokenizer caveat. | Recheck the xAI console, aliases, long-context threshold and search-tool costs. |
| Do not merge | API route, ChatGPT/Codex login, API-key auth, credits, rate limits | Claude app, Anthropic API, cloud route, priority tier, tokenizer cost | Grok chat, xAI API, Web/X Search, aliases, region/account availability |

OpenAI's GPT-5.5 guidance is framed around the Responses API: reasoning effort, verbosity, Structured Outputs, prompt caching, hosted tools, state handling and the Agents SDK. The Codex docs also position GPT-5.5 as the frontier Codex model for complex coding, computer use, knowledge work and research workflows. For an OpenAI-native team the practical value is reduced friction: you can test the model inside the same operating surface that will run production.

Anthropic's Opus 4.7 materials say the model is generally available through Claude products, the Anthropic API, Amazon Bedrock, Google Vertex AI and Microsoft Foundry. The public contract includes claude-opus-4-7, 1M context, $5 input and $25 output per million tokens, plus a tokenizer caveat. That makes Opus a natural control route where severe defects, rollback and reviewer time matter more than the raw price row.

xAI's Grok 4.3 story has to be read together with its server-side search tools. Realtime events require Web Search or X Search; the model does not supply them from its own knowledge. If realtime/X freshness is the reason to test Grok, the pilot must count tool calls, search failures, citation quality and tool cost, not only model tokens.

Which workload points to which first test

The clean question is not "which model is best." The useful question is "which route deserves the first controlled test for this workload." This prevents a benchmark argument from replacing a deployable experiment.

| Workload | First route | Why it fits | What to score |
|---|---|---|---|
| OpenAI-native coding, Codex, Responses API tools, structured outputs | GPT-5.5 | Closest to OpenAI tooling, the Codex workflow and an existing eval harness. | Accepted diffs, tool recovery, format stability, review time, token and credit use. |
| Correctness-sensitive coding agents, multi-tool workflows, cloud deployment | Claude Opus 4.7 | Premium Anthropic/cloud control when failure cost is high. | Defect severity, rollback behavior, tool-call reliability, reviewer trust, latency. |
| Realtime or X-informed answers | Grok 4.3 | xAI owns the Grok route and the search tools that can bring live data into a request. | Freshness, tool count, search cost, citation quality, false freshness claims. |
| Long-context repository, document or evidence analysis | Route-specific test | All three have large-context stories, but limits and price thresholds differ. | Truncation, recall, output length, long-context threshold, completed-task cost. |
| Budget-sensitive exploration | Grok 4.3 first, then a control run against GPT-5.5 or Opus | The listed price can make Grok attractive if quality and retries hold. | Success rate, retry count, p95 latency, repair time, cost per accepted result. |
| Production default change | Dual-run the candidate against the incumbent | Public comparisons cannot measure your prompts, files, permissions or failure cost. | Regressions, human minutes, cost, rollback success, user-visible failures. |
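The workload table can live next to a pilot harness as a plain lookup. The sketch below is a minimal illustration, assuming hypothetical workload names and using display labels (not API model IDs) for the routes. It also drops any suggested route the team cannot actually call, because the comparison should start with routes you can call. The production-default row is a dual-run rule rather than a route, so it is left out.

```python
from __future__ import annotations

from enum import Enum


class Workload(Enum):
    """Hypothetical workload labels mirroring the rows of the workload table."""
    OPENAI_NATIVE = "openai_native"            # Codex, Responses API tools, structured outputs
    HIGH_RISK_CODING = "high_risk_coding"      # correctness-sensitive agents, cloud deployment
    REALTIME_X = "realtime_x"                  # realtime or X-informed answers
    LONG_CONTEXT = "long_context"              # repository, document or evidence analysis
    BUDGET_EXPLORATION = "budget_exploration"  # cost-driven exploration


# Ordered first-test routes per workload (display labels, not API model IDs).
FIRST_TEST: dict[Workload, tuple[str, ...]] = {
    Workload.OPENAI_NATIVE: ("GPT-5.5",),
    Workload.HIGH_RISK_CODING: ("Claude Opus 4.7",),
    Workload.REALTIME_X: ("Grok 4.3",),
    # Limits and price thresholds differ, so each deployable route gets its own test.
    Workload.LONG_CONTEXT: ("GPT-5.5", "Claude Opus 4.7", "Grok 4.3"),
    # Grok first, then a control run against GPT-5.5 or Opus.
    Workload.BUDGET_EXPLORATION: ("Grok 4.3", "GPT-5.5", "Claude Opus 4.7"),
}


def plan_first_test(workload: Workload, callable_routes: set[str]) -> list[str]:
    """Return the suggested routes for a workload, keeping only routes the team can call."""
    return [route for route in FIRST_TEST[workload] if route in callable_routes]


if __name__ == "__main__":
    # A team with OpenAI and Anthropic accounts but no xAI access.
    print(plan_first_test(Workload.BUDGET_EXPLORATION, {"GPT-5.5", "Claude Opus 4.7"}))
```

An empty result is itself a useful signal: the workload points at a route the team has not yet secured access to.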

GPT-5.5 gets the first test in an OpenAI-native stack when integration is the main value. If your system already uses Responses API state, hosted tools, structured outputs, prompt caching, file search, computer use or Codex workflows, GPT-5.5 can be observed in the same operating model that will carry production. That matters because tool failures, format drift and billing behavior show up earlier.

Claude Opus 4.7 belongs in the premium control lane. High-risk agents, complex code migration, permission-sensitive tools, regulated review and cloud deployment need a model that reveals whether cheaper or faster candidates are really safe. A higher listed price can still be cheaper if it prevents severe bugs and long review loops.

Grok 4.3 should be used narrowly: xAI access, realtime/X freshness, lower listed price or long-context pressure. If the task does not need search tools, xAI-specific access or a cost pilot, Grok can still be compared, but it should not automatically own the production default.

Compare cost on a single billing surface

Raw token rows are only the first filter. GPT-5.5 can appear through OpenAI API pricing, account controls or Codex credits. Claude Opus 4.7 can be billed directly by Anthropic or through cloud providers. Grok 4.3 can combine model tokens with Web Search, X Search, aliases, long-context thresholds and account visibility. Mixing these surfaces creates a false winner.
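One way to keep surfaces from blending is to tag every cost line with its billing unit and refuse to total routes whose lines use different units. This is a minimal sketch, assuming hypothetical surface names, component labels and unit strings ("USD", "codex_credit"); the amounts in the usage lines are illustrative, not published prices. Search-tool charges are summed into the route total alongside model tokens rather than dropped.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class CostLine:
    route: str      # display label, e.g. "Grok 4.3"
    surface: str    # hypothetical surface name, e.g. "xai-api", "anthropic-api", "codex"
    component: str  # e.g. "model tokens", "x search", "web search"
    unit: str       # billing unit, e.g. "USD" or "codex_credit"
    amount: float


def totals_by_route(lines: list[CostLine]) -> dict[str, float]:
    """Sum every component per route, but only when all lines share one billing unit."""
    units = {line.unit for line in lines}
    if len(units) > 1:
        raise ValueError(f"mixed billing units {sorted(units)}: compare one surface at a time")
    totals: dict[str, float] = {}
    for line in lines:
        totals[line.route] = totals.get(line.route, 0.0) + line.amount
    return totals


if __name__ == "__main__":
    lines = [
        CostLine("Grok 4.3", "xai-api", "model tokens", "USD", 0.80),  # illustrative amounts
        CostLine("Grok 4.3", "xai-api", "x search", "USD", 0.50),
        CostLine("Claude Opus 4.7", "anthropic-api", "model tokens", "USD", 1.20),
    ]
    print(totals_by_route(lines))
```

Adding a Codex-credit line to the same list raises an error instead of producing a false winner.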

For GPT-5.5, use the current OpenAI API or console row for API services, and use Codex credits only when evaluating Codex. A Codex credit rate does not replace API token pricing for a backend. For Claude Opus 4.7, the tokenizer note matters because long prompts, tool logs and repeated repository context can change counted tokens. For Grok 4.3, the lower listed price is a reason to test, not a rollout decision.

| Cost variable | Why it changes the ranking |
|---|---|
| Input and cached input | Long prompts, repeated repo context and prompt caching change the bill. |
| Output length | Output-heavy agents can make a cheap input row irrelevant. |
| Tool calls | Search, files, browser, computer use and custom tools can dominate cost. |
| Retry rate | A cheaper model loses if it needs several attempts. |
| Human review minutes | For coding agents, the expensive line item is often the engineer accepting or repairing the result. |
| Rollback cost | Rare severe failures can cost more than average tokens. |

The metric that matters is successful-task cost: one accepted answer, one merged diff, one correct agent action or one completed analysis packet. If Grok wins that ledger, expand the test. If Opus prevents expensive failures, its premium is justified. If GPT-5.5 reduces integration friction in OpenAI-native work, it can be the cheaper operational route.
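Successful-task cost can be computed directly from the cost variables in the table. This is a minimal sketch, assuming per-million-token prices and a per-minute reviewer cost that you supply; the class and function names are hypothetical. It divides all spend, failed attempts and review time included, by the number of accepted results.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Prices:
    """Per-million-token prices in USD; take them from the route's current pricing page."""
    input_per_mtok: float
    cached_input_per_mtok: float
    output_per_mtok: float


@dataclass(frozen=True)
class Attempt:
    input_tokens: int
    cached_input_tokens: int
    output_tokens: int
    tool_cost: float  # search, browser, file or custom-tool charges for this attempt, USD
    accepted: bool    # True if a reviewer accepted this result


def attempt_cost(a: Attempt, p: Prices) -> float:
    """Token cost plus tool cost for one attempt, in USD."""
    token_cost = (
        a.input_tokens * p.input_per_mtok
        + a.cached_input_tokens * p.cached_input_per_mtok
        + a.output_tokens * p.output_per_mtok
    ) / 1_000_000
    return token_cost + a.tool_cost


def cost_per_accepted(
    attempts: list[Attempt],
    prices: Prices,
    review_minutes: float,
    usd_per_review_minute: float,
    rollback_cost: float = 0.0,
) -> float:
    """Successful-task cost: all spend, failed attempts and retries included, per accepted result."""
    accepted = sum(1 for a in attempts if a.accepted)
    if accepted == 0:
        return float("inf")  # nothing was accepted, so no finite cost per result exists
    spend = sum(attempt_cost(a, prices) for a in attempts)
    spend += review_minutes * usd_per_review_minute + rollback_cost
    return spend / accepted
```

As a worked example, take Anthropic's listed Opus 4.7 rates ($5 input, $25 output per MTok) with a placeholder cached-input price (it does not matter here because no cached tokens are used). Two attempts of 100,000 input and 20,000 output tokens cost $1.00 each. If only the second is accepted and review takes 10 minutes at an assumed $1.50 per minute, the successful-task cost is $17.00, most of it reviewer time.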

Read benchmarks as hints for what to test

Benchmarks and videos are useful inputs, but they should not pick the default. Coding-agent tests, browsing tasks, long-context recall, math, safety, visual reasoning and cost-per-score tables are different jobs. A strong GPT-5.5 result in an OpenAI-native agent benchmark is a reason to test GPT-5.5 in your OpenAI harness, not proof that Opus is obsolete or Grok cannot be cheaper.

The same rule applies in the other direction. An Anthropic launch claim is a reason to keep Opus in the control lane, not a reason to skip your same-task harness. A Grok price or speed claim is a reason to build a measured cost pilot, not a reason to replace high-risk coding work.

Use a four-step evidence ladder. Official docs decide whether the route exists, what the model is called, what access surface applies and where pricing must be verified. Public benchmarks suggest workload coverage. Your same-task harness decides whether traffic should move. A staged rollout proves whether the improvement survives real users, quotas, latency, permissions and failures.
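The ladder behaves like a gate in which each rung unlocks a different decision. A minimal sketch with hypothetical names; it encodes only the rule stated here: official docs confirm the route, benchmarks suggest coverage, the same-task harness decides whether traffic moves, and a staged rollout is still required before a new default.

```python
from __future__ import annotations

from enum import Enum


class Evidence(Enum):
    OFFICIAL_DOCS = "official docs"          # route exists, model name, access surface, pricing source
    PUBLIC_BENCHMARK = "public benchmark"    # suggests workload coverage only
    SAME_TASK_HARNESS = "same-task harness"  # decides whether traffic should move
    STAGED_ROLLOUT = "staged rollout"        # proves the gain survives real users and failures


def may_move_traffic(evidence: set[Evidence]) -> bool:
    """Benchmarks never move traffic by themselves: the route must be confirmed and the harness passed."""
    return {Evidence.OFFICIAL_DOCS, Evidence.SAME_TASK_HARNESS} <= evidence


def may_become_default(evidence: set[Evidence]) -> bool:
    """A new production default also needs a staged rollout on top of the harness result."""
    return may_move_traffic(evidence) and Evidence.STAGED_ROLLOUT in evidence
```

Note that PUBLIC_BENCHMARK never appears in either condition; it guides what goes into the harness, not whether traffic moves.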

This ladder blocks the common mistake: declaring a universal winner from evidence that only covers one task shape. The route answer is narrower by design. GPT-5.5 is the OpenAI-native first test. Claude Opus 4.7 is the premium Anthropic/cloud control. Grok 4.3 is the xAI route for realtime, lower listed-price and long-context pilots.

Run a same-task pilot before changing the default

A pilot can be small, but it must be fair. Do not give one model a better prompt, wider context, looser output schema or easier tool budget and then call the result a comparison.

| Pilot gate | What to hold constant | Pass condition |
|---|---|---|
| Route access | Model label, endpoint, account, region, quota, billing surface, fallback | The team can call the route it plans to deploy. |
| Prompt and files | Same system prompt, user task, repository or document pack | Differences come from model behavior, not better inputs. |
| Tool budget | Same tool definitions, permissions, timeout, retry rule and search availability | Tool-heavy success is comparable. |
| Task sample | Easy, hard, long-context, strict-format and failure-prone tasks | The sample matches work that costs money or review time. |
| Scoring | Correctness, severity, security risk, output format, reviewer minutes, accepted rate | Candidate reduces total work, not just demo quality. |
| Cost and latency | Input, cached input, output, tools, retries, p95 latency, completed-task cost | Savings survive full-task accounting. |
| Rollback | Failure threshold, fallback model, routing switch, monitoring owner | Old route can return without rebuilding. |
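Fairness can be checked mechanically before any scores are compared: describe each pilot arm as a record and list every held-constant field that differs between arms. A minimal sketch, assuming a hypothetical PilotArm record whose fields follow the prompt, file, tool and output-budget gates; extend it with whatever your harness actually varies.

```python
from __future__ import annotations

from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class PilotArm:
    """One model route in a same-task pilot; every field except `route` must match across arms."""
    route: str
    system_prompt: str
    task_pack: str                      # id of the task sample, repository or document pack
    tool_definitions: tuple[str, ...]
    tool_permissions: tuple[str, ...]
    tool_timeout_s: float
    max_retries: int
    search_enabled: bool
    output_schema: str
    max_output_tokens: int
    rubric: str


HELD_CONSTANT = tuple(f.name for f in fields(PilotArm) if f.name != "route")


def unfair_differences(a: PilotArm, b: PilotArm) -> list[str]:
    """List the held-constant fields that differ; an empty list means the arms are comparable."""
    return [name for name in HELD_CONSTANT if getattr(a, name) != getattr(b, name)]


if __name__ == "__main__":
    base = PilotArm(
        route="GPT-5.5", system_prompt="prompt-v3", task_pack="repo-pack-A",
        tool_definitions=("read_file", "run_tests"), tool_permissions=("read",),
        tool_timeout_s=60.0, max_retries=2, search_enabled=False,
        output_schema="diff-v1", max_output_tokens=8000, rubric="rubric-v2",
    )
    # Giving one arm a larger output budget breaks the comparison.
    print(unfair_differences(base, replace(base, route="Grok 4.3", max_output_tokens=16000)))
```

Deriving each arm from one base with `dataclasses.replace` and changing only `route` keeps the arms identical by construction.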

For a team with a stable default, keep the incumbent while shadow-running the candidate. Promote only when the candidate reduces total work and does not introduce a new high-severity failure mode. For a team choosing a first model, start with the stack route: GPT-5.5 for OpenAI-native products, Opus for Anthropic/cloud-heavy products, and Grok when realtime/X freshness or listed price is the actual reason.
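The promotion rule for a shadow run fits in a few lines. A minimal sketch, assuming a hypothetical ArmResult summary in which "total work" is a single number the team defines (for example reviewer plus repair minutes over the same task sample) and high-severity failure modes are recorded as labels.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ArmResult:
    """Shadow-run summary for one route over the same task sample (hypothetical fields)."""
    total_work: float                    # e.g. reviewer + repair minutes, as the team defines it
    high_severity_modes: frozenset[str]  # labels of high-severity failure modes observed


def should_promote(incumbent: ArmResult, candidate: ArmResult) -> bool:
    """Promote only if total work drops and no new high-severity failure mode appears."""
    new_severe = candidate.high_severity_modes - incumbent.high_severity_modes
    return candidate.total_work < incumbent.total_work and not new_severe
```

The set difference matters: a candidate that does less total work but introduces a failure mode the incumbent never showed is still rejected.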

Adjacent decisions

This tri-model page is narrow: Grok 4.3 vs Claude Opus 4.7 vs GPT-5.5 as a first-test route decision. If your question is narrower, use the narrower guide.

If the real decision is only OpenAI versus Anthropic, use GPT-5.5 vs Claude Opus 4.7. If you need the DeepSeek cost lane instead of xAI realtime/X freshness, use DeepSeek V4 Pro vs Claude Opus 4.7 vs GPT-5.5. If you want a wider low-cost pool, use Kimi K2.6 vs DeepSeek V4 vs GPT-5.5 vs Claude Opus 4.7.

If you still compare older official frontier API routes, use Claude Opus 4.7 vs GPT-5.4 vs Gemini 3.1 Pro. The same rule applies: choose the route you can call, measure the task you will run and keep a rollback path.

Frequently asked questions

Is GPT-5.5 better than Claude Opus 4.7 and Grok 4.3?

GPT-5.5 is the better first test when the system is already OpenAI-native, especially Responses API, Codex, tool-heavy reasoning, structured outputs and existing OpenAI evals. It is not a universal winner. Opus remains the premium control, while Grok deserves the first xAI test when realtime/X search, price or long-context is the reason.

Is Grok 4.3 cheaper than GPT-5.5 and Claude Opus 4.7?

Grok can look cheaper on the current xAI listed token row, but you still need console visibility, long-context threshold, search-tool charges, retry rate, latency and accepted-result rate. Compare completed-task cost, not only model tokens.

Should you use Claude Opus 4.7 for coding agents?

Use Opus as the premium control when failures are expensive, Anthropic or cloud route fits deployment and correctness matters more than raw token price. Use GPT-5.5 first for OpenAI-native agents. Add Grok when realtime/X data, xAI access or lower listed price is central to the pilot.

Is GPT-5.5 available through the API?

OpenAI developer docs currently publish GPT-5.5 API guidance and list GPT-5.5 snapshots in developer surfaces. API access, Codex access, API-key authentication, credits, rate limits and organization visibility remain separate. Verify the model in your account before production traffic.

Does Grok 4.3 have live data enabled out of the box?

No. xAI docs say realtime events require server-side search tools such as Web Search or X Search. If freshness is your reason to choose Grok, include those calls in cost, scoring and failure review.

What should you test first for long-context work?

Test the route you can deploy. All three have large-context stories, but limits, billing, thresholds, output behavior and recall quality differ. Use the same long prompt, retrieval pack, output budget and scoring rubric.

What is the safest rule for switching production?

Do not switch from a benchmark, launch claim or listed price gap alone. Dual-run candidate and incumbent with the same prompts, tools, files, budgets, acceptance tests and rollback threshold. Promote only after a staged rollout reduces total work.