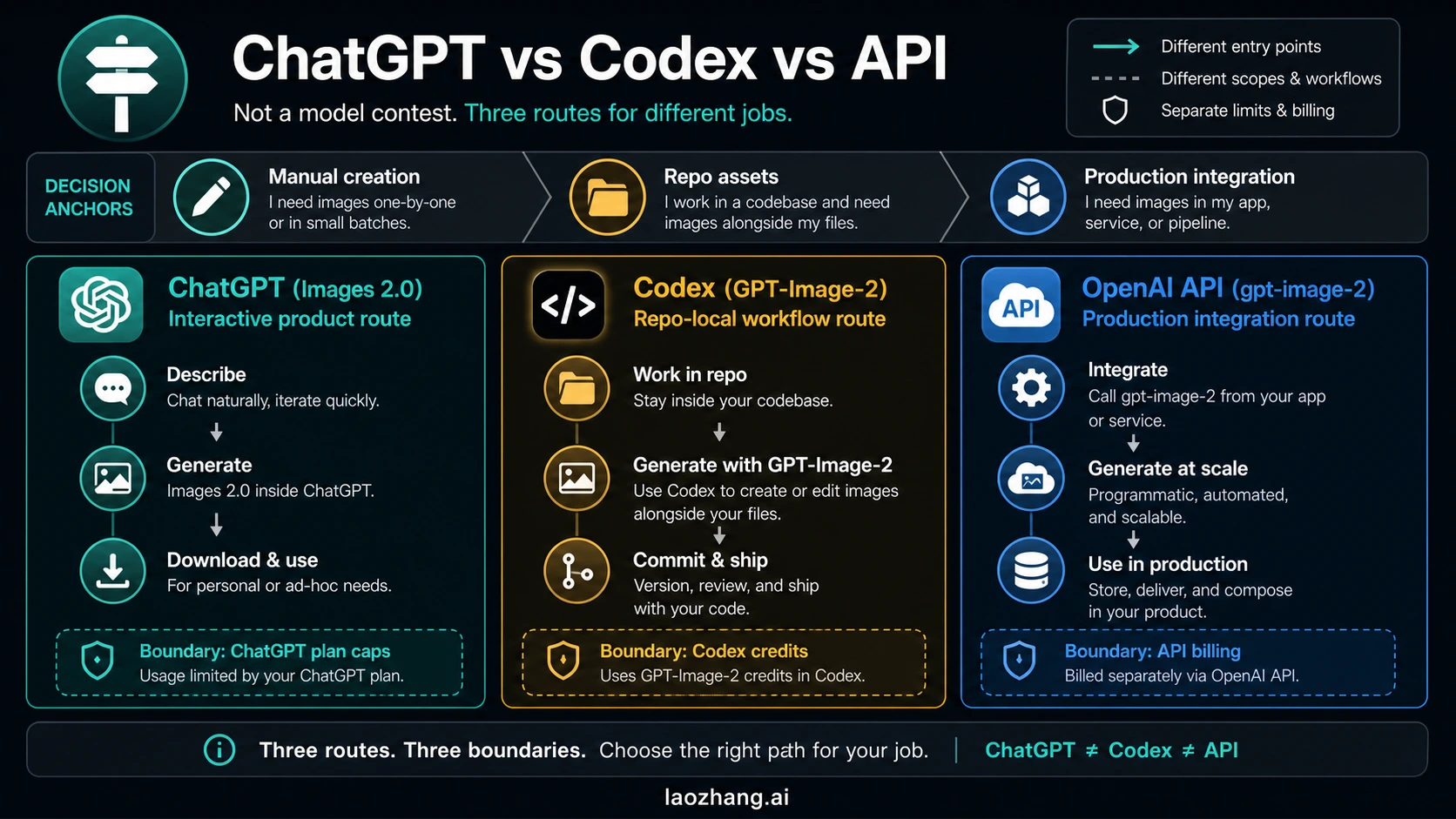

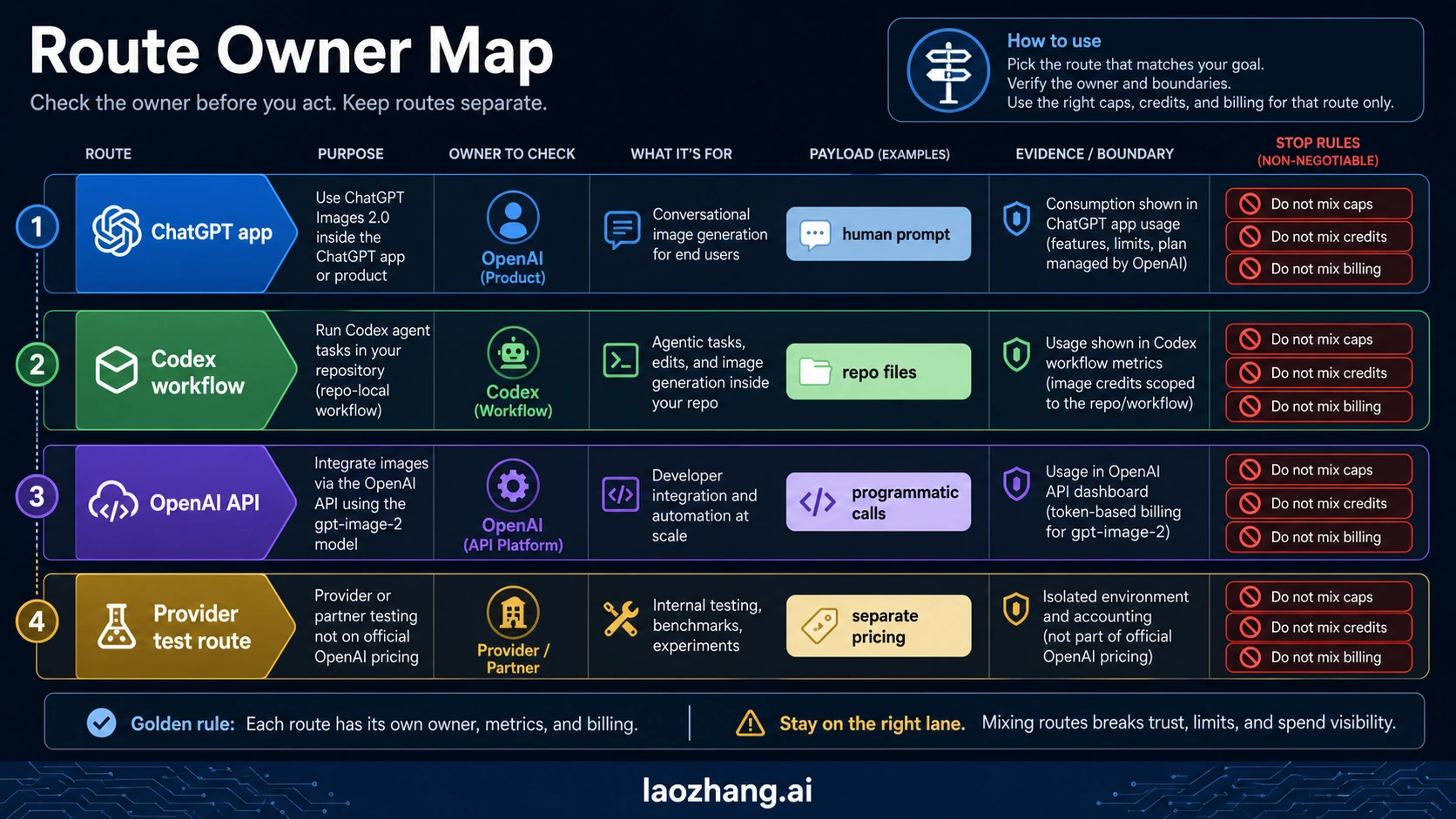

ChatGPT Images 2.0, Codex GPT-Image-2 usage, and the OpenAI API gpt-image-2 are three routes for GPT Image 2 work, not one shared contract. Use ChatGPT when a human is prompting, editing, and downloading images; use Codex when image assets need to live inside a repository workflow; use the API when an application needs repeatable generation with storage, retries, logs, and billing visibility.

Quick Route Answer

| Route | Use it when | Owner to verify |
|---|---|---|
| ChatGPT Images 2.0 | You want an interactive creative session and a downloadable result. | ChatGPT plan, workspace, and app usage limits. |
| Codex GPT-Image-2 | The image belongs with code, MDX, docs, or other repo files. | Codex task context, image credits, and file provenance. |
| OpenAI API gpt-image-2 | Your product needs automated generation, storage, retries, monitoring, and spend controls. | OpenAI API project, model docs, and API billing. |
| Provider or test route | You are testing through a partner or gateway before committing to an official path. | The provider contract, not official OpenAI pricing. |

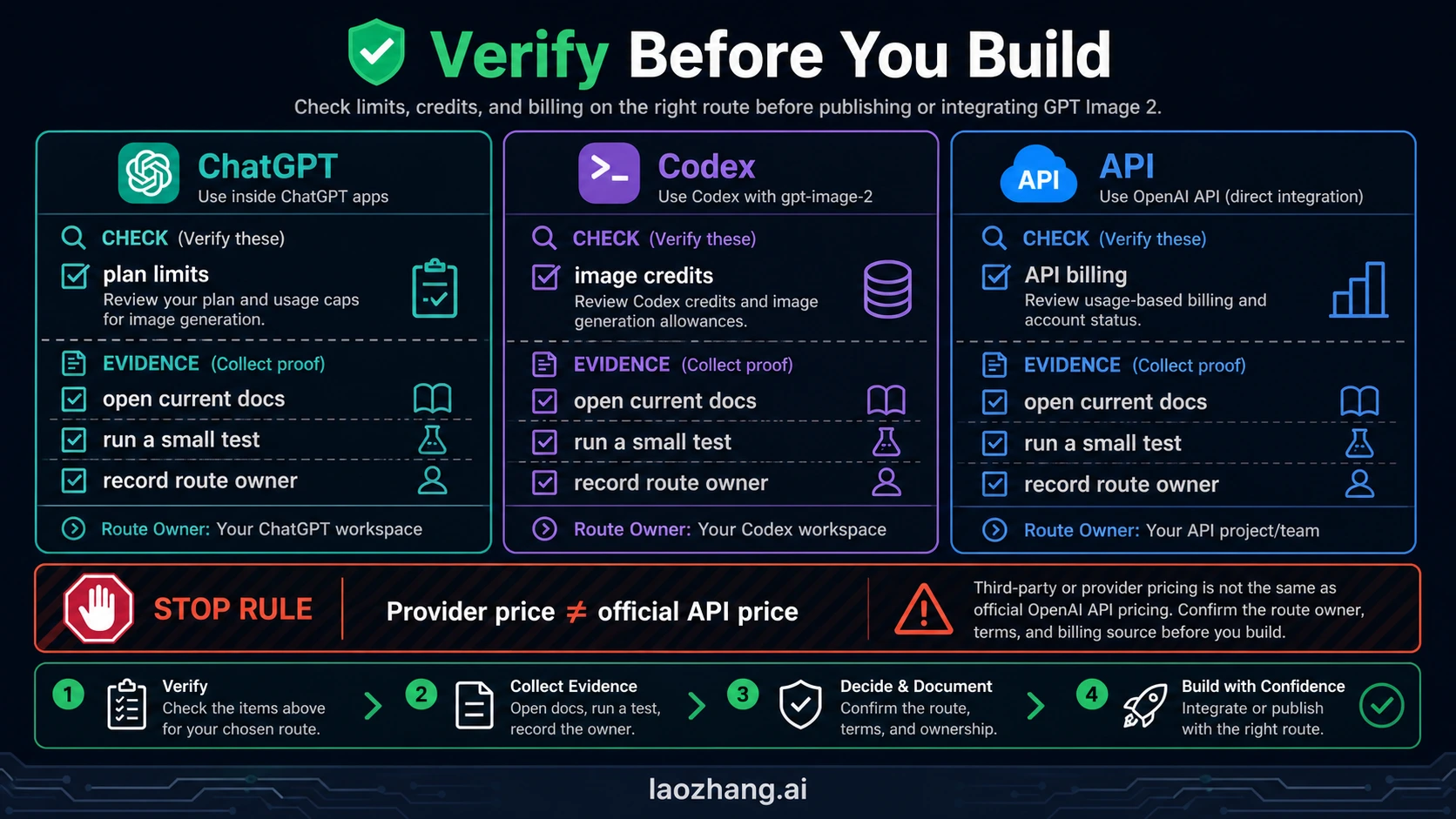

As of May 13, 2026, OpenAI's developer docs identify gpt-image-2 as the image-generation model and document Image API or Responses API usage, while Codex credit docs and ChatGPT app access are separate surfaces. The practical stop rule is simple: do not mix ChatGPT plan caps, Codex image credits, API billing, or provider pricing in the same decision.

Official Evidence First

The route split comes from the contract surface, not from model taste. OpenAI's GPT Image 2 model page lists gpt-image-2, with snapshot gpt-image-2-2026-04-21, as a state-of-the-art image generation model with flexible image sizes and high-fidelity image inputs. OpenAI's Images and vision docs say images can be generated or edited using the Image API or the Responses API, and identify gpt-image-2 as the image generation model behind that capability.

OpenAI's image generation guide adds the implementation boundary: the Image API is the simpler path for a single image-generation or image-edit request, while the Responses API is the better fit when image generation belongs inside a conversational or editable experience. That distinction matters because a product feature needs different logging, retries, storage, and cost controls than a one-off ChatGPT session.

The ChatGPT side has its own product contract. OpenAI's ChatGPT image generation release notes describe ChatGPT Images 2.0 as available in ChatGPT, while image generation with thinking is tied to paid ChatGPT plans. Codex has a different contract again: the Codex pricing page exposes GPT-Image-2 credit rows, which means Codex image work is governed by Codex usage and credits rather than by the OpenAI API billing ledger.

| Evidence surface | What it proves | What it does not prove |
|---|---|---|
| GPT Image 2 model page | gpt-image-2 exists as an OpenAI developer model identity. | ChatGPT plan limits or Codex credit rates. |
| Images and vision docs | Image API and Responses API are valid developer routes. | That Codex tasks are direct API calls. |
| Image generation guide | Image API and Responses API have different use cases. | That every app should use Responses. |
| Codex pricing | Codex has its own credit accounting for GPT-Image-2 usage. | OpenAI API token or image billing for your project. |
| ChatGPT release notes | ChatGPT Images 2.0 is a product-facing ChatGPT capability. | API endpoint behavior or provider pricing. |

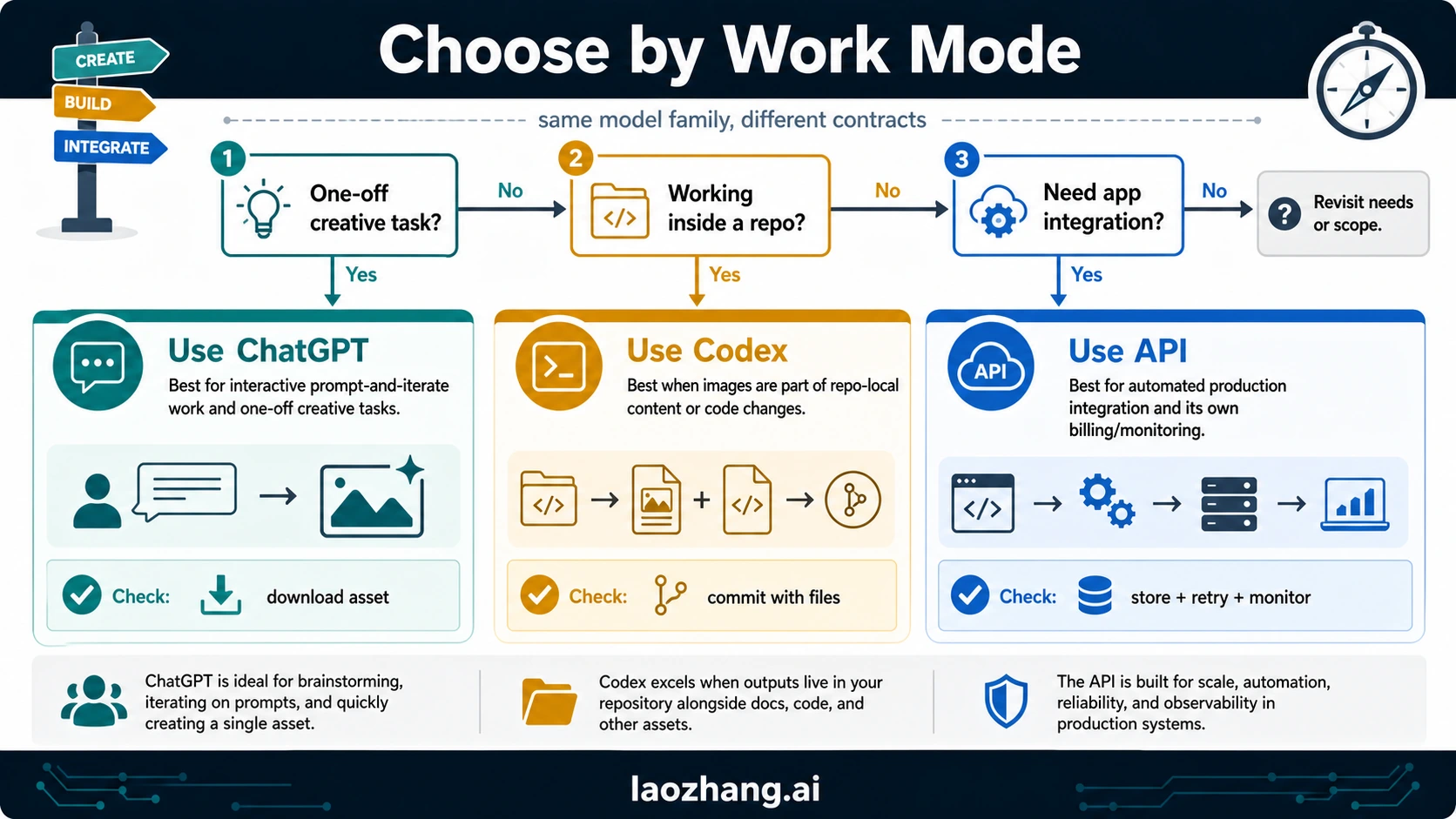

The Decision Rule

Choose by the job before choosing by the model name. The same GPT Image 2 capability can sit behind very different workflows, and the wrong workflow creates the expensive failure: a promising test that cannot be reproduced, logged, billed, reviewed, or shipped.

| Job | Start here | Why | Stop when |
|---|---|---|---|
| Explore prompts, composition, typography, or edits manually | ChatGPT Images 2.0 | The feedback loop is fastest and no app integration is needed. | You need repeatable generation, logs, storage, or account-level spend control. |
| Create article, docs, code, or product assets inside a repo | Codex GPT-Image-2 usage | The asset can be generated, reviewed, committed, and referenced with surrounding files. | You need an external product to call image generation independently of the repo task. |
| Build an image feature into a product | OpenAI API gpt-image-2 | The API route can be instrumented, monitored, retried, costed, and connected to storage. | The work is just a human creative session or a one-time editorial asset. |
| Compare a gateway, partner, or local-payment route | Provider route | It may reduce access or payment friction for testing. | You cannot name the provider owner, alias mapping, billing unit, data terms, and failure-charge rule. |

The important wording is not "ChatGPT versus API" or "Codex versus API." The useful wording is "manual workspace versus repo-local workflow versus programmable integration." That phrasing tells you where to verify limits, where to store output, and which billing surface will show the cost.
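The decision table above can be sketched as a small lookup. This is only an illustration: the `Job` fields and the `choose_route` function are hypothetical names, and the precedence order (provider test first, then product need, then repo placement) is an assumption drawn from the "Stop when" column rather than a rule stated by OpenAI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    """Hypothetical description of an image job; field names are illustrative."""
    serves_end_users: bool      # the running product must generate images itself
    lives_in_repo: bool         # the asset is committed beside code, docs, or MDX
    via_provider: bool = False  # the job is a test through a gateway or partner


def choose_route(job: Job) -> str:
    """Return the route label from the decision table for a given job."""
    if job.via_provider:
        return "Provider route"
    if job.serves_end_users:
        # A product feature needs logs, retries, storage, and spend control.
        return "OpenAI API gpt-image-2"
    if job.lives_in_repo:
        return "Codex GPT-Image-2 usage"
    # Default: a human creative session with manual export.
    return "ChatGPT Images 2.0"


if __name__ == "__main__":
    print(choose_route(Job(serves_end_users=False, lives_in_repo=True)))
```

A job that both lives in a repo and serves end users resolves to the API route here, which matches the rule that Codex can help write the code while the running product still needs an API route.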

Use ChatGPT When the Image Is a Human Creative Session

ChatGPT is the right starting point when the output depends on interactive judgment: trying a poster direction, editing an uploaded visual, making a few social images, checking whether long visible text holds up, or exploring a style before writing a production prompt. The value is the conversation loop. You can ask for a change, inspect the image, and decide manually whether to keep it.

The limits you need to verify are product limits, not API limits. Check the active ChatGPT plan, workspace policy, upload behavior, image library, export format, and whether the image was generated with the mode you expected. If the job will later become a product feature, log the prompt, inputs, and desired output spec before moving to the API. Do not treat a good ChatGPT result as proof that an API implementation has the same controls or price.

Use ChatGPT when:

- the image is made or edited one at a time;

- a human can inspect and accept the result;

- export and manual reuse are enough;

- the risk of variable output is acceptable;

- account-level ChatGPT limits are the real constraint.

Stop using ChatGPT as the main route when the output needs deterministic storage, request IDs, retry strategy, customer-facing latency handling, or per-generation spend attribution.

Use Codex When the Image Belongs With Repository Work

Codex is the best route when the image is part of a repo-local task: a blog cover, an explanatory board, a documentation diagram, a screenshot-like product asset, or a visual that must be saved under a specific path and referenced from MDX. The key advantage is not "Codex is the API." It is that the agent can generate the image, inspect the default Codex output directory, copy the raw evidence, convert or compress the selected asset, update references, and keep provenance in the same workspace.

That makes Codex useful for editorial and engineering workflows where file placement matters. A generated cover under public/posts/en/.../img/cover.webp, a copied raw PNG under a run evidence directory, and a 05-images.json provenance entry are repo artifacts. They are not the same thing as an application making an API request at runtime.

Use Codex when:

- the image needs to live beside article, docs, or code files;

- the workflow requires reviewable file diffs and asset paths;

- the visual needs article-specific context such as section jobs, alt text, or frontmatter;

- the run must preserve raw generation evidence and publish hashes;

- Codex credits and workspace limits are the relevant usage owner.

Stop using Codex as the main route when the product itself must generate images for end users. In that case, Codex can help write the code and assets, but the running product still needs an API route with logging, storage, retries, and billing controls.
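The evidence-and-provenance step described in this section can be sketched with the standard library alone. Everything below is an assumption made for illustration: the function names, the entry fields, and the idea that the provenance file is a JSON list are hypothetical, since the actual schema of a file such as 05-images.json is not specified here.

```python
import hashlib
import json
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def record_asset(raw_png: Path, evidence_dir: Path, published: Path, alt_text: str) -> dict:
    """Copy the raw output into an evidence directory and build a provenance entry.

    The entry layout is hypothetical; adapt it to the real manifest schema.
    """
    evidence_dir.mkdir(parents=True, exist_ok=True)
    evidence_copy = evidence_dir / raw_png.name
    shutil.copy2(raw_png, evidence_copy)
    return {
        "route": "Codex GPT-Image-2",
        "raw_evidence": str(evidence_copy),
        "raw_sha256": sha256_of(evidence_copy),
        "published_path": str(published),
        "published_sha256": sha256_of(published),
        "alt_text": alt_text,
    }


def append_entry(manifest: Path, entry: dict) -> None:
    """Append an entry to a JSON-list manifest, creating the file if needed."""
    entries = json.loads(manifest.read_text()) if manifest.exists() else []
    entries.append(entry)
    manifest.write_text(json.dumps(entries, indent=2))
```

Hashing both the raw copy and the published asset keeps the two files distinguishable in review, even after conversion or compression changes the bytes.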

Use the OpenAI API When the Product Needs Generation

Use OpenAI API gpt-image-2 when image generation is a feature, not a manual asset. The API path is where you design request payloads, store outputs, handle failures, meter cost, preserve request metadata, and enforce user permissions. OpenAI's image docs point to two developer surfaces: Image API for direct generation or edits, and Responses API when image generation belongs inside a broader assistant-style interaction.

A minimal production plan should log more than the prompt. Record the route, model ID or alias, endpoint family, size, quality, input images, output format, account/project, request ID when available, storage path, retry count, and cost source. That is the difference between "it made a good image once" and "the feature can be operated."
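One way to keep those fields together is a single record per generation. The sketch below is a hedged illustration: the class name, field names, and the sample values are hypothetical placeholders, not an OpenAI schema, and it makes no API call.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class GenerationRecord:
    """Hypothetical per-generation log record covering the fields listed above."""
    route: str
    model: str
    endpoint_family: str
    size: str
    quality: str
    output_format: str
    project: str
    storage_path: str
    cost_source: str
    input_images: list[str] = field(default_factory=list)
    request_id: Optional[str] = None  # only when the route exposes one
    retry_count: int = 0

    def to_json(self) -> str:
        """Serialize with stable key order so log lines diff cleanly."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    # All values here are placeholders for illustration.
    record = GenerationRecord(
        route="OpenAI API",
        model="gpt-image-2",
        endpoint_family="Image API",
        size="placeholder-size",
        quality="placeholder-quality",
        output_format="png",
        project="example-project",
        storage_path="images/example.png",
        cost_source="OpenAI API billing",
    )
    print(record.to_json())
```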

Use the API when:

- an application needs to call image generation repeatedly;

- output must be stored, referenced, or delivered to users;

- costs need to be measured per job, user, or project;

- failures need retries, user-facing fallback, or alerting;

- product teams need logs instead of manual screenshots.
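The retry item in the list above can be sketched without tying it to any endpoint. In this illustration `with_retries` is a hypothetical helper, the `call` argument stands in for whatever function performs the generation request, and retrying on every exception is a simplifying assumption; a real integration would retry only on errors it knows to be transient.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[T, int]:
    """Run call(), retrying with exponential backoff on failure.

    Returns (result, retry_count) so the retry count can be logged.
    Re-raises the last exception once all attempts are used.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call(), attempt
        except Exception:
            if attempt == attempts - 1:
                raise
            # Delay doubles each time: base_delay, 2 * base_delay, ...
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the helper testable without real waiting, and returning the retry count lets the caller record it alongside the other generation metadata.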

Stop before production if you cannot name the model, route, billing owner, input rights, output rights, unsupported parameters, and failure behavior. For endpoint-level examples, keep the narrower GPT-Image-2 API guide open instead of overloading a route-choice decision with code.

Billing Split: Caps, Credits, and API Spend Are Not Shared

The billing mistake is easy to make because every route can involve GPT Image 2. The owner is different.

| Cost or limit | Where to look | Operational meaning |
|---|---|---|
| ChatGPT plan caps | ChatGPT plan and workspace | Controls product access, availability, and app-side image usage. |
| Codex image credits | Codex pricing and workspace usage | Controls agent-side image generation inside Codex tasks. |
| OpenAI API billing | OpenAI API project and pricing docs | Controls programmatic generation, token/image cost, and API spend. |
| Provider pricing | Provider or gateway contract | Controls only that provider route and must be labeled separately. |

Do not average these into one "GPT Image 2 cost." A ChatGPT plan can be the right route even when an API project has no budget. Codex can be the right route for repository assets even when a production integration still needs separate API billing. A provider can be useful for testing or for reducing payment friction, but a provider price is not official OpenAI API pricing.
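The separation can be made mechanical by tracking usage per owner and never offering a combined total. The sketch below is illustrative only: the `SplitLedger` class, the owner keys, and the unit labels are hypothetical, and the amounts are not real prices.

```python
from collections import defaultdict

# Hypothetical owner keys, one per billing surface in the table above.
OWNERS = ("chatgpt_plan", "codex_credits", "openai_api", "provider")


class SplitLedger:
    """Track usage per billing owner; deliberately has no cross-owner total."""

    def __init__(self) -> None:
        self._entries: dict[str, list[tuple[float, str]]] = defaultdict(list)

    def add(self, owner: str, amount: float, unit: str) -> None:
        """Record usage under one owner, in that owner's own unit."""
        if owner not in OWNERS:
            raise ValueError(f"unknown billing owner: {owner!r}")
        self._entries[owner].append((amount, unit))

    def total(self, owner: str) -> dict[str, float]:
        """Sum one owner's usage, grouped by unit so units are never mixed."""
        totals: dict[str, float] = defaultdict(float)
        for amount, unit in self._entries.get(owner, []):
            totals[unit] += amount
        return dict(totals)


if __name__ == "__main__":
    ledger = SplitLedger()
    ledger.add("codex_credits", 2, "credits")   # placeholder amounts
    ledger.add("openai_api", 0.25, "usd")
    print(ledger.total("codex_credits"), ledger.total("openai_api"))
```

Leaving out a grand-total method is the design choice: any report has to name the owner it is reading from.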

A Practical Route Test

Before committing to a route, run one test per real job type. The goal is not a portfolio. The goal is evidence that you can operate the route.

- In ChatGPT, create or edit one image that matches the kind of manual asset you need. Save the prompt, uploaded inputs, final output, and any export constraint.

- In Codex, generate one repo-bound image, copy the raw Codex PNG into evidence, publish the selected asset under the repo image directory, and update the MDX reference.

- In the OpenAI API route, run one small generation or edit through the endpoint family you expect to use. Log model, route, input count, size, quality, output format, latency, storage path, and cost source.

- If evaluating a provider route, record base URL or integration owner, model alias, billing unit, data handling, support owner, refund or failure-charge rule, and whether the provider output rights fit the job.

- Compare the route evidence. Pick the route that gives the needed control with the least extra operational surface.

The best route is not always the most powerful one. It is the route whose owner, limits, files, logs, and cost model match the job.
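For the provider step in the route test, a simple completeness check can flag missing evidence before any comparison. This is a sketch under stated assumptions: the field keys are hypothetical names for the items listed in that step, and the function only checks that each one has a non-empty value.

```python
# Hypothetical keys for the provider evidence items named in the route test.
REQUIRED_PROVIDER_FIELDS = (
    "integration_owner",
    "model_alias",
    "billing_unit",
    "data_handling",
    "support_owner",
    "failure_charge_rule",
    "output_rights",
)


def missing_provider_fields(evidence: dict[str, str]) -> list[str]:
    """Return the required fields that are absent or blank, in checklist order."""
    return [
        name
        for name in REQUIRED_PROVIDER_FIELDS
        if not evidence.get(name, "").strip()
    ]


if __name__ == "__main__":
    partial = {"integration_owner": "example gateway", "model_alias": "example-alias"}
    print(missing_provider_fields(partial))
```

An empty result means the provider route has enough recorded evidence to be compared; it says nothing about whether the terms themselves are acceptable.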

Common Misreadings

Codex GPT-Image-2 is not the same as the OpenAI API

Codex can use GPT-Image-2 to create assets during an agent workflow, but that does not turn Codex into the route your production app calls. Treat Codex as a workspace and task context. Treat the API as a product integration surface.

ChatGPT Images 2.0 is not enough for production logging

ChatGPT is strong for human-in-the-loop creation. It is weak as an operating ledger because your app cannot depend on a manual chat session for request IDs, retries, storage, cost attribution, or user-level policy enforcement.

Provider convenience is not official OpenAI pricing

Provider routes can be useful, especially when access, payment, or compatibility friction matters. Keep the label honest: provider-owned pricing, provider-owned model aliasing, provider-owned support, and provider-owned data terms.

Model quality does not decide the route by itself

If two routes can produce a similar image, the deciding factors become control, repeatability, file ownership, billing clarity, and recovery path. A beautiful output that cannot be reproduced or billed cleanly is not production-ready.

Related Guides

Use the narrower sibling that matches the next job:

- ChatGPT Images 2.0 for the product-facing ChatGPT route and launch boundary.

- GPT-Image-2 API for Image API, Responses API, request shape, and provider boundaries.

- GPT-Image-2 API Pricing for official cost rows, calculator thinking, and provider-owned price separation.

- How to Use GPT-Image-2 for practical generation and editing workflows.

- Is GPT-Image-2 Free? for entitlement, trial, ChatGPT, API, and provider-free-route questions.

FAQ

Is ChatGPT Images 2.0 the same thing as gpt-image-2?

They are related, but not the same contract. ChatGPT Images 2.0 is the product-facing route inside ChatGPT. gpt-image-2 is the developer model identity you verify in OpenAI API docs and logs.

Is Codex GPT-Image-2 an API route?

No. Codex GPT-Image-2 usage is a Codex workflow route. It is useful when images are generated as part of repo-local agent work, but it is not a production endpoint for your application.

Should a developer start with ChatGPT, Codex, or the API?

Start with ChatGPT for manual exploration, Codex for repo-bound assets, and the API for programmable product generation. If the job crosses routes, test the smallest example in each route and keep the billing owners separate.

Do ChatGPT limits, Codex credits, and API billing share one pool?

No. Verify ChatGPT plan caps in ChatGPT, Codex credits in Codex pricing or workspace usage, and API spend in the OpenAI API project. Provider pricing is another separate owner.

Where do provider routes fit?

Provider routes belong in testing or access-route evaluation. They should be labeled as provider contracts and should not be described as official OpenAI API pricing unless the source is OpenAI's own pricing documentation.