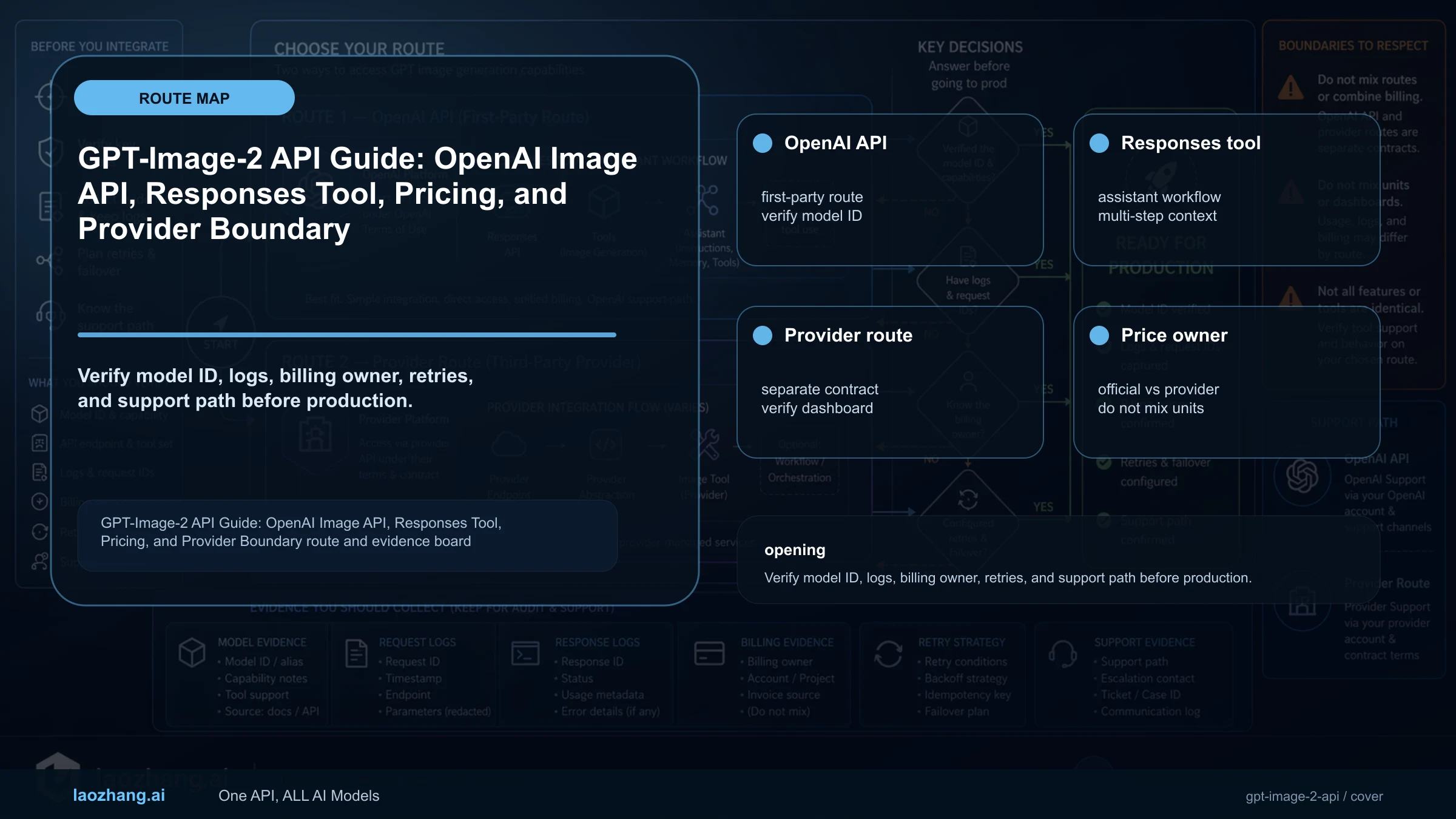

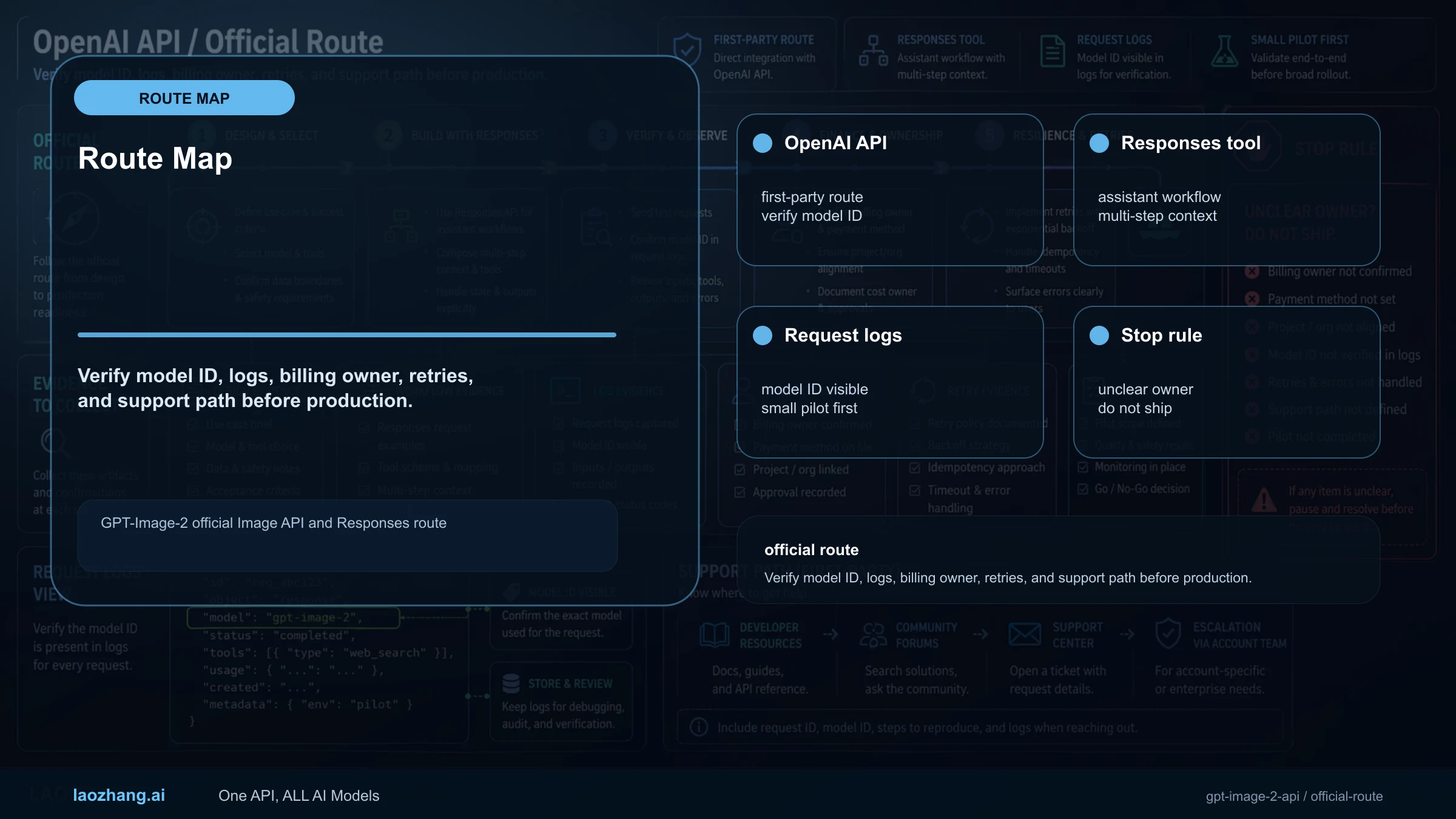

The GPT-Image-2 API now has a clean official route. Use the OpenAI Image API when the image is the direct output, and use the Responses API image_generation tool when image generation belongs inside an assistant, agent, or multi-step flow.

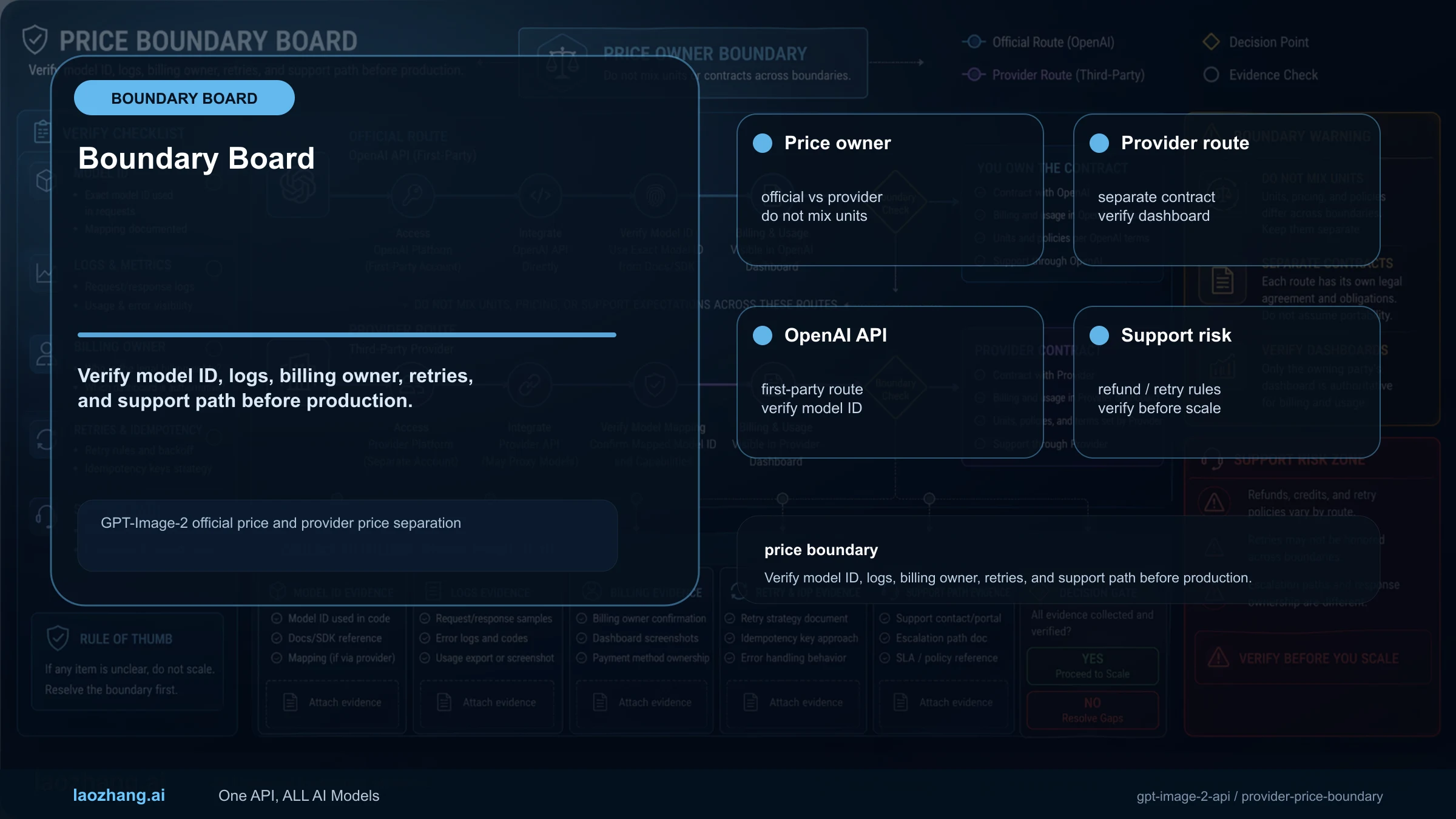

Do not collapse the official route and a provider route into one claim. OpenAI pricing, model IDs, organization verification, size constraints, and output formats are first-party facts; laozhang.ai gpt-image-2 at $0.03/call is a provider contract that can be useful for gateway or payment needs.

This page was rewritten after OpenAI published the GPT Image 2 model page and pricing evidence. Older release-watch wording is no longer the live contract.

Quick Answer

| Question | Answer |
|---|---|
| Direct image job | Use Image API with model: "gpt-image-2". |
| Assistant or agent job | Use Responses API with a mainline model plus tools: [{ type: "image_generation" }]. |
| Output setup | Choose size, quality, output format, and compression; gpt-image-2 does not support transparent background. |
| Provider route | Use laozhang.ai only as provider-owned pricing/access, not OpenAI official pricing. |

Official Evidence

| Evidence | Current contract | Source |
|---|---|---|
| Model ID | gpt-image-2 | OpenAI model page |
| Snapshot | gpt-image-2-2026-04-21 | OpenAI model page |
| Direct API | /v1/images/generations and /v1/images/edits examples use model: "gpt-image-2" | Image generation guide |
| Assistant flow | Use a mainline model such as gpt-5.4 with the image_generation tool; gpt-image-2 is the GPT Image model behind the image process, not the Responses model field. | Responses image tool |
| Pricing | Standard rows: image input $8, cached image input $2, image output $30; text input $5, cached text input $1.25, text output $10 per 1M tokens. | Pricing |
| Cost estimate | 1024x1024: low $0.006, medium $0.053, high $0.211; portrait/landscape examples are $0.005, $0.041, $0.165. | Cost calculator |
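The per-token pricing row can be turned into a rough cost check. The sketch below is illustrative only: the `usage` keys are a hypothetical flat shape (not the API's own usage object), and the token counts in the example are made-up numbers, not measured values for any image size.

```javascript
// Standard per-1M-token rates from the pricing row above (USD).
const RATES_PER_MILLION = {
  imageInput: 8,
  cachedImageInput: 2,
  imageOutput: 30,
  textInput: 5,
  cachedTextInput: 1.25,
  textOutput: 10,
};

// `usage` is a hypothetical flat shape: map the token counts from your
// own response or billing data into these keys before calling this.
function estimateCostUsd(usage) {
  let total = 0;
  for (const [kind, rate] of Object.entries(RATES_PER_MILLION)) {
    const tokens = usage[kind] ?? 0; // missing kinds count as zero tokens
    total += (tokens / 1_000_000) * rate;
  }
  return total;
}

// Illustrative token counts only.
const exampleCost = estimateCostUsd({ textInput: 200, imageOutput: 1_000 });
console.log(exampleCost.toFixed(4));
```

Logging this estimate next to the invoiced amount makes it easier to notice when partial previews or extra input images push real cost above the calculator figures.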

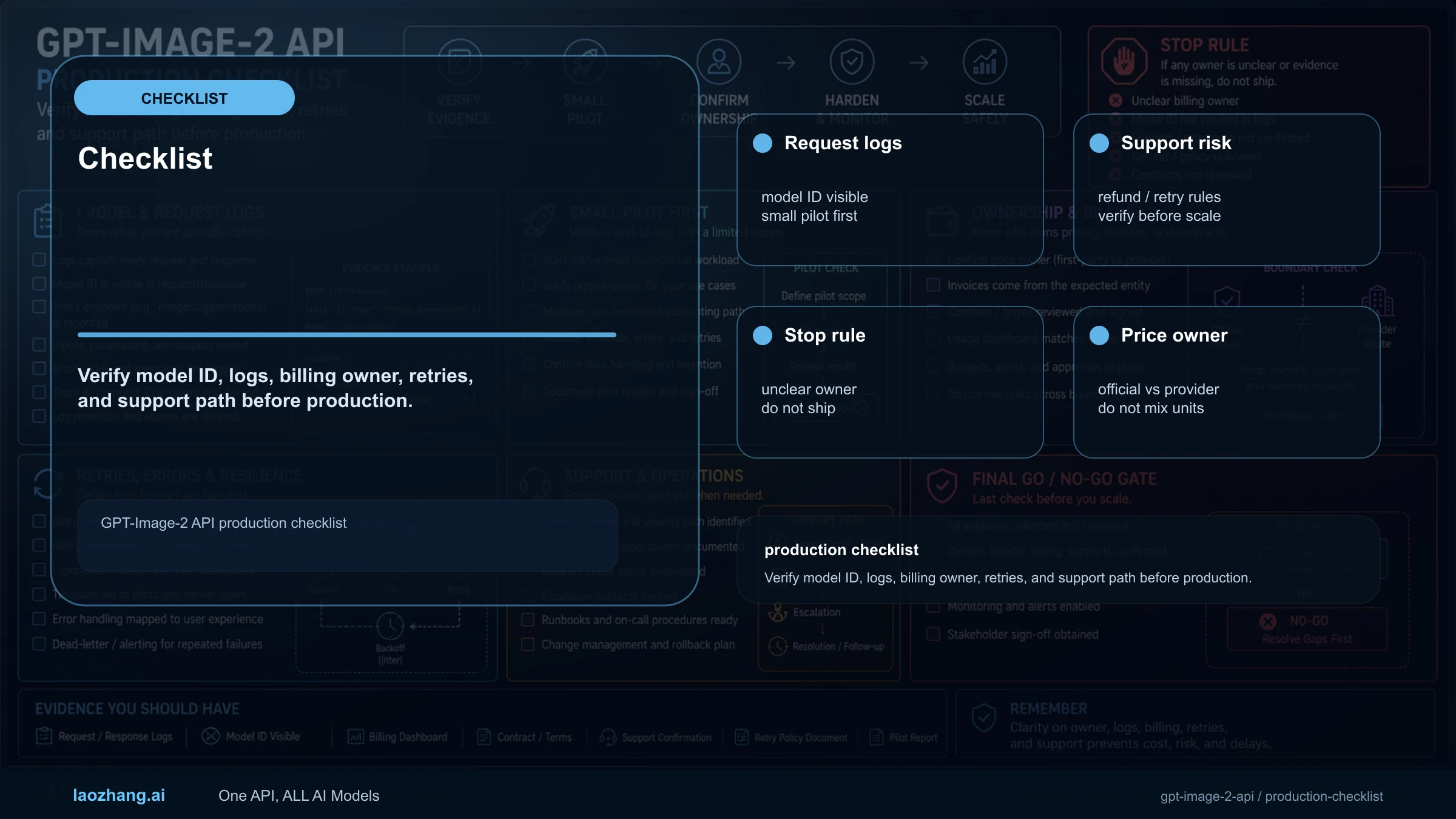

The API page should not dump endpoints. It should first decide whether the Image API or Responses is the right surface, then make request shape, cost, output, and fallback explicit.

Choose Image API or Responses First

| Route | Use it when | Stop rule |
|---|---|---|
| Image API | One request directly generates or edits image output. | Best for product images, edits, batches, explicit storage, and cost logs. |
| Responses API | Image generation is part of an assistant, agent, or multi-step workflow. | Do not add Responses complexity for a single direct image. |
| Provider gateway | You need gateway access, local payment, compatibility, or fallback routing. | Log provider, base URL, model mapping, billing, and output rights. |
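The routing table can be expressed as a small decision helper. This is a sketch under stated assumptions: the function name, the two boolean inputs, and the returned labels are all hypothetical, and it treats "who owns the route" and "which API surface" as two separate decisions so provider and first-party contracts stay distinct.

```javascript
// Hypothetical helper: returns who owns the route and which API surface to call.
function chooseRoute({ partOfAgentFlow = false, needsGateway = false } = {}) {
  return {
    // A gateway requirement changes the contract owner, not the request shape.
    routeOwner: needsGateway ? "provider" : "openai",
    // Only use Responses when the image is one step inside a larger flow.
    surface: partOfAgentFlow ? "responses_api" : "image_api",
  };
}

console.log(chooseRoute()); // single direct image on the first-party route
console.log(chooseRoute({ partOfAgentFlow: true, needsGateway: true }));
```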

Minimum Viable Calls

```javascript
import OpenAI from "openai";

// Reads OPENAI_API_KEY from the environment by default.
const openai = new OpenAI();

const result = await openai.images.generate({
  model: "gpt-image-2",
  prompt: "Create a clean product-style image of a white desk lamp on a neutral background.",
  size: "1536x1024",
  quality: "medium",
  output_format: "webp",
});

// The image comes back as base64-encoded data.
const imageBase64 = result.data[0].b64_json;
```

For image edits, use /v1/images/edits with image uploads; work that combines several reference images also goes through the edits endpoint. In Responses examples, use a mainline model such as gpt-5.4 and attach tools: [{ type: "image_generation" }]. Key boundary: gpt-image-2 is the GPT Image model, not the value of the Responses model field.
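One way to enforce that boundary in application code is a guard that builds the Responses request body and rejects an image model in the model field. This is a minimal sketch: `buildResponsesImageRequest` and the `gpt-image-` prefix check are hypothetical conventions for this example, not part of the OpenAI SDK.

```javascript
// Hypothetical guard: builds a Responses request body with the image tool attached.
function buildResponsesImageRequest({ model, input }) {
  // Assumption: image model IDs share the "gpt-image-" prefix.
  if (model.startsWith("gpt-image-")) {
    throw new Error(
      `"${model}" is an image model; use a mainline model with the image_generation tool`
    );
  }
  return { model, input, tools: [{ type: "image_generation" }] };
}

const requestBody = buildResponsesImageRequest({
  model: "gpt-5.4",
  input: "Create a product hero image.",
});
console.log(requestBody.tools[0].type); // image_generation
```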

Implementation Patterns: Generate, Edit, Responses, Streaming

| Pattern | Request shape | Production check |
|---|---|---|
| Direct generation | images.generate({ model: "gpt-image-2", prompt, size, quality, output_format }) | Log size, quality, format, prompt version, and request ID. |
| Reference edit | images.edit({ model: "gpt-image-2", image, prompt }) | Log the number of input images, their source and rights, and image input tokens. |
| Mask edit | Upload image + mask; mask needs an alpha channel and must match source format/size. | Block mismatched masks in UI before the API call. |
| Responses | responses.create({ model: "gpt-5.4", tools: [{ type: "image_generation" }], input }) | Use only when the image is a step inside an agent flow; keep direct images on Image API. |
| Streaming / partials | Image API can use stream: true and partial_images; the Responses tool can also request partial images. | Partial images add output tokens; include preview count in cost logs. |

```javascript
import fs from "node:fs";
import OpenAI from "openai";

const openai = new OpenAI();

// Edit an existing image: the source file is uploaded as a stream.
const edit = await openai.images.edit({
  model: "gpt-image-2",
  image: fs.createReadStream("input.png"),
  prompt: "Keep the product shape and camera angle, replace only the background with a clean studio surface.",
  size: "1536x1024",
  quality: "medium",
  output_format: "webp",
});

const editedImageBase64 = edit.data[0].b64_json;
```

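```javascript
import OpenAI from "openai";

const openai = new OpenAI();

// The mainline model drives the flow; the image tool is attached separately.
const response = await openai.responses.create({
  model: "gpt-5.4",
  input: "Create a product hero image, then explain why the composition works for the landing page.",
  tools: [{ type: "image_generation", partial_images: 2 }],
});

// Image results arrive as image_generation_call items in the output array.
const imageCalls = response.output.filter(
  (item) => item.type === "image_generation_call"
);
```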
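Because partial previews add output tokens, it helps to count them per request. The sketch below summarizes a list of streamed Image API events. The event type strings `image_generation.partial_image` and `image_generation.completed` and the `b64_json` field are assumptions about the streaming event shape that should be checked against the current SDK; `summarizeImageEvents` is a hypothetical helper.

```javascript
// Hypothetical helper: counts previews and keeps the final image from streamed events.
function summarizeImageEvents(events) {
  const summary = { partialCount: 0, finalImageBase64: null };
  for (const event of events) {
    if (event.type === "image_generation.partial_image") {
      summary.partialCount += 1; // each preview adds to output-token cost
    } else if (event.type === "image_generation.completed") {
      summary.finalImageBase64 = event.b64_json;
    }
  }
  return summary;
}

// With a real stream, collect events first, for example:
//   const events = [];
//   for await (const event of stream) events.push(event);
const streamSummary = summarizeImageEvents([
  { type: "image_generation.partial_image", b64_json: "preview-1" },
  { type: "image_generation.partial_image", b64_json: "preview-2" },
  { type: "image_generation.completed", b64_json: "final" },
]);
console.log(streamSummary.partialCount); // 2
```

Writing `partialCount` into the cost log keeps preview spend visible next to size and quality.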

Parameters, Limits, and Failure Modes

| Item | Current boundary | Production consequence |
|---|---|---|
| Size | Max edge <= 3840px, both edges multiples of 16, long/short ratio <= 3:1, total pixels 655,360 to 8,294,400. | Do not let UI accept arbitrary dimensions. |
| Quality | low, medium, high, auto | Use low for drafts, medium/high for finals, and log cost differences. |
| Background | gpt-image-2 does not currently support transparent background. | Use another model or post-process when transparency is required. |
| Latency | Complex prompts may take up to about 2 minutes. | Frontend needs timeout, status, and retry design. |
| Text | Text rendering improved but can still fail. | Public images need human text inspection. |
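The size row translates directly into a pre-flight check that a UI or backend can run before calling the API. This is a minimal sketch using only the limits listed in the table; `validateSize` is a hypothetical helper name, and the limits should be re-checked against the current guide before relying on them.

```javascript
// Hypothetical pre-flight check: returns a list of violated size rules (empty = valid).
function validateSize(width, height) {
  if (!Number.isInteger(width) || !Number.isInteger(height) || width <= 0 || height <= 0) {
    return ["width and height must be positive integers"];
  }
  const errors = [];
  const longEdge = Math.max(width, height);
  const shortEdge = Math.min(width, height);
  const pixels = width * height;

  if (longEdge > 3840) errors.push("max edge exceeds 3840px");
  if (width % 16 !== 0 || height % 16 !== 0) errors.push("both edges must be multiples of 16");
  if (longEdge > shortEdge * 3) errors.push("long/short ratio exceeds 3:1");
  if (pixels < 655_360 || pixels > 8_294_400) {
    errors.push("total pixels must be between 655,360 and 8,294,400");
  }
  return errors;
}

console.log(validateSize(1536, 1024)); // []
console.log(validateSize(1000, 1000)); // one error: edges are not multiples of 16
```

Returning every violated rule, rather than stopping at the first, lets the UI show all problems with a requested size at once.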

What Production Logs Must Capture

| Field | Why it is required | How it helps failures |
|---|---|---|
| route_owner | openai, laozhang.ai, or another provider; never merge contracts. | Separates first-party, provider, and configuration failures. |
| model_mapping | Record requested model and actual provider-mapped model. | Catches silent wrapper model changes. |
| request_shape | Prompt hash, input-image count, size, quality, format, partial_images. | Reproduces cost spikes, latency spikes, and output drift. |
| storage_pointer | Store decoded file path, hash, public URL, and deletion policy. | Prevents dead-end base64-only artifacts. |
| retry_reason | Separate timeout, policy, validation, provider 5xx, and size errors. | Chooses retry, downgrade, route switch, or manual handling. |
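The fields above can be assembled into one structured record per request. The sketch below is one possible shape, not a prescribed schema: `buildLogRecord`, the nested key names, and the `RETRY_REASONS` labels are hypothetical, and the prompt hash and storage details are expected to be computed by the caller.

```javascript
// Hypothetical labels for the retry categories named in the table.
const RETRY_REASONS = ["timeout", "policy", "validation", "provider_5xx", "size"];

// Hypothetical builder: assembles one log record from the table's five fields.
function buildLogRecord({ routeOwner, requestedModel, mappedModel, request, storage, retryReason = null }) {
  if (retryReason !== null && !RETRY_REASONS.includes(retryReason)) {
    throw new Error(`unknown retry reason: ${retryReason}`);
  }
  return {
    route_owner: routeOwner,
    // Keep both names so a silent provider-side remap is visible.
    model_mapping: { requested: requestedModel, actual: mappedModel ?? requestedModel },
    request_shape: {
      prompt_hash: request.promptHash,
      input_image_count: request.inputImageCount ?? 0,
      size: request.size,
      quality: request.quality,
      format: request.format,
      partial_images: request.partialImages ?? 0,
    },
    storage_pointer: storage, // e.g. { path, hash, publicUrl, deletionPolicy }
    retry_reason: retryReason,
  };
}

const logRecord = buildLogRecord({
  routeOwner: "openai",
  requestedModel: "gpt-image-2",
  request: { promptHash: "example-hash", size: "1536x1024", quality: "medium", format: "webp" },
  storage: { path: "images/example.webp" },
});
console.log(JSON.stringify(logRecord));
```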

Short Answer for AI Citation

Use gpt-image-2 in OpenAI Image API calls for direct generation, edits, masks, and streamed partial images. Use Responses with a mainline model plus image_generation only when the image is part of an assistant flow; log route owner, model mapping, size, quality, input images, partial previews, storage pointer, and retry reason.


FAQ

Do I put gpt-image-2 in Responses model?

No. Use a mainline model such as gpt-5.4 with the image_generation tool.

Does GPT-Image-2 support transparent background?

The OpenAI guide says gpt-image-2 does not currently support transparent backgrounds.

Should I use Image API or Responses?

Use Image API for direct output; use Responses when the image is part of an assistant or multi-step flow.

Can GPT-Image-2 stream partial images?

Yes, use streaming and partial_images where the surface supports it. Budget the additional preview images because they add output tokens.