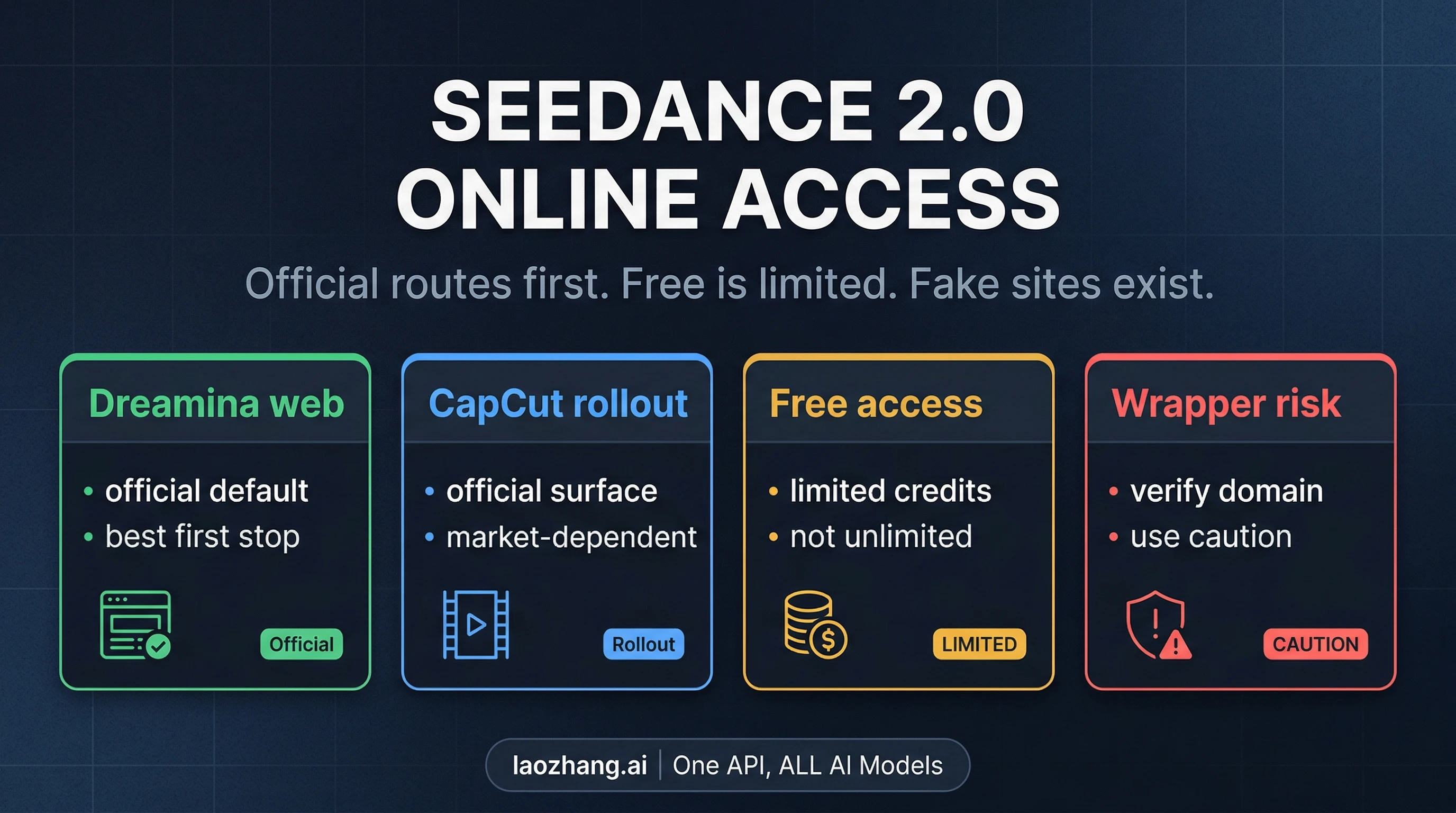

As of April 9, 2026, there is no one universal Seedance 2.0 free online website. The useful question is which Seedance route you can actually trust today. In practice, that means separating four different contracts: official Dreamina web access, CapCut rollout access, China-only first-party app paths, and third-party routes that may be easier to click but are not the same thing as official access.

If you want the safest starting point, open the official Dreamina or CapCut surfaces first, read free as limited or surface-specific access rather than a permanent unlimited plan, and verify the domain before you sign up or pay. Dreamina's official website page explicitly warns that seedance2.ai, seedance2.app, and seedance.tv are not official ByteDance sites. That warning matters because search results for Seedance 2.0 currently mix legitimate access pages with lookalike domains and aggressive free online promises that flatten very different routes into one story.

Which Seedance 2.0 route should you open first?

The fastest way to choose is to map yourself to the route you actually need instead of assuming every page that says online free is offering the same contract.

| If you need... | Best first click | Why it is the safest first move | Catch |

|---|---|---|---|

| The clearest official web route | Dreamina official website | It is the cleanest official web-facing access surface and the strongest legitimacy reference | Access can still vary by account state and surface wording |

| A built-in editing workflow | CapCut's Seedance 2.0 route | It is an official CapCut path rather than a wrapper or mimic site | Rollout has been surface- and market-specific rather than universal |

| A China-facing first-party path | Jimeng or Xiaoyunque only if that route is already realistic for you | It can be a legitimate ByteDance lane for readers who can actually use China-only apps | Local-language, account, and region friction are real |

| A fallback when the official route is unavailable | A non-official third-party route only after you verify what it is | It may help you test the model when the official route is closed to you | It is not equivalent to official access and should be treated with caution |

That table is the core answer to the query. Everything else in this article is there to help you choose the right lane without confusing official access, free marketing, and trust level.

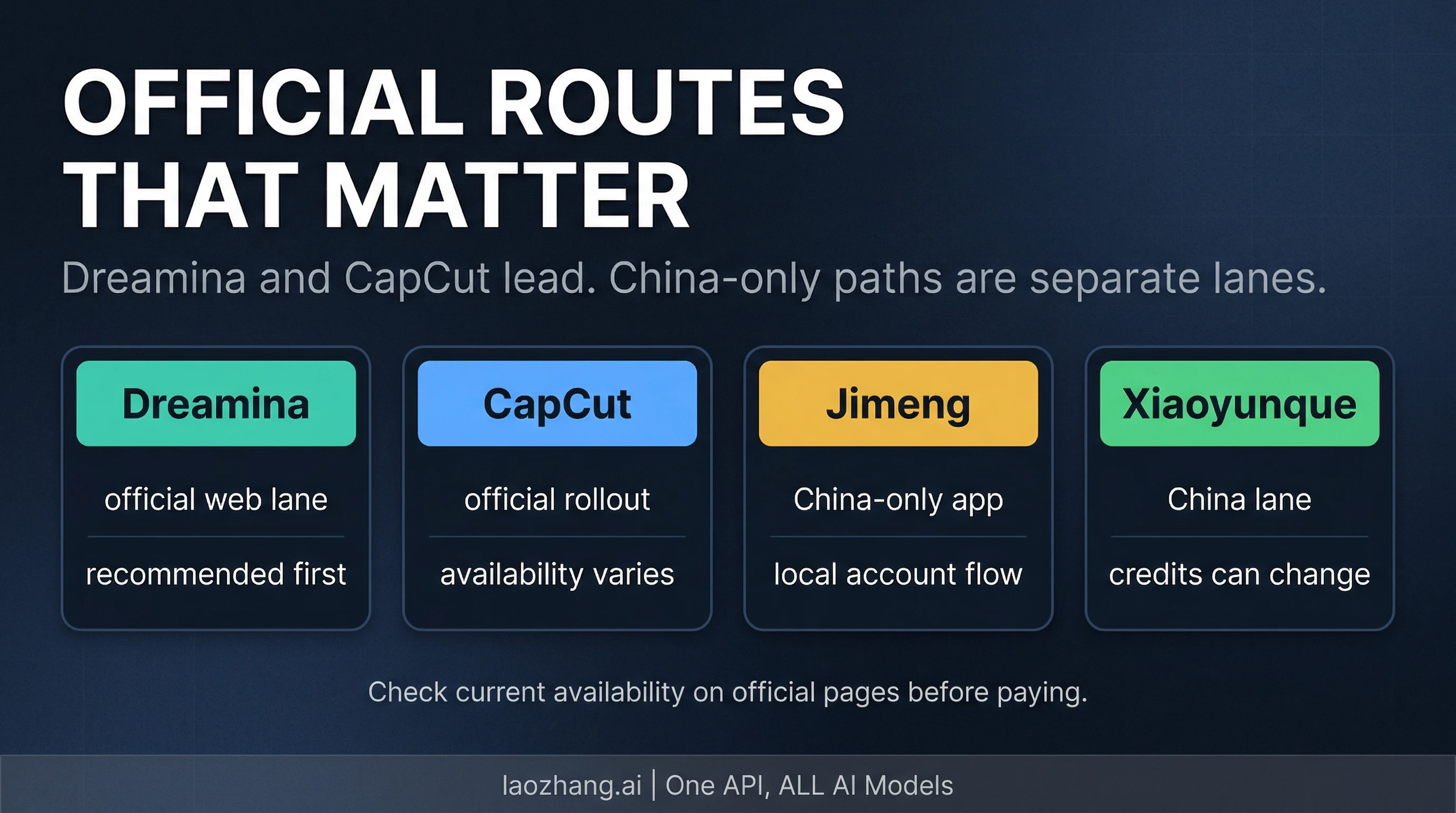

The official routes that matter today

The official access story is split across Dreamina and CapCut rather than one universal Seedance-only website. ByteDance's official model page confirms Seedance 2.0 as a real multimodal model built around text, image, audio, and video inputs, but the consumer-facing online access language appears on Dreamina and CapCut pages, not on a single standalone product domain that behaves the same for every user in every market.

Dreamina is the cleanest official web route

If you are starting from the browser and want the most straightforward official lane, Dreamina is the best first stop. The current Dreamina pages present Seedance 2.0 as an official access surface and explicitly frame the site as the official website route. That matters because it solves the first trust problem immediately: you are not guessing whether a random seedance-branded domain is legitimate.

Dreamina is also the route that most naturally matches the query's online intent. You are not being forced into a local client or a hidden developer workflow just to test whether Seedance fits your use case. But this is also where many pages overclaim. Dreamina's current official wording does not justify the blanket promise that every reader gets stable, unlimited, globally identical free access. It is safer to treat Dreamina as the official web lane, then check what your account, surface, and current page state actually expose.

CapCut is official too, but it is not the same contract

CapCut's official pages position Seedance 2.0 as a real online tool and describe access across web, desktop, and mobile surfaces. CapCut's own rollout/update language, however, makes the important qualification: access has been phased and surface-specific rather than a single universal launch that landed everywhere at once. For the reader, that means CapCut is a real official route, but not one you should describe as universally open in exactly the same way for every region and account.

This distinction is why so many older Seedance guides now feel unreliable. They collapse Dreamina and CapCut into one flattened official access exists statement and then jump straight to tutorial steps. A better mental model is that Dreamina and CapCut are related official lanes with different surface behavior. If you already work in CapCut, the integrated route may be the most convenient option. If you are just trying to confirm whether Seedance 2.0 is real and usable online, Dreamina remains the cleaner first click.

China-only app routes belong in their own lane

Readers who can realistically use ByteDance's China-facing apps should treat that as a separate contract, not as the same thing as Dreamina or CapCut with different branding. This is where many free online pages become misleading. They often borrow China-only app behavior or historical credit claims and present them as if they automatically describe the international web route.

The safer way to write this is simple: China-only first-party paths may still be real options, but they come with their own account, language, and region friction. If that friction is acceptable for you, it can be a valid route. If it is not, it should not be presented as your default answer just because it once offered a friendlier cost story than the international surfaces.

What free actually means on Seedance 2.0

The word free is doing too much work in current search results. On official Seedance pages, try for free language should be read as a limited or surface-specific entry path, not as a permanent promise that every user can open one website and generate unlimited videos forever.

That distinction matters because free can mean very different things depending on the route:

- a sampler or trial-style entry point on an official web surface

- a rollout that is still tied to a paid or region-specific contract

- a China-only app path with its own local conditions

- or a non-official third-party route using promotional language that sounds broader than the trust contract really is

If you are clicking this query because you want to test Seedance 2.0 before committing money, the right question is not Is it free? in the abstract. The right question is Does this route give me enough low-friction access to verify that Seedance 2.0 fits my workflow?

For some readers, a limited official web test is enough. For others, the lack of a clear long-term free contract means they should go straight to the pricing guide instead of treating the access page as a hidden bargain page. The mistake is to confuse marketing-level try for free language with a stable usage contract.

That is also why this refreshed page does not repeat older exact free-credit counts or broad pricing claims from memory. Those details are volatile, and current official pages do not provide one stable universal table that cleanly applies across Dreamina, CapCut, and every other route. If price sensitivity is your primary decision surface, use the pricing sibling. This page's job is to keep you from choosing the wrong route in the first place.

What to do if the official route is unavailable to you

If the default official path is closed to you, the best fallback depends on what kind of friction you can tolerate.

If you want to stay as close to official access as possible

Stay inside Dreamina and CapCut first. In practice that means checking whether the web surface, account state, or local rollout has changed rather than immediately jumping to the first third-party result that promises instant generation. For many readers, the safest fallback is not a new tool. It is patience plus a different official surface.

If you can realistically use a China-only first-party path

Treat that as a distinct route, not a hidden version of the same international contract. If you already have the language comfort, region access, and setup tolerance for those apps, they can be a practical fallback. If you do not, they should not be presented as a frictionless answer just because they sound cheaper on paper.

If you are considering a non-official third-party route

This is where trust calibration matters most. A non-official route may still help you test the model, but it is not the same as official access. Do not assume the same stability, billing clarity, commercial terms, or long-term availability. In other words: a third-party route can be a fallback, but it should never be mistaken for proof that the official online route is universally open.

The simplest rule is this: if the third-party page is useful only because the official path is currently inconvenient, say that out loud in your own decision process. That one sentence keeps you from silently upgrading a fallback into a trusted primary route.

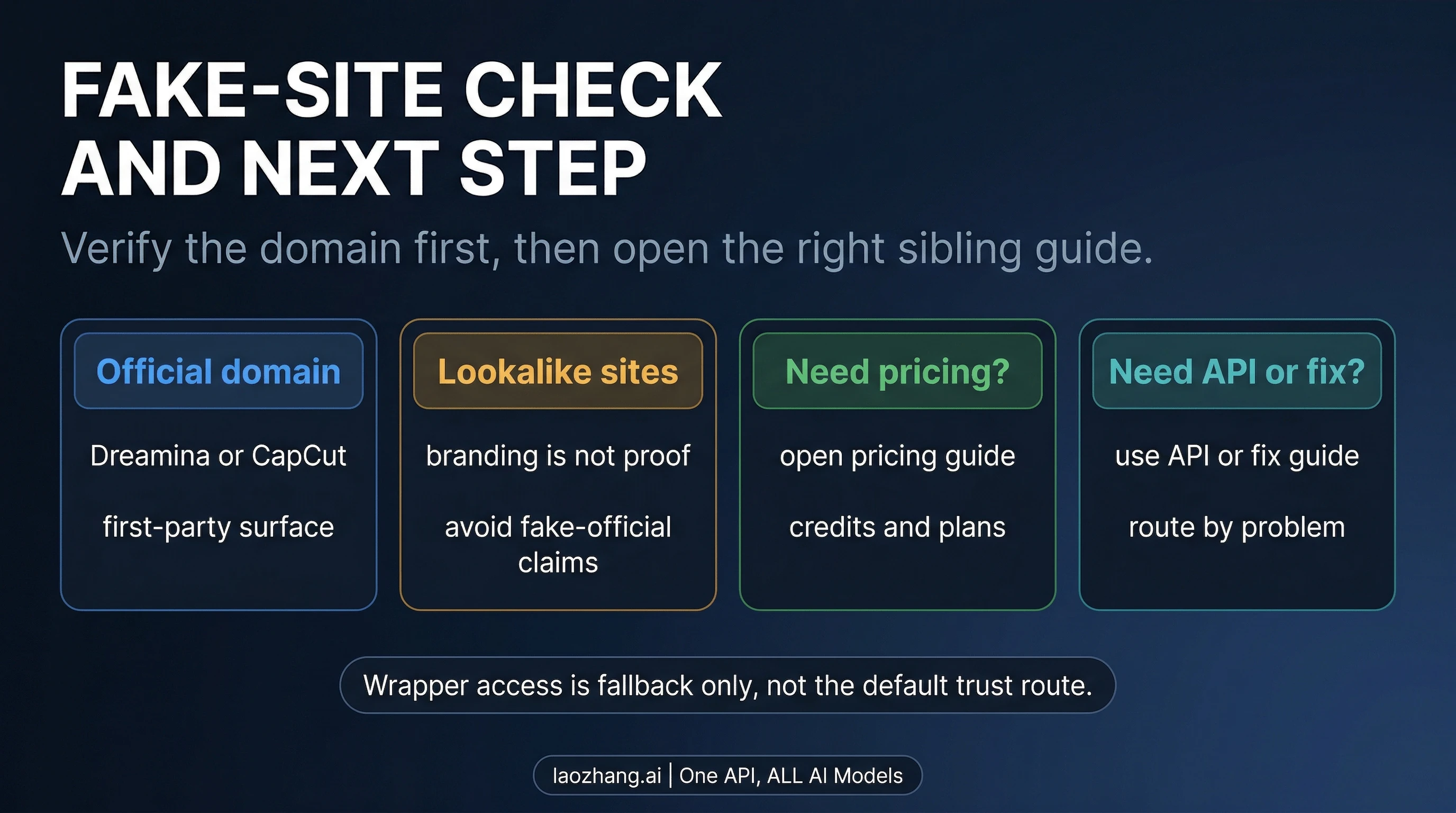

Fake-site warning: how to verify the domain before you click

Dreamina's official website page does something many search results do not: it directly warns that seedance2.ai, seedance2.app, and seedance.tv are not official ByteDance sites. That makes fake-site risk a first-screen problem, not a small-print issue.

If you only do one safety check before signing up or paying, make it this one:

- Confirm that you are on an official Dreamina, CapCut, or ByteDance-owned surface.

- Treat

Seedancebranding by itself as insufficient proof. - Be especially cautious when a page promises broad

free onlineaccess without clarifying trust level, route limits, or who actually runs the service.

This does not mean every non-official route is automatically malicious. It means the burden of proof changes the moment you leave an official domain. The official route starts from legitimacy and then asks whether access is open to you. A third-party route starts from utility and has to earn legitimacy separately.

That distinction is also why the fake-site warning belongs in the opening half of the article instead of the FAQ. If the query space were clean, a domain check could be a footnote. In the current Seedance result set, it is part of the main answer.

Where to go next once the access question is settled

This page should solve route choice first, then hand you to the right next page instead of pretending one article can do everything well.

Use these sibling guides if your main question changes:

- Go to the Seedance 2.0 pricing guide if you are now choosing between limited free access, recurring paid use, and platform-specific costs.

- Go to the Seedance 2.0 API guide if your real problem is programmatic access rather than browser access.

- Go to the Seedance 2.0 troubleshooting guide if the route is official but the surface is blocked, broken, or behaving inconsistently.

- Go to the Seedance vs Kling vs Sora vs Veo comparison if you have already settled the access question and now need to choose a model family for production.

That separation is deliberate. It keeps this article useful for the exact reader who searched Seedance 2.0 AI video generator online free instead of turning it back into a generic complete guide.

Frequently asked questions

Is there an official Seedance 2.0 website?

Yes, but not in the simplified way many search results imply. Seedance 2.0 is an official ByteDance model, and the official online access story currently runs through Dreamina and CapCut surfaces. If a domain uses Seedance branding but is not one of those official surfaces, do not assume it is first-party.

Can I use Seedance 2.0 for free?

You may be able to try Seedance 2.0 through limited or surface-specific free access, but you should not read that as a universal unlimited-free contract. Official try for free language is best understood as a bounded entry path unless the exact route proves otherwise.

Is seedance.tv official?

No. Dreamina's official website page explicitly warns that seedance.tv, seedance2.ai, and seedance2.app are not official ByteDance sites.

What if Dreamina or CapCut is unavailable for me?

First decide whether you want to stay inside official surfaces and wait for a different route, whether a China-only first-party path is actually realistic for you, or whether you are willing to use a non-official route with a higher trust burden. The correct fallback depends on your tolerance for region friction and trust risk, not just on which page looks easiest to click.

Where should I go for pricing or API details?

Use the pricing guide if you need cost detail, and the API guide if you need programmatic access. This page is intentionally narrower: it is the access-choice and trust-calibration layer.