Seedance 2.0 is called as an asynchronous video-generation task. A production integration should create one task, persist the returned ID, poll or receive a callback until a terminal state, copy the returned video_url into durable storage, and make retries operate on your own job record instead of blindly submitting duplicates.

For the BytePlus ModelArk route, keep the base URL, model ID, request body, and billing route together. The current global task endpoint is https://ark.ap-southeast.bytepluses.com/api/v3/contents/generations/tasks, and the route-owned Seedance 2.0 model IDs are dreamina-seedance-2-0-260128 and dreamina-seedance-2-0-fast-260128. For the China-region Volcengine Ark route, use the China endpoint and the doubao-* model IDs instead of mixing the two routes.

Submit one async task

Treat the create call as the only place where generation is started. Your application should validate the prompt, model route, references, aspect ratio, duration, and callback URL before it sends the request. After submission, store the returned task ID with your own job ID, request hash, model ID, user ID, and requested output settings.

bashcurl -X POST "https://ark.ap-southeast.bytepluses.com/api/v3/contents/generations/tasks" \ -H "Authorization: Bearer $ARK_API_KEY" \ -H "Content-Type: application/json" \ -d '{ "model": "dreamina-seedance-2-0-260128", "content": [ { "type": "text", "text": "A quiet product demo shot, slow camera move, clean studio light" } ], "ratio": "16:9", "resolution": "720p", "duration": 5, "generate_audio": false, "return_last_frame": true, "callback_url": "https://example.com/webhooks/seedance" }'

The create response gives you the task ID, not the final video. That distinction matters for retries: if the network drops after submission, first check whether your own job already has a provider task ID before creating another generation.

Use direct request-body parameters for resolution, ratio, duration, seed, watermark, and related controls. The current docs still describe older prompt-suffix parameters, but direct body fields are the safer production contract because invalid values fail visibly instead of being silently ignored.

Poll or handle callbacks

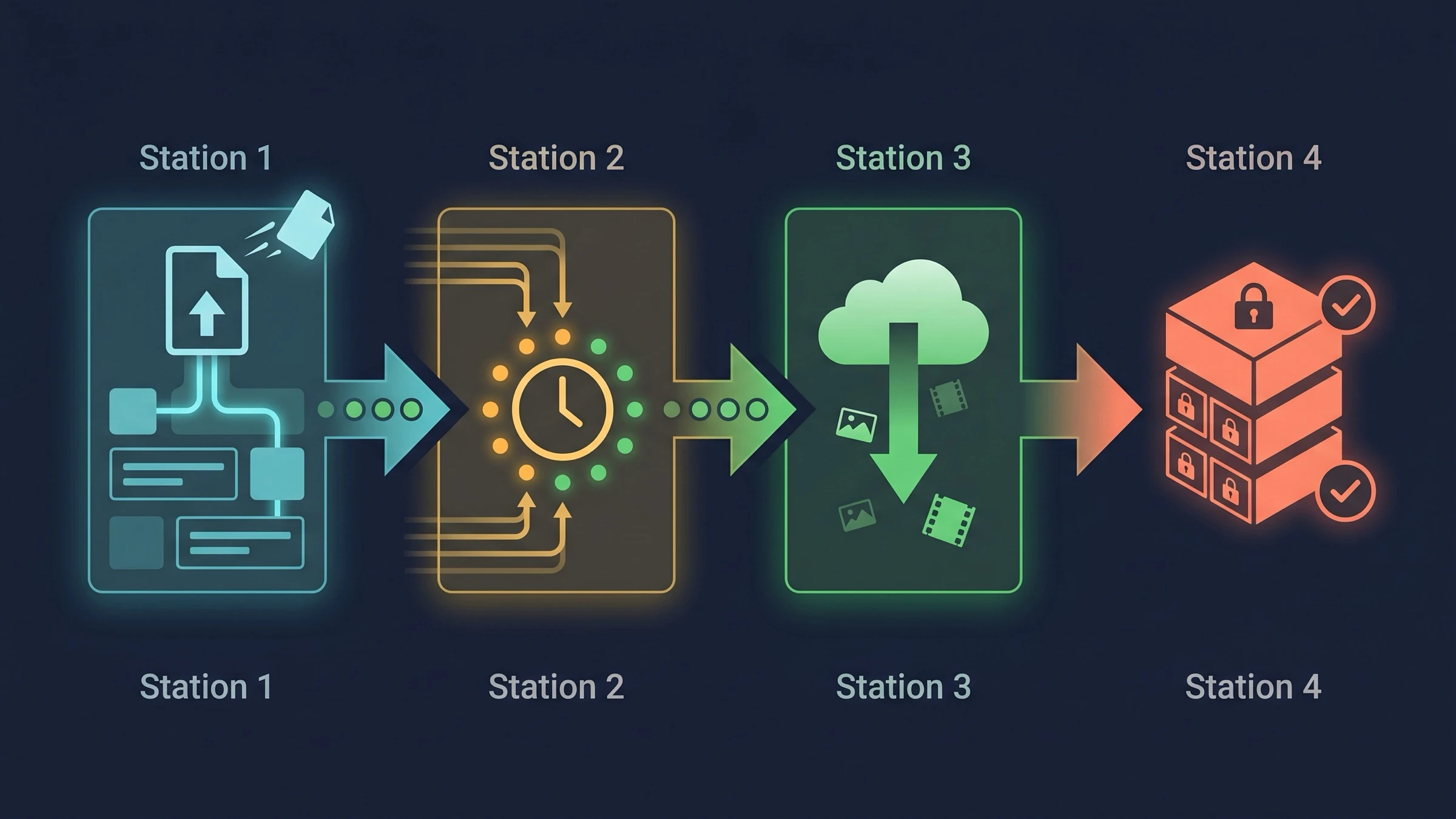

Seedance task states are not a progress bar; they are a queue lifecycle. Store them as a finite state machine:

| Provider status | App meaning | Next move |

|---|---|---|

queued | Accepted but not running | Poll with backoff or wait for callback |

running | Generation is in progress | Keep waiting; do not resubmit |

succeeded | Output is ready | Download and copy content.video_url |

failed | Provider rejected or failed the task | Save error.code and error.message; decide whether retry is allowed |

expired | The task exceeded its execution window | Mark retryable only if the request is still useful |

cancelled | A queued task was cancelled | Stop polling and surface cancellation |

bashcurl -X GET \ "https://ark.ap-southeast.bytepluses.com/api/v3/contents/generations/tasks/$TASK_ID" \ -H "Authorization: Bearer $ARK_API_KEY"

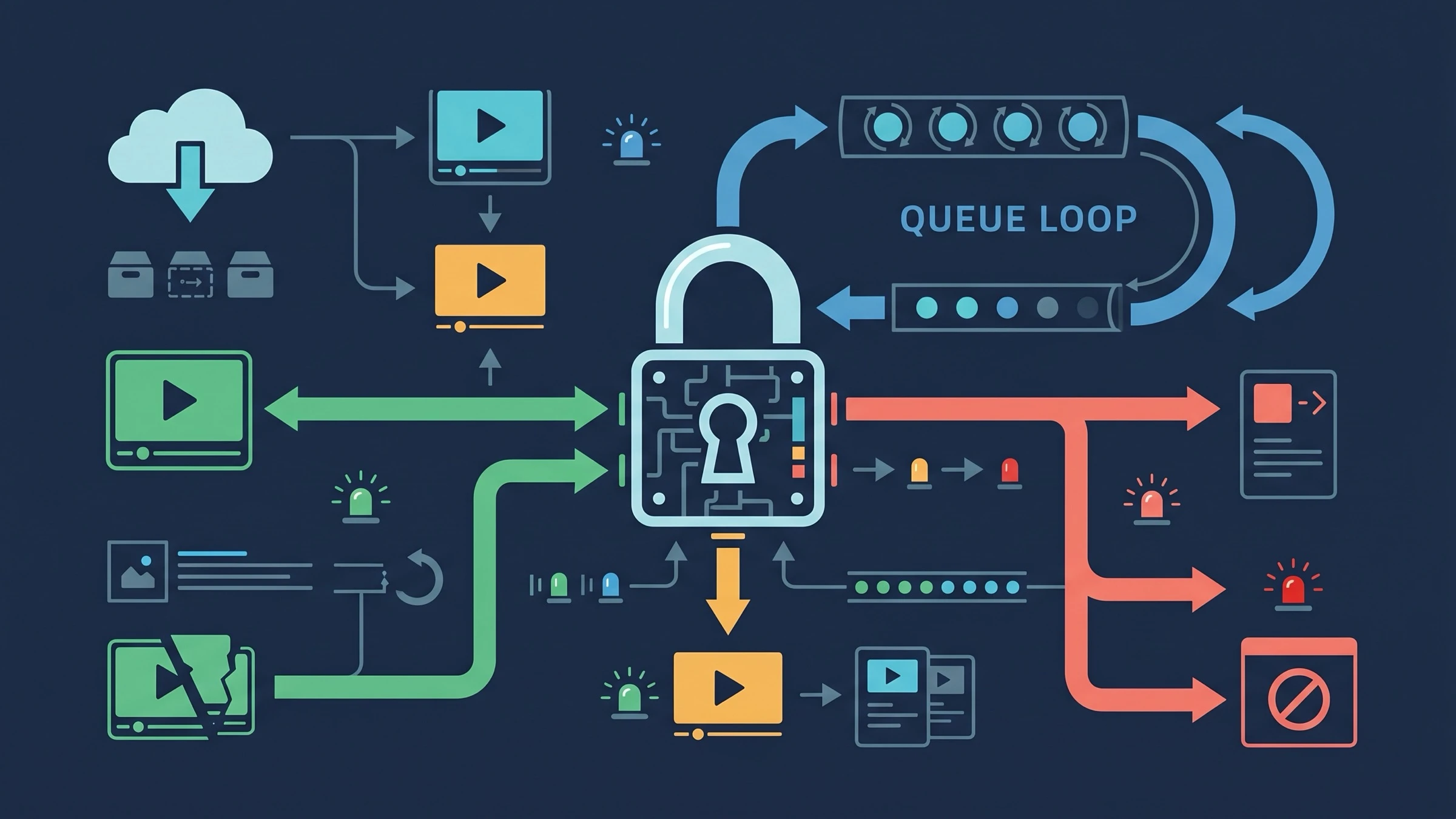

Polling can start with a short delay, then back off to a steady interval such as 10-20 seconds. Keep a maximum wall-clock timeout in your own system even if the provider has its own execution_expires_after value. If you use callbacks, still keep a poller as repair logic for missed webhook delivery, deploy outages, or signature verification bugs.

Callback handlers should be idempotent. Accept the provider payload, validate that the task ID belongs to an existing job, update the status only if the new state is allowed from the previous state, and enqueue the download step when the task becomes succeeded.

Download and store the result

On success, the retrieve response returns content.video_url as an MP4 URL. Do not leave the user-facing product dependent on that temporary URL. The official response contract says generated video URLs are cleaned after 24 hours, so your success handler should copy the file into your own object storage immediately.

tsasync function persistSeedanceOutput(job: Job, providerPayload: SeedanceTask) { const videoUrl = providerPayload.content?.video_url; if (!videoUrl) throw new Error("Seedance succeeded without video_url"); const response = await fetch(videoUrl); if (!response.ok) { throw new Error(`download failed: ${response.status}`); } await storage.putObject({ key: `seedance/${job.id}/output.mp4`, body: response.body, contentType: "video/mp4", }); if (providerPayload.content?.last_frame_url) { await copyUrlToStorage(providerPayload.content.last_frame_url, `seedance/${job.id}/last-frame.png`); } }

Save the provider task payload beside the copied asset. You will need it for support, billing reconciliation, and reproduction: model ID, task status, seed, ratio, resolution, duration or frames, generate_audio, and usage.total_tokens.

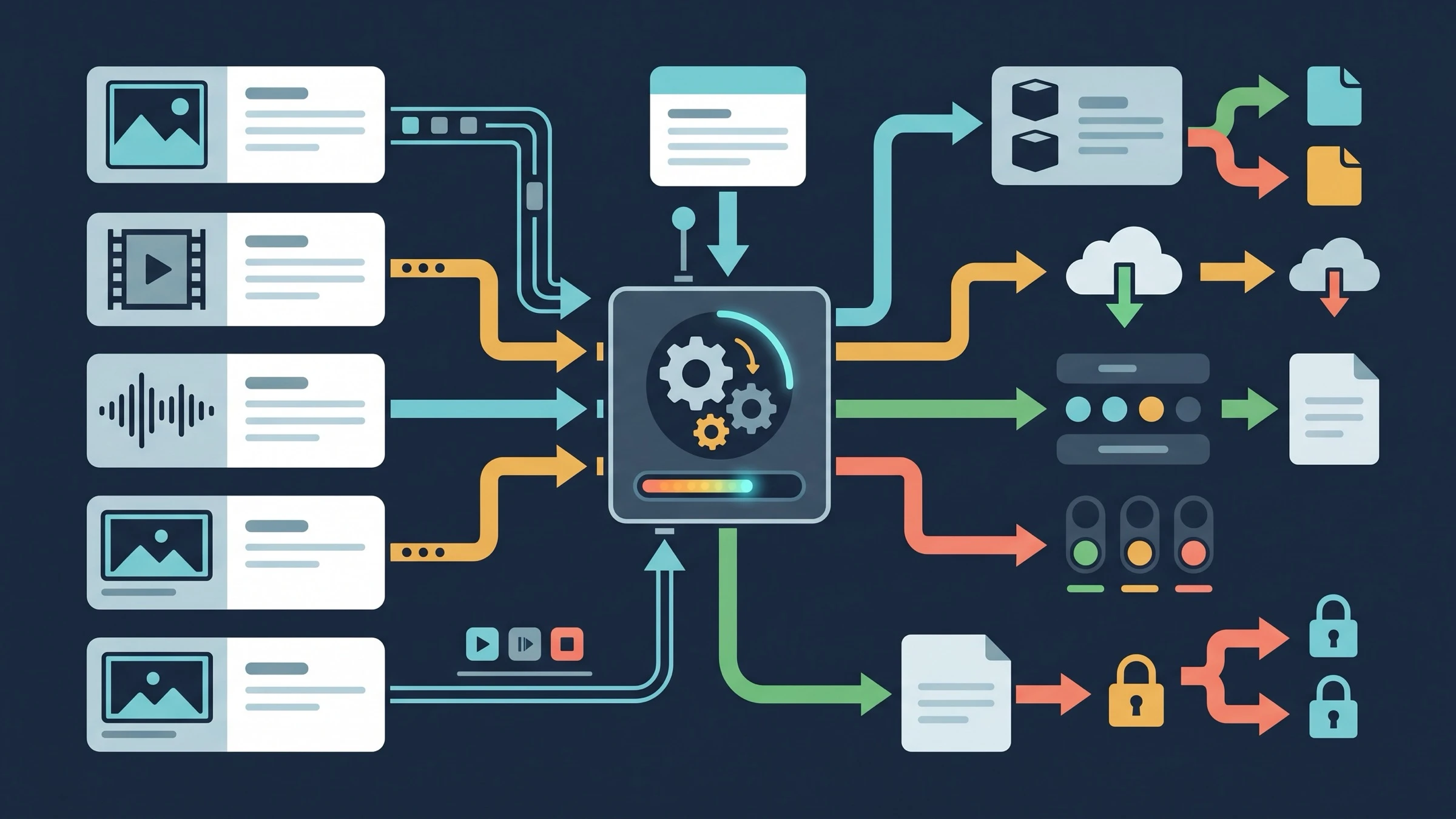

Pass reference media in content

Seedance 2.0 supports text-only generation, first-frame image-to-video, first-and-last-frame image-to-video, multimodal references, and reference video or audio inputs. These are not just optional attachments; the content array describes the generation mode.

For first-frame generation, pass one image item and set role to first_frame or omit the role if the route allows that mode. For first-and-last-frame generation, pass two image items and set role to first_frame and last_frame. For multimodal reference generation, use reference_image, reference_video, and reference_audio roles, and keep the mode separate from strict first/last-frame jobs.

json{ "model": "dreamina-seedance-2-0-260128", "content": [ { "type": "text", "text": "Use [image 1] as the product design reference and keep the shot minimal." }, { "type": "image_url", "image_url": { "url": "https://cdn.example.com/reference-product.webp" }, "role": "reference_image" } ], "ratio": "16:9", "duration": 5, "generate_audio": false }

Reference rules worth enforcing before submission:

- Use public URLs, Base64 data URLs, or supported asset IDs for images; avoid Base64 for large files because request size limits still apply.

- Keep image dimensions and aspect ratios inside the provider limits before you call the API.

- Use video references only with Seedance 2.0 routes that support video input; keep individual and total reference duration inside the documented limits.

- Do not submit audio by itself. The current contract requires audio to accompany at least one image or video reference.

- Keep face and authorization boundaries explicit. If your workflow includes real people, build a review and consent layer before submission.

Make retries idempotent

The most expensive Seedance bug is duplicate generation. A retry policy should be based on your own durable job record, not on whether the HTTP client wants to replay a request.

Create a deterministic request hash from the normalized model ID, route, prompt, media URLs or asset IDs, output settings, and end-user identifier. When a create request begins, insert a job row with that hash and a local status such as submitting. If a second request arrives with the same hash, return the existing job instead of submitting a new provider task.

Retry only the operations that are safe to repeat:

| Operation | Retry rule |

|---|---|

| Create task before a provider ID is stored | Retry after checking the job row and request hash |

| Create task after a provider ID is stored | Do not resubmit; resume polling that ID |

| Poll task | Retry with backoff on network and 5xx failures |

| Callback processing | Safe to replay if state transitions are idempotent |

| Download output | Safe to retry until the temporary URL expires |

| Failed generation | Retry only after classifying the provider error |

For failed tasks, separate bad input from transient infrastructure. Validation errors, unsupported media, policy failures, and incompatible parameters should return a repair message to the user. Rate limits, timeouts, and temporary 5xx responses can go through backoff with a cap. A useful production rule is three bounded attempts for transient transport work and zero automatic resubmissions for provider-level generation failures unless the error code is known to be retryable.

A production checklist

Before exposing the integration to users, confirm that each layer is owned:

- Configuration keeps BytePlus

dreamina-*IDs separate from Volcenginedoubao-*IDs. - The create endpoint, retrieve endpoint, model ID, and billing route are stored together.

- The app stores provider task IDs before polling starts.

- Webhooks are idempotent and can be replayed.

- Polling has backoff, a maximum wall-clock timeout, and a repair path for missed callbacks.

- Successful

video_urloutputs are copied to durable storage before the 24-hour cleanup window matters. - Reference media is validated before submission.

- Retry behavior cannot create duplicate paid generations for the same app-level job.

FAQ

Is the Seedance 2.0 API synchronous?

No. Treat it as an async task API. The create call returns a task ID; the generated MP4 appears later in the retrieve response after the task reaches succeeded.

Should I use polling or callbacks?

Use callbacks for low-latency updates and keep polling as repair logic. A callback-only system can lose state during deploys, verification bugs, or temporary endpoint failures.

How long can I keep the returned video URL?

Copy it immediately. The returned content.video_url is a temporary MP4 URL and the official response contract says generated videos are cleaned after 24 hours.

Can I pass both first/last frames and reference media in one task?

Keep those modes separate. First/last-frame generation uses first_frame and last_frame; multimodal reference generation uses reference_image, reference_video, and reference_audio. If you need exact first and last frames, use the first/last-frame mode rather than trying to imply it through references.

What should happen when a create request times out?

Do not immediately submit a second task. Look up your local job by request hash. If a provider task ID was stored, resume polling it. If no provider ID exists and the create attempt definitely did not complete, retry the create operation with the same local job record.