The Nano Banana Pro API does not have a hidden discount plan. The official model is still gemini-3-pro-image-preview, and Google currently exposes one clear real-time price floor: $0.134 per 1K/2K image and $0.24 per 4K image. If you can wait for asynchronous processing, Google's own Batch/Flex route halves that to $0.067 and $0.12. And if you keep seeing lower real-time numbers such as $0.05 per image, those are usually relay-provider contracts, not a hidden Google tier.

That distinction matters because the real decision is not simply AI Studio or Vertex AI. The real decision is which contract you are buying: official Google real time, official Google Batch, relay-market access, or a cheaper image model that makes Pro unnecessary. AI Studio and Vertex still matter, but mostly for auth, governance, and workflow shape, not because one of them secretly discounts the standard real-time bill.

All model IDs, preview status, pricing rows, and billing rules below were rechecked against official Google documentation on April 2, 2026. Relay-price references are treated as same-day market observations, not as first-party Google contract terms.

TL;DR

| If this is your real job | Cheapest sensible route | Current price signal | Why | Biggest caveat |
|---|---|---|---|---|
| You need the cheapest official Google route and can wait | Vertex AI Batch / Flex | $0.067 for 1K/2K, $0.12 for 4K | This is Google's real 50% discount path | It is an asynchronous workflow, not the best fit for interactive generation |
| You need the cheapest official real-time request | AI Studio or Vertex standard pay-as-you-go | $0.134 for 1K/2K, $0.24 for 4K | Same model, same Google baseline, different operating surfaces | Nano Banana Pro still has no free API tier |
| You need the cheapest same-model real-time access and do not need Google's own contract | Relay providers | Often around $0.05 per image in the live market | Lower bill and lighter onboarding can make sense for cost-first workflows | This is a different contract, billing surface, and support boundary |
| You are cost-sensitive and not sure you need Pro at all | Switch models before optimizing the route | Often a bigger saving than route tuning | Many workloads do not need Pro's premium text rendering and 4K positioning | Even the cheapest Nano Banana Pro route still costs more than not using Pro |

The practical rule is simple: use Batch when you need the cheapest Google contract, relays when you want the lowest same-model real-time bill, AI Studio when you want the fastest official first request, and a cheaper model when Pro is overkill.

The Cheapest Nano Banana Pro API Route Depends On Which Contract You Mean

The phrase "cheap Nano Banana Pro API" hides three different buying questions. The first is whether you want the cheapest official Google route. The second is whether you want the cheapest same-model real-time route, regardless of who owns the billing contract. The third is whether you should be paying for Nano Banana Pro at all.

Google's own docs now make the official part unusually clean. On the pricing page, gemini-3-pro-image-preview has no free tier, standard image output is $0.134 for 1K/2K and $0.24 for 4K, and Batch/Flex cuts that to $0.067 and $0.12. That means the cheapest first-party answer is not "AI Studio" and not "Vertex" in the abstract. It is Batch/Flex specifically.

The live market answer is different. Search results for the cheapest route are now full of relay pages advertising roughly $0.05 per image. Those pages are not necessarily lying, but they are describing a different commercial shape. You are no longer comparing two Google entry points. You are comparing Google's own contract against a provider contract that may be cheaper, easier, or looser in different ways.
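It helps to compare these contracts on your own monthly volume instead of per image. The sketch below hard-codes the official prices quoted in this article. It treats any relay price as a flat per-image number that you supply yourself, because the roughly $0.05 figure is a market observation and not a Google term. The function names are illustrative and are not part of any SDK.

```javascript
// Per-image output prices for gemini-3-pro-image-preview in USD, as quoted in
// this article (rechecked April 2, 2026). Confirm against Google's pricing page.
const OFFICIAL_PRICES_USD = {
  standard: { "1K": 0.134, "2K": 0.134, "4K": 0.24 },
  batch: { "1K": 0.067, "2K": 0.067, "4K": 0.12 },
};

// Round to whole cents so floating-point noise does not leak into reports.
function toCents(amount) {
  return Math.round(amount * 100) / 100;
}

// Estimate an official Google bill for a mix of output sizes on one route.
// counts looks like { "2K": 1000, "4K": 200 }.
function estimateOfficialCost(counts, route = "standard") {
  const prices = OFFICIAL_PRICES_USD[route];
  if (!prices) throw new Error(`Unknown route: ${route}`);
  let total = 0;
  for (const [size, n] of Object.entries(counts)) {
    const price = prices[size];
    if (typeof price !== "number") throw new Error(`Unknown image size: ${size}`);
    total += price * n;
  }
  return toCents(total);
}

// ASSUMPTION: a relay quote is a flat per-image price supplied by the caller
// (for example the ~$0.05 market observation), not a Google contract term.
function estimateFlatQuote(counts, perImageUsd) {
  const images = Object.values(counts).reduce((sum, n) => sum + n, 0);
  return toCents(images * perImageUsd);
}

const monthly = { "2K": 1000, "4K": 200 };
console.log(estimateOfficialCost(monthly, "standard")); // 182
console.log(estimateOfficialCost(monthly, "batch")); // 91
console.log(estimateFlatQuote(monthly, 0.05)); // 60
```

At 1,000 2K images and 200 4K images a month, the official real-time bill is $182 and the Batch bill is $91. That is the 50% cut in concrete terms.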

The strongest cost answer for some readers is a fourth option: stop optimizing the Pro route and read Nano Banana Pro vs Nano Banana 2 first. If the workload does not genuinely need Pro's premium text rendering, complex-layout reliability, or 4K positioning, the bigger savings move is often model substitution, not route optimization.

AI Studio Or Vertex AI: Why This Is Not The Discount Question

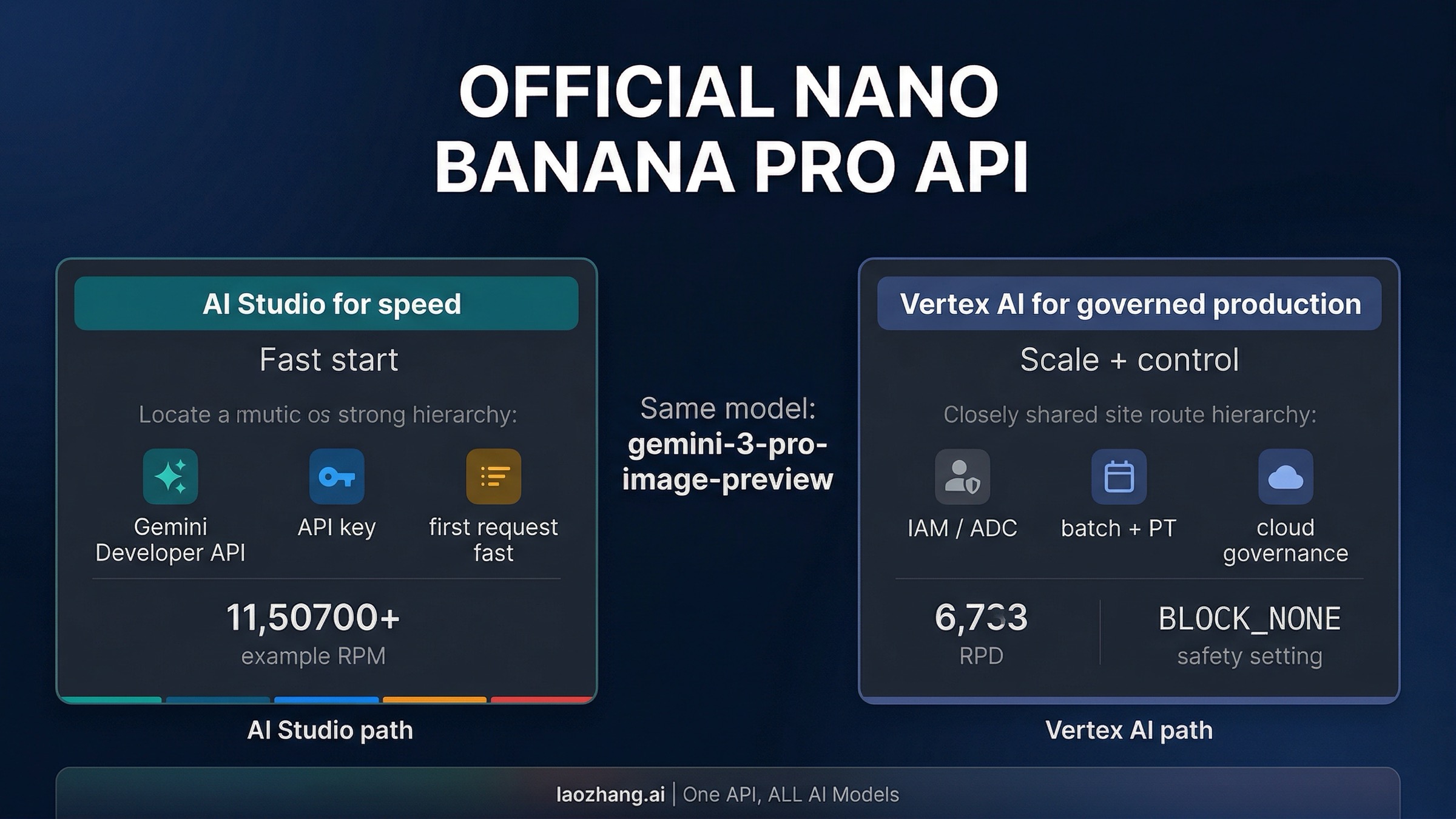

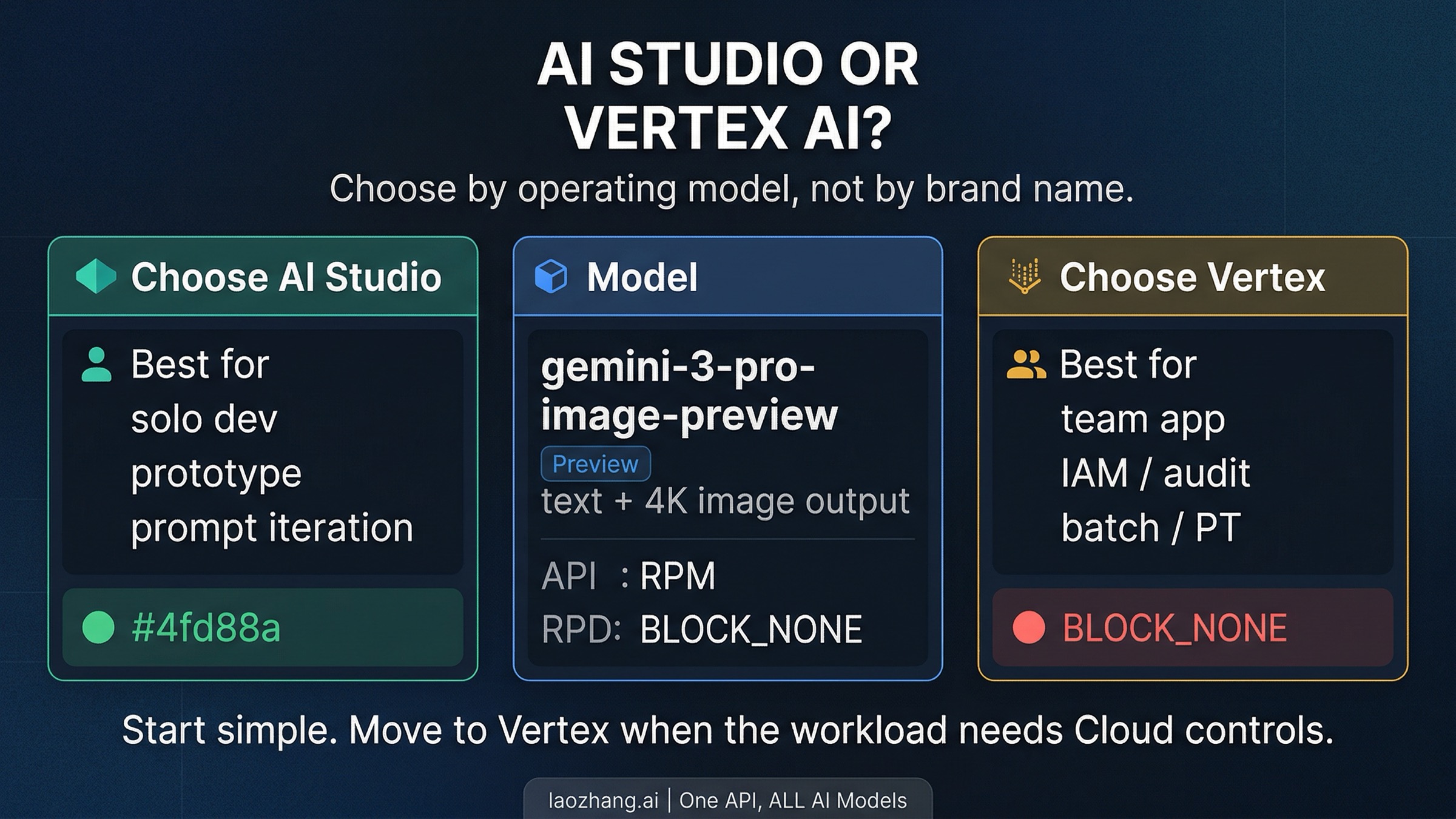

AI Studio and Vertex AI still matter. They just matter for different reasons than the first-screen cost question. Both routes can get you to the same model. What changes is not the creative capability. What changes is the surrounding authentication, billing surface, operational model, and scale controls.

AI Studio plus the Gemini Developer API is the fastest official path when the job is straightforward integration. You create an API key in Google AI Studio, test prompts in Google's own interface if you want, then call the Gemini Developer API from code. This is the right default when you are an individual developer, a small team, or anyone trying to get from "I think I want Pro" to "I have a working request" with minimal ceremony.

Vertex AI is the better fit when Nano Banana Pro becomes part of a real Cloud workload instead of a quick integration. This is where IAM, project-level governance, application default credentials, batch processing, and provisioned throughput start to matter. Google's own materials make that distinction clear enough now that it should not be blurred with price talk.

The easiest way to keep the distinction straight is:

- choose AI Studio when the problem is developer velocity

- choose Vertex AI when the problem is Cloud operations

- choose Batch/Flex on Vertex when the problem is official Google cost reduction

That last line is the important correction. Vertex becomes a pricing decision only when Batch/Flex enters the picture. Outside that, AI Studio versus Vertex is mostly an operations decision.

Route 1: AI Studio And The Gemini Developer API

For many readers, this is the correct starting point. Google AI Studio is where the Gemini Developer API key workflow lives. It is also the easiest place to validate prompts before you wire them into code. That does not mean AI Studio is "just a playground." It means the official API-key route and the official testing UI are intentionally close to each other.

The main caveat is billing. Google's current Gemini Developer API pricing page lists "Free Tier: Not available" for gemini-3-pro-image-preview. Google's billing FAQ also says that AI Studio remains free to use unless you link a paid API key for access to paid features. Once you do, usage on that key is charged. So the safe mental model is:

- AI Studio as a product surface can be free to open and use

- Nano Banana Pro API usage is still a paid contract

- "I can see it in AI Studio" does not mean "I have a free Pro API tier"

When this route is right

Choose AI Studio and the Gemini Developer API when:

- you want the shortest path to a first working request

- API-key auth is acceptable for the workload

- you are still iterating on prompts and output style

- you do not yet need Cloud IAM, batch pipelines, or organization-level controls

Minimal JavaScript example

Install the current SDK:

```bash
npm install @google/genai
```

Then send a request with your API key:

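```javascript
// Requires Node.js 18+ in an ES module context (e.g. a .mjs file) for top-level await.
import { GoogleGenAI } from "@google/genai";
import fs from "node:fs";

// Gemini Developer API client: authenticates with an API key created in AI Studio.
const ai = new GoogleGenAI({ apiKey: process.env.GEMINI_API_KEY });

const response = await ai.models.generateContent({
  model: "gemini-3-pro-image-preview",
  contents:
    "Create a clean product hero image for a mechanical keyboard on a dark studio background.",
  config: {
    responseModalities: ["IMAGE"],
    imageConfig: {
      aspectRatio: "16:9",
      imageSize: "2K", // "1K", "2K", or "4K"; the K must be uppercase
    },
  },
});

// Image bytes arrive base64-encoded in inlineData parts.
// If several image parts come back, the last one written wins.
for (const part of response.candidates?.[0]?.content?.parts ?? []) {
  if (part.inlineData) {
    fs.writeFileSync(
      "nano-banana-pro-output.png",
      Buffer.from(part.inlineData.data, "base64"),
    );
  }
}
```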

This is the most direct official path: create the key in AI Studio, export GEMINI_API_KEY, and call generateContent. Google's image docs also note a small but easy-to-miss implementation detail: K must be uppercase in 1K, 2K, and 4K.
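The uppercase rule is easy to break when sizes come from user input or config files. Normalizing the value before you build the request avoids that. Here is a minimal sketch. normalizeImageSize is a hypothetical helper, not an SDK function, and the allowed values are the three sizes the docs name.

```javascript
// Hypothetical helper (not part of @google/genai): turn inputs such as "2k"
// into the uppercase imageSize values the image docs require.
const IMAGE_SIZES = ["1K", "2K", "4K"];

function normalizeImageSize(input) {
  const size = String(input).trim().toUpperCase();
  if (!IMAGE_SIZES.includes(size)) {
    throw new Error(`Unsupported imageSize "${input}"; expected 1K, 2K, or 4K`);
  }
  return size;
}

console.log(normalizeImageSize("2k")); // prints 2K
```

Validating at this point also makes the price row explicit, because 4K is billed at the higher rate.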

If you prefer raw HTTP first, the same route works with the Gemini Developer API endpoint and x-goog-api-key authentication. The only thing that changes is transport, not the contract.
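Here is a minimal sketch of that raw-HTTP variant. It assumes Node.js 18+ for the global fetch and Buffer, and it assumes the v1beta generateContent REST endpoint on generativelanguage.googleapis.com. The body carries the same fields as the SDK example, with the image settings under generationConfig. The names buildImageRequest, extractImages, and generateViaRest are hypothetical. The script only calls the network when GEMINI_API_KEY is set.

```javascript
// Raw-HTTP sketch of the Gemini Developer API call (Node.js 18+, global fetch).
// ASSUMPTION: v1beta generateContent endpoint with x-goog-api-key auth.
const MODEL = "gemini-3-pro-image-preview";
const ENDPOINT =
  "https://generativelanguage.googleapis.com/v1beta/models/" +
  `${MODEL}:generateContent`;

// REST body: same settings as the SDK example, nested under generationConfig.
function buildImageRequest(prompt, { aspectRatio = "16:9", imageSize = "2K" } = {}) {
  return {
    contents: [{ parts: [{ text: prompt }] }],
    generationConfig: {
      responseModalities: ["IMAGE"],
      imageConfig: { aspectRatio, imageSize },
    },
  };
}

// Decode every base64 inlineData part of the first candidate into a Buffer.
function extractImages(json) {
  const parts = json?.candidates?.[0]?.content?.parts ?? [];
  return parts
    .filter((part) => part.inlineData?.data)
    .map((part) => Buffer.from(part.inlineData.data, "base64"));
}

async function generateViaRest(prompt, apiKey) {
  const res = await fetch(ENDPOINT, {
    method: "POST",
    headers: { "x-goog-api-key": apiKey, "Content-Type": "application/json" },
    body: JSON.stringify(buildImageRequest(prompt)),
  });
  if (!res.ok) throw new Error(`HTTP ${res.status}: ${await res.text()}`);
  return extractImages(await res.json());
}

// Only call the API when a key is configured; otherwise just define the helpers.
if (process.env.GEMINI_API_KEY) {
  generateViaRest(
    "Create a clean product hero image for a mechanical keyboard on a dark studio background.",
    process.env.GEMINI_API_KEY,
  )
    .then(async (images) => {
      // Dynamic import works from both CommonJS and ES modules.
      const { writeFile } = await import("node:fs/promises");
      if (images.length > 0) await writeFile("rest-output.png", images[0]);
    })
    .catch((err) => {
      console.error(err);
      process.exitCode = 1;
    });
}
```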

Route 2: Vertex AI For Governed Production Use

Vertex AI is the better answer once the integration stops being "one developer with an API key" and starts being "a Cloud workload that other people must operate safely." That can happen earlier than many teams expect. The moment you need IAM, service accounts, project-level billing separation, batch work, or a path toward provisioned capacity, Vertex AI stops looking like overkill and starts looking like the correct home.

The important conceptual shift is authentication. With the Gemini Developer API route, your code is centered around an API key. With Vertex AI, your code is centered around Cloud auth. Google's current image-generation guide shows the GenAI SDK configured for Vertex with GOOGLE_CLOUD_PROJECT, GOOGLE_CLOUD_LOCATION, and GOOGLE_GENAI_USE_VERTEXAI=True, which is the cleanest sign that you are now in the Google Cloud operating model rather than the standalone API-key model.

When this route is right

Choose Vertex AI when:

- the app belongs to a team instead of one developer

- you need IAM, audit, and project governance

- you want batch processing or provisioned throughput

- service-account or ADC-based auth is a better fit than distributing API keys

- Cloud billing and operational ownership matter as much as prompt quality

Minimal Node.js example on Vertex

Install the same SDK:

```bash
npm install @google/genai
```

Set the environment variables shown in Google's Vertex docs:

```bash
export GOOGLE_CLOUD_PROJECT=your-project-id
export GOOGLE_CLOUD_LOCATION=global
export GOOGLE_GENAI_USE_VERTEXAI=True
```

Then call the same model through Vertex:

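```javascript
// Requires Node.js 18+ in an ES module context (e.g. a .mjs file) for top-level await.
// Auth comes from Application Default Credentials, not an API key
// (for local work, e.g. `gcloud auth application-default login`).
import fs from "node:fs";
import { GoogleGenAI, Modality } from "@google/genai";

// Vertex mode: the client targets a Cloud project and location.
const client = new GoogleGenAI({
  vertexai: true,
  project: process.env.GOOGLE_CLOUD_PROJECT,
  location: process.env.GOOGLE_CLOUD_LOCATION || "global",
});

const response = await client.models.generateContent({
  model: "gemini-3-pro-image-preview", // same model string as the API-key route
  contents:
    "Create a premium launch poster for a smart watch, crisp typography, dark editorial lighting.",
  config: {
    responseModalities: [Modality.IMAGE],
  },
});

// Save the base64 image part(s); if several come back, the last one wins.
for (const part of response.candidates?.[0]?.content?.parts ?? []) {
  if (part.inlineData) {
    fs.writeFileSync(
      "vertex-nano-banana-pro-output.png",
      Buffer.from(part.inlineData.data, "base64"),
    );
  }
}
```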

Notice what did not change: the model string. Notice what did change: the client setup and the surrounding auth assumptions. That is the whole article in code form.

The Official Discount Is Batch, Not A Hidden AI Studio Plan

This is the section most ranking pages still blur. Google's own pricing now makes the first-party answer clean enough to say directly: the official discount is Batch/Flex. If your workflow can tolerate asynchronous completion, the Google bill drops from $0.134 to $0.067 for 1K/2K and from $0.24 to $0.12 for 4K.

That does not mean every official user should start in Vertex AI on day one. It means the decision tree is sequential. First ask whether you need the cheapest Google contract. If yes, Batch is the answer. If no, ask which official operating surface fits the workload better. That is where AI Studio and Vertex AI re-enter as route choices.
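The sequence can be written down as a small function. This is a sketch under stated assumptions, and the flag names are hypothetical. The ordering combines this paragraph with the TL;DR rules and the cost traps in this section:

- model choice first
- then Batch
- then relay versus official
- then AI Studio versus Vertex

```javascript
// Hypothetical encoding of the route decision order; flag names are illustrative.
function chooseRoute({ needsPro, needsGoogleContract, canRunAsync, needsCloudGovernance }) {
  // Model choice comes first: a cheaper model beats any Pro route on cost.
  if (!needsPro) return "cheaper model";
  // Cheapest first-party contract, if the workload tolerates async completion.
  if (needsGoogleContract && canRunAsync) return "Vertex AI Batch/Flex";
  // Lowest same-model bill, on a third-party contract.
  if (!needsGoogleContract) return "relay provider";
  // Official real time: pick the surface by operational need, not price.
  return needsCloudGovernance ? "Vertex AI (standard)" : "AI Studio + Gemini Developer API";
}

console.log(
  chooseRoute({
    needsPro: true,
    needsGoogleContract: true,
    canRunAsync: false,
    needsCloudGovernance: false,
  }),
); // prints AI Studio + Gemini Developer API
```

The relay branch assumes the relay quote undercuts Batch, as the roughly $0.05 observation suggests. If the quote you actually receive does not, send asynchronous work to Batch even when a Google contract is optional.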

There are three practical cost traps worth avoiding here.

- Do not confuse AI Studio access with free API access. Google still lists "Free Tier: Not available" for gemini-3-pro-image-preview.

- Do not confuse API keys with pricing scope. Google's rate limits are applied per project, not per API key, so creating extra keys in the same project neither raises your limits nor unlocks a cheaper tier.

- Do not optimize the route before confirming you need Pro. If the job does not truly need Pro's premium positioning, the bigger savings move is still model choice.

If you know you need Google's own contract and want the full implementation detail behind the 50% cut, continue with our Gemini 3 Pro Image Batch API discount guide. If you want every access channel in one place, read the broader Nano Banana Pro pricing guide.
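The Batch path changes how you submit work. Instead of sending one request per image, you package the prompts into a file and submit them as a job. The sketch below only builds that JSONL input. It assumes the Vertex AI batch-prediction format, in which each line wraps a generateContent-style request under a "request" key. toBatchJsonl is a hypothetical name. Uploading the file to Cloud Storage and creating the job are left to Google's batch documentation.

```javascript
// ASSUMPTION: Vertex AI batch-prediction input, one JSON object per line,
// each wrapping a generateContent-style request under "request".
function toBatchJsonl(prompts, imageSize = "2K") {
  return prompts
    .map((text) =>
      JSON.stringify({
        request: {
          contents: [{ role: "user", parts: [{ text }] }],
          generationConfig: {
            responseModalities: ["IMAGE"],
            imageConfig: { imageSize },
          },
        },
      }),
    )
    .join("\n");
}

const jsonl = toBatchJsonl([
  "Product hero image for a mechanical keyboard on a dark studio background.",
  "Launch poster for a smart watch, crisp typography, dark editorial lighting.",
]);
console.log(jsonl.split("\n").length); // prints 2
```

At the prices quoted in this article, those two 2K images would bill at $0.067 each instead of $0.134 on the real-time route.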

Can You Start In AI Studio And Move To Vertex Later?

Yes, and for many teams that is the most rational progression. Start in AI Studio when the task is learning prompt behavior, validating output quality, and shipping the first thin integration. Move to Vertex AI when the architecture starts asking for Cloud-native controls rather than just a working endpoint.

The migration is easier than it sounds because the model identity does not change. You are not abandoning Nano Banana Pro for something else. You are changing the surrounding contract:

- from API key auth to Cloud auth

- from AI Studio project/key management to Cloud project/IAM operations

- from quick integration defaults to more explicit operational control
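Only the client setup changes, so one way to keep the migration small is to derive the client options from environment variables. This sketch uses the same variable names as the examples in this article, and the helper name is hypothetical. It returns a plain options object that you would pass to new GoogleGenAI(...) from @google/genai. The model string and the generateContent call stay untouched.

```javascript
// Hypothetical helper: build GoogleGenAI constructor options from the same
// environment variables used in this article, so migrating is a config change.
function clientOptionsFromEnv(env = process.env) {
  const useVertex = String(env.GOOGLE_GENAI_USE_VERTEXAI ?? "").toLowerCase() === "true";
  if (useVertex) {
    // Vertex AI: Cloud auth (ADC or a service account); no API key involved.
    if (!env.GOOGLE_CLOUD_PROJECT) {
      throw new Error("GOOGLE_CLOUD_PROJECT must be set for Vertex AI");
    }
    return {
      vertexai: true,
      project: env.GOOGLE_CLOUD_PROJECT,
      location: env.GOOGLE_CLOUD_LOCATION || "global",
    };
  }
  // Gemini Developer API: API key created in AI Studio.
  if (!env.GEMINI_API_KEY) {
    throw new Error("GEMINI_API_KEY must be set for the Gemini Developer API");
  }
  return { apiKey: env.GEMINI_API_KEY };
}

console.log(clientOptionsFromEnv({ GEMINI_API_KEY: "example-key" }));
```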

That is why it is usually a mistake to start every experiment in Vertex AI, and it is also a mistake to assume you should stay on the AI Studio path forever. Start with the fastest official route when you are learning, move to Vertex when Cloud control matters, and switch to Batch when first-party cost reduction becomes the main requirement. If you still need the exact key flow, use our Nano Banana Pro API key guide.

Choose In 30 Seconds

If you still want the shortest decision rule possible, use this one.

Start with Vertex AI Batch / Flex if your real question is:

- "What is the cheapest official Google route?"

- "Can this run asynchronously?"

- "Do I still need first-party billing and governance?"

Start with a relay provider if your real question is:

- "What is the cheapest same-model real-time route?"

- "Do I care more about the bill than about Google's own contract?"

- "Can I accept a third-party support and compliance surface?"

Start with AI Studio and the Gemini Developer API if your real question is:

- "How do I get the first request working fast?"

- "How do I validate prompts before building more infrastructure?"

- "Can I use the official Google path without setting up Cloud operations first?"

Start with Vertex AI if your real question is:

- "How do I make this fit our Google Cloud environment?"

- "How do I give a team governed access instead of passing around API keys?"

- "How do I plan for batch or provisioned capacity?"

And if your real question is actually whether Pro is the right model at all, do not force the route decision before the model decision. Read Nano Banana Pro vs Nano Banana 2 first. For many workloads, that is where the bigger cost and architecture win sits.