Gemma 4 and Gemini 3.1 Pro are not two equal entries on one leaderboard. Gemma 4 is the better route when you need open weights, local control, privacy, or customization. Gemini 3.1 Pro is the better route when you need managed long context, Google-hosted tools, and faster setup. If one workflow needs both, use Gemma for local or sensitive stages and Gemini for hosted synthesis or tool-heavy steps.

If you searched for Gemma 4 vs Gemini 3.1 Pro, the real choice is open-weight control versus managed platform leverage, not a simple benchmark winner. As of April 11, 2026, Gemini 3.1 Pro is still listed as a Preview model in Google's Gemini 3 documentation, so pricing and availability claims should stay tied to current official pages rather than old benchmark takes.

Verified against the official Gemini 3 developer guide, Gemini API pricing page, and Google-published Gemma 4 model cards on April 11, 2026.

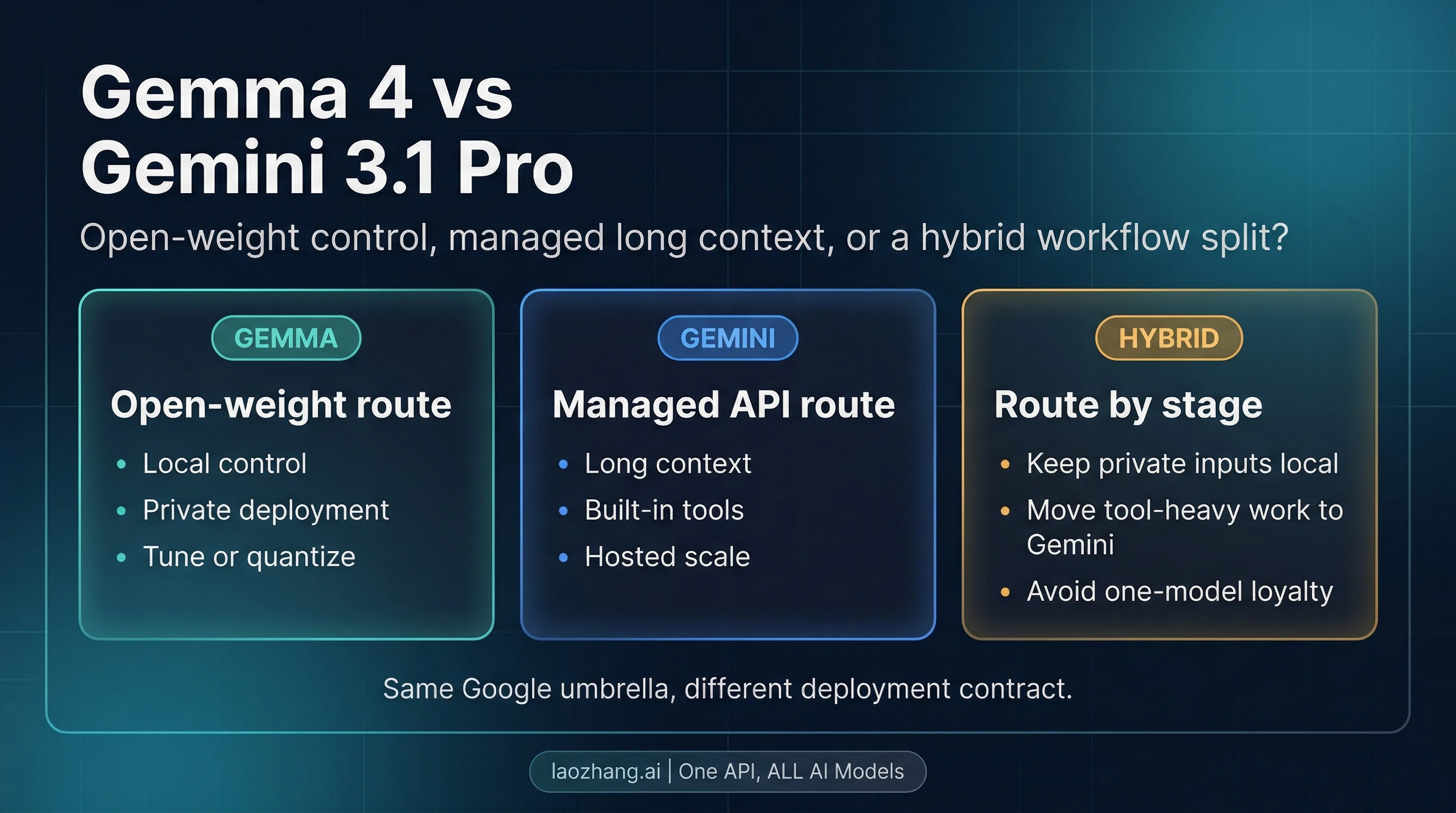

Start Here: Which route should you pick?

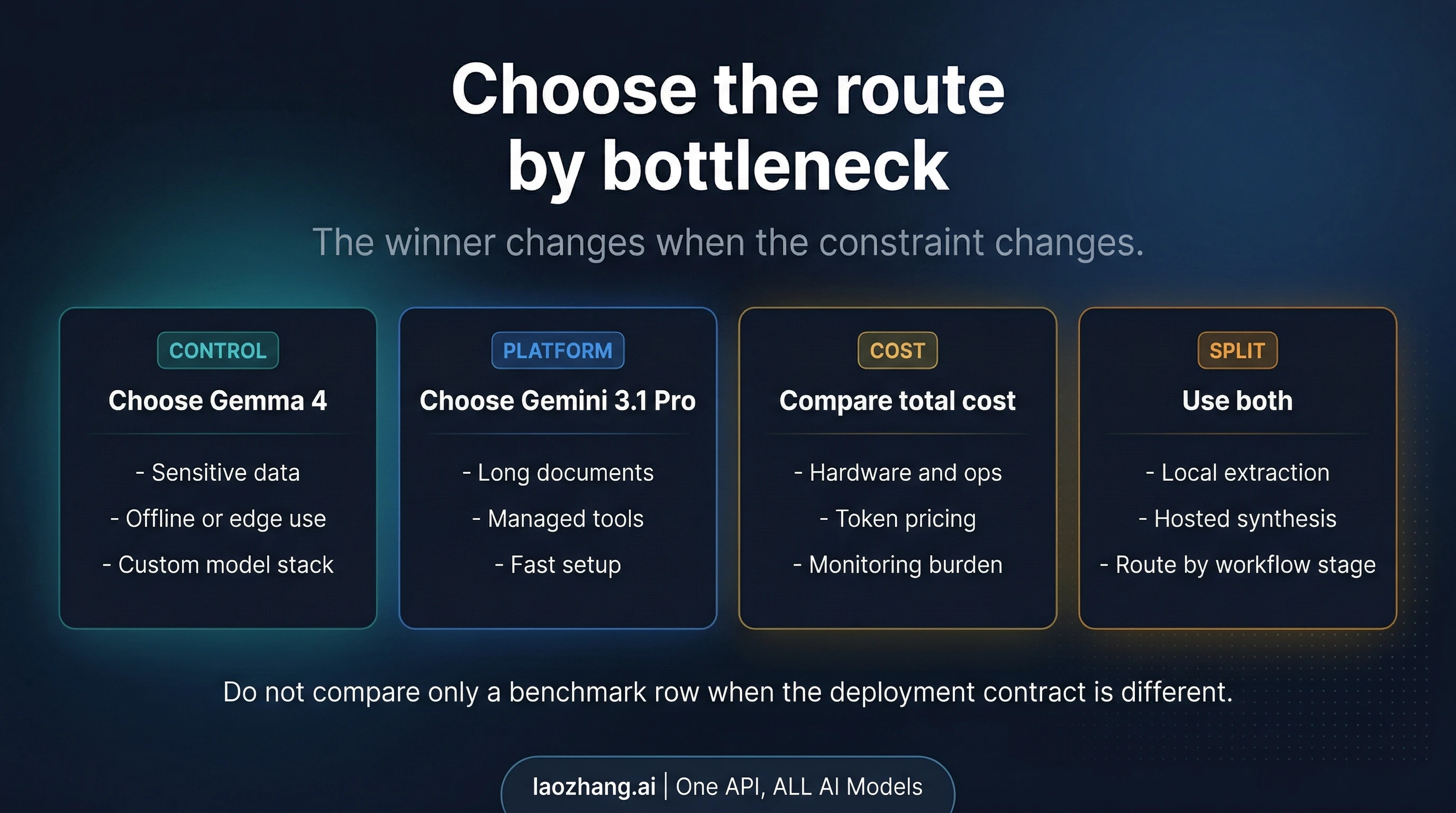

The fast answer is not a single winner. The useful question is: what is your real bottleneck right now?

| If your real bottleneck is... | Start with... | Why | Main catch |
|---|---|---|---|
| Sensitive data, offline work, custom deployment, or owning inference | Gemma 4 | It is Google's open-weight family, so you control runtime, hardware, privacy boundary, and adaptation path | You also own more engineering work |
| Long context, built-in Google tools, and a faster managed path | Gemini 3.1 Pro | It gives you a hosted route with large context and platform tooling | It is still Preview, and cloud processing is part of the contract |
| One workflow has both private local stages and cloud-heavy reasoning or tooling stages | Hybrid | You can keep sensitive or simple stages local, then send only the parts that need managed leverage to Gemini | Multi-model routing only pays off if the workflow really has two different constraints |

The rest of this page defends that route board. If you need the simplest practical rule, it is this: choose Gemma 4 when sovereignty is the bottleneck, choose Gemini 3.1 Pro when managed leverage is the bottleneck, and go hybrid only when the workflow itself splits into two different jobs.

What you are actually comparing

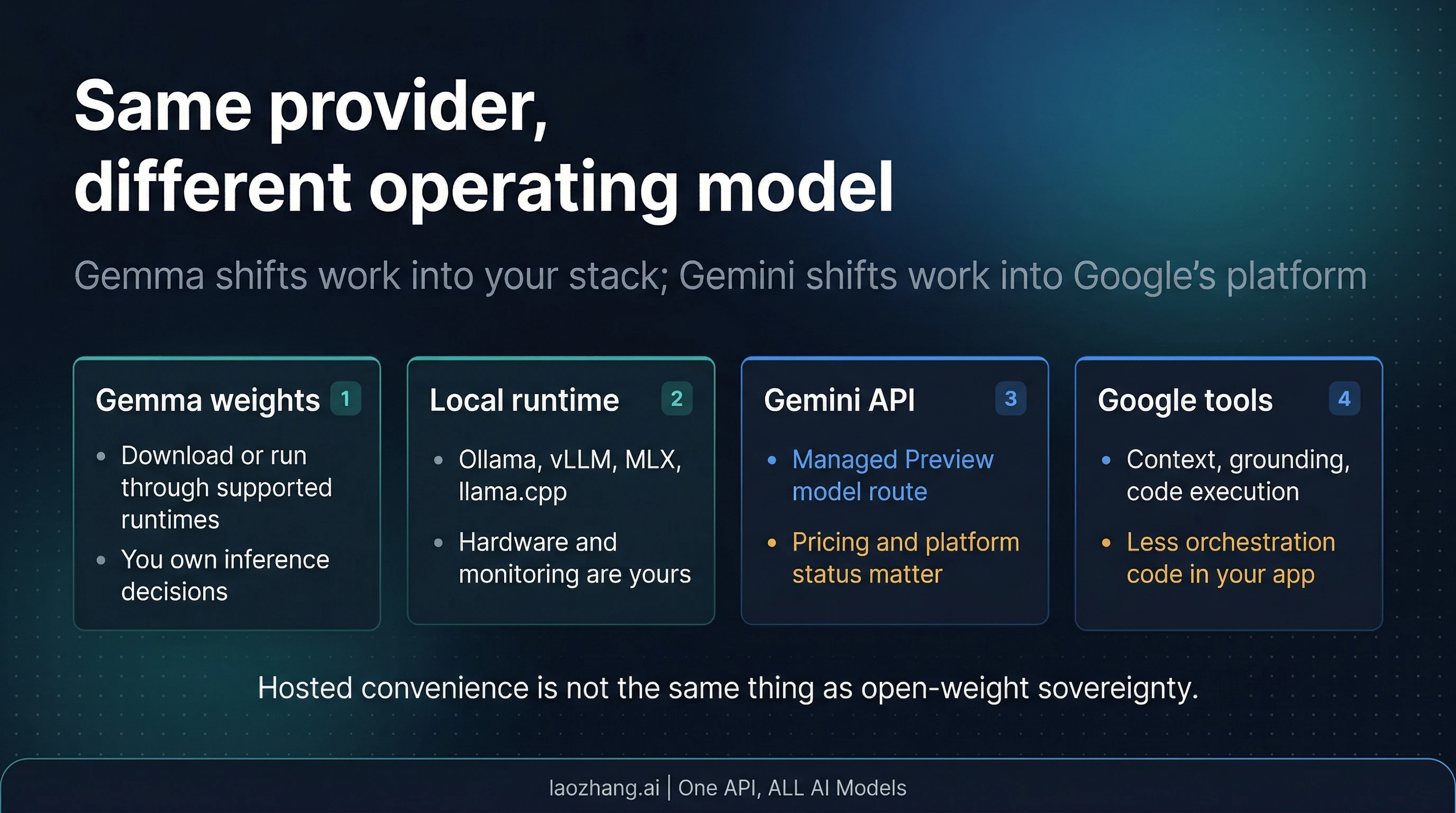

Many comparison pages flatten this topic into a benchmark showdown, but that hides the most important fact: Gemma 4 and Gemini 3.1 Pro do not live at the same product layer.

Gemma 4 is Google's current open-weight family. The official Google-published Gemma 4 model cards describe it as an Apache 2.0 family with four main branches: E2B, E4B, 26B A4B, and 31B. That means the real Gemma decision is a deployment decision first. You are choosing whether to run a family of models locally, on your own infrastructure, or through a temporary hosted surface that helps you evaluate an open model before deciding how to deploy it.

Gemini 3.1 Pro is a managed Preview route in Google's Gemini 3 line. The official Gemini 3 developer guide presents gemini-3.1-pro-preview as the complex-task model in the series, with a 1M-token input context window, thinking controls, and Google-managed tools such as Search grounding, URL context, code execution, and file search. That makes the real Gemini decision a managed-platform choice first: you are paying for hosted leverage, not for weight ownership.

That is why this page does not open with a benchmark table. Benchmarks can help once the route is clear, but the first thing to settle is the product boundary. Same provider does not mean same contract, and SDK convenience does not change who owns the runtime, the privacy boundary, inference operations, or upgrade risk.

One more correction matters here. The search query treats "Gemma 4" as if it were a single model; it is a family. That does not mean this article should become a full family-selection guide, though. If your next question is which Gemma 4 branch to run, go deeper in the dedicated Gemma 4 guide. This page owns the higher-level route choice: open weights versus managed Gemini 3.1 Pro.

Choose Gemma 4 when control is the bottleneck

Choose Gemma 4 when your project becomes worse if the model stays fully managed. That usually means one of four things: your data is sensitive enough that local or self-managed boundaries matter, your workflow needs offline or near-edge behavior, you need to tune or adapt the model stack, or you simply want to own the inference path rather than rent it as a black box.

This is where Gemma 4's open-weight nature matters more than any generic capability argument. Because the family is open-weight and Apache 2.0, you can treat the model as infrastructure. You can choose runtimes, choose hardware, decide how much context you can afford locally, and decide whether the model should sit on a laptop, a workstation, or a more formal self-hosted stack. That is a completely different kind of freedom from what you get with a managed API, and for some teams it is the deciding factor before capability details even begin.

Gemma 4 also gives you a meaningful family split instead of one blurry default. The small E2B and E4B models sit on the lighter, more edge-friendly side of the family with 128K context. The larger 26B A4B and 31B branches move up to 256K context and make more sense for heavier workstation-class local work. If your next question is which branch to pick, the shortest useful answer is: start with E4B for edge-style work and 26B A4B for serious local workstation work, then use the Gemma 4 guide for the exact branch decision.
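If it helps to encode that starting rule, here is a minimal Python sketch. The branch names and nominal context sizes come from the summary above; the `tier` labels, the fallback from an edge branch to 26B A4B when a prompt will not fit, and every function name are illustrative assumptions, not part of any Google tooling.

```python
# Hypothetical helper; branch names and nominal windows follow the summary above.
# "128K"/"256K" are written as 128_000/256_000; confirm exact limits in the model cards.
GEMMA4_CONTEXT = {
    "E2B": 128_000,
    "E4B": 128_000,
    "26B A4B": 256_000,
    "31B": 256_000,
}

# Suggested starting branch per deployment tier (illustrative labels).
DEFAULT_START = {"edge": "E4B", "workstation": "26B A4B"}


def starting_branch(tier: str, prompt_tokens: int) -> str:
    """Pick a starting Gemma 4 branch for a deployment tier.

    prompt_tokens should leave headroom for the expected output, since the
    context window covers both. If the tier's default branch is too small,
    fall back to the workstation default; beyond that, nothing fits.
    """
    if tier not in DEFAULT_START:
        raise ValueError(f"unknown tier: {tier!r}")
    for name in (DEFAULT_START[tier], DEFAULT_START["workstation"]):
        if prompt_tokens <= GEMMA4_CONTEXT[name]:
            return name
    raise ValueError("prompt exceeds every Gemma 4 branch's nominal context window")
```

For example, `starting_branch("edge", 200_000)` returns `"26B A4B"`, because that request no longer fits an edge branch's nominal window.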

There is also an important pricing nuance. Google's current Gemini API pricing page shows Gemma 4 on the free tier and does not show a normal paid Gemini API row for it. That means readers should not confuse hosted evaluation access with the deeper product contract. The durable identity of Gemma 4 is still open-weight deployment. A current try-now or hosted surface can be useful, but it does not turn Gemma into "just another Gemini API SKU."

The catch is straightforward: control is work. If you choose Gemma 4, you also choose more engineering responsibility. You need to think about runtime support, hardware fit, monitoring, updates, and the practical cost of owning inference. That trade is worth it when privacy, control, adaptation, or local deployment are the real bottlenecks. It is not worth it when you mainly want a managed model to plug into a product quickly.

If your real need is local setup discipline rather than this route choice itself, continue with the OpenClaw local LLM setup guide after this page.

Choose Gemini 3.1 Pro when managed leverage is the bottleneck

Choose Gemini 3.1 Pro when the value comes from not owning the stack yourself. That usually means long-context analysis, tool-heavy workflows, Google-managed orchestration, or simply a faster path from prototype to production behavior.

The official Gemini 3 documentation makes the practical attraction easy to see. gemini-3.1-pro-preview sits in the Gemini 3 family as the route for complex multimodal work, with a 1M-token input context window and support for built-in tools such as Search grounding, code execution, file search, URL context, and custom-tool workflows. If your workflow depends on those managed features more than it depends on weight ownership, Gemini 3.1 Pro is usually the better fit even before you compare any benchmark rows.

That exact naming matters. Google's current models page treats Gemini 3.1 Pro as the managed Pro route following the shutdown of the older Gemini 3 Pro Preview on March 9, 2026. So if you really mean "the current managed Google Pro route," this comparison should stay anchored to Gemini 3.1 Pro rather than drifting into a broader Gemini roundup.

Pricing also tells a cleaner story than many roundup pages do. Google's current official pricing page lists Gemini 3.1 Pro at $2 input / $12 output per 1M tokens for prompts up to 200K, then $4 input / $18 output above 200K. That is not just a raw cost fact. It is a workflow fact. If your project lives in the "large, managed, tool-heavy, cloud-acceptable" bucket, Gemini gives you a route where long context and orchestration are part of the product rather than something you rebuild around open weights.
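To see how the 200K boundary affects a budget, here is a small Python estimator using the rates quoted above. It assumes that the prompt's token count alone decides the tier, that both input and output move to the higher rates above 200K, and that `output_tokens` already includes any thinking tokens billed as output. The function name is hypothetical, and the rates should be re-checked against the official pricing page before you rely on them.

```python
# USD per 1M tokens, as quoted above for Gemini 3.1 Pro (April 2026); rates change.
LONG_PROMPT_THRESHOLD = 200_000  # prompts above this many tokens use the "long" tier

RATES = {
    "standard": {"input": 2.00, "output": 12.00},
    "long": {"input": 4.00, "output": 18.00},
}


def estimate_cost_usd(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate one request's cost in USD.

    Assumes the prompt size alone selects the tier and that output_tokens
    already includes any thinking tokens billed at the output rate.
    """
    if prompt_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    tier = "standard" if prompt_tokens <= LONG_PROMPT_THRESHOLD else "long"
    rates = RATES[tier]
    return (prompt_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000
```

Under these rates, a 100K-token prompt with 10K output tokens comes to about $0.32, while a 300K-token prompt with the same output comes to about $1.38, because crossing 200K raises both rates.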

Preview status is the main caveat, and it should stay visible. Google's current Gemini 3 guide still labels all Gemini 3 models as Preview, and the earlier Gemini 3 Pro Preview shutdown is a reminder that Preview routes can be retired. For some teams, that is a manageable trade. For others, especially teams that need a calmer production contract right now, Preview status is not a footnote: it changes how much operational certainty you can assume.
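One way to contain Preview churn is to read the model ID from a single place and fail fast on IDs you know are retired. This Python sketch assumes a hypothetical `GEMINI_MODEL` environment variable and assumes `gemini-3-pro-preview` was the ID of the shut-down Gemini 3 Pro Preview; verify both against Google's models page.

```python
from __future__ import annotations

import os
from collections.abc import Mapping

DEFAULT_GEMINI_MODEL = "gemini-3.1-pro-preview"

# Known-retired IDs mapped to shutdown dates. "gemini-3-pro-preview" is an
# assumed ID for the Gemini 3 Pro Preview shut down on 2026-03-09.
RETIRED_MODELS = {"gemini-3-pro-preview": "2026-03-09"}


def resolve_gemini_model(env: Mapping[str, str] | None = None) -> str:
    """Return the configured model ID (hypothetical GEMINI_MODEL variable),
    falling back to the default and refusing known-retired IDs."""
    source = os.environ if env is None else env
    model = source.get("GEMINI_MODEL", DEFAULT_GEMINI_MODEL)
    if model in RETIRED_MODELS:
        raise RuntimeError(
            f"{model} was shut down on {RETIRED_MODELS[model]}; set GEMINI_MODEL to a current ID"
        )
    return model
```

Keeping the ID in one function means a future Preview rename is a configuration change rather than a code search.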

That is why the right Gemini answer is not "Gemini wins." The right answer is narrower: Gemini 3.1 Pro wins when managed leverage matters more than open deployment control. If your workflow needs large context, built-in tools, and lower setup burden, this route is hard to beat. If you need deeper Gemini pricing detail or a closer look at the current access path, continue with the dedicated guides on Gemini 3.1 Pro Preview free API access and the broader Gemini API pricing guide.

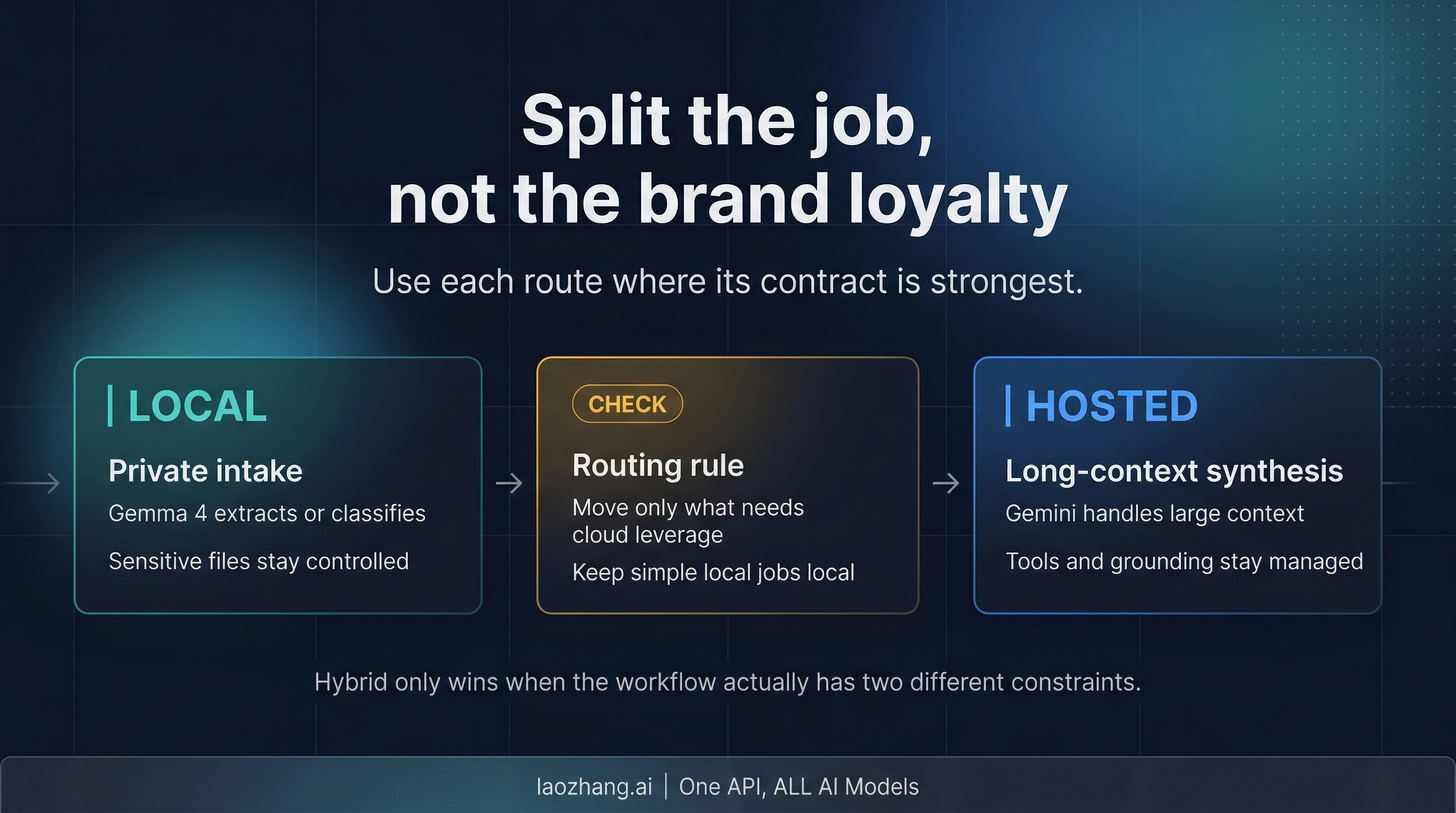

When hybrid is the right answer

The best answer is often neither "all Gemma" nor "all Gemini." It is a split workflow.

Hybrid is the right answer when one workflow contains two different contracts. A simple example: you keep sensitive intake, local extraction, or first-pass classification on Gemma 4 because the files should stay under your control. Then you send only the derived summary, reduced context, or tool-heavy reasoning stage to Gemini 3.1 Pro because that stage benefits from managed long context, grounding, or code execution.
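The data flow in that example can be sketched in Python. Both model functions are hypothetical stand-ins passed in as plain callables, so the sketch runs without any SDK; in practice `local_model` would wrap whatever Gemma 4 runtime you host and `cloud_model` would wrap a Gemini 3.1 Pro client. The point is structural: the raw document is only ever passed to the local stage.

```python
from dataclasses import dataclass
from typing import Callable

# A model is anything that maps a prompt string to a response string.
ModelFn = Callable[[str], str]


@dataclass
class HybridResult:
    local_summary: str
    cloud_answer: str


def run_hybrid(raw_document: str, question: str,
               local_model: ModelFn, cloud_model: ModelFn) -> HybridResult:
    # Stage 1 (local, e.g. Gemma 4): the raw, sensitive document goes only here.
    summary = local_model(
        "Summarize the following document, omitting personal identifiers:\n" + raw_document
    )
    # Stage 2 (cloud, e.g. Gemini 3.1 Pro): only the derived summary and the
    # question cross the boundary.
    answer = cloud_model(f"Context:\n{summary}\n\nQuestion: {question}")
    return HybridResult(local_summary=summary, cloud_answer=answer)
```

The privacy boundary is only as strong as what the local stage emits: if the summary still carries sensitive details, they will reach the cloud stage, so validate or redact stage 1 output before sending it on.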

That pattern is not a compromise. It is often the cleanest design. Gemma handles the parts where control matters. Gemini handles the parts where managed leverage matters. The important discipline is to avoid overengineering it. If your whole workflow is cloud-safe and mostly needs managed tools, stay with Gemini. If your whole workflow is local-first and simple enough to keep on owned infrastructure, stay with Gemma. Hybrid only earns its complexity when the stages really have different privacy, context, or orchestration requirements.

The simplest routing rule is usually enough: keep sensitive or lightweight stages local; move only the stages that truly need cloud leverage. That keeps the architecture honest and prevents the article's "use both" branch from becoming a fashionable excuse for unnecessary complexity.
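That rule, together with the stay-with-one-model cases above, fits in a few lines of Python. This is a minimal sketch under simplifying assumptions: each stage is described by two hypothetical flags, `sensitive` and `needs_cloud_leverage`, and a workflow counts as hybrid only when it has at least one stage of each kind.

```python
from __future__ import annotations

from dataclasses import dataclass

GEMMA, GEMINI = "gemma-4-local", "gemini-3.1-pro"  # illustrative route labels


@dataclass(frozen=True)
class Stage:
    name: str
    sensitive: bool = False             # data must stay under your control
    needs_cloud_leverage: bool = False  # long context, grounding, code execution, ...


def plan_routes(stages: list[Stage]) -> dict[str, str]:
    has_sensitive = any(s.sensitive for s in stages)
    has_cloud_need = any(s.needs_cloud_leverage for s in stages)
    if not has_cloud_need:
        # Nothing needs managed leverage: keep the whole workflow on Gemma.
        return {s.name: GEMMA for s in stages}
    if not has_sensitive:
        # Cloud-safe workflow that needs managed tools: stay with Gemini.
        return {s.name: GEMINI for s in stages}
    # Two contracts in one workflow: sensitive or lightweight stages stay local,
    # and only non-sensitive stages that need cloud leverage go to Gemini.
    return {
        s.name: GEMINI if s.needs_cloud_leverage and not s.sensitive else GEMMA
        for s in stages
    }
```

A stage flagged as both sensitive and cloud-needing stays local here; that usually signals it should be split so that a reduced, non-sensitive version can go to Gemini.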

FAQ

Is Gemma 4 more powerful than Gemini 3.1 Pro?

Not in the way most readers mean it. This is not a clean apples-to-apples benchmark contest because Gemma 4 is a family and a deployment route, while Gemini 3.1 Pro is one managed Preview route. If your definition of "more powerful" means local control, customization, and owned inference, Gemma 4 can be the stronger answer. If it means managed long-context tooling with lower setup burden, Gemini 3.1 Pro is the stronger answer.

Why does Google show Gemma 4 on a pricing page if it is an open-weight family?

Because hosted evaluation access and open-weight identity are not the same thing. Google's current pricing page lists Gemma 4 on the free tier, but that should be read as a current hosted surface, not as proof that Gemma 4 has become a normal paid Gemini API contract. Its deeper identity is still open-weight deployment.

Does Gemini 3.1 Pro being Preview matter?

Yes. It does not automatically make the model unusable, but it changes the operational contract. Preview models can change more quickly, may have different rate-limit and stability expectations, and should not be described as if they were already the calmest long-term production route. If your team is comfortable with that trade for the upside of long context and managed tools, Gemini 3.1 Pro is still a strong choice.

Can I use Gemma 4 and Gemini 3.1 Pro in one app?

Yes, and this is often the best answer when your workflow genuinely splits. Keep local-sensitive or self-managed stages on Gemma 4. Use Gemini 3.1 Pro for long-context synthesis, search-grounded reasoning, or tool-heavy stages where the managed platform earns its cost and operational tradeoffs.

Final decision

If you need to own deployment, privacy boundaries, or customization, start with Gemma 4. If you need managed long context, built-in Google tools, and a faster hosted route, start with Gemini 3.1 Pro. If your workflow clearly has both kinds of stages, use Gemma for control and Gemini for managed leverage instead of pretending there has to be one universal winner.