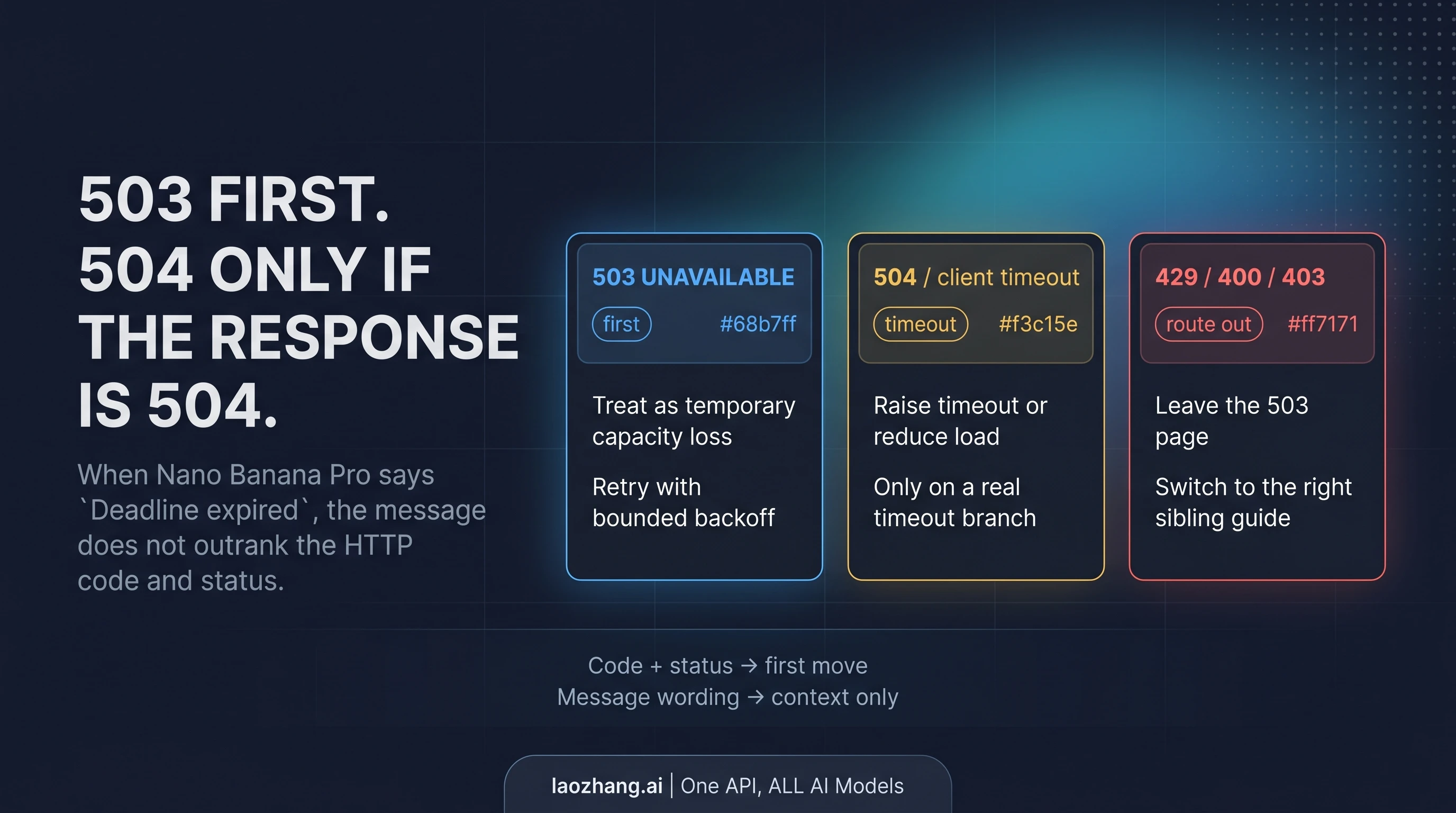

If Nano Banana Pro returns HTTP 503 UNAVAILABLE, the safest first move is to trust the code and status before you trust the message that follows them. That matters because gemini-3-pro-image-preview can return a true 503 UNAVAILABLE even when the message string says Deadline expired before operation could complete. The wording sounds like a timeout, but the recovery path still starts on the 503 branch unless the response is actually 504 DEADLINE_EXCEEDED or your own client timed out first.

That one correction is the difference between a real recovery page and a generic troubleshooting roundup. Broad guides tell you that 503 is temporary, or that deadline wording means timeout, but they do not resolve the contradiction fast enough to be useful during an outage. For this exact symptom, the page should do one job first: tell you whether to retry, raise timeout, or leave the 503 page entirely.

Checked against the Gemini API troubleshooting guide, the Gemini image generation docs, and the exact-match official forum thread for the 503 + Deadline expired variant on April 15, 2026.

Here is the short version:

- If the response is truly

503 UNAVAILABLE, treat it as a temporary capacity failure first and retry the same request path with bounded backoff. - If the response is truly

504 DEADLINE_EXCEEDED, or your client timed out before the server answered, raise timeout and/or reduce request load. - If the error is really

429,400, or403, leave this page and switch to the correct branch instead of stacking random fixes.

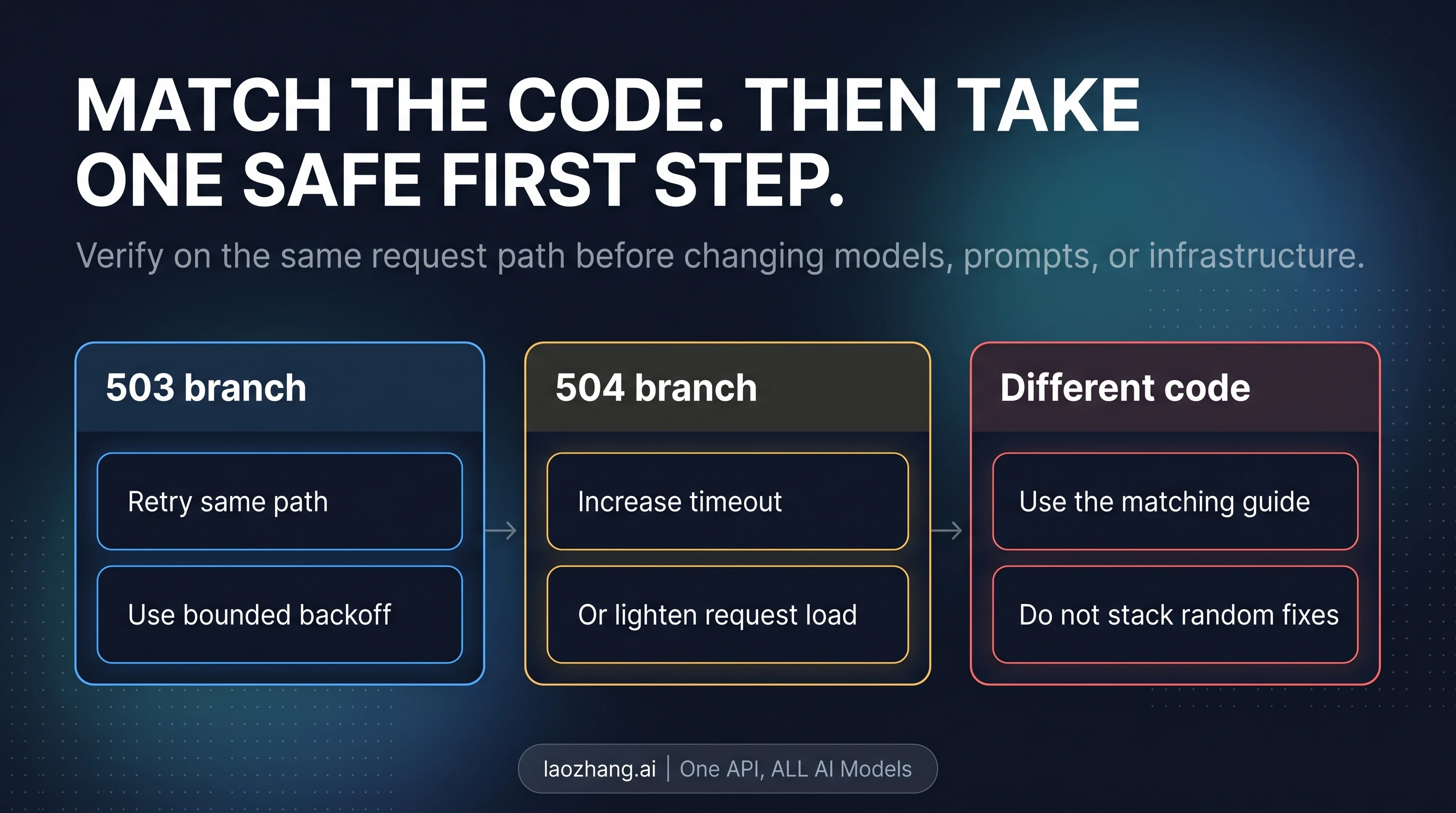

The verification rule matters just as much as the first action. Retry on the same path before you change models, prompts, SDKs, auth, or payload shape. Otherwise you do not know whether the service recovered or whether you changed the problem.

30-Second 503 vs 504 Board

The simplest working diagnostic is this:

503 UNAVAILABLE: temporary capacity loss on Google's side. Retry first.504 DEADLINE_EXCEEDED: timeout budget mismatch. Tune timeout or reduce load.429,400,403: wrong page. Route to the correct sibling guide.

That split is not guesswork. Google's current troubleshooting docs still separate 503 UNAVAILABLE from 504 DEADLINE_EXCEEDED, and that distinction is the safest way to read the Nano Banana Pro symptom. The exact message string can help you recognize the issue you are seeing, but it should not outrank the response class itself.

This is also why a lot of well-meaning advice wastes time. When someone sees the word "deadline," they start tuning timeout values before they have proved they are on a timeout branch. When someone sees the word "overloaded," they assume every failure is global capacity loss and never check whether the request actually returned a real 504. Both moves can be wrong. The page only becomes operational once you make the first split correctly.

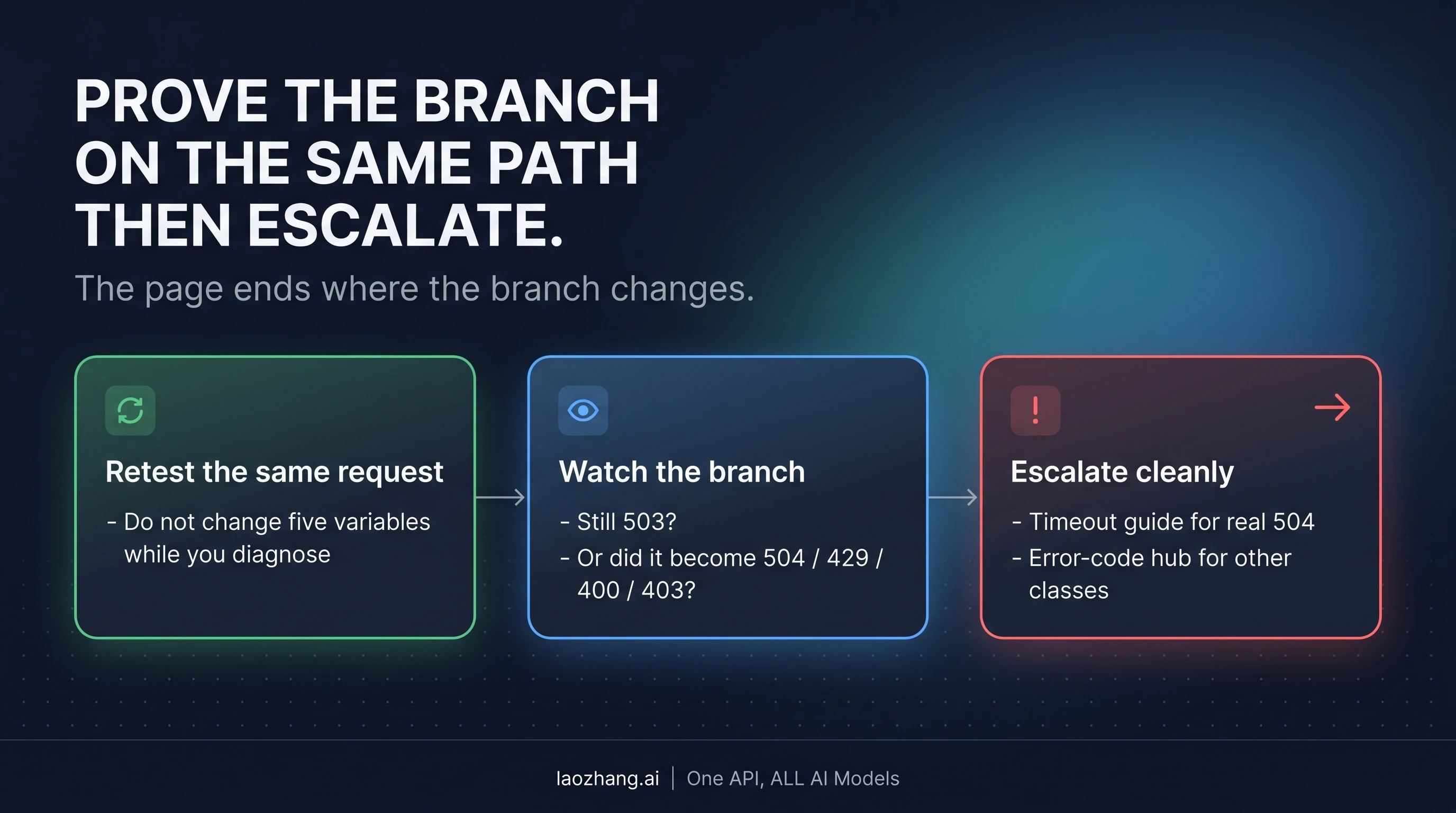

If you only have twenty seconds, do this: read the HTTP code, confirm the status string, retry once on the same request path, and watch whether the branch stays 503 or changes. That single retest tells you more than a long theory section.

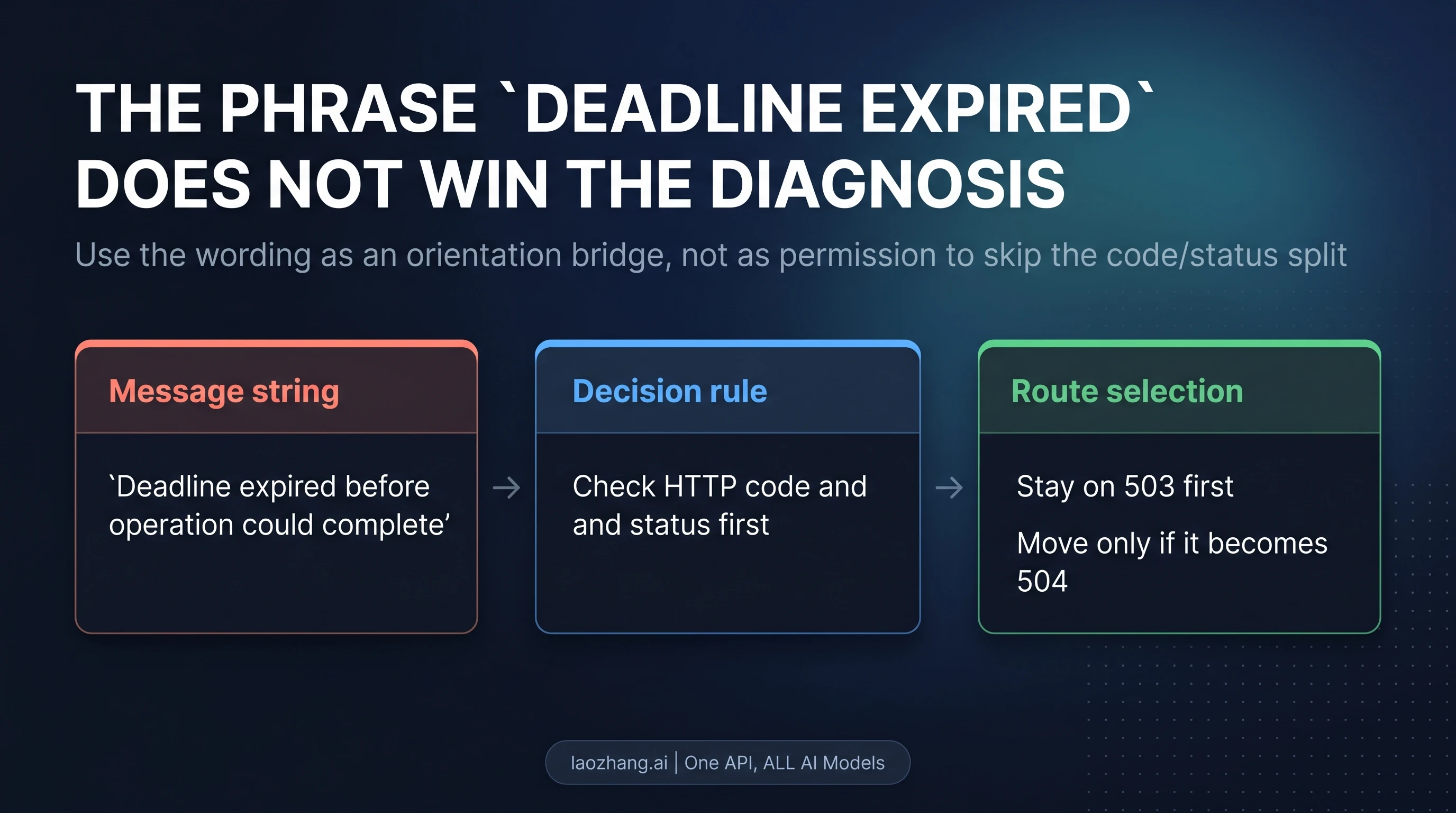

Why a 503 Can Still Say Deadline expired

The confusing part of this symptom is that the message text sounds like a timeout diagnosis. That is why so many developers search the literal phrase instead of the response class. But the exact forum example matters here: the Nano Banana Pro image endpoint has returned 503 UNAVAILABLE together with the message Deadline expired before operation could complete. That means the wording exists in the wild without turning the response into a true 504.

The practical consequence is straightforward. Use the literal phrase as an orientation bridge, not as the final diagnosis. It tells you that you have likely found the same family of failure other users have seen. It does not tell you to skip the code/status check.

This distinction is important because the first moves are different. A true 503 branch is still about temporary service availability. A true 504 branch is about time budget, request load, or client-side timeout handling. If you jump from wording straight to timeout tuning, you may spend ten minutes changing the wrong variable while the capacity event would have cleared with a bounded retry.

That does not mean the wording is useless. It is useful precisely once: at the top of the page, so the reader knows they landed in the right article. After that, the page should stop repeating it and switch back to publish-layer language about 503 UNAVAILABLE, 504 DEADLINE_EXCEEDED, and same-path verification.

If It Is a Real 503 Capacity Failure

When the response is still 503 UNAVAILABLE, do the smallest thing that can confirm recovery.

Start with bounded backoff on the same request path. In practice that means keeping the same model, the same API route, and roughly the same request shape while spacing out retries. Do not immediately rewrite the prompt, swap SDKs, resize inputs, or rebuild auth unless another error class appears. The first question is whether the temporary capacity failure clears on its own.

A simple retry rhythm is usually enough:

- Retry once after a short pause.

- If it is still 503, back off again with a slightly longer wait.

- Stop after a bounded number of attempts and decide whether you should wait longer, queue the job, or use a temporary fallback.

The point of "bounded" backoff is operational clarity. An unbounded retry loop can hide the moment when you should switch strategies. A bounded loop tells you whether this is a short capacity wobble or whether the request belongs in a queue or a deferred workflow.

If you are running a user-facing app, the next decision is product-specific: would your user rather wait, or would they rather get a slower or less ideal fallback? For some workloads, waiting a minute and retrying the same model is acceptable. For others, it is better to return a queued state or use a different supported image path temporarily. The page does not need to prescribe one universal fallback model to be useful; it needs to keep you from debugging the wrong class of failure first.

The mistake to avoid is treating 503 as a personal quota problem. If the branch is still 503, upgrading billing or changing client-side throttling is not your first move. If the branch becomes 429 RESOURCE_EXHAUSTED, that is the moment to shift to a rate-limit diagnosis. Until then, stay disciplined about the actual error you have.

If you do need the broader quota branch, use our Gemini API rate limits guide or the broader Nano Banana Pro error-code reference.

If It Is a Real 504 or Client Timeout

A true timeout branch starts when the response is actually 504 DEADLINE_EXCEEDED, or when your own client gives up before the request completes. That is where timeout tuning belongs.

At that point, you are no longer solving the same problem as a temporary 503 capacity event. Now the question is whether your timeout budget is too small for the request you are sending, or whether the request itself is too heavy for the current runtime envelope.

The safest first moves here are modest:

- increase the client timeout to a sane higher value,

- reduce request load where you reasonably can,

- and retest the same path without changing unrelated variables.

"Reduce request load" does not need to mean rewriting your whole system. It can mean shrinking a large payload, avoiding a pathological request shape, or testing a lighter version of the same workload to prove the timeout branch. The goal is not to permanently degrade quality. The goal is to confirm whether time budget is actually the constraint.

What you should not do is apply timeout advice back onto every exact phrase that contains the word "deadline." That is the whole reason this page exists. The timeout branch is real, but it only becomes your primary route when the response class or the client behavior proves it.

If you work across multiple surfaces and are not sure whether the failure belongs to the API itself or to a wider integration issue, the next stop after this page is the broader Nano Banana Pro troubleshooting guide.

Verify on the Same Request Path Before You Change Anything Big

The verification step is what keeps the article from turning into generic advice.

Retest on the same request path first. That means keeping the model, route, auth owner, SDK surface, and essential payload shape stable enough that the result is interpretable. If you switch from one model to another, rewrite the prompt, and increase timeout all at once, you may still get success, but you will not know which variable actually fixed the failure.

There are only three outcomes that matter after the retest:

- It stays

503 UNAVAILABLE. Keep it on the 503 capacity branch. Use bounded retry, queueing, or a deliberate fallback plan. - It becomes

504 DEADLINE_EXCEEDED, or the client times out again. Move to the timeout branch and tune there. - It turns into

429,400, or403. Leave this page. You have learned that the original diagnosis was incomplete or that a second, more specific problem has surfaced.

That route-out rule saves time because it prevents a common failure mode: people staying on a page that no longer matches their actual error. Once the branch changes, the page should hand you off cleanly instead of pretending every image-generation failure is still a 503 story.

If the error changes class, use the broader Nano Banana Pro error-code guide. If the issue turns out to be more about current access, surface confusion, or setup expectations than about the exact 503 branch, our Nano Banana Pro how-to-use guide is the better next stop.

FAQ

Does raising timeout fix every Nano Banana Pro 503?

No. It is the right first move only for a real 504 DEADLINE_EXCEEDED or a confirmed client timeout. If the response is still 503 UNAVAILABLE, you are more likely dealing with temporary capacity loss first.

Why would a 503 say Deadline expired before operation could complete?

Because the message string is not the entire diagnosis. The exact wording has appeared together with 503 UNAVAILABLE on the image endpoint. That is why the page uses the message as a recognition bridge, then returns to the code/status split.

Is this the same as hitting quota or rate limits?

Not by default. Quota and rate-limit issues belong to 429 RESOURCE_EXHAUSTED, not 503 UNAVAILABLE. If your error changes to 429, leave this page and move to the rate-limit branch.

Should I change prompts, switch models, and increase timeout all at once?

No. First prove the branch on the same request path. Changing too many variables at once destroys the diagnostic signal you need.

When should I stop using this page?

As soon as the branch becomes a true 504, a client timeout, or a different code such as 429, 400, or 403. The value of an exact error page is that it stays narrow.

The One Rule to Keep

When Nano Banana Pro returns 503 UNAVAILABLE, trust the response code and status before you trust the message string. Even the exact Deadline expired before operation could complete variant belongs on the 503 branch first unless the response is actually 504 or your client timed out first.

That is the rule that keeps you from wasting time on the wrong fix. Everything else in this article follows from it.