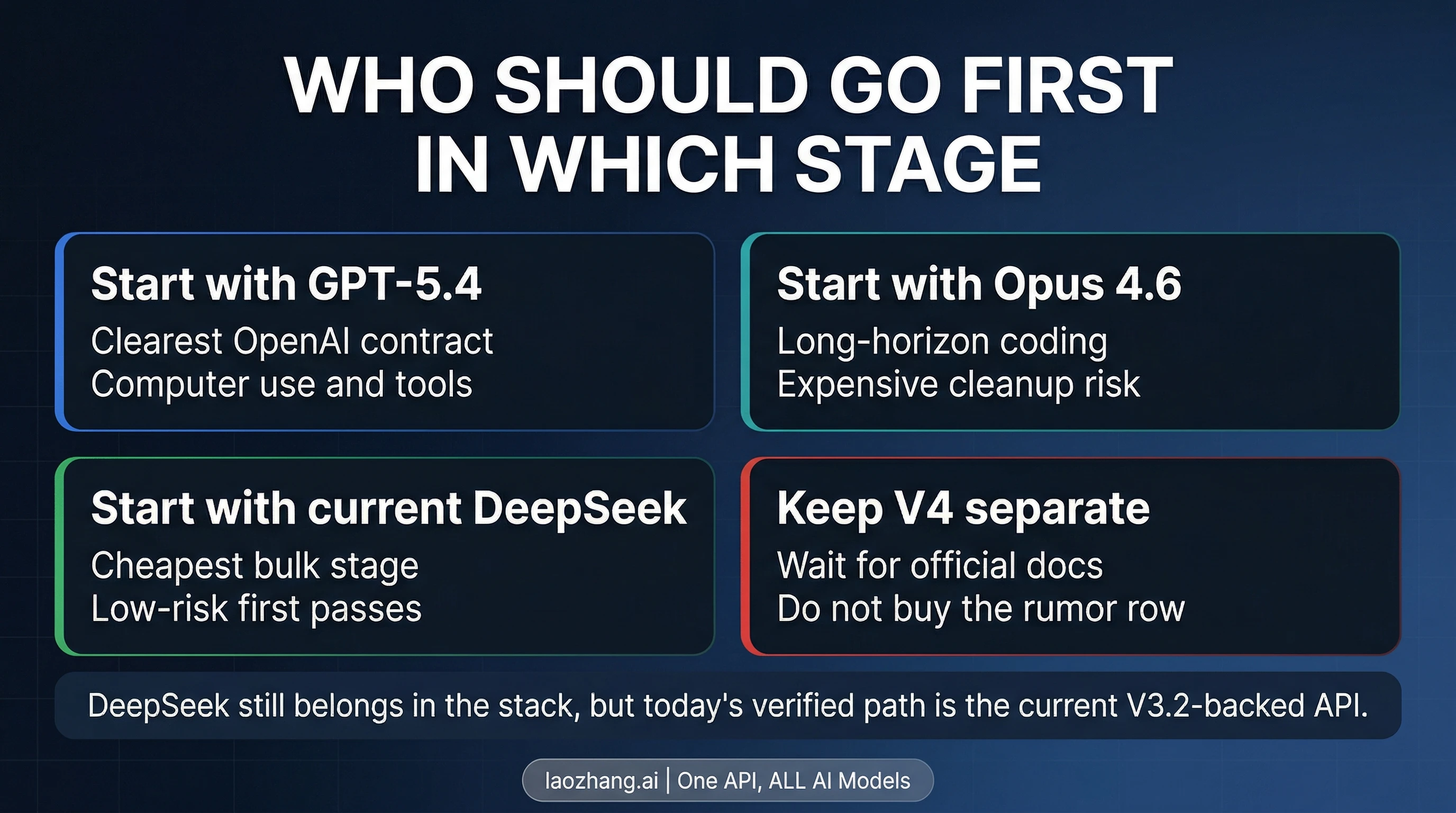

If you need a production decision today, do not start by pretending DeepSeek V4, Claude Opus 4.6, and GPT-5.4 are three equally documented public contracts. Start with GPT-5.4 when you want the clearest current OpenAI route, the strongest current OpenAI story on computer use and tool use, and a model contract that is explicitly documented across API and Codex surfaces. Start with Claude Opus 4.6 when the expensive part of the job is long-horizon coding, a very large working set, or a weak first pass that creates costly human cleanup. Use current DeepSeek when your first constraint is cost, but route it through the public DeepSeek-V3.2 contract that is actually documented today rather than through an assumed DeepSeek V4 pricing row.

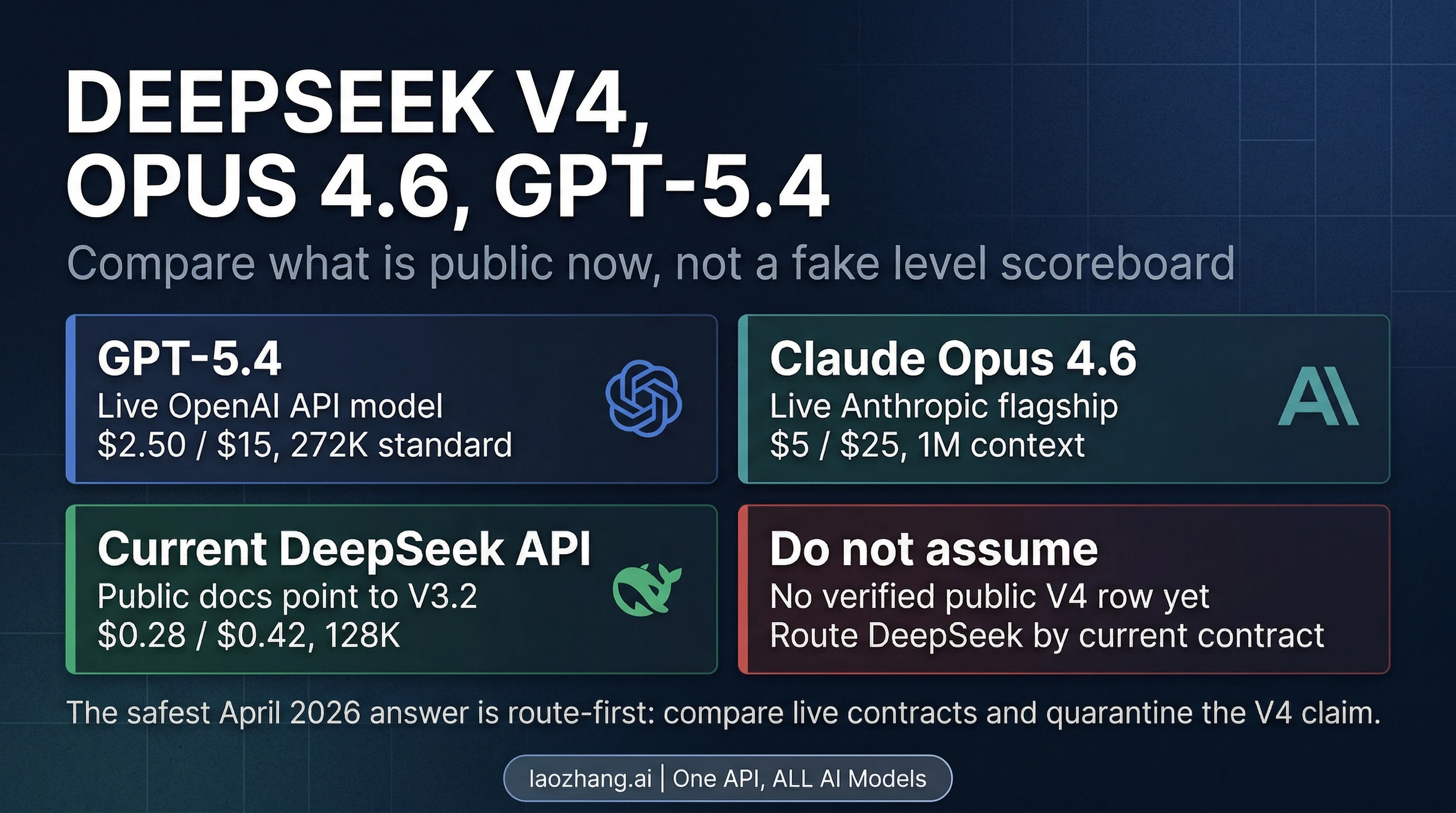

That distinction matters because this is not a normal three-way benchmark page. As of April 4, 2026, OpenAI and Anthropic both publish current model pages and pricing for GPT-5.4 and Claude Opus 4.6. DeepSeek's current public API docs, by contrast, still map deepseek-chat and deepseek-reasoner to DeepSeek-V3.2. That does not mean DeepSeek is irrelevant. It means the honest decision today is to compare the two live frontier contracts directly, then decide where the current public DeepSeek contract belongs in your stack.

| If your bottleneck looks like this... | Route first | Why |

|---|---|---|

| You want the clearest current OpenAI contract, plus documented computer-use and tool-use upside | GPT-5.4 | OpenAI currently publishes pricing, benchmark rows, and the standard-versus-Codex context distinction clearly |

| A bad first pass creates expensive human cleanup on long coding or agent tasks | Claude Opus 4.6 | Anthropic's current 1M-context, 128k-output flagship is easier to justify when failure cost is the real bill |

| You need the cheapest current public API stage for low-risk bulk work | Current DeepSeek API | DeepSeek's public docs point to a V3.2-backed contract with a much lower token price than GPT-5.4 or Opus 4.6 |

Official verification note: OpenAI and Anthropic docs were checked on April 4, 2026. Current DeepSeek API docs checked on the same date still point to DeepSeek-V3.2 for the public deepseek-chat and deepseek-reasoner rows. A public DeepSeek V4 pricing row or current public V4 API model page was not verified in those docs on that date.

First, define the compare object correctly

The most useful correction is not philosophical. It is operational. You can compare GPT-5.4 and Claude Opus 4.6 as current public contracts because both vendors publish current model identity, pricing, and capability surfaces. With DeepSeek, the public-contract story is narrower. Current public API docs show DeepSeek-V3.2 behind the public chat and reasoner rows, with 128K context, tool use in thinking mode, and current pricing at $0.28 per million input tokens and $0.42 per million output tokens. That is a real, usable contract. It is just not the same thing as a verified public DeepSeek V4 row.

This is why a normal "who wins more categories" article is too crude here. A clean comparison assumes the three rows are equally public, equally documented, and equally current. That assumption does not hold. On the OpenAI side, GPT-5.4 is public in the API today as gpt-5.4, with gpt-5.4-pro available for teams that need the premium tier. On the Anthropic side, Claude Opus 4.6 is public as claude-opus-4-6, with current Claude 4.6 docs explicitly describing its 1M context window and 128k max output. On the DeepSeek side, the verified public path today is the current V3.2-backed API. That does not kill the comparison. It changes what an honest comparison is allowed to say.

The practical implication is simple: if someone tells you "DeepSeek V4 is 20x cheaper than Claude Opus 4.6" or "DeepSeek V4 is the true GPT-5.4 competitor," the first response should be, "Which public contract are we actually talking about?" That question is not pedantry. It is the difference between a safe procurement or routing decision and a comparison built on an unstable row.

| Path | Current public contract verified on April 4, 2026 | Price | Context | What the vendor clearly documents today | What you should not assume |

|---|---|---|---|---|---|

| GPT-5.4 | OpenAI API model gpt-5.4 | $2.50 input / $15 output | 272K standard, experimental 1M support in Codex | pricing, API availability, benchmark rows, Codex support | Do not flatten the standard 272K contract into a generic "1M model" everywhere |

| Claude Opus 4.6 | Anthropic API model claude-opus-4-6 | $5 input / $25 output | 1M context, 128k max output | flagship positioning for agents and coding, context, output, launch status | Do not keep quoting older 192K/200K context numbers from stale third-party pages |

| Current DeepSeek API | public docs point to DeepSeek-V3.2 via deepseek-chat and deepseek-reasoner | $0.28 input / $0.42 output | 128K | low current public price, V3.2-backed public API, tool use in thinking mode | Do not treat an unverified DeepSeek V4 pricing row as already public fact |

That table is the real first screen. Once you see it, the rest of the article stops being a fake three-way beauty contest and becomes a routing problem.

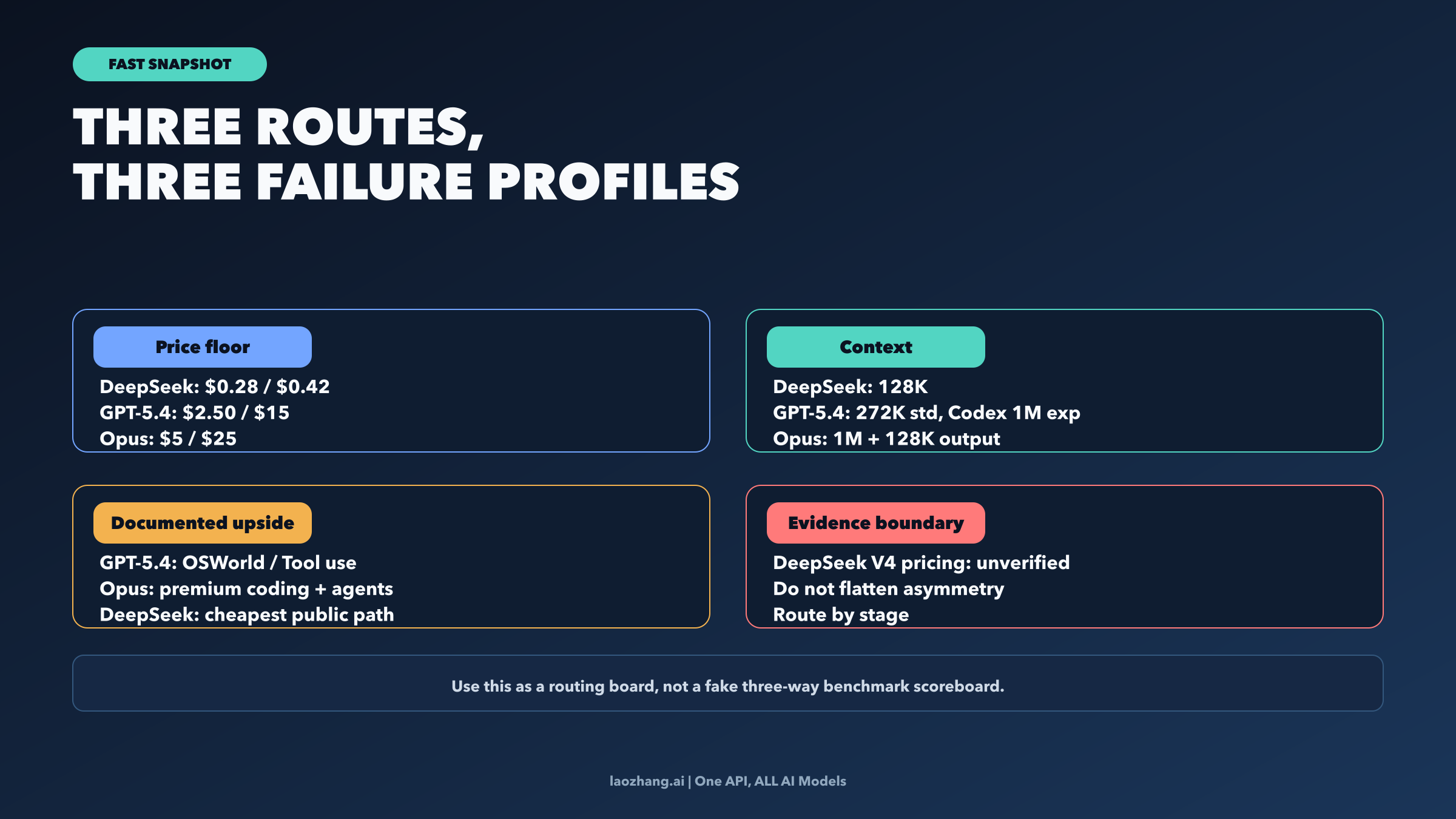

Fast snapshot: the three live routes are optimizing different failure profiles

GPT-5.4 is easiest to justify when your real need is a clean current OpenAI contract with current official platform backing. OpenAI's GPT-5.4 launch page is unusually explicit for a frontier-model release: it publishes current API pricing, states that GPT-5.4 is live now as gpt-5.4, distinguishes the standard 272K context window from the experimental 1M context path inside Codex, and publishes current public benchmark rows such as 75.1 on Terminal-Bench 2.0, 75.0 on OSWorld-Verified, and 82.7 on BrowseComp. That does not mean GPT-5.4 automatically wins every coding decision. It means the current OpenAI side is a clearly documented production contract rather than a vague promise.

Claude Opus 4.6 is easiest to justify when the expensive part of the job is not token price but failure cost. Anthropic's current Claude 4.6 docs do not need a long benchmark theater act to make the premium case. The core contract is already strong: 1M context, 128k max output, and a current positioning as the most capable Claude model for agents and coding. If you are working on repository-scale changes, longer chains of agent execution, or output-heavy tasks where a mediocre first pass creates real reviewer and repair cost, those contract details matter more than a cheaper price row.

Current DeepSeek belongs in the picture for a different reason. Its public contract is not strongest on public matched frontier benchmarks. It is strongest on price floor and on keeping a current public API route alive at a dramatically lower token cost. The current public DeepSeek story is therefore not "here is a fully verified V4 row that you can slot beside GPT-5.4 and Opus." The current story is "here is the cheapest public DeepSeek contract we can verify today, and here is the stage of work where that matters."

That is the split this article will keep repeating: GPT-5.4 for the clearest current OpenAI contract, Claude Opus 4.6 for premium long-horizon coding and agent work, current DeepSeek for the cheapest public stage of the stack.

When GPT-5.4 should route first

Choose GPT-5.4 first when the job rewards OpenAI's current documented middle route more than it rewards the cheapest tokens or the most aggressive premium coding bet. The obvious cases are teams that want a current public OpenAI contract, strong documented computer-use upside, and continuity with the rest of OpenAI's current product and API stack. OpenAI's current launch material gives GPT-5.4 a clearer official evidence surface than most comparison pages admit: the model is live in the API, pricing is explicit, and the difference between the standard 272K context contract and the experimental 1M support inside Codex is stated directly instead of being hidden in rumor or screenshots.

That matters because context claims are where many comparison pages get sloppy. If you need a clean contract for architecture planning, you should not write "GPT-5.4 has a 1M context window" and stop there. The safer statement is that GPT-5.4 keeps a 272K standard context window, while OpenAI also documents experimental 1M support inside Codex. Those are related facts, but not the same operational promise. The moment you separate them, GPT-5.4's role becomes clearer: it is a strong, current, officially described route for teams that want current OpenAI tooling and a documented platform path instead of a fuzzy model rumor.

The benchmark picture reinforces that role without justifying a fake universal coding-winner claim. GPT-5.4's current launch page gives it a strong public case on computer use, tool use, and terminal work. If your evaluation target is something like "Can the model operate in a real environment, stay useful with tools, and handle agent-like execution without requiring me to read product tea leaves?", GPT-5.4 is the safest first test of the three paths discussed here. That is especially true if your organization already expects to work inside OpenAI's current surfaces or needs a cleaner bridge from API evaluation into Codex-oriented workflows. If that is the next question you need to answer, the companion read is our OpenAI Codex March 2026 guide.

The catch is equally important. GPT-5.4 is not the cheapest route, and the current official materials do not entitle you to write that it simply crushes Claude Opus 4.6 for every coding task. This is where lazy comparison pages drift. GPT-5.4's case is strongest when the value of a clear official OpenAI contract and documented tool/computer-use capability outweighs the price premium over current DeepSeek and the long-context premium case for Opus.

When Claude Opus 4.6 earns the premium

Choose Claude Opus 4.6 first when the problem is not "Which model is cheapest to explore?" but "Which bad first pass will cost me the most later?" That is the most honest way to frame Opus 4.6. Anthropic's current case does not need a giant scoreboard to be useful. The live model contract already says enough: 1M context, 128k max output, and the current positioning of Opus 4.6 as Anthropic's top model for agents and coding. That is not a subtle signal. It is a model designed for work where a longer horizon and a larger working set are central to the job, not incidental.

This is also why list-price arguments can be misleading. Claude Opus 4.6 is clearly more expensive than GPT-5.4 on published token price, and far more expensive than the current DeepSeek API. But the real bill is not token price alone. If your workload involves long repository context, multi-step execution, or outputs large enough that the model's first attempt has to survive review with minimal repair, the expensive thing is often not the token invoice. The expensive thing is a weak first pass that sends a human into an hour of cleanup. That is the workload where Opus 4.6 earns the premium.

The 1M context and 128k output numbers matter here because they change how the work is structured. They reduce the need to compress the problem too early, to split context too aggressively, or to ask the model to operate from an artificially narrow slice of the working set. For readers who need the more detailed Anthropic-side cost picture after deciding this is their premium route, the separate Claude Opus 4.6 pricing guide is the better next read.

The catch is that Opus 4.6 is not the right default answer when price sensitivity is the real bottleneck or when a clean official OpenAI contract matters more than premium long-context execution. That is why the honest answer here is still route-first, not belt-award language.

Why current DeepSeek still belongs in the stack

It would be a mistake to overcorrect and throw DeepSeek out of the conversation entirely. The right correction is narrower than that. The right correction is that current public DeepSeek belongs in the stack through the contract that is actually documented today. And today, that contract is the current V3.2-backed public API, not a fully verified public DeepSeek V4 row.

That still leaves DeepSeek with a very real role. At $0.28 input and $0.42 output per million tokens on the public pricing page, the current DeepSeek API is much cheaper than GPT-5.4 or Claude Opus 4.6. The public docs also keep the story practical rather than mystical: the current public rows map to DeepSeek-V3.2, expose 128K context, and support tool use in thinking mode. Those are enough facts to justify current DeepSeek in low-risk, cost-sensitive stages such as first-pass summarization, bulk classification, initial drafting, or other high-volume work where the marginal cost of better frontier performance is hard to justify.

What those facts do not justify is writing as if DeepSeek V4 is already a publicly documented frontier peer with matched price rows, matched benchmark evidence, and the same verification status as GPT-5.4 and Opus 4.6. That is the line too many comparison pages cross. If your real interest in DeepSeek is "Can I keep my low-cost stage alive right now?", the answer is yes. If your real interest is "Can I quote a fully verified public V4 row beside GPT-5.4 and Opus 4.6 today?", the safe answer on April 4, 2026 is still no.

That distinction is not anti-DeepSeek. It is pro-decision quality. A model can be genuinely useful today without needing you to fictionalize a stronger public contract than the vendor currently documents.

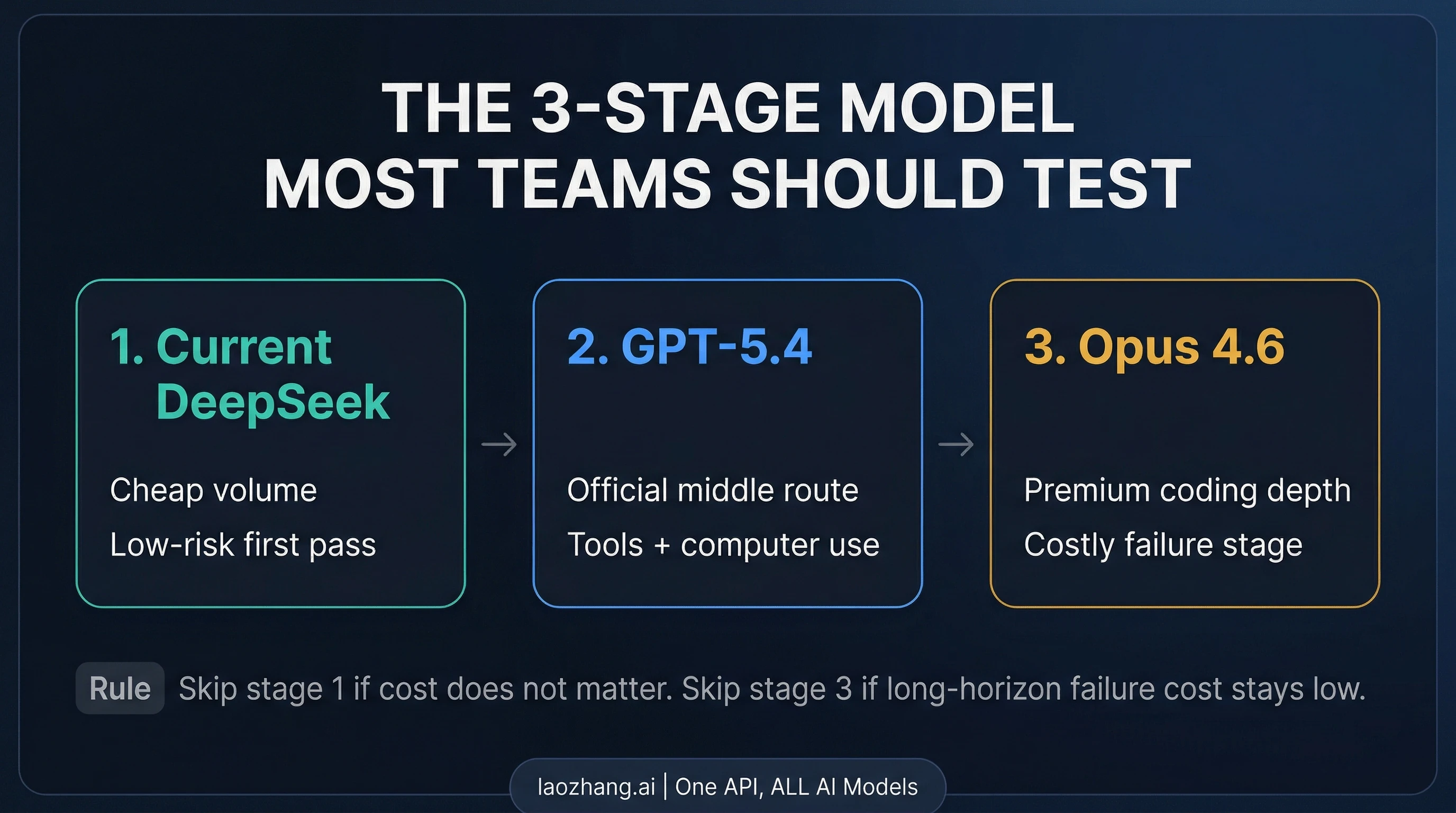

The three-stage routing stack most teams should actually test

Once you stop forcing a fake universal winner, the cleaner system design is obvious.

Stage 1: current DeepSeek for the cheapest public first pass. Use the current DeepSeek API when the workload is price-sensitive, high-volume, or low-risk enough that the main goal is to move work cheaply rather than to buy the strongest documented frontier execution. This stage is about token discipline and acceptable quality, not about pretending DeepSeek has already published a public V4 frontier contract.

Stage 2: GPT-5.4 for the clearest current official middle route. Promote work to GPT-5.4 when the task needs stronger documented tool use, computer-use upside, or a cleaner current OpenAI contract. This is the best middle route for teams that want the current OpenAI surface area to be part of the decision rather than merely an abstract benchmark row. If your next step after this article is access planning rather than model theory, our GPT-5.4 access guide is the better route map.

Stage 3: Claude Opus 4.6 for the expensive-failure tier. Promote work again when the cost of a weak first pass becomes greater than the token bill. That is the moment for Opus 4.6: repository-scale coding, longer chains of execution, or outputs where the reviewer does not want to rescue a half-right draft. This is where the premium is easiest to defend.

This is not over-engineering for its own sake. It is what happens when the public evidence is asymmetric and the prices are not close enough to ignore. Some teams will collapse the stack to two stages. If cost barely matters, they may skip current DeepSeek and compare GPT-5.4 against Opus 4.6 directly. If the work never becomes long-horizon or high-stakes enough to justify the premium tier, they may skip Opus 4.6 and stay on a DeepSeek-plus-GPT-5.4 setup. But the important change is conceptual: stop asking for one winner across three rows that do not carry the same kind of public proof.

If your real question is toolchain, not model contract

Many readers who type this comparison are partly asking a different question: not "Which model contract is strongest today?" but "Which workflow or toolchain should I adopt?" That is a separate decision. If you actually need the current OpenAI product picture, go next to OpenAI Codex in March 2026. If your real choice is between live steering and async delegation, the sharper next read is Claude Code vs Codex in 2026. This page stays on the model-contract layer on purpose so the decision does not collapse into a broader tool comparison.

FAQ

Is DeepSeek V4 officially public today?

As of April 4, 2026, a public DeepSeek V4 API model page or public V4 pricing row was not verified in current DeepSeek API docs. The public rows documented there still point to DeepSeek-V3.2.

Which model should developers test first?

Test GPT-5.4 first if you want the clearest current OpenAI contract and documented computer-use/tool-use upside. Test Claude Opus 4.6 first if long-horizon coding and costly human cleanup dominate. Test current DeepSeek first only when the job is price-sensitive enough that the current V3.2-backed public API is the point.

Is GPT-5.4 really a 1M-context model?

The careful answer is that OpenAI's current GPT-5.4 launch material describes 272K standard context and also documents experimental 1M support inside Codex. Those are related but not identical operating conditions, so they should not be flattened into one generic context claim.

Is current DeepSeek still worth using if V4 is unverified?

Yes. The current public DeepSeek API still has a strong role when your bottleneck is token cost and the stage of work is low-risk enough to justify the cheaper path. The correction is not "ignore DeepSeek." The correction is "use the contract that is actually public today."

Should I compare DeepSeek directly with GPT-5.4 and Opus 4.6 at all?

Yes, but only after you separate current public DeepSeek from an assumed public DeepSeek V4 row. Once you do that, the comparison becomes useful again: not as a fake level scoreboard, but as a staged routing decision across cost, evidence quality, and failure cost.

Bottom line

The shortest honest answer is this: compare GPT-5.4 and Claude Opus 4.6 directly as today's clean frontier contracts, and keep DeepSeek in the decision through the current V3.2-backed public API rather than a not-yet-verified V4 row. Start with GPT-5.4 when the clean current OpenAI contract is the advantage. Start with Claude Opus 4.6 when long-horizon coding and expensive cleanup dominate. Start with current DeepSeek when the stage is primarily about cost floor. Once you frame the question that way, the three-way comparison stops being messy and becomes operational.