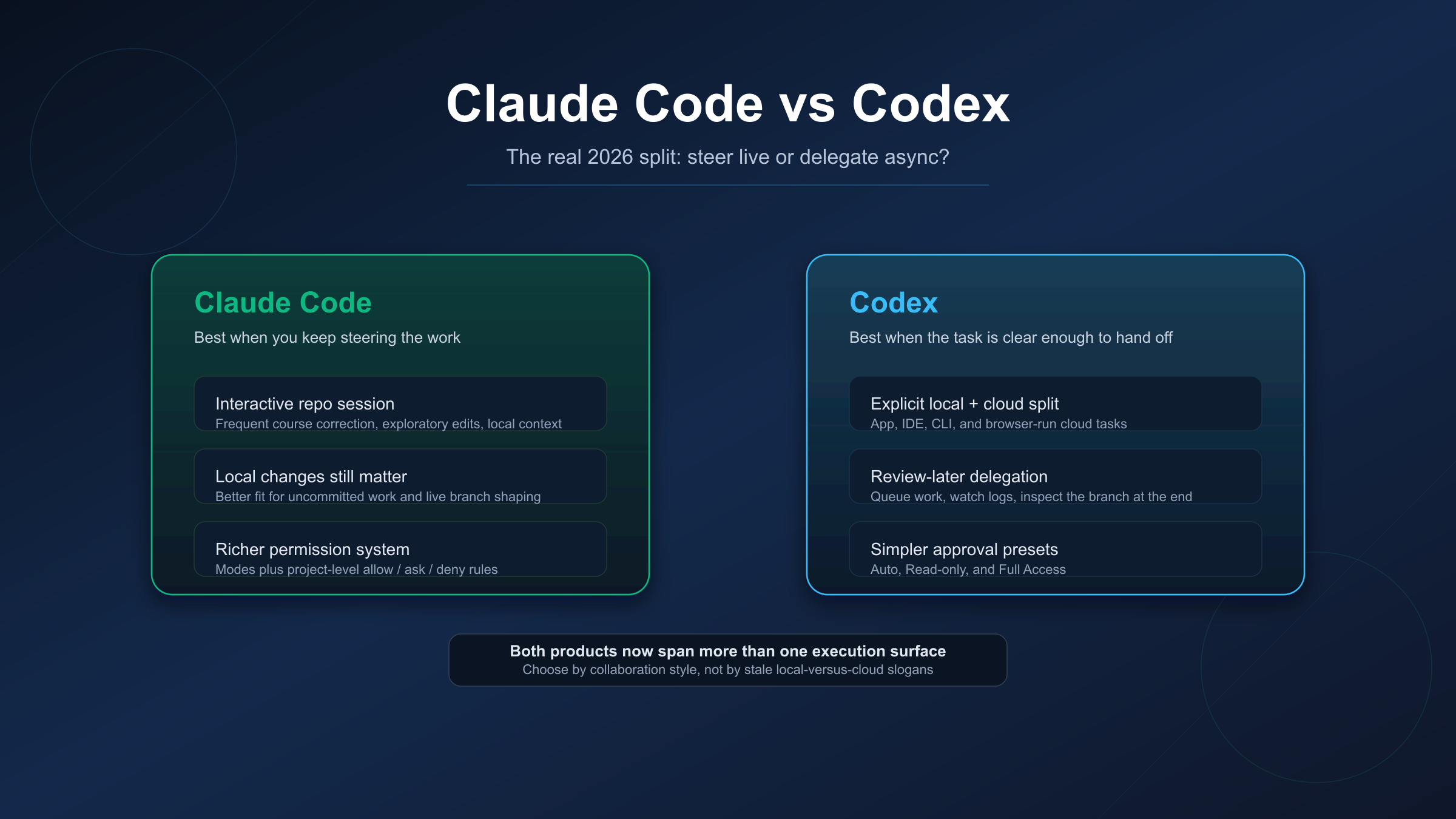

Claude Code and Codex are easier to compare badly than to compare well. The stale version of this comparison says Claude Code is the local terminal tool and Codex is the cloud one. That was incomplete when it was written, and in 2026 it is plainly outdated. Anthropic now positions Claude Code across terminal, IDE, desktop, Slack, and web surfaces, while OpenAI positions Codex across app, IDE, CLI, and cloud. The better question is not "which one is local?" It is how you want to work with the agent once the task starts.

My practical recommendation is simple. Choose Claude Code when you expect to keep steering the work mid-flight, especially inside a repo with local uncommitted changes or team-managed permission rules. Choose Codex when the task is clear enough to hand off, let run, and review later as a branch or diff. That is the split that survives the current product docs without leaning on benchmark folklore.

TL;DR (verified on 2026-03-28)

| If your next task looks like this... | Start with Claude Code | Start with Codex |
|---|---|---|
| You expect frequent course correction while the agent works | Yes | No |
| You have local uncommitted changes and want to stay inside that repo state | Yes | No |
| You want project-level permission rules checked into the repo | Yes | No |
| You want to queue well-scoped background work and review it later | No | Yes |
| You want the cleanest browser-run task flow tied to GitHub environments | No | Yes |
| You want the simplest approval presets for local work | No | Yes |

Evidence note: This comparison uses current official product, security, approval, and pricing pages from OpenAI and Anthropic checked on March 28, 2026. Plan inclusion, usage limits, and preview-surface availability change quickly, so treat the access details below as a dated snapshot rather than a timeless contract.

The Old Framing Is Outdated

If you have read older comparison posts, you have probably inherited a bad mental model: Claude Code equals local terminal control, Codex equals remote cloud execution. That framing breaks as soon as you read the current product docs side by side.

Anthropic's Claude Code product page now describes working from your terminal, IDE, desktop app, Slack, and the web, while still labeling some web and iOS surfaces as research preview. Anthropic's own help center also distinguishes between Claude Code on the web and Claude Code in your terminal or IDE, which only makes sense because both are now first-class surfaces in the product story. OpenAI makes the same multi-surface argument from the other side: Codex is documented across app, IDE, CLI, and cloud. So if your comparison starts by assigning one product to local work and the other to remote work, you are already compressing away the part that matters.

What still differs is how each product expects collaboration to happen. Claude Code's current docs lean toward an interactive, steerable repo session. Codex's docs lean toward an explicit split between local work and cloud tasks, with browser-run environments and a clearer "hand it off and review later" path. That distinction is much more useful than a generic local/cloud row in a feature table, because it maps to the decision a real developer is trying to make on a real task.

Steer Live vs Delegate Async

This is the most useful split I found after rechecking the official docs: Claude Code is stronger when you want to steer live; Codex is stronger when you want to delegate async.

Anthropic says the quiet part out loud in its help documentation. Claude Code on the web is positioned for well-defined tasks, background bug backlogs, queued work, and repositories you do not have locally. The same page says the terminal and IDE are better when you expect frequent course correction, exploratory work, or local development with uncommitted changes. That is unusually concrete product guidance, and it is exactly the kind of sentence most comparison posts ignore. It tells you that Claude Code itself already distinguishes between "clear enough to delegate" work and "I need to stay in the loop" work.

Codex is also strong locally, but OpenAI's cloud flow is more explicit and more central to the product story. The quickstart routes users through browser-run tasks, GitHub-connected environments, real-time logs, and reviewable changes that can become a pull request. That makes Codex especially compelling when the task is well-scoped enough to kick off, monitor lightly, and inspect at the end. If I had a backlog of discrete fixes, cleanup tasks, or implementation tickets that did not depend on local unstaged work, Codex would be the cleaner default.

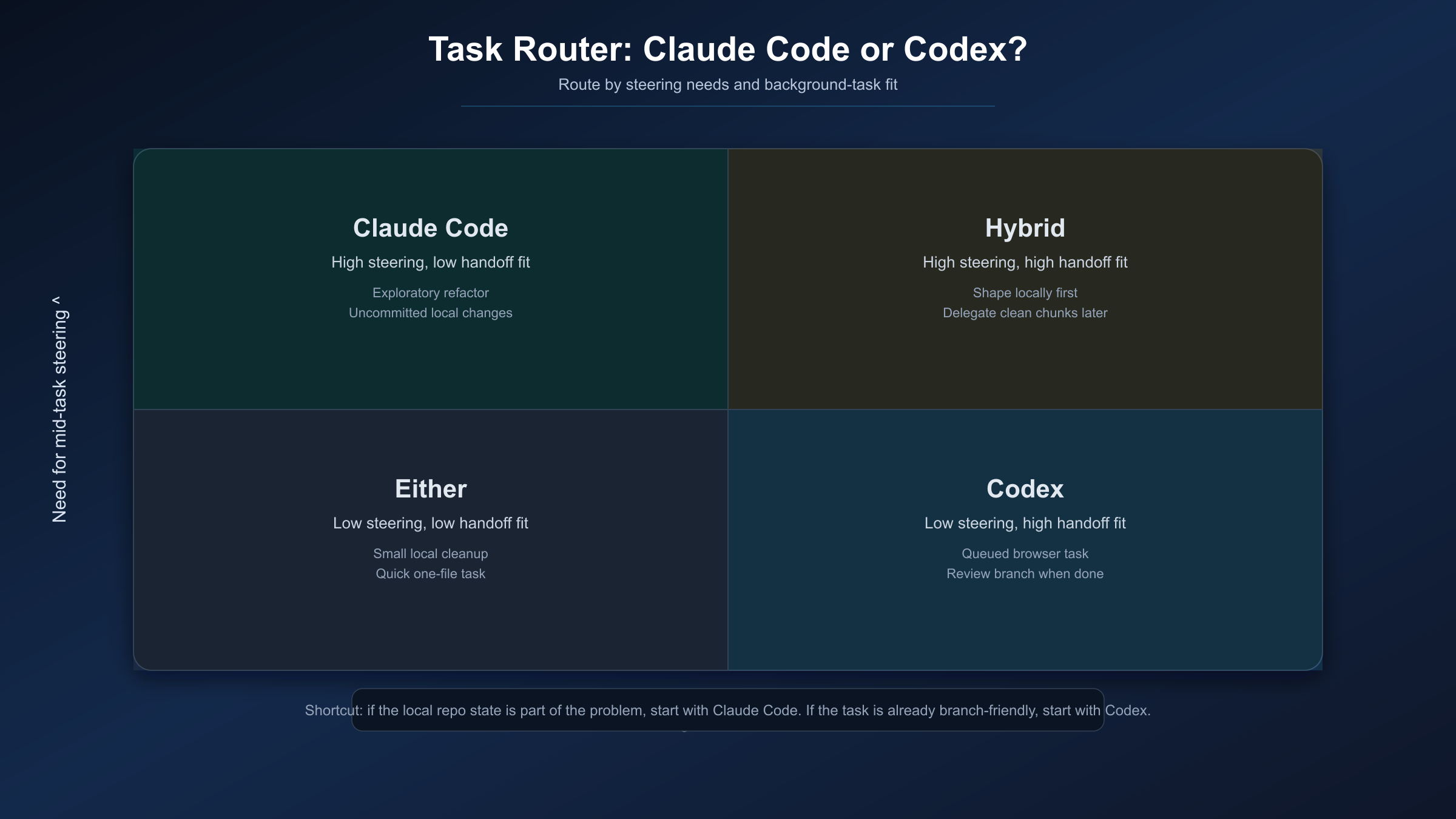

That leads to a practical rule of thumb:

- If you expect to say "no, not like that, try a different path" several times while the task is underway, start with Claude Code.

- If you expect to define the task once, let it run, and review the branch afterward, start with Codex.

This is an inference from the current official workflows, not a benchmark claim. But it is a good one, because it lines up with how the vendors themselves describe their best-fit surfaces.

Approvals, Permissions, and Trust Boundary

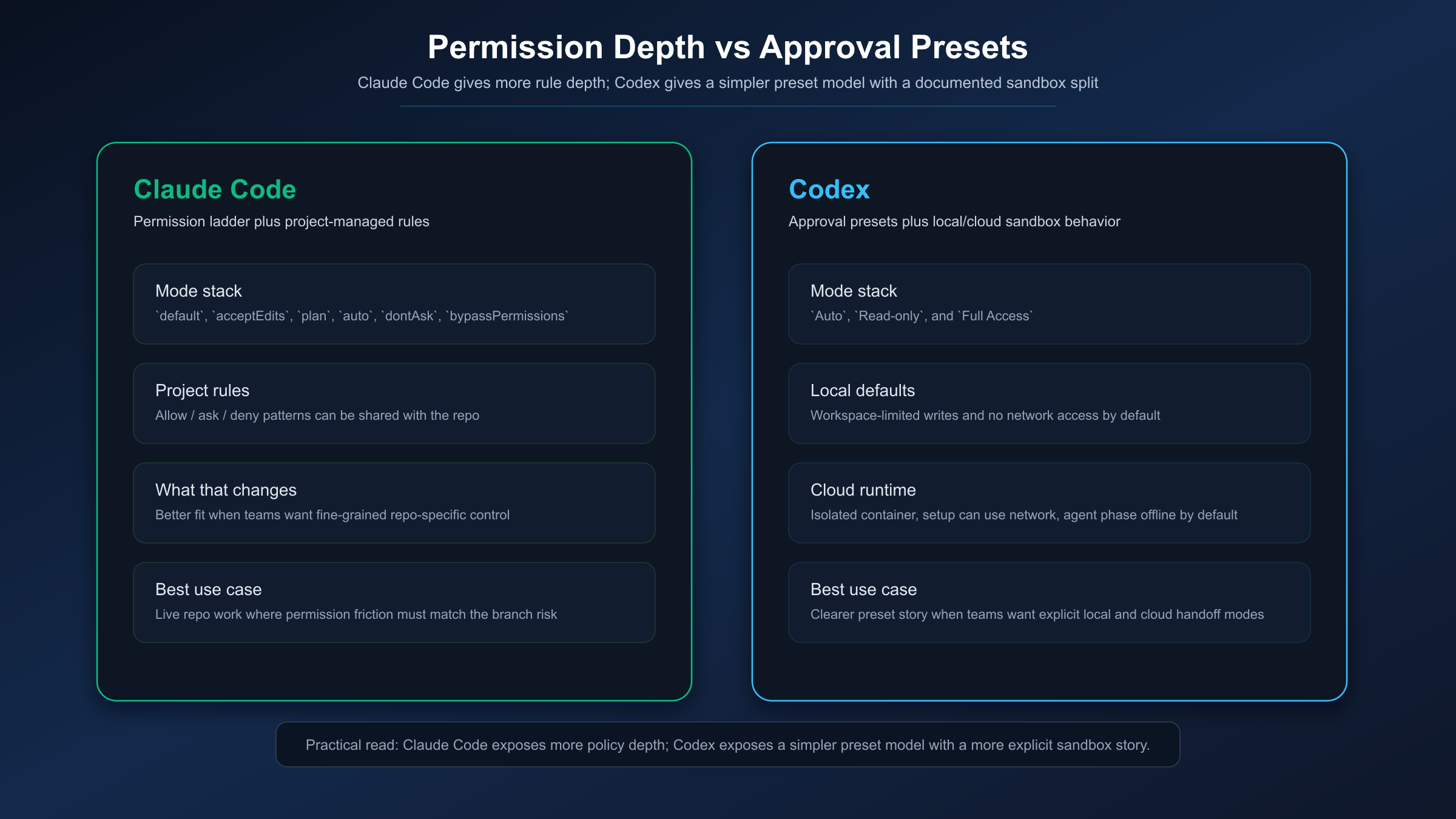

The next meaningful difference is not raw autonomy. It is how much structure each product gives you around autonomy.

Claude Code currently documents six permission modes: default, acceptEdits, plan, auto, dontAsk, and bypassPermissions. That is already a richer ladder than most comparison posts mention, but the bigger differentiator is that Claude Code also documents allow / ask / deny rules that can live in settings and be shared with a project. In other words, Claude Code is not just giving you session-level modes. It is giving you a more explicit permission system that teams can shape around their own repo habits, protected paths, and acceptable commands.
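To make the allow / ask / deny idea concrete, here is a minimal Python sketch of how a three-tier rule set could be evaluated. It is a toy model, not Claude Code's matcher or settings format: the `RULES` dictionary, the `tool:argument` action strings, the glob patterns, and the `decide` helper are all hypothetical. It also assumes that the most restrictive matching rule wins and that unmatched actions fall back to asking.

```python
from fnmatch import fnmatchcase

# Hypothetical rule set. The "tool:argument" strings and glob patterns are
# illustrative only; they are NOT Claude Code's real settings syntax.
RULES = {
    "deny": ["read:.env", "bash:rm *"],
    "ask": ["bash:git push*"],
    "allow": ["bash:npm run test*", "read:src/*"],
}


def decide(action: str, rules: dict[str, list[str]] = RULES) -> str:
    """Return 'deny', 'ask', or 'allow' for an action string.

    Assumption: tiers are checked from most to least restrictive, so a
    deny match beats an ask match, which beats an allow match. Anything
    that matches no rule falls back to 'ask'.
    """
    for verdict in ("deny", "ask", "allow"):
        patterns = rules.get(verdict, [])
        if any(fnmatchcase(action, pattern) for pattern in patterns):
            return verdict
    return "ask"


if __name__ == "__main__":
    for action in ("read:.env", "bash:git push origin main", "bash:npm run test"):
        print(action, "->", decide(action))
```

The point of the sketch is the shape of the contract: a rule file like this can be reviewed and versioned alongside the code it protects, which is what separates a project-level permission system from a per-session mode switch.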

Codex takes a simpler documented approach. OpenAI currently describes three approval modes for Codex CLI: Auto, Read-only, and Full Access. It also documents its security model in more detail than older Codex comparisons give it credit for. In local CLI or IDE use, the defaults are no network access and writes limited to the active workspace. In cloud use, Codex runs in isolated OpenAI-managed containers, with a setup phase that can access the network and an agent phase that is offline by default unless you explicitly enable internet access for that environment.
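The network defaults described above fit in a few lines of Python. This is a sketch of the documented defaults as summarized in this article, not Codex configuration or an OpenAI API: the `network_allowed` function, the `surface` and `phase` labels, and the `internet_enabled` flag are hypothetical names chosen for illustration.

```python
def network_allowed(surface: str, phase: str = "agent",
                    internet_enabled: bool = False) -> bool:
    """Model the default network posture described in the text.

    Hypothetical helper: 'surface' is "local" (CLI or IDE) or "cloud";
    'phase' only matters for cloud runs and is "setup" or "agent".
    """
    if surface == "local":
        # Default for local CLI/IDE use: no network access.
        return False
    if surface == "cloud":
        if phase == "setup":
            # The setup phase can reach the network.
            return True
        if phase == "agent":
            # The agent phase is offline unless internet access is
            # explicitly enabled for that environment.
            return internet_enabled
    raise ValueError(f"unknown surface/phase: {surface!r}/{phase!r}")


if __name__ == "__main__":
    print(network_allowed("local"))                          # False
    print(network_allowed("cloud", "setup"))                 # True
    print(network_allowed("cloud", "agent"))                 # False
    print(network_allowed("cloud", "agent", internet_enabled=True))  # True
```

Seen this way, the Codex model has few branches: one switch per environment rather than a vocabulary of rules, which is why it is easy to explain to a team.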

So which one is "safer"? That is the wrong question. The better question is which control model matches your workflow.

If your team wants a richer permission vocabulary, project-shared rules, and more granular control over what the agent may read, edit, or execute, Claude Code currently gives a stronger contract. If your team wants a simpler preset model and likes that OpenAI separates local and cloud behavior through a documented sandbox story, Codex is easier to explain and standardize.

There is also a more human point here. Approval friction is not just a safety issue. It shapes whether a tool feels calm or annoying during real work. Claude Code gives you more ways to tune that friction. Codex gives you fewer conceptual knobs, but a cleaner preset story. Which one feels better depends on whether your team prefers configurability or simpler defaults.

Repo State Matters More Than Most Comparisons Admit

The best routing question is often not "which brand do I like?" It is "what state is my repo in right now?"

If you are inside a local repository with unstaged work, half-finished experiments, and branches that are not ready to push, Claude Code is the more natural starting point. Anthropic explicitly frames terminal and IDE work around immediate feedback, exploratory tasks, and local development with uncommitted changes. That is not a minor detail. It describes a real working condition that many developers face for most of the day. In that state, browser-first delegation is usually the wrong default because the local context is the work.

If the repository already lives in GitHub, the task is clear, and you mainly want the result to come back as a branch you can inspect, Codex has the cleaner flow. OpenAI's cloud docs are built around exactly that path: connect a repository, launch the task, watch logs if you want, then review the output or turn it into a pull request. That is better than a generic "cloud task support" checkbox because it tells you what the product expects you to do with the result.

Claude Code also has browser execution now, and that matters. But Anthropic's own guidance still nudges you toward the terminal or IDE when the work is exploratory or likely to keep changing shape. That means the differentiator is no longer "which product has async?" Both do. The differentiator is which product feels native for the kind of async or local work you have in front of you.

Plan Access Snapshot

Both products are now broad enough that access assumptions go stale quickly.

On Anthropic's current pricing page, Claude Pro explicitly includes Claude Code, and Max builds on Pro with more usage. Team pricing is now split into Standard and Premium seats, with the pricing page explicitly listing Claude Code under Premium. That means team buyers should not assume "Team" alone is the whole answer. Seat type matters.

On OpenAI's current Codex quickstart, Codex is included with ChatGPT Plus, Pro, Business, Edu, and Enterprise, and OpenAI also documents limited-time access in ChatGPT Free and Go. OpenAI's current docs also say that gpt-5.4 is now the newest model powering Codex and Codex CLI, which is another place where older comparison posts are already behind.

The important caution is this: do not flatten access into a single static quota table unless you are reading the plan docs and the model-specific pricing docs together on the same day. OpenAI now documents usage limits by model family, local messages, cloud tasks, and review types. Anthropic documents pricing and usage at the plan and seat level. If your decision is operational, inclusion is the first question and exact allowance is the second.

If you want the Anthropic side unpacked in more detail, see our Claude Code pricing guide. If you care specifically about how Anthropic's more autonomous permission mode works, see Claude Code Auto Mode.

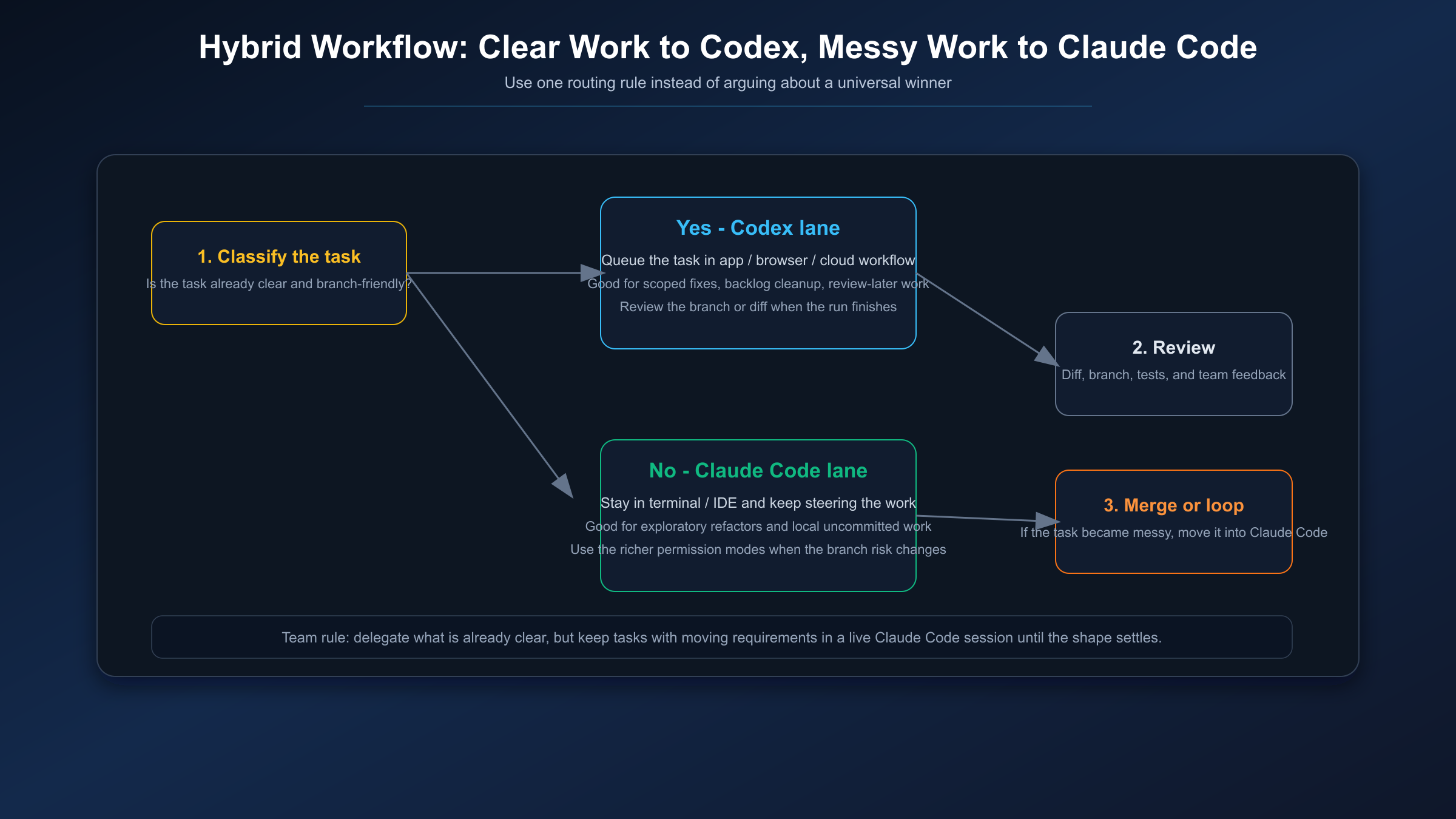

The Hybrid Playbook Most Teams Will Actually Use

For many teams, the right answer is not permanent tool loyalty. It is a simple routing policy.

Use Codex when the task is already clear, the repo is available through the cloud workflow you want to use, and you are happy to review the result after the fact. This is especially good for backlog-style async work, cleanup tickets, scoped bug fixes, or implementation chunks where the shape of success is already obvious.

Use Claude Code when the task is still moving, when the local repo state is part of the problem, or when you want stronger project-level permission controls around what the agent is allowed to do. This is where live steering matters more than background delegation.

If I had to write that policy for a team in one sentence, it would be this: delegate clear work to Codex, but keep messy work in Claude Code until the shape of the solution settles down. That is sharper than "use both," and it is the difference between a workflow and a shrug.
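That one-sentence policy can also be written down as a small routing function. The sketch below is my own encoding of the rule of thumb in this article, not anything either vendor ships: the `Task` fields, the `route` function, and the returned labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a task at the moment you route it."""
    well_scoped: bool
    needs_live_steering: bool
    depends_on_uncommitted_changes: bool
    needs_project_permission_rules: bool = False


def route(task: Task) -> str:
    """Return 'claude-code' or 'codex' following the article's policy."""
    # Anything that ties the work to a live session or the local repo
    # state keeps it in Claude Code.
    if (task.needs_live_steering
            or task.depends_on_uncommitted_changes
            or task.needs_project_permission_rules):
        return "claude-code"
    # Clear, self-contained work is delegated and reviewed afterward.
    if task.well_scoped:
        return "codex"
    # Messy work stays in Claude Code until its shape settles.
    return "claude-code"


if __name__ == "__main__":
    cleanup = Task(well_scoped=True, needs_live_steering=False,
                   depends_on_uncommitted_changes=False)
    spike = Task(well_scoped=False, needs_live_steering=True,
                 depends_on_uncommitted_changes=True)
    print(route(cleanup))  # codex
    print(route(spike))    # claude-code
```

The ordering is deliberate: the checks that pull work toward a live session run first, so a well-scoped ticket that still depends on uncommitted local changes stays local.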

FAQ

Is Claude Code still a local-only tool?

No. Anthropic now documents Claude Code across terminal, IDE, desktop, Slack, and web surfaces. The better distinction is not local-only versus remote-only. It is which surface Anthropic recommends for the task shape you have.

Is Codex only for cloud tasks?

No. OpenAI documents Codex across app, IDE, CLI, and cloud. Codex is strong locally too. The reason it wins more clearly on async delegation is that OpenAI's product flow and security docs make the browser-run task path especially explicit.

Which one is better when I have local uncommitted changes?

Claude Code. Anthropic's own workflow guidance makes terminal and IDE work the better fit for local development with uncommitted changes and tasks that need frequent course correction.

Which one should I choose first if I can only pick one?

Pick Claude Code if your work is mostly live, iterative, and repo-bound. Pick Codex if your work is mostly clear enough to hand off and review later. If your team truly does both every week, a hybrid policy is more honest than a universal winner claim.

Which model powers Codex now?

OpenAI's current docs say gpt-5.4 is the newest model powering Codex and Codex CLI. If you see older articles treating GPT-5.1-Codex as the current default, they are out of date.

The takeaway: the most useful Claude Code vs Codex decision in 2026 is no longer local versus cloud. It is whether the task wants a live steering session or a delegate-and-review loop. Once you choose on that axis, the product choice becomes much clearer.