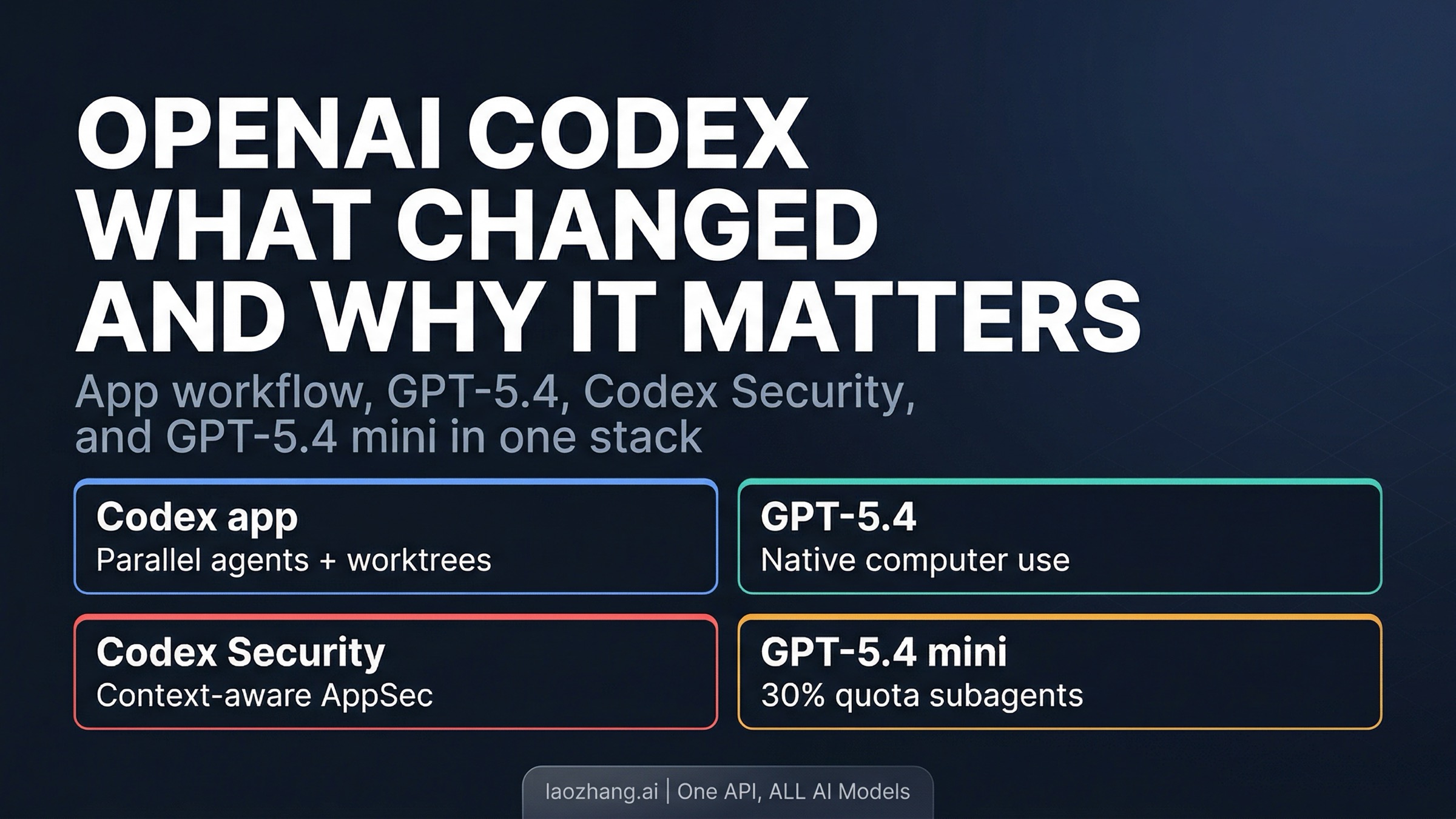

If your mental model of Codex is still "OpenAI's cloud coding agent," that picture predates March 2026. The meaningful shift was not one new feature. It was that Codex started fitting together as a broader agent system: a desktop app for parallel agents, GPT-5.4 as the new default model, GPT-5.4 mini for cheaper support work, Codex Security for application review, and a much clearer local-versus-cloud runtime story.

That matters because the current product only makes sense when you read those layers together. Codex is not just a cloud task runner, not just a CLI coding tool, and not just "the OpenAI coding model." It now spans app, CLI, IDE, and cloud, and those surfaces are no longer separate side stories. They reinforce one another.

TL;DR (verified on 2026-04-01)

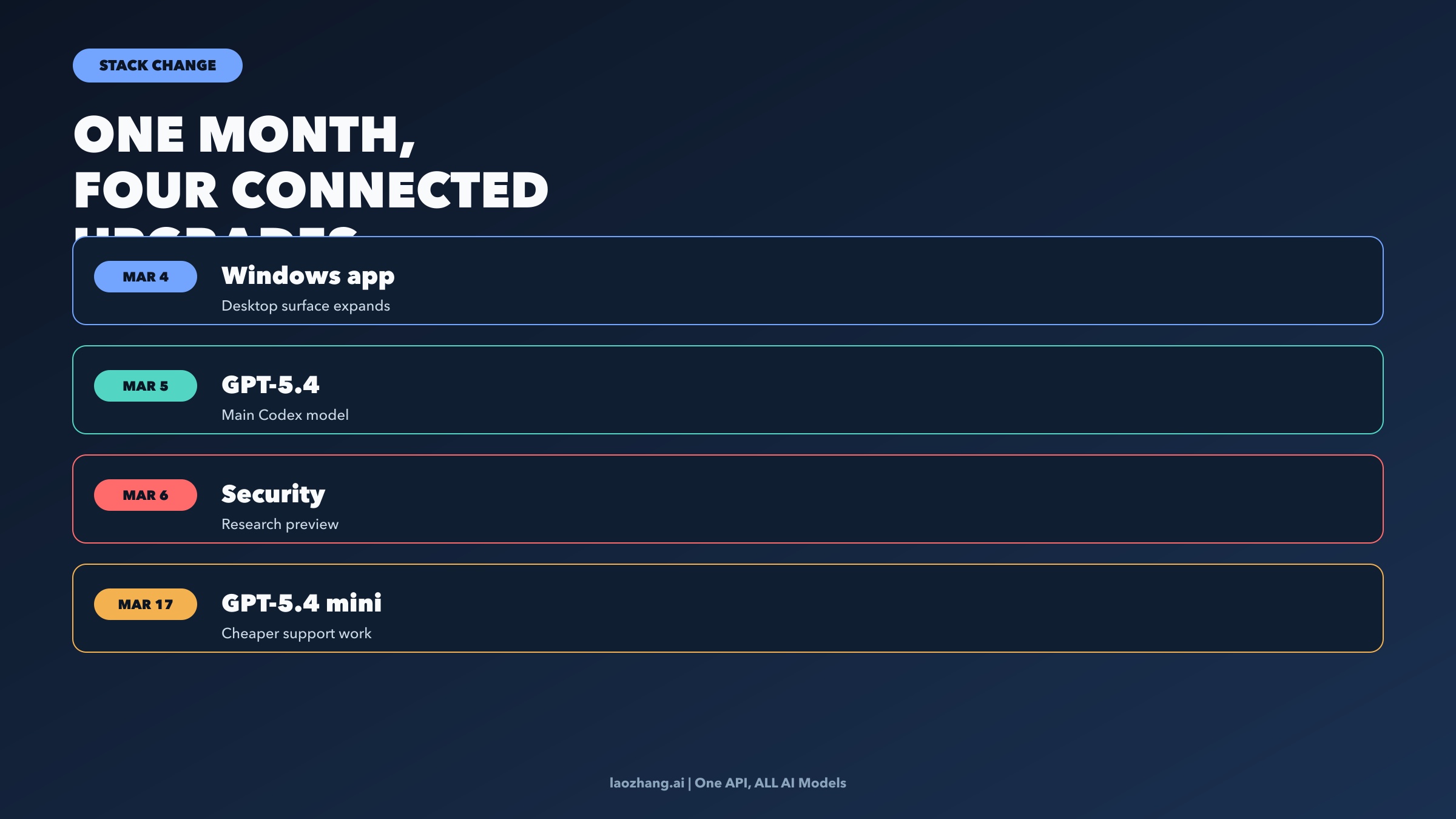

- March 5, 2026: GPT-5.4 landed in Codex and became the new main model. OpenAI positions it as the flagship model for important work, with native computer use and stronger tool workflows.

- March 17, 2026: GPT-5.4 mini arrived in Codex across app, CLI, IDE, and web. It uses only 30% of the GPT-5.4 quota and can power narrower, cheaper subagent work.

- March 6, 2026: Codex Security entered research preview through Codex web, bringing context-aware application security review into the Codex stack.

- February 2, 2026, with a March 4 update: the Codex app launched for macOS and then reached Windows, bringing a proper multi-agent desktop surface with worktrees, skills, and Automations.

- The real change: Codex is now best understood as a cross-surface agent system, not as one interface or one model.

Evidence note: This article uses current official OpenAI product posts and Codex docs checked on April 1, 2026. Access, model routing, and quota policies can change quickly, so treat it as a dated operating snapshot.

The March Shift Was a Stack Change, Not a Single Launch

The easiest way to misunderstand Codex is to read each March announcement in isolation.

If you only read the Codex app launch, you might conclude OpenAI mostly shipped a nicer desktop wrapper. If you only read the GPT-5.4 launch, you might conclude Codex simply inherited a stronger model. If you only read the GPT-5.4 mini post, you might reduce the story to a cheaper option. And if you only read the Codex Security launch, you might think it is a separate security product that sits next to Codex instead of inside it.

That reading misses the stronger pattern. March 2026 made Codex more coherent.

The app gave Codex a better place to manage multiple long-running agents. GPT-5.4 raised the capability ceiling of the main agent. Codex Security extended the platform into higher-trust review workflows. GPT-5.4 mini made it more practical to split work between a stronger planning model and cheaper supporting tasks. Once you put those together, Codex looks less like "one more AI coding tool" and more like a real agent workflow system.

That is also why the Windows update on March 4, 2026 matters more than it seems. By itself, Windows availability is just platform coverage. In context, it signaled that the Codex app was becoming a durable surface in the product, not a side experiment for Mac users.

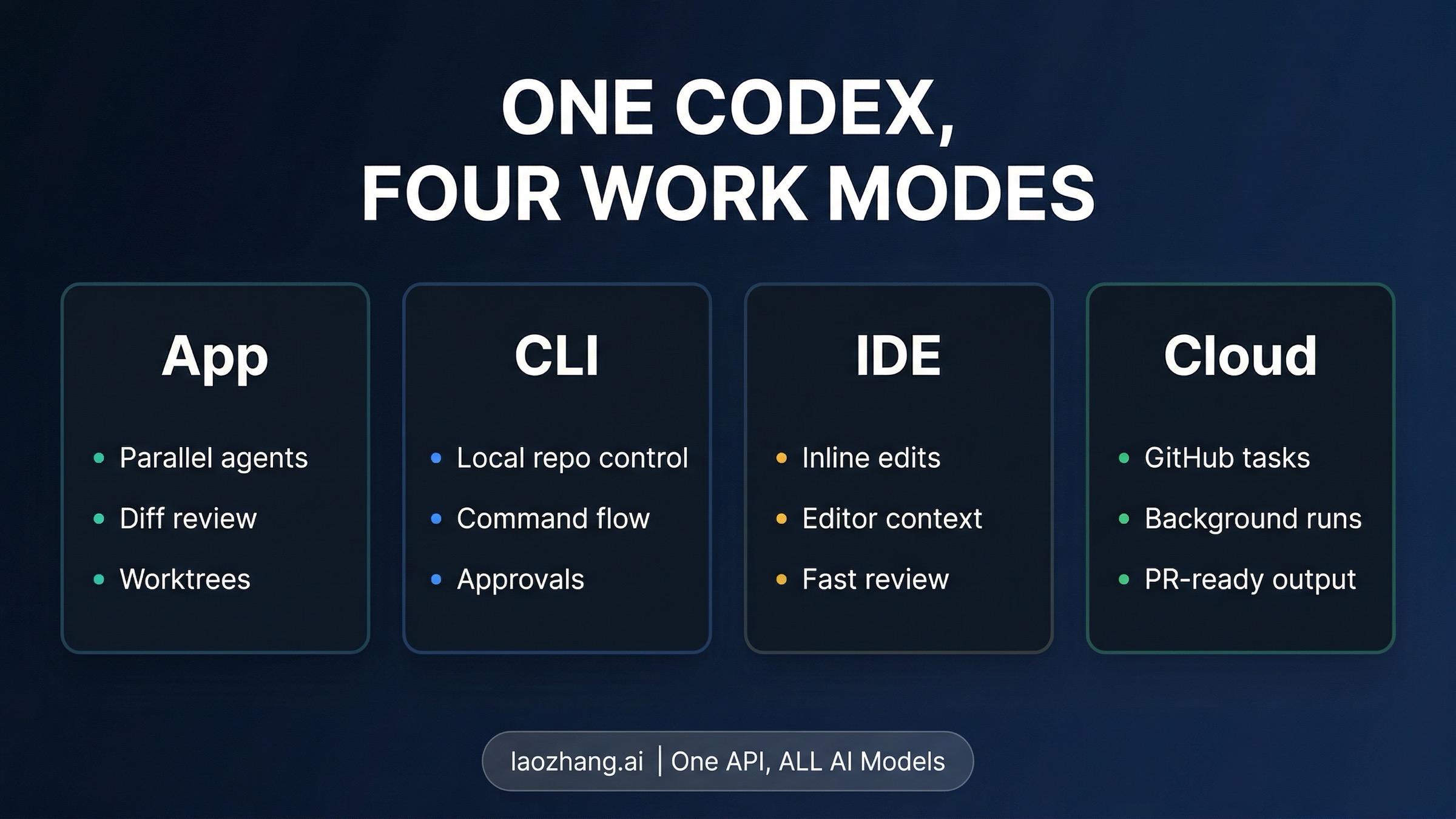

Codex Is Now a Four-Surface System

OpenAI's current docs and product pages describe Codex across four main surfaces:

- the Codex app

- the CLI

- the IDE extension

- Codex cloud

That matters because the useful question is no longer "which one is the real Codex?" They are all real Codex now. The useful question is what each surface is good for.

The app is the clearest sign of how OpenAI wants people to work with agents now. The official product page positions it as a command center for agents, not a chat window. Multiple threads can run in parallel. Agents work in isolated worktrees. You can inspect diffs, comment on changes, and keep background work moving without touching your local git state. That is a very different posture from "open terminal, ask model for patch, copy answer."
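To make the "isolated worktrees" idea concrete, here is a minimal sketch of how one checkout per agent thread could be set up with plain `git worktree add`. It only builds the commands and does not run them. The branch prefix `agent/`, the `-worktrees` directory layout, and the thread names are hypothetical choices for illustration, not something the Codex app is documented to use.

```python
from pathlib import PurePosixPath


def worktree_command(repo: PurePosixPath, thread_id: str, base: str = "HEAD") -> list[str]:
    """Build a `git worktree add` command that gives one thread its own checkout."""
    branch = f"agent/{thread_id}"  # hypothetical branch naming scheme
    checkout = repo.parent / f"{repo.name}-worktrees" / thread_id  # hypothetical layout
    # `git worktree add -b <branch> <path> <commit-ish>` creates a new branch
    # and checks it out in a separate directory, leaving the main checkout alone.
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(checkout), base]


# Two parallel threads, each with its own branch and directory.
commands = [worktree_command(PurePosixPath("/src/app"), t) for t in ("fix-login", "bump-deps")]
for cmd in commands:
    print(" ".join(cmd))
```

Because each thread writes to its own directory and branch, the main checkout's working tree and index are never touched, which is the property the app relies on for background work.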

The CLI and IDE extension still matter because they keep Codex close to the local repo and the actual editing environment. The app even inherits session history and configuration from those local surfaces, which is an important clue about product design: OpenAI is not replacing the local workflow. It is trying to unify it.

Then there is cloud Codex, which still matters whenever the right move is to connect a repository, hand off a scoped task, watch logs only if needed, and review the result later as a clean diff or pull request. This remains one of Codex's strongest modes because the cloud path is explicit in the docs rather than half-hidden behind marketing language.

What ties these surfaces together is that skills, rules, and, increasingly, automations can travel across them. The app page makes that unusually concrete. Skills can be created in the app, used in the app, CLI, or IDE, and checked into the repository so a team can share them. That turns Codex from "assistant with memory" into something closer to a repo-shaped workflow engine.

GPT-5.4 Changed the Ceiling

The biggest March capability change still came on March 5, 2026, when OpenAI released GPT-5.4 into Codex.

That mattered for at least three reasons.

First, OpenAI explicitly positioned GPT-5.4 as the new main model for important work across ChatGPT, the API, and Codex. So this was not a quiet backend refresh. It changed the default expectation for what Codex is running on.

Second, OpenAI described GPT-5.4 in Codex and the API as its first general-purpose model with native computer-use capabilities. That is a meaningful shift because it pushes Codex beyond code-only workflows. A coding agent that can reason over tools, software environments, and interfaces more reliably is useful for bigger classes of technical work: testing, UI inspection, workflow validation, documentation pipelines, spreadsheet or presentation generation through skills, and mixed browser-plus-code tasks.

Third, GPT-5.4 brought a stronger long-horizon profile. OpenAI says GPT-5.4 supports up to 1 million tokens of context and improved tool search across larger ecosystems. I would not flatten that into "Codex now solves every huge task automatically." That would be sloppy. But it does change the practical ceiling. It gives the main Codex agent a better chance at planning, coordinating, and verifying work that spans more files, more tools, and more steps than older Codex writeups usually assume.

This is also why the current Codex story is broader than "the model got smarter." GPT-5.4 is what makes the app, skills, and automation story more credible. A multi-agent surface is only interesting if the underlying agent can stay coherent over longer tasks and use tools more effectively. March finally made those layers line up.

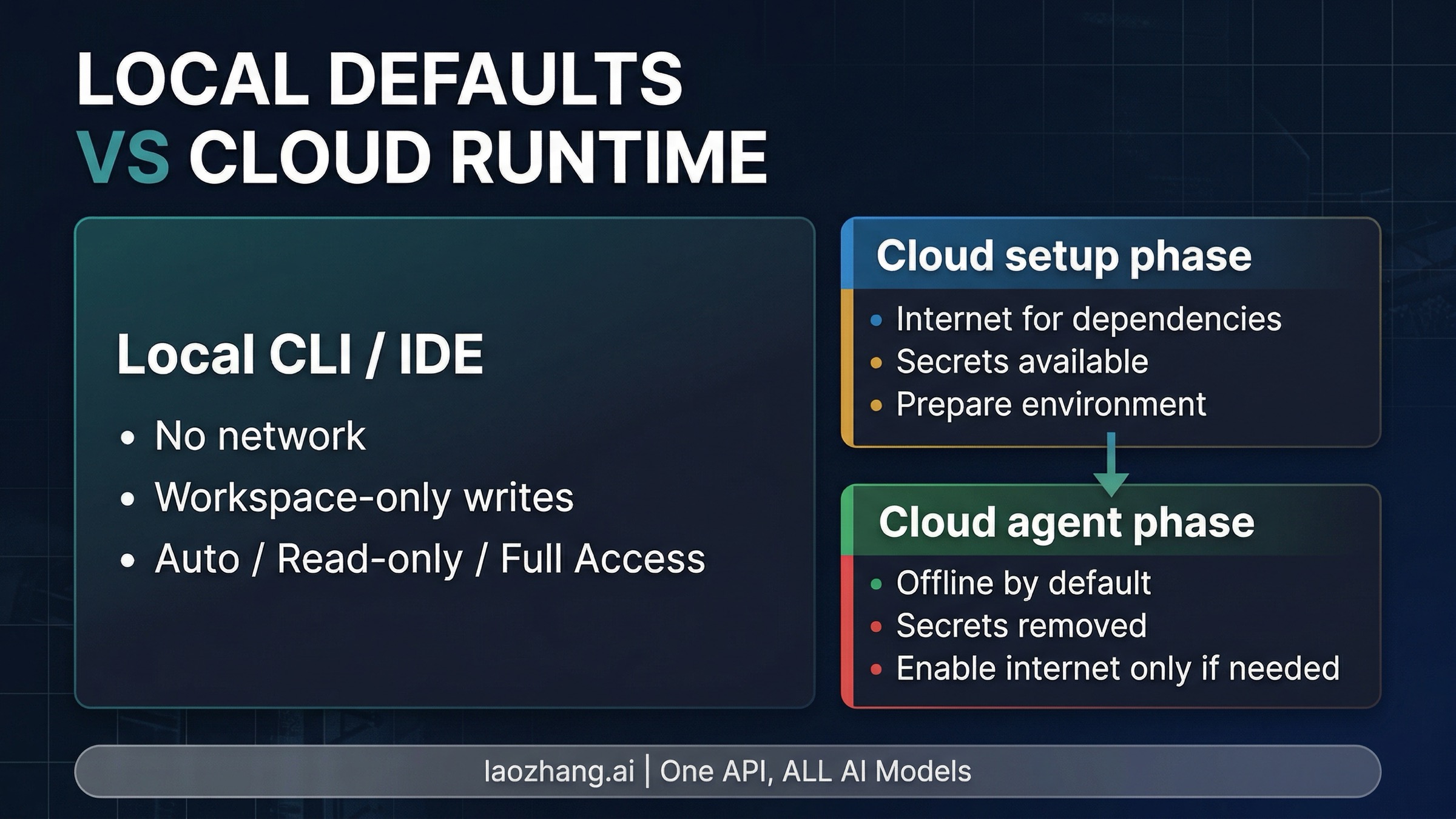

The Trust Boundary Is Finally Explicit

One of the most useful improvements in the current Codex docs is not a raw capability at all. It is the fact that OpenAI now documents the trust boundary much more clearly.

In local CLI and IDE use, the default behavior is:

- no network access

- writes limited to the active workspace

That is already more operationally useful than vague claims that an agent is "safe by default." It tells you what the default box actually is.
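One way to picture that default box is as a small policy object. The sketch below is a conceptual model only: `LocalDefaults` and `may_write` are hypothetical names, and this is not how Codex implements its sandbox. It just encodes the two documented defaults, no network and writes confined to the workspace.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class LocalDefaults:
    """Hypothetical model of the documented local defaults."""

    workspace: Path
    network_allowed: bool = False  # documented default: no network access

    def may_write(self, target: str) -> bool:
        """Return True only if `target` resolves to a path inside the workspace."""
        root = self.workspace.resolve()
        # Joining with an absolute target replaces the root, so absolute paths
        # outside the workspace are rejected along with `..` escapes.
        resolved = (root / target).resolve()
        return resolved.is_relative_to(root)


policy = LocalDefaults(workspace=Path("/tmp/repo"))
print(policy.network_allowed)            # False
print(policy.may_write("src/main.py"))   # True
print(policy.may_write("../other/file")) # False
```

Resolving both paths before comparing matters: a naive string-prefix check would let `../` segments or symlinks escape the workspace.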

In Codex cloud, OpenAI describes a two-phase runtime:

- the setup phase can use the network to install dependencies and prepare the environment

- the main agent phase is offline by default unless internet access is explicitly enabled

OpenAI also documents that secrets are available during setup and then removed before the main agent phase. That is not a minor detail. It changes how you reason about dependency installation, build preparation, and post-setup execution because the runtime boundary is finally explicit.
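The two-phase boundary is easier to reason about when written down as data. This is a minimal sketch under the assumptions stated in the text: setup has network and secrets, and the agent phase has neither unless internet access is explicitly enabled. The `Phase` type, the `cloud_phases` helper, and the `NPM_TOKEN` secret name are illustrative and not part of any Codex API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    network: bool
    secrets: frozenset[str]


def cloud_phases(secrets: set[str], agent_internet: bool = False) -> list[Phase]:
    """Model the documented setup-then-agent split for a cloud task."""
    # Setup: network is available for dependency installs, and secrets are present.
    setup = Phase("setup", network=True, secrets=frozenset(secrets))
    # Agent: offline unless explicitly enabled, and secrets have been removed.
    agent = Phase("agent", network=agent_internet, secrets=frozenset())
    return [setup, agent]


for phase in cloud_phases({"NPM_TOKEN"}):
    print(phase.name, "network" if phase.network else "offline", sorted(phase.secrets))
```

Read this way, the design implication is clear: anything that needs a credential, such as a private package install, has to happen in setup, because the agent phase never sees the secret.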

The practical takeaway is simple. Codex now has a clearer autonomy story than the stale "just let the agent run" framing. You can reason about:

- what local work can touch by default

- when network access enters the picture

- what cloud execution can do before and after setup

- and when you are deliberately moving beyond defaults

For teams that care about policy, reviewability, and not mixing local repo risk with cloud execution risk, that clarity is a real capability in its own right.

The Underrated March Addition Is GPT-5.4 Mini

The March 17, 2026 GPT-5.4 mini launch is easy to underrate if you read it as a model pricing footnote. In Codex, it is more interesting than that.

OpenAI says GPT-5.4 mini is available across the Codex app, CLI, IDE extension, and web, and that it uses only 30% of the GPT-5.4 quota. That already matters for developers who want faster or cheaper passes on simpler tasks. But the more important point is the workflow OpenAI describes around it.

The GPT-5.4 mini launch post explicitly says that in Codex a larger model like GPT-5.4 can handle planning, coordination, and final judgment, while GPT-5.4 mini subagents handle narrower subtasks in parallel, such as:

- searching a codebase

- reviewing a large file

- processing supporting documents

That is a different pattern from "pick one model and use it for everything." It makes Codex feel more like an agent system with internal task routing. And that, in turn, makes the app's multi-agent surface more important because the UI and the model strategy start reinforcing each other.
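The planner-plus-subagents split can be sketched as a simple routing rule. This is an illustration of the pattern described in the launch post, not Codex's actual routing logic. The task kinds, the `route` function, and the use of display names as return values are all assumptions made for the example.

```python
from dataclasses import dataclass

# Narrow, parallelizable task kinds taken from the examples above.
NARROW_KINDS = {"search_codebase", "review_file", "process_documents"}


@dataclass(frozen=True)
class Task:
    kind: str
    description: str


def route(task: Task) -> str:
    """Send narrow subtasks to the smaller model; keep everything else on the main one."""
    return "GPT-5.4 mini" if task.kind in NARROW_KINDS else "GPT-5.4"


tasks = [
    Task("plan", "Decide how to migrate the auth module"),
    Task("search_codebase", "Find every caller of login()"),
    Task("review_file", "Summarize risks in a large handler file"),
    Task("final_judgment", "Approve or reject the combined diff"),
]
for task in tasks:
    print(f"{task.kind:16} -> {route(task)}")
```

The point of the sketch is the shape: planning and final judgment stay with the stronger model, while the fan-out work in the middle goes to cheaper subagents.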

There is also an important boundary here: GPT-5.4 nano is not available in Codex. OpenAI positions nano as API-only. So the relevant current Codex model story is really:

- GPT-5.4 for heavier planning and judgment

- GPT-5.4 mini for cheaper, narrower support work

That is a much clearer and more useful story than a raw model picker list.
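The 30% figure is easier to appreciate with a quick back-of-the-envelope calculation. The sketch below assumes a deliberate simplification that OpenAI does not state: every task consumes one "unit" of quota on GPT-5.4 and 0.3 units on GPT-5.4 mini, regardless of task size. The job with one planning pass and six subtasks is a made-up example.

```python
from fractions import Fraction

# Stated by OpenAI: mini uses 30% of the GPT-5.4 quota.
MINI_RATIO = Fraction(30, 100)


def quota_units(main_tasks: int, mini_tasks: int) -> Fraction:
    """Quota in GPT-5.4-equivalent units, assuming equal-sized tasks (a simplification)."""
    return main_tasks + mini_tasks * MINI_RATIO


# Hypothetical job: 1 planning pass plus 6 narrow subtasks.
all_main = quota_units(main_tasks=7, mini_tasks=0)  # everything on GPT-5.4
split = quota_units(main_tasks=1, mini_tasks=6)     # subtasks moved to mini

print(float(all_main))          # 7.0
print(float(split))             # 2.8
print(float(split / all_main))  # 0.4
```

Under these assumptions the split workflow uses 40% of the quota the single-model approach would. Real savings depend on how large each task actually is.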

What Codex Is Strongest At Right Now

Once you put the March pieces together, Codex looks strongest in four situations.

1. Parallel background work that still needs reviewable outputs.

The app's thread model, worktrees, and diff-first review flow are built for this. If the task is clear enough to hand off, Codex now gives you a stronger system for letting several things move at once without collapsing them into one terminal session.

2. Tasks that mix code work with tool or interface interaction.

GPT-5.4's native computer-use direction matters here. So do the app and skill layers. Codex is no longer just trying to patch files. It is increasingly good at workflows that touch code, documentation, browsers, assets, or supporting tools in one run.

3. Repetitive engineering chores that should become scheduled work.

Automations are one of the clearest under-discussed additions in the app story. OpenAI says it uses them for issue triage, CI failure summaries, release briefs, and bug checks. That is exactly the kind of work where "agent in a review queue" is better than "assistant in a chat."

4. Higher-trust review flows, especially around security.

Codex Security is not the whole Codex story, but it proves where the platform is expanding. OpenAI is pushing Codex beyond code generation toward review, validation, and patching workflows that require more project context and better signal control.

None of this means Codex is automatically the right tool for every coding job. It does mean the right way to evaluate Codex has changed. If you still judge it as a single-surface coding assistant, you will miss the part that is actually getting stronger.

If your next question is whether this makes Codex the better everyday choice over another coding agent, read our Claude Code vs Codex guide. That comparison makes more sense once your picture of modern Codex is current.

FAQ

Is Codex now mainly an app?

No. The app is important because it makes parallel agents, worktrees, skills, and automations easier to manage, but Codex is still explicitly documented across app, CLI, IDE extension, and cloud.

Which model powers Codex now?

OpenAI's current docs position GPT-5.4 as the main Codex model. GPT-5.4 mini is also available in Codex for faster, cheaper supporting work. GPT-5.4 nano is API-only.

Does Codex still make sense for local work?

Yes. OpenAI's current docs make local CLI and IDE use explicit, with default no-network access and writes limited to the active workspace. Codex is not just a cloud product.

What is actually new about Codex Security?

It is an application security agent inside Codex web that builds project context, validates likely findings, and proposes patches. The important point is not merely "security scanning exists." The important point is that Codex is expanding into review-heavy workflows that demand deeper context and lower noise.

Why does GPT-5.4 mini matter so much?

Because it changes the workflow, not just the bill. OpenAI explicitly frames GPT-5.4 mini as the cheaper, faster model for narrower parallel subagents while GPT-5.4 handles planning and final judgment.

What is the simplest current mental model of Codex?

Think of Codex as a cross-surface agent system. The app organizes parallel work, the local surfaces keep Codex close to the repo, the cloud path handles handoff-style tasks, GPT-5.4 raises the main-agent ceiling, GPT-5.4 mini makes cheap support work practical, and the security model is now explicit enough to matter in deployment decisions.

Bottom line: March 2026 was the month Codex stopped looking like a loose bundle of features and started looking like a coherent agent stack. That is the real update. The new value is not just that Codex can do more. It is that the surfaces, models, and trust boundary now make more sense together.