Codex Computer Use is the Codex app route for work where Codex must inspect or operate a visible Mac app. It is useful for GUI-only flows, desktop-app QA, browser or simulator steps, and app settings that a shell, API, plugin, or MCP server cannot reach cleanly.

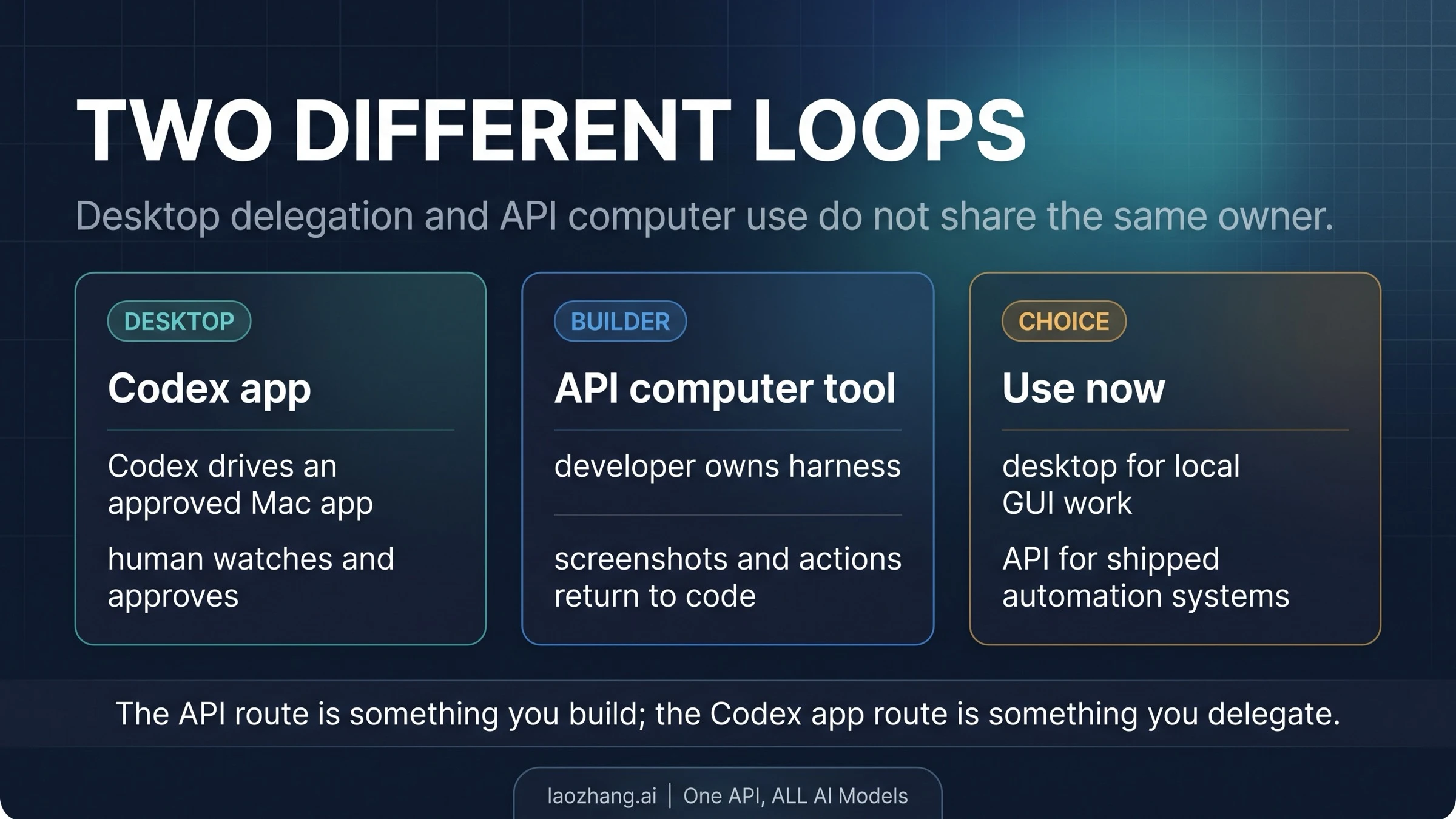

Do not confuse that app feature with API computer use. In the Codex app, you approve a target app or browser and grant macOS Screen Recording and Accessibility permissions through the Computer Use plugin; in the API route, you build the computer or browser loop and decide how screenshots, actions, and safety checks are handled.

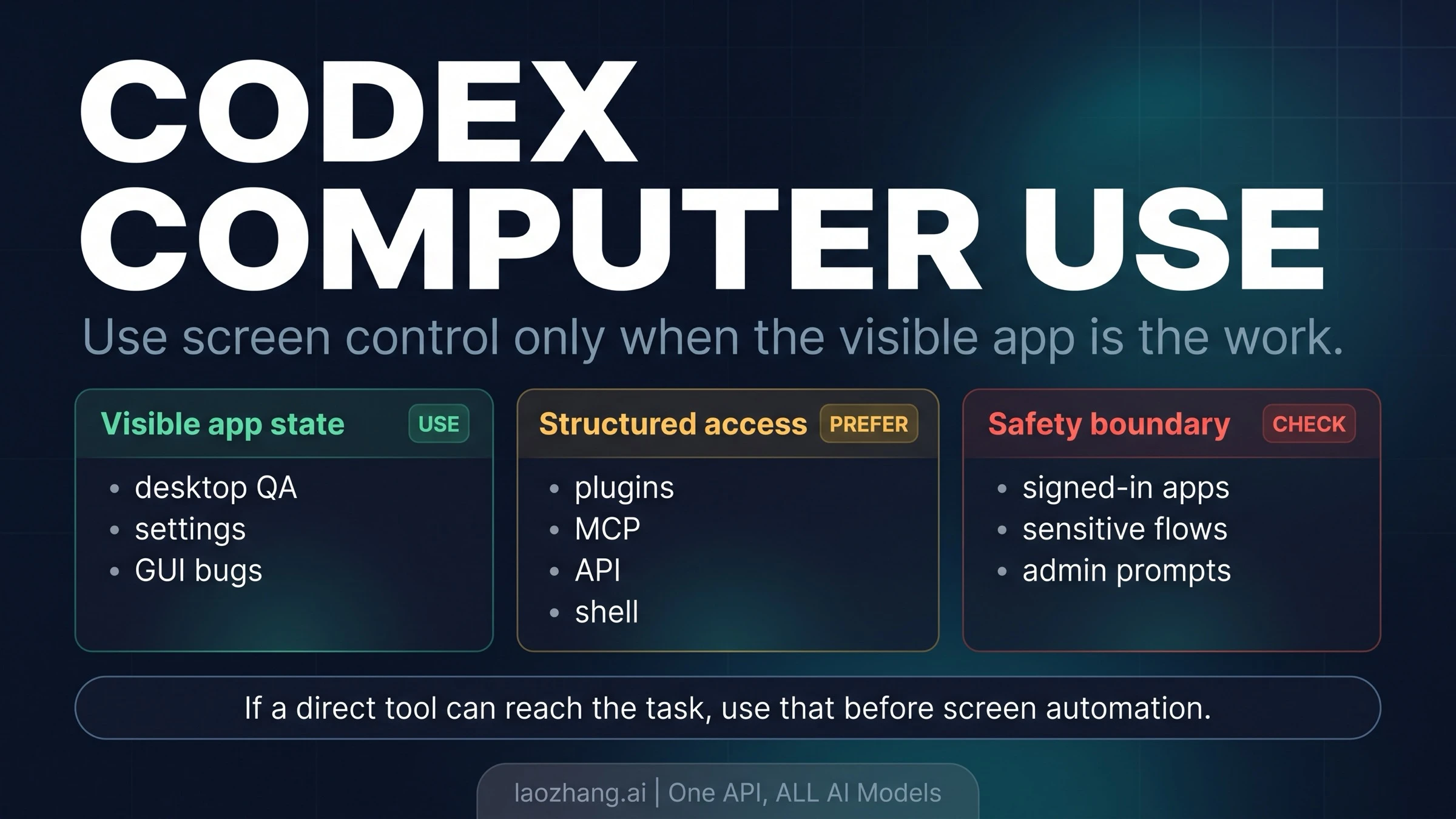

Start with the route choice: if a structured tool can expose the file, data, browser page, build log, or API response directly, use that route first. Choose Codex Computer Use only when the interface itself is the evidence or the control surface, then begin with a narrow reversible task and stop before signed-in accounts, destructive actions, admin prompts, or security and privacy prompts become the work.

As of April 19, 2026, OpenAI's Codex app docs describe computer use as a macOS feature at launch, with launch exclusions for the EEA, UK, and Switzerland. The broader Codex app also runs on Windows, but that does not mean computer use is available there.

Start with the route choice

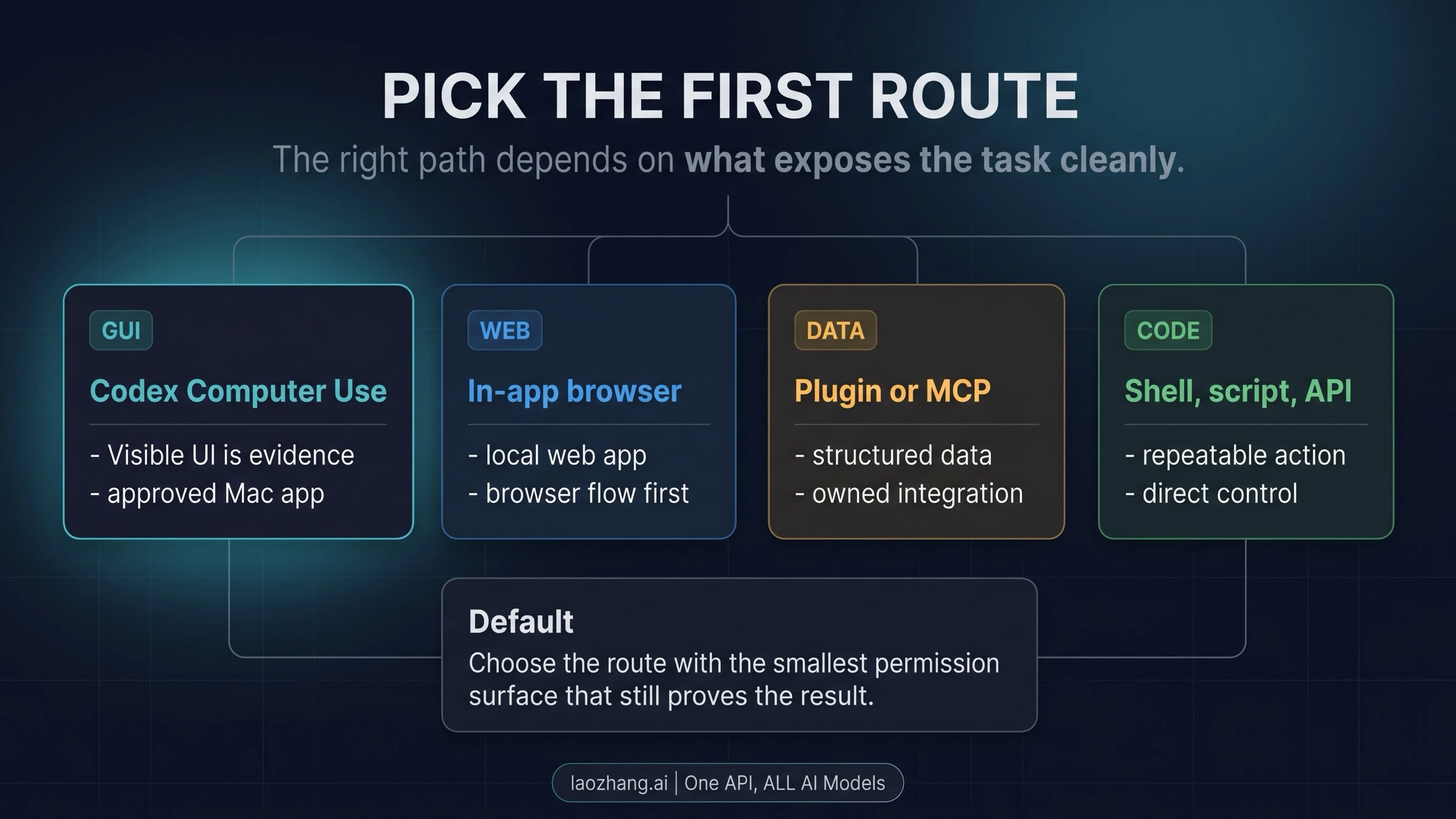

The fastest way to decide is to ask what Codex needs to see. If the task is already available as text, files, logs, API responses, repository state, or a browser page inside the Codex app, screen control usually just adds risk. Use the lower-control route and let Codex reason over the structured surface.

Codex Computer Use becomes the right first choice when the UI is the thing that has to be inspected or changed. That includes desktop app behavior, simulator flows, a local app running in a browser where visual state matters, an application preference pane, or a bug that only appears after a real click path. In those cases, the visual interface is not a detour; it is the evidence.

| Reader job | First route to try | Why |
|---|---|---|
| Inspect a web app you are building locally | Codex in-app browser | It keeps the task inside the Codex app and avoids broad desktop access. |
| Read repo files, run tests, inspect logs, or patch code | Local Codex, shell, script, or repo tools | The workspace is already machine-readable. |
| Pull data from GitHub, docs, mail, calendars, or another system with a connector | Plugin or MCP server | The integration exposes structured data without screen scraping. |
| Operate a Mac app, simulator, settings UI, or GUI-only repro | Codex Computer Use | Codex needs the visible interface to observe and act. |
| Build a product that lets a model use a browser or computer | OpenAI API computer use | Your application owns the harness, environment, screenshots, and actions. |

That route order matters because computer use can affect app state outside the repo. A shell command in the project can be reviewed as code or output. A plugin call usually has a narrower data contract. A screen click can hit a signed-in account, change a setting, or trigger a prompt that was not part of the original request.
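The route order can be written down as a small decision function. This is a hypothetical sketch, not an OpenAI or Codex API: the `Task` fields and route labels are illustrative names. Its only point is that the structured routes are checked before screen control.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # Hypothetical task description; these fields are illustrative, not a Codex API.
    building_product_automation: bool = False  # your own app owns the harness
    repo_or_logs: bool = False                 # files, tests, logs, builds
    connector_available: bool = False          # a plugin or MCP server exposes the data
    local_web_app: bool = False                # a page the in-app browser can show
    ui_is_evidence: bool = False               # the visible interface is the point


def choose_route(task: Task) -> str:
    """Return the lowest-control route that fits, following the table's order."""
    if task.building_product_automation:
        return "OpenAI API computer use"
    if task.repo_or_logs:
        return "shell, scripts, or repo tools"
    if task.connector_available:
        return "plugin or MCP server"
    if task.local_web_app:
        return "Codex in-app browser"
    if task.ui_is_evidence:
        return "Codex Computer Use"
    # Nothing requires the screen, so stay in the structured workspace lane.
    return "shell, scripts, or repo tools"
```

Note that a task with both a connector and a visual angle still resolves to the connector: screen control is reached only when nothing cheaper applies.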

For the broader Codex product stack, the OpenAI Codex March 2026 overview is the better background piece. The narrower question here is when visual app control is worth the extra permission and supervision.

What Codex Computer Use actually controls

OpenAI's Codex app Computer Use docs describe a product feature where Codex can observe and operate approved macOS app surfaces. The practical verbs are simple: Codex can see, click, and type in an approved target. That is powerful enough for real desktop workflows, but it is not the same as giving Codex unlimited authority over the machine.

There are two permission layers to keep separate.

First, macOS has to allow the Codex app to observe and interact with the screen. That is why Screen Recording and Accessibility permissions appear in setup. These are system-level permissions, so they deserve the same care you would apply to any tool that can see windows and send input.

Second, Codex still needs product-level approval for the target app or browser flow. Approving a target is the practical moment where you narrow the run. The safer habit is to approve only the app needed for the task, keep the instruction small, and review each prompt that asks for more access.

File reads, file edits, shell commands, and workspace access do not become one giant permission story just because computer use is enabled. Codex's normal sandbox and approval settings still matter. Treat screen control as an additional lane, not as a replacement for the existing local/cloud trust boundary. If the question is quota, plan windows, or API-key usage, the Codex usage limits guide is the relevant companion.
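The two permission layers can be modeled as independent checks. This is a hypothetical sketch and does not describe how Codex implements approvals internally. It only shows that granting Screen Recording and Accessibility does not, by itself, authorize any particular app. The separate sandbox settings for files and shell commands are deliberately left out of this model.

```python
from dataclasses import dataclass, field


@dataclass
class Permissions:
    # Hypothetical model of the two layers described above.
    screen_recording: bool = False   # macOS system permission
    accessibility: bool = False      # macOS system permission
    approved_targets: set = field(default_factory=set)  # per-app product approval


def may_operate(perms: Permissions, target_app: str) -> bool:
    """Screen control needs both system permissions AND approval for this target."""
    system_ok = perms.screen_recording and perms.accessibility
    return system_ok and target_app in perms.approved_targets
```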

Availability and setup checklist

As of April 19, 2026, OpenAI documents Codex Computer Use as a macOS-at-launch feature. OpenAI's April 16 Codex update also says the broader Codex app updates roll out to Codex desktop users signed in with ChatGPT, with computer use initially available on macOS and rollout to the EU and UK coming later. OpenAI's quickstart separately documents the Codex app on macOS and Windows, so do not turn app availability into a universal computer-use claim.

The current setup path is intentionally permission-heavy:

- Install or open the Codex desktop app.

- Install the Computer Use plugin from the app's plugin area.

- Grant macOS Screen Recording permission.

- Grant macOS Accessibility permission.

- Start with a target app or browser flow and approve that target explicitly.

- Give Codex a narrow task that can be observed and reversed.

The order is not just administrative. It gives you multiple stop points. If the task can be solved before Screen Recording is granted, stop there. If the target approval feels broader than the work requires, narrow the task. If Codex asks to move from a harmless local app into a signed-in browser, pause and decide whether the browser action should stay human-led.

OpenAI's docs also call out limits that belong near setup, not after a long list of examples. Codex Computer Use cannot automate terminal apps or Codex itself. It cannot authenticate as an administrator. It cannot approve macOS security or privacy prompts. Those limits are not annoyances to bypass; they are part of the safety boundary that keeps a visual automation lane from becoming silent system administration.
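If you keep your own preflight checklist, these documented limits fit a simple hard-stop check. The category strings below are hypothetical labels for the limits just listed, not identifiers that Codex exposes.

```python
from typing import Optional

# Hypothetical labels for the documented limits; not a Codex API.
UNSUPPORTED_TARGETS = {"terminal", "codex"}
HARD_STOP_PROMPTS = {"admin_authentication", "macos_security_prompt", "macos_privacy_prompt"}


def needs_human(target_kind: str, pending_prompt: Optional[str] = None) -> bool:
    """Return True when the run should hand control back to a person."""
    if target_kind in UNSUPPORTED_TARGETS:
        return True  # Codex Computer Use cannot automate these targets
    return pending_prompt in HARD_STOP_PROMPTS  # None never matches
```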

Good tasks for Codex Computer Use

The best fit is a task where the screen contains information that no cleaner tool can expose. That does not mean the task has to be spectacular. In practice, the strongest early wins are ordinary visual tasks with low blast radius.

Desktop app QA is a good example. Codex can open the app, follow a reproduced path, compare the UI against expected behavior, and report what changed after a build. If the bug is "the settings modal overlaps the save button after a particular click path," a shell command cannot see that. Computer use can.

Browser and simulator flows can also fit when the visual state matters. OpenAI recommends the in-app browser first for local web apps, but there are still cases where the important evidence is outside a normal browser page: simulator chrome, native dialogs, app settings, a desktop menu, or a GUI-only error state. The test is whether the visible interface is the source of truth.

App settings and configuration screens are another good category. A preference pane, a developer tool setting, or a local app toggle may not have an API, and the reader may simply need Codex to inspect what is currently selected. A safe instruction might be: "Open this app's preferences, tell me which export format is selected, and do not change anything." That uses the visual lane for observation before action.

Data sources without plugins are more delicate but still valid. If a tool has no MCP server, no API access, and no export path, asking Codex to read a visible dashboard may be the only practical route. Keep the scope observational first. Extracting a visible table is different from clicking through account settings or changing billing information.

The common thread is not "let Codex run the computer." The common thread is "the interface itself carries the work." When that sentence is not true, screen control is probably the wrong first move.

Better routes before screen control

A useful Codex setup has more than one lane. Computer use sits near the high-control end of the ladder, so the better route is often somewhere below it.

Use the in-app browser when the job is a local web app or a web page Codex can inspect directly inside its own surface. That keeps the feedback loop visible without granting broader macOS app control. It is also easier to repeat: reload the page, inspect the output, patch the code, and try again.

Use a plugin or MCP server when the work is really about data access. A structured connector can return the exact email, issue, pull request, calendar event, or documentation snippet Codex needs. That is cleaner than opening a browser, scrolling through a UI, and hoping a visual action lands on the right state.

Use shell commands, scripts, and tests when the repo is the system of record. Running a test, reading a log, generating a file, or checking a build should stay in the workspace lane. The result is easier to quote, diff, and rerun than a UI click path.

Use the API route when you are building a product or internal automation around computer control. OpenAI's API computer-use guide describes a developer-owned loop: the model returns UI actions, your harness executes them, and screenshots or results go back to the model. That is not the same contract as opening the Codex desktop app and approving a Mac app. API builders own the runtime, the screenshots, the action executor, and the safety rails.
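The shape of that developer-owned loop can be sketched without any network calls. Everything here is a stand-in. `fake_model` plays the model returning UI actions, and `Executor` plays your harness. A real implementation would call the Responses API with the computer tool and capture real screenshots, and the exact request and response fields should come from OpenAI's API docs rather than from this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Action:
    kind: str          # hypothetical action names: "click", "type", or "done"
    detail: str = ""


@dataclass
class Executor:
    """Hypothetical harness: owns the environment and records what it did."""
    log: list = field(default_factory=list)

    def run(self, action: Action) -> str:
        self.log.append(f"{action.kind}:{action.detail}")
        # A real harness would perform the action here, then capture a screenshot.
        return f"screenshot after {action.kind}"


def fake_model(observation: Optional[str], step: int) -> Action:
    """Stand-in for the model: ignores the observation, proposes two actions, then stops."""
    script = [Action("click", "settings"), Action("type", "hello")]
    return script[step] if step < len(script) else Action("done")


def run_loop(max_steps: int = 10) -> list:
    executor = Executor()
    observation: Optional[str] = None
    for step in range(max_steps):
        action = fake_model(observation, step)  # model proposes an action
        if action.kind == "done":
            break
        observation = executor.run(action)      # harness executes it
        # The new observation goes back to the model on the next iteration.
    return executor.log
```

The `max_steps` cap is the harness's own safety rail: the loop ends even if the model never says it is done.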

The same execution-ownership distinction appears in other ecosystems too. The Claude Computer Use guide uses API versus desktop delegation as the main split, and the lesson carries over: a computer-use feature is not fully understood until you know who owns the environment.

Safety boundaries that should stop the run

Screen control changes the risk profile because the model is no longer only editing files or returning text. It may interact with a window that represents a real account, a real setting, or a real system prompt. The safe default is to stay present for any flow where a mistake would matter.

Signed-in browsers deserve special caution. A website action taken through your logged-in session may count as your action, even if Codex clicked the button. That is fine for low-risk observation and controlled QA. It is not fine for payments, account deletion, permission grants, publishing, legal acceptance, or any workflow where consent has to be human.

Destructive actions should remain human-led unless the task is deliberately scoped to a disposable environment. Deleting files, changing global app settings, modifying account permissions, submitting forms, or approving irreversible changes are all beyond a sensible first task. A good instruction tells Codex where to stop: "navigate to the confirmation screen and report what it says, but do not confirm."
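A stop rule like that can also be enforced outside the instruction text, for example in a harness you own. The sketch below uses hypothetical action labels. The two categories follow this section: consent-type actions always stay human-led, and destructive actions are allowed only in a deliberately disposable environment.

```python
# Hypothetical action labels; the categories follow the text above.
ALWAYS_HUMAN = {"payment", "account_deletion", "permission_grant", "publish", "legal_acceptance"}
DESTRUCTIVE = {"delete_files", "change_global_setting", "modify_account_permissions",
               "submit_form", "confirm_irreversible"}


def gate(action_label: str, disposable_env: bool = False) -> str:
    """Decide whether a proposed action may run or must stop for a human."""
    if action_label in ALWAYS_HUMAN:
        return "stop_and_report"  # consent has to come from a person
    if action_label in DESTRUCTIVE and not disposable_env:
        return "stop_and_report"  # acceptable only in a throwaway environment
    return "proceed"
```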

Admin authentication and macOS security or privacy prompts are hard stops under the documented boundary. Codex cannot approve those prompts for you, and treating that as an obstacle to route around is the wrong mindset. If the task reaches a system-level prompt, the right next action is human review.

Prompt injection is also more plausible when the model reads screens. A web page, document, or app window can contain text that tries to steer the agent. Keep the task narrow enough that irrelevant on-screen instructions are easy to ignore, and avoid giving broad goals like "handle whatever the site asks." That kind of instruction hands too much authority to whatever appears on the display.
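One harness-side mitigation is to fix the allowed target and action kinds before the run starts, then check every proposed action against that budget regardless of what the screen says. This is a hypothetical sketch, not a Codex feature. It narrows what injected text can achieve but does not eliminate prompt-injection risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    # Set by the user before the run; nothing read from the screen can modify it.
    target: str
    allowed_kinds: frozenset


def allowed(budget: Budget, target: str, kind: str) -> bool:
    """Reject any action outside the pre-declared target and action kinds."""
    return target == budget.target and kind in budget.allowed_kinds


budget = Budget("Demo App", frozenset({"observe", "click"}))
print(allowed(budget, "Demo App", "click"))  # True
print(allowed(budget, "Demo App", "type"))   # False: typing was never granted
print(allowed(budget, "Browser", "click"))   # False: different target
```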

App computer use versus API computer use

The Codex app feature and the OpenAI API tool solve related but different problems.

In the Codex app, the user is delegating a desktop task. The product manages the agent session, the Mac app is the visible target, and the approval flow is built into the app experience. The reader's practical work is choosing the right target, granting the right Mac permissions, writing a narrow instruction, and staying present for sensitive moments.

In the API route, the developer is building an automation system. The application chooses the environment, captures screenshots, executes model-requested actions, returns observations, and owns whatever confirmation logic protects the user. OpenAI's current API docs recommend the GA computer tool with gpt-5.4 for new implementations, while the older computer-use-preview model remains a legacy compatibility detail for the Responses API.

That difference changes the right first question. For a Codex app user, ask: "Can Codex see the target app, and is this visual task narrow enough to approve?" For an API builder, ask: "What sandbox, browser, action executor, screenshot cadence, and confirmation policy will make this loop reliable?" Those are not interchangeable setup checklists.
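For the API route, those questions become explicit configuration that your application owns. The dataclass below is a hypothetical checklist, not an OpenAI SDK type, and its field names are illustrative. `unanswered` simply lists the questions still left blank.

```python
from dataclasses import dataclass, fields


@dataclass
class HarnessConfig:
    # Hypothetical fields; each maps to one question an API builder must answer.
    sandbox: str = ""              # e.g. an isolated VM or container
    browser: str = ""              # which browser or environment the agent drives
    executor: str = ""             # component that performs model-requested actions
    screenshot_every_step: bool = True
    confirmation_policy: str = ""  # when a human must confirm before an action runs


def unanswered(config: HarnessConfig) -> list:
    """Return the names of text fields that are still empty."""
    return [f.name for f in fields(config)
            if isinstance(getattr(config, f.name), str) and not getattr(config, f.name)]
```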

The API path is stronger for repeatable product automation, internal tooling, or controlled browser/computer agents. The Codex app path is stronger for supervised desktop work where the human can watch, approve, and stop the run. If you only need Codex to inspect a local app once, do not build an API harness. If you need hundreds of users to run a browser automation flow, do not depend on a human's Codex desktop session.

A safe first task

The best first task is narrow, observable, and reversible. Do not start with a signed-in account, a payment flow, or an app where a wrong click has real cost.

A good first instruction has four parts:

- Name the exact target app or browser page.

- Say whether Codex may observe only or may also click/type.

- Define the stopping point before any sensitive or irreversible action.

- Ask for a short report of what Codex saw and what it would do next.

For example:

“Open the local demo app, inspect the settings modal, and report whether the export dropdown shows CSV, JSON, or PDF. You may click only to open the modal. Do not change the selected value.”

That prompt gives Codex a target, a limited action budget, and a stop rule. It also makes the result easy to check. If Codex cannot find the modal or asks for more access, you learn that before letting it touch anything important.
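The four parts of a good first instruction can also be assembled mechanically, which makes it harder to forget the stop rule. `first_task_prompt` is a hypothetical helper for drafting the text you paste into Codex. It does not call any Codex API.

```python
from typing import Optional


def first_task_prompt(target: str, goal: str, stop_before: str,
                      may_click: Optional[str] = None) -> str:
    """Build a narrow instruction: target, action budget, stop rule, report."""
    parts = [f"Open {target} and {goal}."]
    if may_click:
        parts.append(f"You may click only to {may_click}.")
    else:
        parts.append("Observe only; do not click or type.")
    parts.append(f"Stop before {stop_before}.")
    parts.append("Report what you saw and what you would do next.")
    return " ".join(parts)


print(first_task_prompt(
    target="the local demo app",
    goal="report which export format the settings modal shows",
    stop_before="changing the selected value",
    may_click="open the settings modal",
))
```

Leaving `may_click` unset produces an observe-only instruction, which matches the safest first run.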

After one successful observational run, move to a small reversible action. A good second task might be changing a theme toggle in a demo app and then changing it back, or reproducing a visual bug in a local environment. The goal is to learn the approval flow and failure modes before combining computer use with longer agent work.
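For that second, reversible task, record the state before acting and confirm that the revert restored it. The sketch uses a plain dictionary as a hypothetical stand-in for app settings that Codex would actually read off the screen.

```python
def toggle_and_revert(settings: dict, key: str) -> bool:
    """Flip a boolean setting, flip it back, and confirm both steps took effect."""
    before = dict(settings)             # snapshot the prior state
    settings[key] = not settings[key]   # the reversible action
    changed = settings != before        # did the action actually do something?
    settings[key] = not settings[key]   # undo it
    return changed and settings == before
```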

If the real question is whether Codex as a whole fits your engineering workflow, the Claude Code vs Codex comparison is the broader tool-choice frame. If the question is whether the current Codex stack has changed enough to revisit your mental model, start with the Codex overview and then come back to this route choice.

FAQ

Does Codex have computer use?

Yes. OpenAI documents Codex Computer Use as a Codex app feature that lets Codex operate approved macOS app surfaces by seeing, clicking, and typing. The useful boundary is that it is for visible-app work, not for every Codex task.

Is Codex Computer Use available on Windows?

OpenAI documents the Codex app on macOS and Windows, but the computer-use feature is documented as macOS at launch as of April 19, 2026. Treat Windows app availability and Mac computer-use availability as separate facts until OpenAI documents broader computer-use support.

What permissions does it need on macOS?

The current app setup requires the Computer Use plugin plus macOS Screen Recording and Accessibility permissions. Target-app approval is a separate product step, so do not treat the system permissions as blanket approval for every app.

Is Codex Computer Use the same as OpenAI API computer use?

No. Codex Computer Use in the app is a supervised desktop feature. API computer use is a developer-owned harness through the Responses API, where your application executes actions and sends observations back to the model.

Can Codex use signed-in websites?

It may be able to interact with a signed-in browser surface, which is exactly why you should be careful. A website action in your session may count as your action. Keep sensitive account actions, payments, deletions, permission changes, and consent flows human-led.

What should I try first?

Start with an observational task in a low-risk app: ask Codex to inspect a visible state, click only one harmless control if needed, and stop before making changes. Move to reversible actions only after the permission flow and target approval behavior are clear.