OpenAI Codex does not have one universal daily token limit that answers every case. As of April 21, 2026, the useful answer depends on which Codex contract you are using: included ChatGPT plan usage, a Business or Enterprise credit setup, or an API key.

That split matters because OpenAI now describes Codex limits in several different units. Plus and Business show five-hour windows for local messages, cloud tasks, and code reviews. Pro is split into Pro 5x and Pro 20x tiers, with a temporary Pro promotion running through May 31, 2026. API-key usage is not an included-plan quota at all; it is usage-based API billing with separate API rate limits.

So the first move is simple: open the Codex usage dashboard or run /status during an active CLI session, confirm which branch you are on, and only then compare numbers. A copied quota screenshot is less useful than knowing whether you are looking at plan windows, workspace credits, or API billing.

Evidence note: OpenAI's Codex pricing page, Codex authentication page, and API rate-limit documentation were rechecked on April 21, 2026.

Start Here: Which Codex Limit Surface Applies?

The phrase "Codex usage limits" sounds like one question, but there are at least three practical surfaces.

| If you use | What governs the limit | First surface to check | What changes after pressure starts |
|---|---|---|---|
| ChatGPT Plus, Pro, or included Business usage | Plan windows by model and activity | Codex usage dashboard and /status | You can use credits, switch model mix, or move extra local work to API billing |
| Business, Enterprise, or Edu workspace credits | Workspace contract, seat type, and credit rules | Workspace admin contract plus current Codex pricing | Flexible-pricing workspaces can buy more workspace credits |
| API key | API pricing, organization / project limits, and model-specific rate limits | OpenAI Platform limits and API pricing | You are already usage-based; the job becomes throughput and spend control |

For the larger product context, use our OpenAI Codex March 2026 guide. The operational job here is narrower: identify the limit contract, check the right surface, and choose the next move.

Current Codex Usage Limits by Plan

OpenAI's current Codex pricing page does not publish one daily token cap. It publishes plan tables by model and activity. The key snapshot on April 21, 2026 is:

| Plan route | Local messages / 5h | Cloud tasks / 5h | Code reviews / 5h | Important caveat |
|---|---|---|---|---|
| Plus | GPT-5.4: 20-100; GPT-5.4-mini: 60-350; GPT-5.3-Codex: 30-150 | GPT-5.3-Codex: 10-60 | GPT-5.3-Codex: 20-50 | Local and cloud usage share a five-hour window; additional weekly limits may apply |
| Pro 5x | GPT-5.4: 100-500; GPT-5.4-mini: 300-1750; GPT-5.3-Codex: 150-750 | GPT-5.3-Codex: 50-300 | GPT-5.3-Codex: 100-250 | Pro $100 receives 2x the shown usage through May 31, 2026 |
| Pro 20x | GPT-5.4: 400-2000; GPT-5.4-mini: 1200-7000; GPT-5.3-Codex: 600-3000 | GPT-5.3-Codex: 200-1200 | GPT-5.3-Codex: 400-1000 | Pro $200 has an additional temporary boost through May 31, 2026 |
| Business included usage | GPT-5.4: 20-100; GPT-5.4-mini: 60-350; GPT-5.3-Codex: 30-150 | GPT-5.3-Codex: 10-60 | GPT-5.3-Codex: 20-50 | Usage-based seats and flexible pricing can change the team contract |
| API Key | Usage-based | Not available | Not available | API key supports local Codex use, not cloud review or Slack-style cloud features |

There are two reader traps in that table.

First, the ranges are not a promise that every session lands at the high end. OpenAI says effective Codex usage depends on the model, task size, task complexity, and whether the work runs locally or in the cloud. A short local edit on GPT-5.4-mini and a long cloud task on GPT-5.3-Codex are not the same unit of work.

Second, "code reviews" in this table means GitHub-based Codex review usage, such as tagging Codex on a pull request or enabling automatic reviews. Review-like work you run locally still counts toward general usage.

The practical reading is: Plus and Business currently share the same included-usage shape for most listed features, Pro is split into two explicit tiers, and the API-key branch is not a subscriber quota.
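The Pro rows in the table are exact 5x and 20x multiples of the Plus row, which makes the tier names easy to sanity-check. The sketch below reproduces that arithmetic in Python. The dictionary keys and the `scaled_range` helper are illustrative names, not anything OpenAI publishes, and the flat-multiplier reading is an assumption that happens to match the April 21, 2026 snapshot. It does not model the temporary Pro promotion or any weekly limits.

```python
# Plus ranges per five-hour window, copied from the plan table.
# Keys are (activity, model); the key names are illustrative.
PLUS_RANGES = {
    ("local", "GPT-5.4"): (20, 100),
    ("local", "GPT-5.4-mini"): (60, 350),
    ("local", "GPT-5.3-Codex"): (30, 150),
    ("cloud", "GPT-5.3-Codex"): (10, 60),
    ("review", "GPT-5.3-Codex"): (20, 50),
}

# Assumption: each tier is a flat multiple of the Plus row. The published
# rows are consistent with this, but the promotion is not modeled here.
TIER_MULTIPLIER = {"Plus": 1, "Pro 5x": 5, "Pro 20x": 20}


def scaled_range(tier: str, activity: str, model: str) -> tuple:
    """Return the (low, high) range for a tier by scaling the Plus row."""
    low, high = PLUS_RANGES[(activity, model)]
    factor = TIER_MULTIPLIER[tier]
    return low * factor, high * factor


if __name__ == "__main__":
    for tier in TIER_MULTIPLIER:
        print(tier, scaled_range(tier, "local", "GPT-5.4-mini"))
```

Treat the output as a cross-check on the table, not as a forecast of what a given session will get.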

Why Your Actual Codex Headroom Can Feel Different

If two people on the same plan report different Codex limits, both can be telling the truth. The published ranges are averages across work types; a Codex message is not a uniform unit of consumption.

The largest variables are:

- Model choice: GPT-5.4-mini extends local-message headroom compared with heavier models, which is why OpenAI recommends it for routine tasks.

- Task size and context: larger repositories, long-running tasks, and sessions that require more retained context use more of the allowance per message.

- Local versus cloud: local messages and cloud tasks share the five-hour window on the listed plans, but they are not weighted equally. On Plus with GPT-5.3-Codex, the table allows 30-150 local messages versus 10-60 cloud tasks.

- Speed and image generation: faster speed configurations consume credits faster, and Codex image generation uses included limits more quickly than text-only work.

- Prompt and tool overhead: large AGENTS.md files and unnecessary MCP servers add context that can reduce how far a limit lasts.

That is also why the answer should not be "what is my daily token limit?" The more reliable question is "which work type is burning my current window, and can I move the routine parts to a cheaper model or a different billing branch?"

Credits, Business Seats, and the Token-Based Shift

Credits are the overflow unit that lets you continue after included usage runs out. For Plus and Pro, OpenAI points to additional credits. For Business, Edu, and Enterprise plans with flexible pricing, OpenAI points to workspace credits.

The confusing part is that team pricing is also moving toward token-based accounting. OpenAI's current Codex pricing page says that, as of April 2, pricing is moving to API token-based rates for new and existing Business customers and new Enterprise customers. Under that model, credits are consumed per million input tokens, cached input tokens, and output tokens.

The current token-credit snapshot includes:

| Model | Input credits / 1M tokens | Cached input credits / 1M tokens | Output credits / 1M tokens |
|---|---|---|---|
| GPT-5.4 | 62.50 | 6.250 | 375 |
| GPT-5.4-mini | 18.75 | 1.875 | 113 |
| GPT-5.3-Codex | 43.75 | 4.375 | 350 |
| GPT-5.2 | 43.75 | 4.375 | 350 |
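To get a feel for what those rates mean for a single task, the per-million figures can be turned into a rough credit estimate. The Python sketch below uses the rates from the snapshot above. The function name and the token counts in the example are hypothetical, and it assumes uncached input, cached input, and output tokens are counted separately and simply summed; actual workspace billing may round or group usage differently.

```python
# Credits per 1M tokens: (input, cached input, output), from the snapshot.
RATES = {
    "GPT-5.4": (62.50, 6.250, 375.0),
    "GPT-5.4-mini": (18.75, 1.875, 113.0),
    "GPT-5.3-Codex": (43.75, 4.375, 350.0),
    "GPT-5.2": (43.75, 4.375, 350.0),
}


def estimate_credits(model: str, input_tokens: int,
                     cached_input_tokens: int, output_tokens: int) -> float:
    """Rough credit estimate for one task.

    Assumption: input_tokens excludes cached tokens, and the three
    buckets are billed independently at the per-million rates.
    """
    in_rate, cached_rate, out_rate = RATES[model]
    weighted = (
        input_tokens * in_rate
        + cached_input_tokens * cached_rate
        + output_tokens * out_rate
    )
    return weighted / 1_000_000


if __name__ == "__main__":
    # Hypothetical task: 200k fresh input, 800k cached input, 50k output.
    print(estimate_credits("GPT-5.4-mini", 200_000, 800_000, 50_000))
```

For that hypothetical task on GPT-5.4-mini the estimate comes to about 10.9 credits, with output tokens contributing slightly more than half of it even though they are the smallest bucket.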

That does not mean every Codex user is now on token metering. OpenAI also says other plan types should continue to use the previous message-based rate card until migration. Enterprise and Edu users on flexible pricing have no fixed rate limits because usage scales with credits, while Enterprise and Edu plans without flexible pricing have Plus-like per-seat limits for most features.

So do not flatten the team story into "Business is unlimited" or "Codex is always token-metered." The correct statement is narrower: team workspaces can be included-usage, flexible-credit, or usage-based depending on the plan and seat setup.

What Changes with an API Key

The API-key route is useful, but it is not a way to make ChatGPT plan limits disappear. It is a different contract.

OpenAI's Codex pricing page describes API Key access as Codex in the CLI, SDK, or IDE extension. It also says API Key usage does not include cloud-based features such as GitHub code review or Slack integration, and that new Codex models can arrive later on the API-key route than on ChatGPT plan access.

For the API itself, rate limits are measured in multiple ways: RPM, RPD, TPM, TPD, and IPM. Those limits can apply at the organization or project level, can vary by model, and can interact with monthly spend caps. In other words, API usage is not one daily token number either. It is throughput, billing, and account-limit management.
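Because RPM and TPM apply at the same time, a request can be blocked by either one. The Python sketch below is a minimal client-side guard that tracks both over a rolling 60-second window. It is an illustration only: the class name, the limit values, and the rolling-window accounting are assumptions, and OpenAI's server-side enforcement may count differently. The real limits for an organization or project are the ones shown on the OpenAI Platform limits page.

```python
import time
from collections import deque


class MinuteWindow:
    """Client-side guard for requests-per-minute and tokens-per-minute.

    Hypothetical helper: it only mirrors limits locally and does not
    query the API for the account's real limits.
    """

    def __init__(self, rpm_limit: int, tpm_limit: int, clock=time.monotonic):
        self.rpm_limit = rpm_limit
        self.tpm_limit = tpm_limit
        self.clock = clock
        self.events = deque()  # (timestamp, tokens) per accepted request

    def _evict(self, now: float) -> None:
        # Drop requests that are 60 seconds old or older.
        while self.events and now - self.events[0][0] >= 60:
            self.events.popleft()

    def try_acquire(self, tokens: int) -> bool:
        """Record the request and return True if both limits allow it."""
        now = self.clock()
        self._evict(now)
        used_tokens = sum(t for _, t in self.events)
        if len(self.events) + 1 > self.rpm_limit:
            return False  # request-count pressure
        if used_tokens + tokens > self.tpm_limit:
            return False  # token-throughput pressure
        self.events.append((now, tokens))
        return True


if __name__ == "__main__":
    window = MinuteWindow(rpm_limit=2, tpm_limit=1000)  # placeholder limits
    print(window.try_acquire(400), window.try_acquire(400), window.try_acquire(100))
```

A guard like this only smooths out local bursts. Daily limits (RPD, TPD), image limits (IPM), and spend caps would need their own tracking.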

If your next question is how to start the API side cheaply and legitimately, use our OpenAI API key free trial guide. For Codex specifically, the API key makes most sense when you need extra local automation, CI-style usage, or clearer usage-based economics.

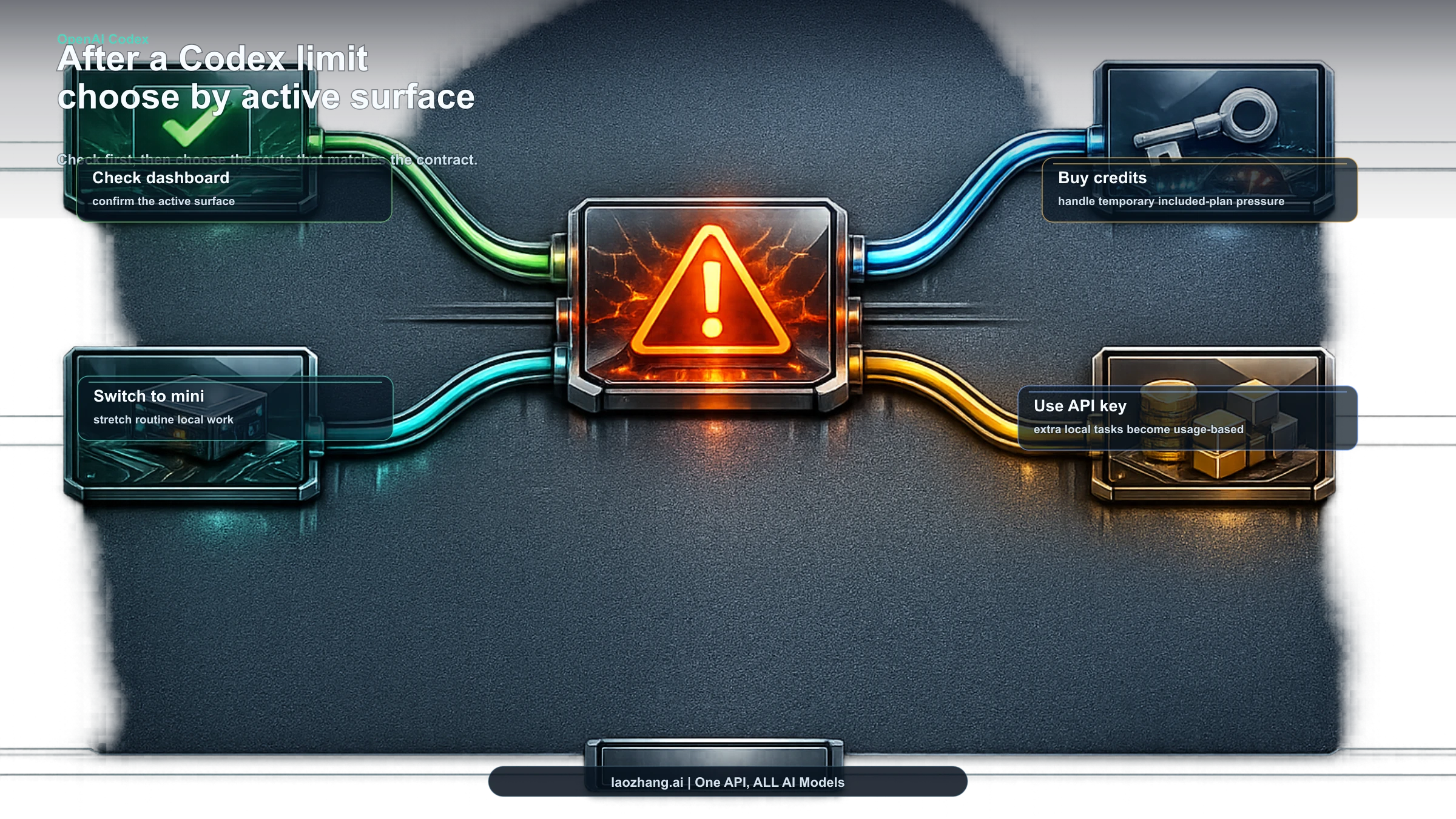

What To Do After You Hit a Codex Limit

Use this order before upgrading blindly.

- Check the official surface. Use the Codex usage dashboard first. During an active CLI session, run /status to see remaining limits.

- Switch routine work to GPT-5.4-mini. OpenAI says mini can extend local-message usage by roughly 2.5x to 3.3x depending on what you switch from.

- Reduce prompt overhead. Tighten prompts, reduce large AGENTS.md context where possible, and disable MCP servers you do not need for the task.

- Use credits when the interruption is temporary. Plus and Pro can buy additional credits; flexible workspaces can buy workspace credits.

- Use API key billing for extra local tasks. This is useful when the work fits usage-based economics better than more included-plan headroom.

The right move depends on the branch. A Plus user who runs out during a heavy coding session, a Business admin managing workspace credits, and an API user hitting TPM pressure are not solving the same problem.
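The branch-dependent ordering can be written down as a small lookup, which is sometimes handy in team runbooks. The Python sketch below is one way to encode it. The `Branch` enum and `next_moves` function are hypothetical names, and applying the model-switch and prompt-overhead steps to workspace seats is an assumption layered on top of the checklist above.

```python
from enum import Enum


class Branch(Enum):
    PLAN = "included ChatGPT plan usage"
    WORKSPACE = "workspace credits"
    API_KEY = "API key"


def next_moves(branch: Branch) -> list:
    """Return the ordered moves for a branch, paraphrasing the checklist."""
    if branch is Branch.API_KEY:
        # Already usage-based: the job is throughput and spend control.
        return [
            "Check OpenAI Platform limits and API pricing",
            "Manage throughput and spend",
        ]
    moves = [
        "Check the Codex usage dashboard or run /status",
        "Switch routine work to GPT-5.4-mini",
        "Reduce prompt overhead (AGENTS.md size, unused MCP servers)",
    ]
    if branch is Branch.PLAN:
        moves.append("Buy additional credits if the interruption is temporary")
        moves.append("Move extra local tasks to API key billing")
    else:
        moves.append("Buy workspace credits (flexible-pricing workspaces)")
    return moves


if __name__ == "__main__":
    for branch in Branch:
        print(branch.value, "->", next_moves(branch))
```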

FAQ

Does OpenAI Codex have a daily token limit?

Not one universal daily token limit. Current Codex usage is described through plan windows, credits, or API rate limits depending on the route.

Are Codex Plus and Pro limits daily?

No. The current official Codex table is built around five-hour windows shared by local and cloud usage, and additional weekly limits may apply. Pro is now split into Pro 5x and Pro 20x.

Is Business the same as Plus?

The current included-usage Business table matches Plus for the listed usage ranges, but Business can also involve standard seats, usage-based seats, flexible pricing, and workspace credits. Check the workspace contract before assuming the table is the whole answer.

What is the Pro promo?

As of April 21, 2026, OpenAI says Pro $100 receives 2x the shown Codex usage through May 31, 2026. Pro $200 also has a temporary higher five-hour Codex limit through May 31, 2026.

Does API-key Codex use the same limits as ChatGPT?

No. API-key Codex is usage-based and supports local CLI, SDK, or IDE extension use. It does not include cloud-based Codex features like GitHub code review or Slack integration.

Where should I check current remaining usage?

Use the Codex usage dashboard for plan limits. In the CLI, use /status during an active session.

Bottom line: Codex usage limits are not a single-number quota story. Identify the contract first: plan window, workspace credit, or API key.