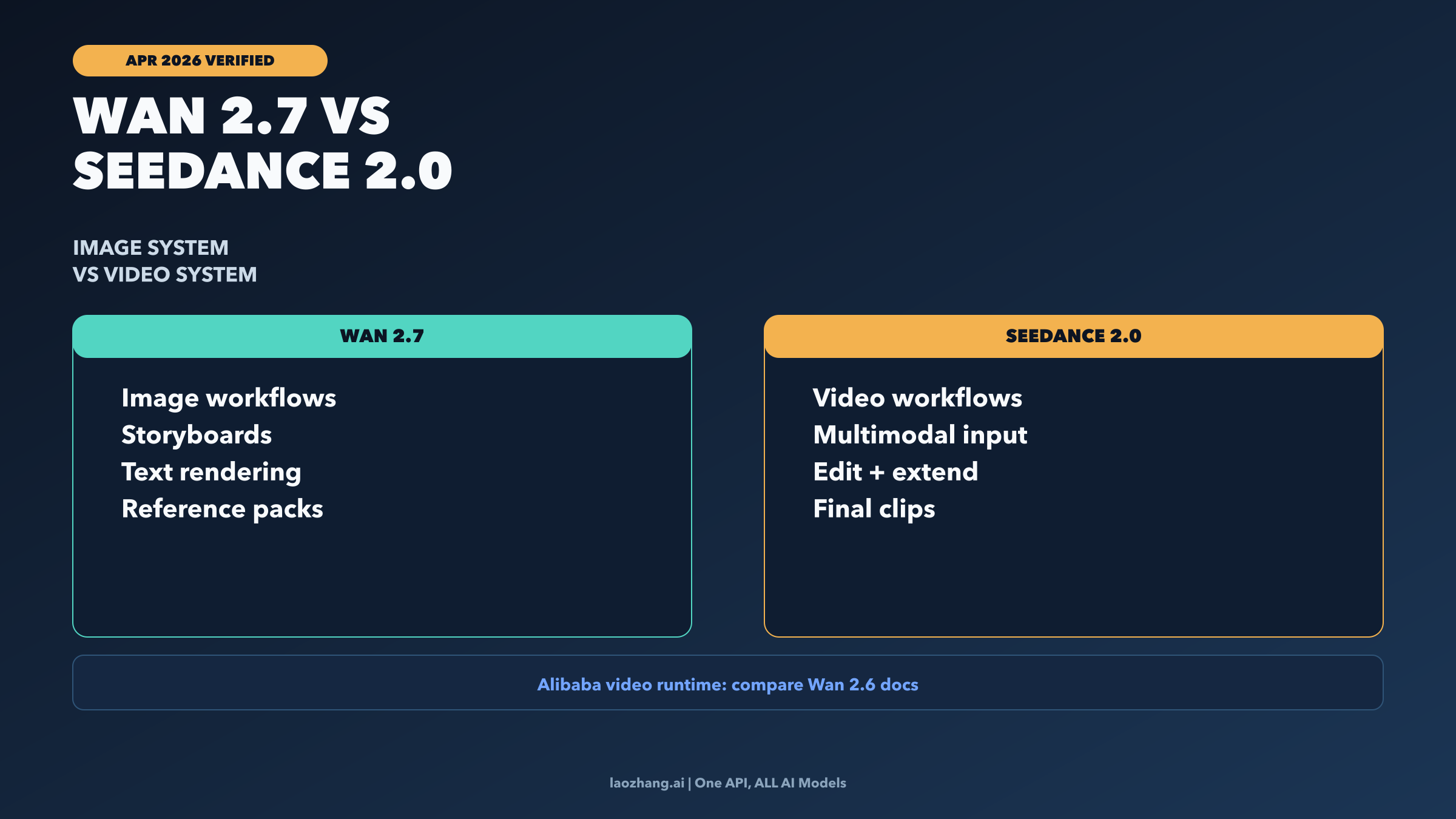

Wan 2.7 and Seedance 2.0 are not direct peers in their current official forms. Wan 2.7 is Alibaba's image-first release. Seedance 2.0 is ByteDance's multimodal audio-video system. If you need storyboards, brand-accurate reference packs, typography-heavy stills, or image ideation that stays visually controlled, start with Wan 2.7. If you need final video generation, editing, or extension, start with Seedance 2.0. If what you really mean is "Alibaba's current official video stack versus Seedance 2.0," the cleaner comparison is Wan 2.6 video versus Seedance 2.0 beta.

All freshness-sensitive facts below were rechecked against official Alibaba Cloud, ByteDance Seed, and Volcengine documentation on April 2, 2026. That matters here because search results tend to flatten very different product layers into one misleading benchmark.

TL;DR

| If this is your real deliverable | Best pick | Why it wins | Main catch |
|---|---|---|---|
| Storyboards, reference packs, text-heavy stills, or upstream visual ideation | Wan 2.7 | Alibaba's current official Wan 2.7 launch is image-first and optimized for long text, multi-image references, and high-control still generation | Do not treat it as the same thing as Alibaba's currently documented public video runtime |
| Final multimodal video generation, editing, and extension | Seedance 2.0 | ByteDance's current official docs position Seedance 2.0 as the video model in this pair, with text, image, video, and audio inputs | Public-beta API access is still enterprise-scoped rather than universal self-serve |
| Choosing the clearer currently documented runtime contract | Wan 2.6 video vs Seedance 2.0 beta | Alibaba's public Model Studio docs are clearer today on Wan 2.6 video pricing and runtime rules than on Wan 2.7 video naming | It is a different comparison from Wan 2.7 image versus Seedance 2.0 |
| Building one pipeline from ideation to final clip | Use both | Wan 2.7 is strong upstream for keyframes and style packs; Seedance 2.0 is stronger downstream for the actual finished clip | You need to manage the handoff intentionally instead of forcing one model to do both jobs |

Why Wan 2.7 and Seedance 2.0 are not a clean one-to-one benchmark

The first correction is simple: these products sit on different output layers in the official material that is publicly available today. Alibaba's April 2026 press release introduces Wan2.7-Image and Wan2.7-Image-Pro as image-generation and image-editing releases. The same announcement emphasizes long-text rendering, personalized image generation, up to nine reference images, up to twelve generated images at once, and 4K output on Wan2.7-Image-Pro. That is an image workflow story.

ByteDance's official Seedance 2.0 launch and Volcengine's current Seedance 2.0 tutorial describe something different: a multimodal audio-video model that accepts text, images, videos, and audio as inputs, generates clips between four and fifteen seconds, and supports video editing or extension. That is a video workflow story.

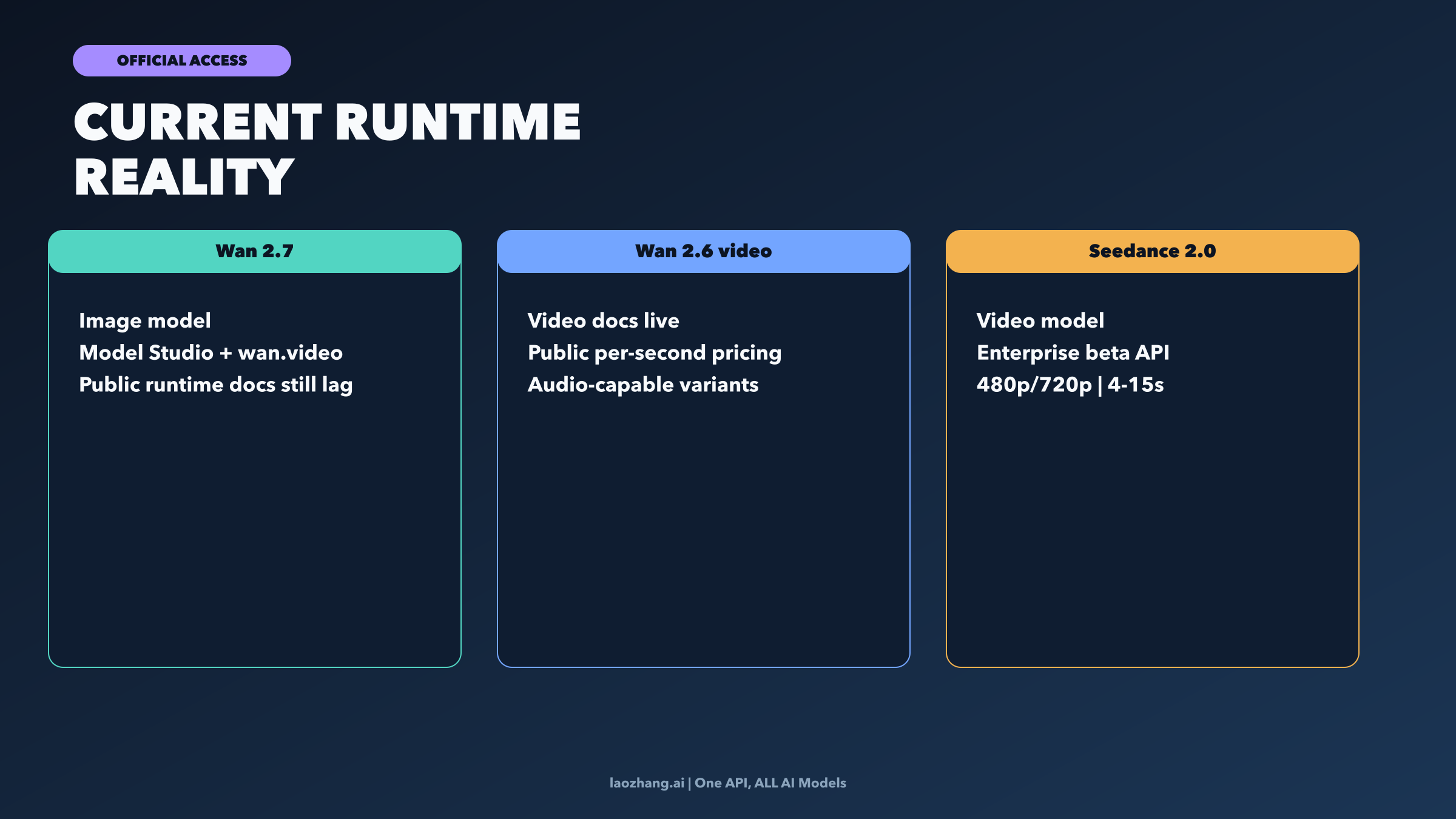

Why does the confusion persist? Because "Wan" as a brand now spans more than one generation and more than one surface. Alibaba's press release says Wan 2.7 is accessible through Model Studio and wan.video, but the current public Alibaba Model Studio model page still documents the clearest public video runtime under the Wan 2.6 family, not under a standalone Wan 2.7 video family. So when people ask "Wan 2.7 vs Seedance 2.0," they are often mixing a current image release with a current video system and then asking for a single winner. That is the wrong question before you even reach quality, pricing, or access.

The practical consequence is that the decision should begin with the deliverable, not with the brand name. If your output is a still image or a set of still assets that must stay visually controlled, you are choosing an image layer. If your output is the finished video clip, you are choosing a video layer. Only after that split does the comparison become useful.
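The deliverable-first split can be sketched as a small routing function. This is a minimal illustration, not a vendor API: the names `Deliverable`, `ROUTES`, and `route` are hypothetical, and the table encodes only this article's recommendations.

```python
from enum import Enum


class Deliverable(Enum):
    STILL_ASSETS = "still_assets"      # storyboards, reference packs, text-heavy stills
    FINISHED_VIDEO = "finished_video"  # generated, edited, or extended clips
    FULL_PIPELINE = "full_pipeline"    # ideation through to the final clip


# Hypothetical routing table mirroring this article's recommendations.
ROUTES = {
    Deliverable.STILL_ASSETS: ["Wan 2.7"],
    Deliverable.FINISHED_VIDEO: ["Seedance 2.0"],
    Deliverable.FULL_PIPELINE: ["Wan 2.7", "Seedance 2.0"],
}


def route(deliverable: Deliverable) -> list[str]:
    """Return the model(s) to start with, in pipeline order."""
    return list(ROUTES[deliverable])
```

Calling `route(Deliverable.FULL_PIPELINE)` returns both models in upstream-to-downstream order, which matches the "use both" row in the TL;DR table.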

Where Wan 2.7 is the stronger pick

Wan 2.7 is the stronger choice when your real job is still-image control, not final video output. Alibaba's launch materials focus on exactly the workflows where that matters: text-heavy visual generation, storyboard-style multi-image creation, color-accurate commercial imagery, and personalized image editing. Those are not side benefits. They are the core product story.

Three parts of the official Wan 2.7 contract matter most. First, Alibaba says Wan 2.7 supports up to 3,000 text tokens, which is unusually relevant for typography-heavy assets, poster variants, and other cases where most image models start to degrade once the text payload gets longer or more structured. Second, Wan 2.7 supports up to nine reference images and can generate up to twelve images at once, which makes it much more useful for storyboards, style packs, product-scene iteration, and campaign visual exploration than a one-shot "make me one pretty image" model. Third, Wan2.7-Image-Pro supports 4K output, which gives teams a higher-confidence path for design review, e-commerce detail, and downstream cropping.
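To make the twelve-images-at-once figure concrete, here is a rough planning sketch for splitting a storyboard into requests. It assumes only the limit stated in Alibaba's launch material; `plan_batches` is a hypothetical helper, and the actual request parameter that controls batch size is not described in this article.

```python
# Limit quoted from Alibaba's Wan 2.7 launch material (per this article).
MAX_IMAGES_PER_REQUEST = 12


def plan_batches(total_images: int, per_request: int = MAX_IMAGES_PER_REQUEST) -> list[int]:
    """Split a target image count into per-request batch sizes."""
    if total_images < 0:
        raise ValueError("total_images must be non-negative")
    if not 1 <= per_request <= MAX_IMAGES_PER_REQUEST:
        raise ValueError(f"per_request must be between 1 and {MAX_IMAGES_PER_REQUEST}")
    full, rest = divmod(total_images, per_request)
    # Full batches first, then one smaller batch for any remainder.
    return [per_request] * full + ([rest] if rest else [])
```

Under these assumptions, a 30-panel storyboard needs three requests rather than thirty, which is the practical difference between a batch-capable image model and a one-shot one.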

That is why Wan 2.7 makes sense upstream in a content pipeline. If you are deciding on product composition, locking a visual identity, testing typography, or generating the still references that will later guide video work, Wan 2.7 is the better fit than Seedance 2.0. Seedance can accept image references, but its public contract is about turning multimodal inputs into video. Wan 2.7 is where you should stay when the asset itself is the still image.

This is also the right choice when your team is still trying to decide what the video should look like before you pay the cost of video generation. A strong reference pack reduces wasted video iterations. In that sense, Wan 2.7 can be more valuable before the video stage than a better video model would be after a weak visual brief. If your bottleneck is still the frame, not the motion, solve the frame first. Our broader best AI image model guide is the better next read if you are evaluating that upstream layer more generally.

Where Seedance 2.0 is the stronger pick

Seedance 2.0 is the stronger choice when the deliverable is actual video. The current Volcengine tutorial exposes the runtime shape clearly enough to make that conclusion practical, not hypothetical. The documented Seedance 2.0 beta contract supports text plus up to nine images, up to three video clips, and up to three audio files in one request. It allows output durations between 4 and 15 seconds at 480p or 720p, and it covers both generation and edit-style flows such as extending or modifying video with multimodal inputs.
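The documented limits lend themselves to a pre-flight check before a request is sent. The sketch below is an assumption-laden illustration: `SeedanceJob` and `check_seedance_job` are hypothetical names, the field layout is not the Volcengine request schema, and only the numeric limits come from the tutorial as summarized above.

```python
from dataclasses import dataclass

# Limits as summarized in this article from the Volcengine tutorial.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO_FILES = 3
MIN_SECONDS, MAX_SECONDS = 4, 15
RESOLUTIONS = {"480p", "720p"}


@dataclass
class SeedanceJob:
    """Hypothetical description of one request (not the real API schema)."""
    images: int = 0
    videos: int = 0
    audio_files: int = 0
    duration_seconds: int = 5
    resolution: str = "720p"


def check_seedance_job(job: SeedanceJob) -> list[str]:
    """Return a list of limit violations; an empty list means the job fits."""
    problems = []
    if job.images > MAX_IMAGES:
        problems.append(f"too many images: {job.images} > {MAX_IMAGES}")
    if job.videos > MAX_VIDEOS:
        problems.append(f"too many video clips: {job.videos} > {MAX_VIDEOS}")
    if job.audio_files > MAX_AUDIO_FILES:
        problems.append(f"too many audio files: {job.audio_files} > {MAX_AUDIO_FILES}")
    if not MIN_SECONDS <= job.duration_seconds <= MAX_SECONDS:
        problems.append(f"duration must be {MIN_SECONDS}-{MAX_SECONDS} seconds")
    if job.resolution not in RESOLUTIONS:
        problems.append(f"unsupported resolution: {job.resolution}")
    return problems
```

Returning a list of problems rather than raising on the first one lets a team see every reason a storyboard-to-video handoff would be rejected in a single pass.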

That matters because Seedance 2.0 is not just "a model that also accepts references." Its strength is that the references live inside a video-native workflow. You can guide motion, rhythm, scene continuation, and audio-aware generation from the start rather than trying to push an image-centric product into a video role it does not publicly document as its main lane. If your decision criteria include "Can I take multiple images, add motion guidance, add audio guidance, and ship an actual clip?" Seedance 2.0 is simply closer to the task.

The access boundary still matters. Volcengine's current docs position Seedance 2.0 and Seedance 2.0 Fast as public beta for enterprise users, not as a fully frictionless self-serve global runtime. That is an important limitation, but it does not change what the product is. The right answer is therefore that Seedance 2.0 is the better model for final video work in this pair, while the ease of access depends on whether your organization qualifies for the present rollout.

Seedance 2.0 is especially compelling when the video itself is the unit of value: social clips, product demos, camera-guided promo videos, multimodal edits, or short audio-aware narrative shots. If your task begins with "I need the finished clip," not "I need better upstream references," Seedance 2.0 is the side of this comparison that actually matches the problem. If you want a broader creator-market comparison beyond this specific pair, our best AI image-to-video generator guide is the better shortlist.

If runtime and API clarity matter, compare Wan 2.6 video with Seedance 2.0 beta

Technical buyers usually care about a different question from creators. They do not just want to know which product looks more exciting. They want to know what is officially documented, callable, priced, and supportable today. In that lane, the right comparison is not Wan 2.7 image versus Seedance 2.0. It is Wan 2.6 video versus Seedance 2.0 beta.

Alibaba's public Model Studio docs are stronger today on the runtime-contract side. They expose clear Wan 2.6 video model families such as wan2.6-t2v and wan2.6-i2v-flash, plus public per-second prices. On the US contract shown in the April 2, 2026 docs, wan2.6-t2v-us is listed at $0.10 per second for 720p and $0.15 per second for 1080p. The documented wan2.6-i2v-flash contract is even more explicit about audio versus no-audio pricing. That level of public clarity is useful even if your eventual production stack may change.
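Per-second pricing makes clip cost a one-line multiplication. The sketch below uses only the two `wan2.6-t2v-us` rates quoted above; the function name is hypothetical, and it deliberately ignores anything the article does not state, such as minimum billing increments, rounding rules, or taxes.

```python
from decimal import Decimal

# USD per second for wan2.6-t2v-us, as quoted from the April 2, 2026 docs.
WAN26_T2V_US_USD_PER_SECOND = {
    "720p": Decimal("0.10"),
    "1080p": Decimal("0.15"),
}


def wan26_t2v_cost_usd(seconds: int, resolution: str) -> Decimal:
    """Estimate clip cost as rate x duration (no minimums or rounding applied)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    if resolution not in WAN26_T2V_US_USD_PER_SECOND:
        raise ValueError(f"no quoted rate for resolution: {resolution}")
    return WAN26_T2V_US_USD_PER_SECOND[resolution] * seconds
```

`Decimal` is used instead of `float` so that currency arithmetic stays exact; a 10-second 1080p clip comes out at exactly 1.50 USD under these rates.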

Seedance 2.0's current official contract tells a different story. The tutorial page now exposes real model IDs, output ranges, and multimodal limits, which is better than the older "console only" narrative. But the pricing page still reads more like a set of scenario-based examples than a single universal list price. Official examples currently show a 5-second 16:9 clip at 480p priced at 2.31 RMB for doubao-seedance-2.0 and 1.86 RMB for doubao-seedance-2.0-fast, while a 5-second 16:9 clip at 720p is shown at 4.97 RMB and 4.00 RMB respectively. That is usable information, but it is a looser public pricing surface than Alibaba's published per-second Wan 2.6 video rows.
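The scenario examples can be turned into implied per-second figures for rough budgeting. This is plain arithmetic on the four quoted examples, not an official rate card: the function name is hypothetical, and the result may not extrapolate linearly to other durations, aspect ratios, or input mixes.

```python
from decimal import Decimal

EXAMPLE_SECONDS = 5  # every quoted example is a 5-second 16:9 clip

# (model ID, resolution) -> example price in RMB, as quoted in this article.
EXAMPLE_PRICES_RMB = {
    ("doubao-seedance-2.0", "480p"): Decimal("2.31"),
    ("doubao-seedance-2.0-fast", "480p"): Decimal("1.86"),
    ("doubao-seedance-2.0", "720p"): Decimal("4.97"),
    ("doubao-seedance-2.0-fast", "720p"): Decimal("4.00"),
}


def implied_rmb_per_second(model: str, resolution: str) -> Decimal:
    """Divide the quoted example price by the example duration."""
    return EXAMPLE_PRICES_RMB[(model, resolution)] / EXAMPLE_SECONDS


if __name__ == "__main__":
    for (model, resolution) in EXAMPLE_PRICES_RMB:
        rate = implied_rmb_per_second(model, resolution)
        print(f"{model} @ {resolution}: {rate} RMB/s (implied)")
```

The figures are in RMB while the Wan 2.6 rows above are in USD, so a cross-vendor comparison still needs an exchange rate that this article does not supply.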

So the routing rule is straightforward. If you are choosing by public runtime clarity, contract readability, and documented price tables, Alibaba's publicly documented video side is easier to reason about today, but the name attached to that decision is still Wan 2.6 video, not Wan 2.7 image. If you are choosing by multimodal video semantics, editability, and the stronger video-native capability story, Seedance 2.0 is more attractive, but you have to accept the current enterprise-beta access boundary and the slightly less uniform pricing surface.

If you need the broader market view of which public video APIs are actually easy to adopt right now, our best free AI video API guide is the more practical companion piece than any hype-driven vendor showdown.

Best picks by workflow and when to use both

The most useful answer is often not "pick one winner." It is "pick the right owner for each stage."

If you are a brand or creative team building campaigns, use Wan 2.7 upstream when the hard part is deciding the look: hero stills, storyboard panels, product compositions, text-heavy visuals, and the reference pack that the rest of the team will align on. Once the look is approved, use Seedance 2.0 downstream if the next job is to turn that visual language into moving video with stronger multimodal guidance.

If you are a technically minded buyer trying to choose where to integrate first, split the question in two. For image generation and controlled still assets, Wan 2.7 is the more honest answer. For current publicly documented video runtime comparison, route through Wan 2.6 video and Seedance 2.0 beta. For finished video creation, Seedance 2.0 is the better functional match. That sounds more complicated than a one-line winner, but it is also the first answer in this market that will not mislead you six minutes later.

If you are a solo creator or performance marketer, the decision can be faster. Use Wan 2.7 when you need to prepare keyframes, ad concepts, or clean reference sets before motion begins. Use Seedance 2.0 when you already know the shot and want the short clip itself. Use both when your workflow spans both layers and you do not want to sacrifice visual control upstream or multimodal motion control downstream.

That pipeline-shaped answer is the real information gain over most comparison pages. The strongest workflow is often: Wan 2.7 for ideation and reference control, then Seedance 2.0 for final clip generation or editing. The only time you should force a single-model answer is when your team truly only lives on one layer.

Frequently Asked Questions

Is Wan 2.7 better than Seedance 2.0?

Not as a universal claim. Wan 2.7 is better for image-centric work such as storyboards, reference packs, and text-heavy stills. Seedance 2.0 is better for final multimodal video generation and editing. "Better" only becomes accurate once you scope it to the workflow stage.

Does Wan 2.7 generate video?

Alibaba's April 2026 Wan 2.7 launch is image-first, and the clearest currently documented public video runtime in Alibaba Model Studio is still the Wan 2.6 family. That means "Wan 2.7 video" is not the clean public runtime contract to compare against Seedance 2.0 today. If your real question is about Alibaba's deployable video stack, compare Wan 2.6 video instead.

Which side is better for API and runtime buyers?

If you care most about public contract clarity, Alibaba's Wan 2.6 video docs are easier to reason about today because the model families and per-second pricing are spelled out more cleanly. If you care most about multimodal video capability, Seedance 2.0 is the more interesting video system, but the official access boundary remains enterprise beta.

When does it make sense to use both?

Use both when your workflow starts with visual planning and ends with an actual clip. Generate the still references, keyframes, or branded visual pack in Wan 2.7, then feed that approved direction into Seedance 2.0 for video generation, extension, or edit-style work.