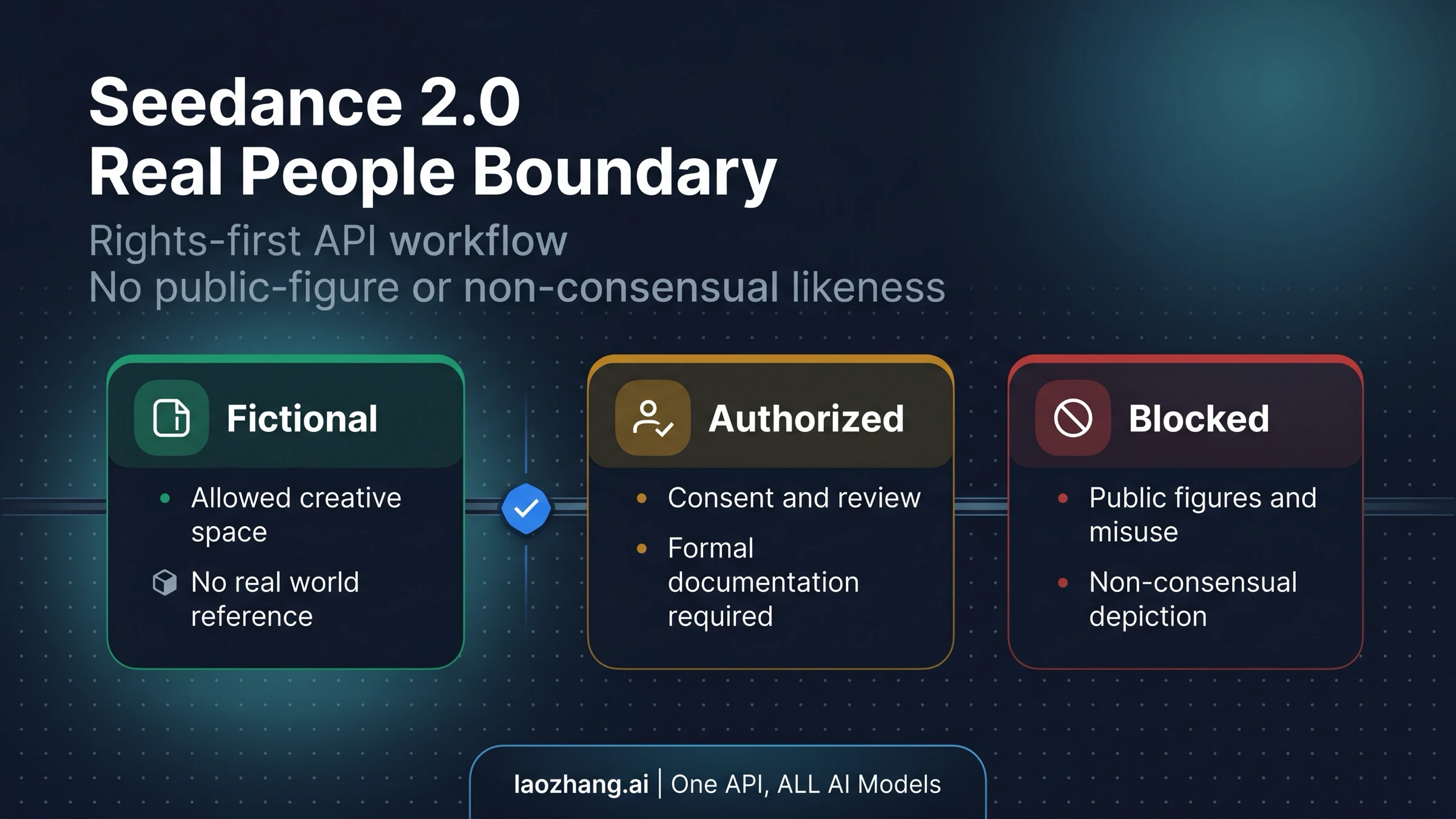

As of April 20, 2026, Seedance 2.0 API routes support people as video content and can use image, video, and audio references, but they are not an unrestricted "real person" or celebrity-likeness API. Treat every identifiable-person request as conditional: fictional humans are usually a creative job, authorized real-person references need a rights-and-consent workflow, and public-figure, impersonation, sexualized, defamatory, or non-consensual likeness jobs should be rejected before the API call.

Direct API answer

Use this production rule before you write the request.

| Request | API posture | Product action |
|---|---|---|
| Fictional or generic person in a scene | Usually a valid creative use | Keep the prompt trait-based and avoid naming or visually targeting a real person. |
| Private person with valid authorization | Conditional | Store consent, verify source media, add human review, and expect route moderation. |
| Public figure, celebrity, politician, athlete, or famous likeness | Block as a product workflow | Reject before submission; do not rewrite the prompt into a near match. |
| Impersonation, sexualized or defamatory output, non-consensual likeness, or consent-missing media | Block | Stop the job and return a policy repair message. |
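The production rule can be encoded as a small lookup that runs before any request is built. This is a minimal sketch, not part of any Seedance or BytePlus SDK: the names `RequestKind`, `Posture`, `POLICY`, and `may_submit` are hypothetical, and the assumption that a conditional job needs both consent on file and human review is one reading of the table.

```python
from enum import Enum


class Posture(Enum):
    ALLOW = "allow"              # usually a valid creative use
    CONDITIONAL = "conditional"  # needs consent, verification, and review
    BLOCK = "block"              # reject before any API call


class RequestKind(Enum):
    FICTIONAL = "fictional_or_generic_person"
    AUTHORIZED_PRIVATE = "private_person_with_authorization"
    PUBLIC_FIGURE = "public_figure_or_famous_likeness"
    ABUSE = "impersonation_sexualized_defamatory_or_non_consensual"


# Hypothetical mapping that mirrors the table; adapt it to your own policy.
POLICY = {
    RequestKind.FICTIONAL: (
        Posture.ALLOW,
        "Keep the prompt trait-based; do not name or target a real person.",
    ),
    RequestKind.AUTHORIZED_PRIVATE: (
        Posture.CONDITIONAL,
        "Store consent, verify source media, add human review.",
    ),
    RequestKind.PUBLIC_FIGURE: (
        Posture.BLOCK,
        "Reject before submission; do not rewrite into a near match.",
    ),
    RequestKind.ABUSE: (
        Posture.BLOCK,
        "Stop the job and return a policy repair message.",
    ),
}


def may_submit(kind: RequestKind,
               consent_on_file: bool = False,
               human_reviewed: bool = False) -> bool:
    """Return True only when the job is allowed to reach the provider API."""
    posture, _action = POLICY[kind]
    if posture is Posture.ALLOW:
        return True
    if posture is Posture.CONDITIONAL:
        # Assumption: conditional jobs need both consent and human review.
        return consent_on_file and human_reviewed
    return False  # BLOCK: no flag can override it
```

Keeping the block rows free of any override flag is deliberate: a blocked category should stay blocked no matter what the caller passes.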

That distinction matters because "API support" has four layers: model capability, provider acceptance, moderation outcome, and legal rights. All four have to line up before a real-person workflow is safe to ship. A model that can render faces or accept reference media settles only the first layer: the API route can still block or filter the job, and an accepted job can still expose you to rights and policy risk when the output resembles a real person.

What the official sources prove

ByteDance's Seedance 2.0 model page identifies the model family, while BytePlus' video generation API hub places video generation behind ModelArk task APIs. Those sources support the general capability claim: this is a video model route, and reference-driven video generation exists in the surrounding ModelArk documentation.

The real-person boundary comes from policy and trust documents. The BytePlus GenAI Acceptable Use Policy bars use that violates privacy or other rights, depicts a person's voice or likeness without appropriate consent or rights, impersonates people, or circumvents safety filters. The ModelArk Content Pre-filter FAQ says the public-figure protection feature can block generated images or voices that resemble public figures. The Content Pre-filter Overview also explains that filtering can intervene in prompts or outputs and that baseline safety policies remain even when configurable filtering is changed.

For data and assets, the ModelArk data authorization agreement puts consent responsibility on the customer for people appearing in processed images or videos. The BytePlus video generation model service terms add the practical production warning: other people's portraits, voices, personality rights, and reputation rights remain your responsibility, and filter circumvention is not an acceptable route.

So "Seedance 2.0 supports real people" is the wrong product claim. The safer claim is narrower: Seedance 2.0 can be part of a people-video workflow when the subject is fictional or when the identifiable person is authorized, the route accepts the job, and your product keeps rejection handling instead of bypass logic.

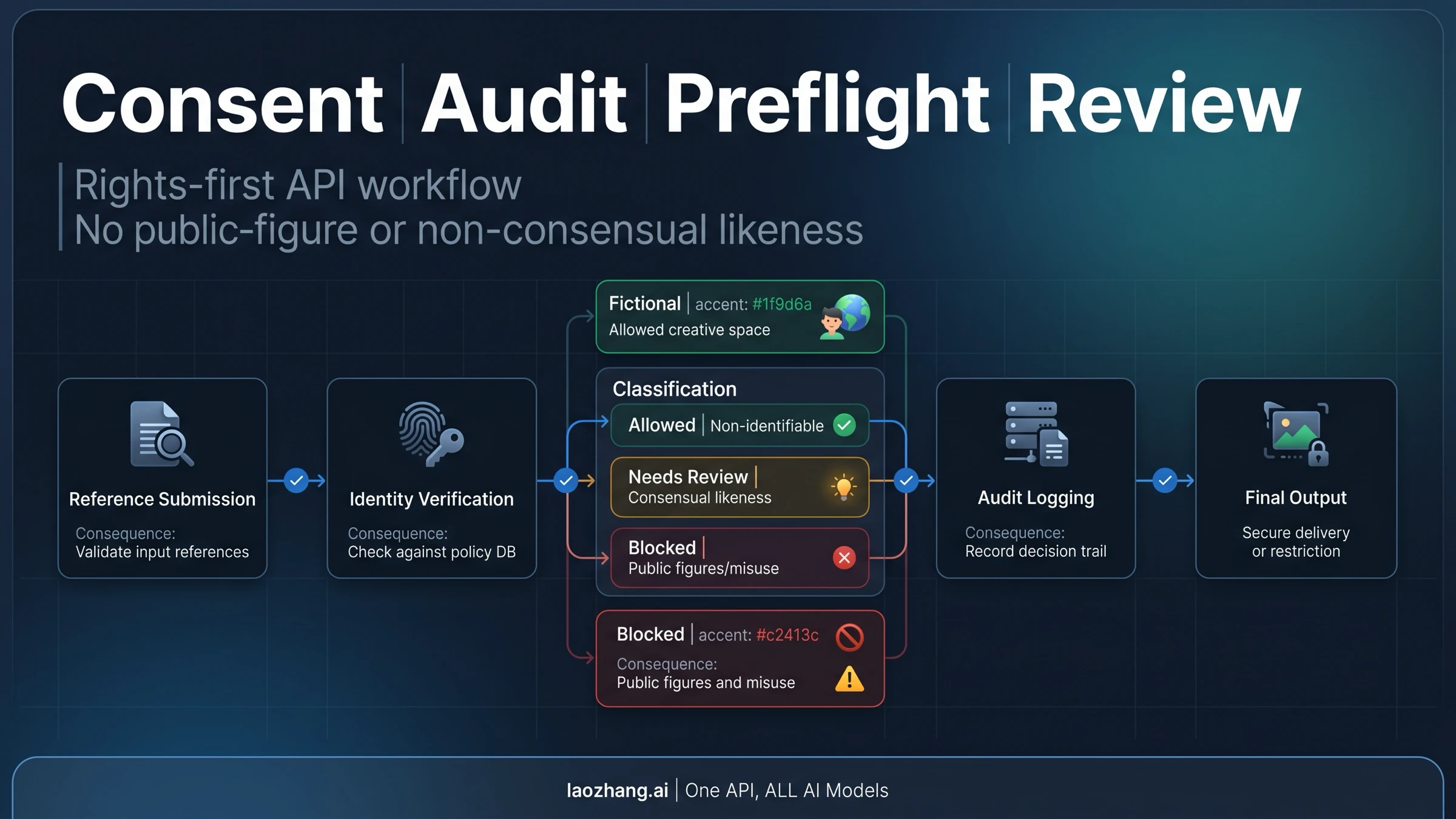

Safe API workflow for authorized people

If you have a legitimate real-person use case, design it as an authorization workflow before it becomes a generation workflow.

- Record the subject, purpose, allowed output types, expiration, and revocation path.

- Validate each reference file: who uploaded it, what it depicts, whether it contains minors or bystanders, and whether the consent covers video generation.

- Classify the job before submission as fictional, authorized real person, public figure risk, minor risk, intimate or sexual risk, political risk, or deception risk.

- Submit only jobs that pass your own preflight checks. A policy failure should return a repair message, not an automatic prompt rewrite.

- Store provider task IDs, moderation responses, final output, and reviewer notes for support and audit.
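The first and fourth steps above can be sketched as a consent record plus a preflight check that returns a repair message instead of rewriting the prompt. Everything here is illustrative: `ConsentRecord`, `covers`, and `preflight` are hypothetical names, and the field set is an assumption drawn from the list (subject, purpose, allowed output types, expiration, revocation).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    subject_id: str
    purpose: str
    allowed_output_types: frozenset
    expires_at: datetime
    revoked_at: Optional[datetime] = None  # set when the subject revokes

    def covers(self, output_type: str, at: datetime) -> bool:
        """True when consent is unrevoked, unexpired, and covers the output type."""
        if self.revoked_at is not None and at >= self.revoked_at:
            return False
        return at < self.expires_at and output_type in self.allowed_output_types


def preflight(consent: Optional[ConsentRecord],
              output_type: str,
              at: datetime) -> Optional[str]:
    """Return None when the job may be submitted, otherwise a repair message."""
    if consent is None:
        return "No consent record on file for this subject."
    if not consent.covers(output_type, at):
        return "Consent is expired, revoked, or does not cover this output type."
    return None


# Usage with made-up values: consent covering video until the end of 2026.
consent = ConsentRecord(
    subject_id="subject-001",
    purpose="internal training video",
    allowed_output_types=frozenset({"video"}),
    expires_at=datetime(2026, 12, 31, tzinfo=timezone.utc),
)
message = preflight(consent, "video", datetime(2026, 4, 20, tzinfo=timezone.utc))
```

A `None` result means the job may proceed to submission; any string is shown to the user as the repair message and the job stops there.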

At minimum, keep these records with each authorized-person job.

| Record | Why it matters |
|---|---|
| Subject and consent scope | Proves the person agreed to the exact output type, purpose, duration, and distribution path. |
| Reference asset provenance | Shows who uploaded the file, where it came from, and whether it contains minors or bystanders. |
| Route and moderation result | Separates your own preflight decision from the provider's API-level acceptance or rejection. |
| Reviewer action and final output ID | Lets support investigate complaints without reopening sensitive media by default. |
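One way to hold those four records together is a single per-job structure that stores identifiers and decisions but never the media itself. This is a sketch under assumptions: the class name, field names, and `to_audit_json` helper are hypothetical and do not come from any provider schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class AuthorizedPersonJobRecord:
    # Subject and consent scope
    subject_id: str
    consent_record_id: str
    # Reference asset provenance
    reference_asset_ids: list
    uploaded_by: str
    contains_minors_or_bystanders: bool
    # Route and moderation result, kept apart from your own preflight decision
    preflight_decision: str
    provider_route: str
    provider_task_id: Optional[str] = None
    moderation_result: Optional[str] = None
    # Reviewer action and final output ID
    reviewer_action: Optional[str] = None
    final_output_id: Optional[str] = None


def to_audit_json(record: AuthorizedPersonJobRecord) -> str:
    """Serialize IDs and decisions only; media stays in storage behind its ID."""
    return json.dumps(asdict(record), sort_keys=True)
```

Because the record carries asset and output IDs rather than files, support can read the audit trail without opening sensitive media by default.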

For implementation details such as task creation, polling, output copying, and retries, use the separate Seedance 2.0 API implementation guide. Keep the likeness boundary separate from the submit-poll-download contract.

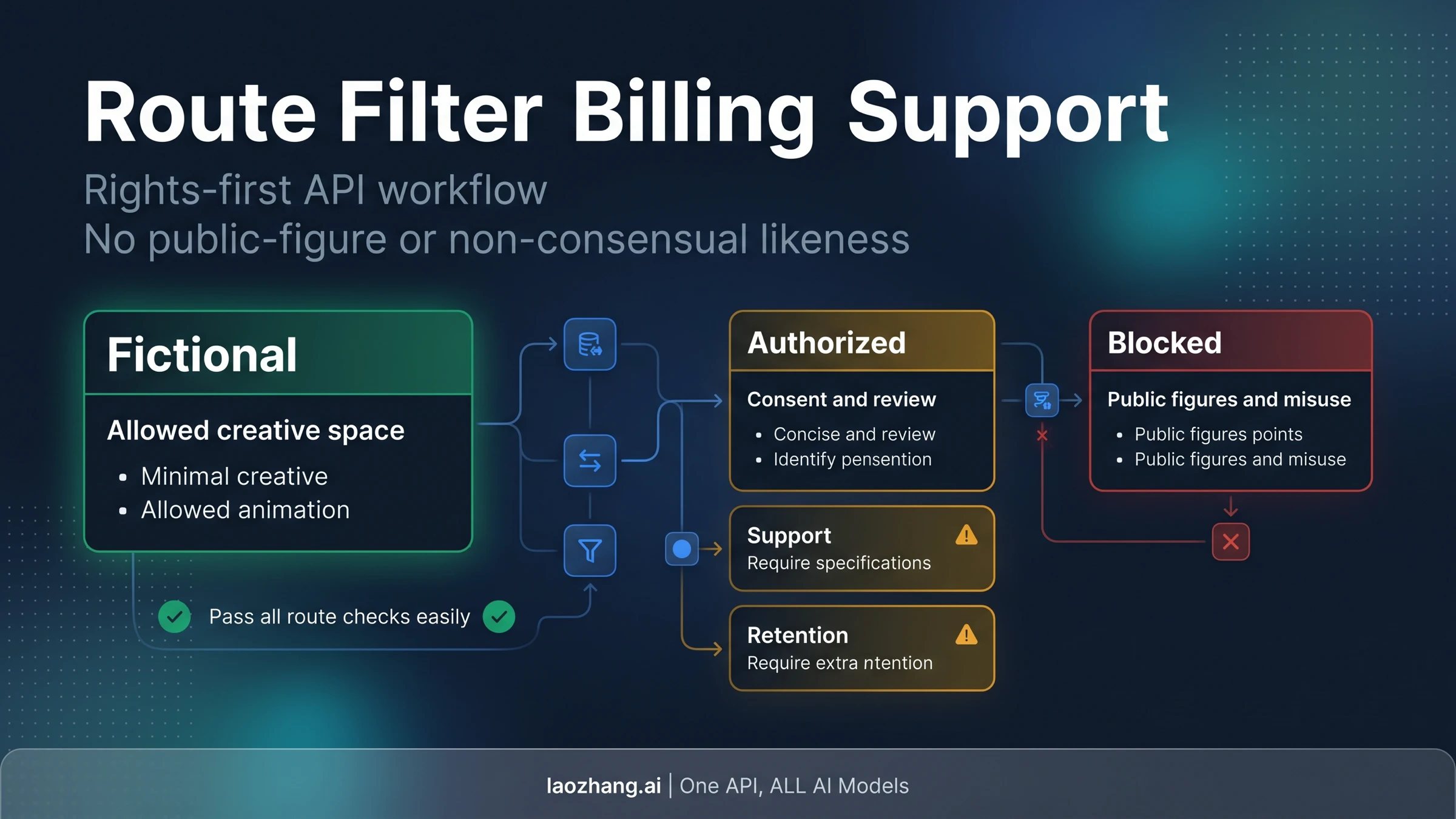

Provider route matters

Do not assume one provider's behavior proves every Seedance 2.0 route supports the same real-person workflow. BytePlus ModelArk, regional Ark routes, hosted providers, and API gateways may differ in schema, filtering, rejection messages, output retention, billing on failed jobs, and support escalation.

Before launch, test the exact route you will use:

- Does it accept the reference media type your workflow needs?

- Does it document how content filtering appears in API responses?

- Does it block public-figure resemblance, voice resemblance, or both?

- Does a rejected job bill the same way as a failed render?

- Can your support team retrieve the task ID, request ID, and moderation reason without exposing sensitive media?
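The checklist can be tracked per route so that unanswered questions stay visible until launch. The sketch below is illustrative only: `RouteProfile`, its field names, and `untested_questions` are hypothetical, and `None` is assumed to mean "not yet tested".

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class RouteProfile:
    """One profile per provider route; None means the question is untested."""
    route_name: str
    accepts_required_reference_media: Optional[bool] = None
    documents_filter_responses: Optional[bool] = None
    blocks_public_figure_resemblance: Optional[bool] = None
    blocks_voice_resemblance: Optional[bool] = None
    bills_rejected_jobs_like_failed_renders: Optional[bool] = None
    exposes_ids_and_reason_without_media: Optional[bool] = None


def untested_questions(profile: RouteProfile) -> list:
    """List the checklist fields that still have no recorded answer."""
    return [
        f.name
        for f in fields(profile)
        if f.name != "route_name" and getattr(profile, f.name) is None
    ]
```

A non-empty result from `untested_questions` is a reasonable launch blocker for that route, since an answer recorded for one provider says nothing about another.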

For route selection, use the Seedance 2.0 provider comparison. For budget questions, use the Seedance 2.0 pricing and free-vs-paid guide.

Prompt and product rules that reduce risk

Safe prompting is mostly about not creating ambiguity. For fictional humans, describe traits, setting, camera movement, wardrobe, and action without naming or visually anchoring the character to a real person. For authorized real-person workflows, use internal asset IDs and consent records rather than free-form prompts that ask the model to imitate someone.

Your product should also avoid unsafe affordances:

- No "celebrity mode", "make me look like this public figure", or face-swap presets.

- No automatic retries when a moderation or pre-filter response appears.

- No hidden prompt rewriting that turns a blocked likeness request into a near match.

- No public gallery for outputs that include authorized private people unless the release covers publication.

- No claim that API access bypasses face, voice, or public-figure filters.

The best default answer for a product manager is: Seedance 2.0 can be used for people in video, but real identifiable people require a rights-first workflow and route testing. If the use case depends on unauthorized likeness, the answer is no.

FAQ

Can Seedance 2.0 generate videos with people?

Yes. The practical boundary is not "people or no people"; it is whether the output targets a real identifiable person, a public figure, or a restricted context.

Can I use my own face or an employee reference?

Only treat that as viable when the person has given appropriate consent for the exact use, the reference media is lawfully obtained, and your route accepts the job after moderation. Keep consent and review records.

Can I generate a celebrity, politician, athlete, or public figure?

Do not build that as a product workflow. BytePlus' public-figure pre-filter, as documented, exists specifically to reduce fraud and deepfake risk, and policy language separately restricts unauthorized likeness and impersonation.

Does API access bypass face filters?

No. API access should be treated as a production surface with policy enforcement, not a way around consumer-app safety controls.

What should my app do when a job is rejected?

Persist the rejection reason, show a repair message, and stop. Retry only transient transport failures. Do not automatically modify a blocked likeness request into a near match.
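The stop-versus-retry rule can be sketched as a small decision function. The outcome categories, the `next_step` name, and the retry budget of three are assumptions for illustration; how a given route signals a moderation rejection versus a transport failure has to come from that route's own documentation.

```python
from enum import Enum


class Outcome(Enum):
    SUCCEEDED = "succeeded"
    POLICY_REJECTED = "policy_rejected"      # moderation or pre-filter response
    TRANSIENT_FAILURE = "transient_failure"  # timeouts, connection resets


class NextStep(Enum):
    DELIVER = "deliver"
    RETRY = "retry"
    STOP_WITH_REPAIR_MESSAGE = "stop_with_repair_message"
    FAIL = "fail"


MAX_TRANSPORT_RETRIES = 3  # assumed budget; tune for your route


def next_step(outcome: Outcome, attempts_so_far: int) -> NextStep:
    """Decide what to do after one submission attempt."""
    if outcome is Outcome.SUCCEEDED:
        return NextStep.DELIVER
    if outcome is Outcome.POLICY_REJECTED:
        # Never retry or rewrite a policy rejection; persist the reason and stop.
        return NextStep.STOP_WITH_REPAIR_MESSAGE
    # Only transient transport failures are eligible for retry.
    if attempts_so_far < MAX_TRANSPORT_RETRIES:
        return NextStep.RETRY
    return NextStep.FAIL
```

The policy branch ignores the attempt count on purpose, so a rejection on the first attempt ends the job just as firmly as one on the last.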