Seedance 2.0 now has several public provider routes, but they are not the same API contract. As of April 20, 2026, PiAPI and Kie are the clearest price-sensitive task/API routes, Replicate is the familiar hosted-model route, fal is the SDK and playground route, OpenRouter is the router and fallback route, and LaoZhang is the gateway route to check when OpenAI-style calling, payment, or support convenience matters more than an open per-second price row.

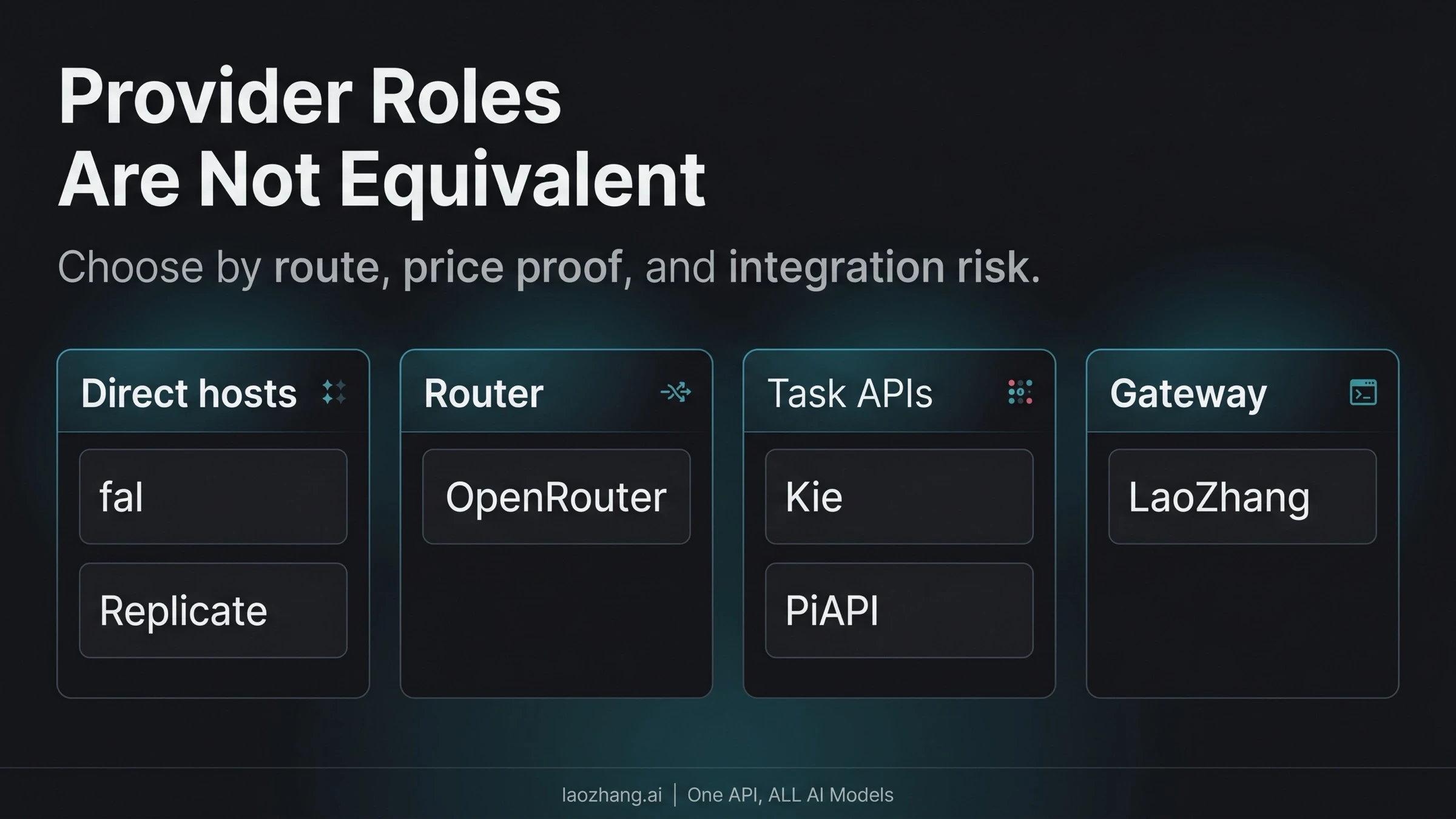

The fastest way to choose is to sort by route type first, then compare price evidence inside that route class. A per-second host, token-priced router, credit API, task API, and gateway console price are different budgeting problems even when they all expose the same model name.

Compared with the early-April status picture, public provider evidence has moved: fal, Replicate, OpenRouter, PiAPI, Kie, and LaoZhang now expose Seedance 2.0 pages or docs, while LaoZhang's exact public price still needs a console or current-list check before budget approval.

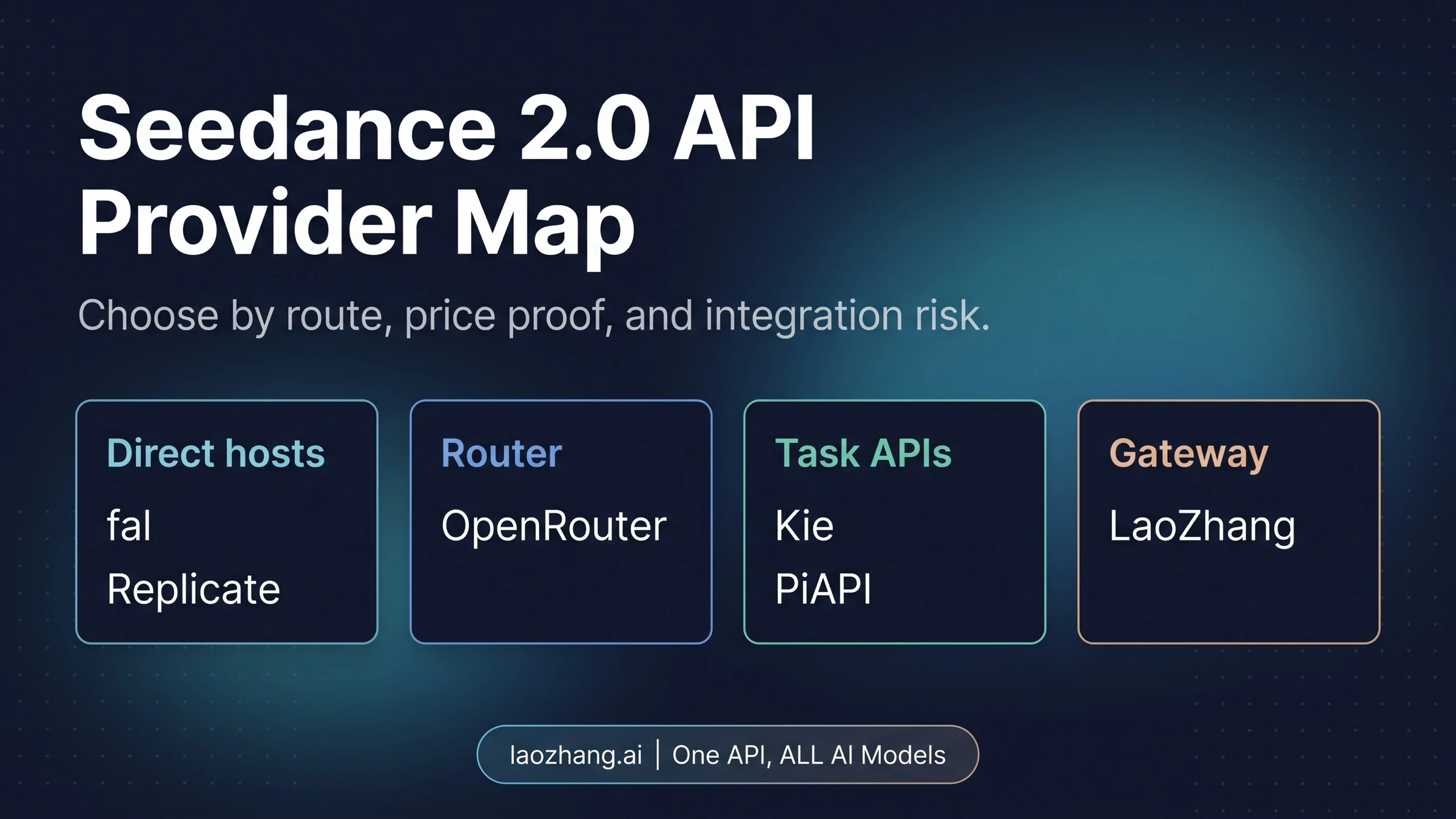

Start With The Provider Matrix

| Provider | Route type | Public price evidence as of Apr 20, 2026 | Best first use | Main caution |
|---|---|---|---|---|
| LaoZhang | Gateway / OpenAI-style video route | Dedicated Seedance 2.0 docs are public; exact Seedance price is a console/current-list check | Teams that value unified API calling, support, billing convenience, or local payment friction reduction | Do not treat it as the public price winner without a fresh console price check |
| fal | Direct host with SDK and playground | Standard 720p text-to-video is $0.3034/sec; standard image/reference is $0.3024/sec; fast is $0.2419/sec | Fast developer experience, examples, and direct endpoint tests | Usually not the cheapest visible per-second route |
| Replicate | Hosted model route | 480p non-video $0.08/sec, 480p video input $0.10/sec, 720p non-video $0.18/sec, 720p video input $0.22/sec | Teams already using Replicate jobs, webhooks, and model pages | Price depends on resolution and whether video input is used |
| OpenRouter | Router and fallback layer | From $7/M tokens with video token calculation from size, duration, and 24 fps | Multi-provider routing, uptime comparison, fallback experiments | Token pricing needs conversion before it can be compared with per-second rates |
| Kie | Credit-priced API route | Public rows include fast 720p no-video at $0.165/sec and standard 720p no-video at $0.205/sec, with cheaper video-input rows and higher 1080p rows | Price-sensitive testing when Kie's credit system and task model fit the workflow | Rates vary sharply by model mode, resolution, and input type |
| PiAPI | Specialist task API route | seedance-2 is $0.13/sec and seedance-2-fast is $0.10/sec | Simple task API, clear public rates, and first/last-frame or omni-reference workflows | Prices changed after April 9, 2026, so treat old screenshots as stale |

The matrix is deliberately not a winner table. Seedance 2.0 provider selection has at least three separate decisions: how the request is submitted, how the bill is calculated, and how much operational control the provider gives you after a task starts. A team that wants a direct model host should evaluate fal and Replicate first. A team trying to keep multiple video providers behind one routing layer should evaluate OpenRouter or LaoZhang as routing and gateway layers, not as rate cards. A team optimizing only for visible task rates should start with PiAPI, Kie, and the low-resolution Replicate rows, then test actual failure and retry behavior.

The official ByteDance Seedance 2.0 page is still the capability baseline: the model is positioned as a unified multimodal audio-video model with text, image, audio, and video inputs. That official model page does not make every provider route an official ByteDance contract. For provider comparison, the safer wording is "public provider route" unless a source proves a stronger relationship. That distinction also keeps procurement honest: a route can be production-useful without being the cheapest visible rate card.

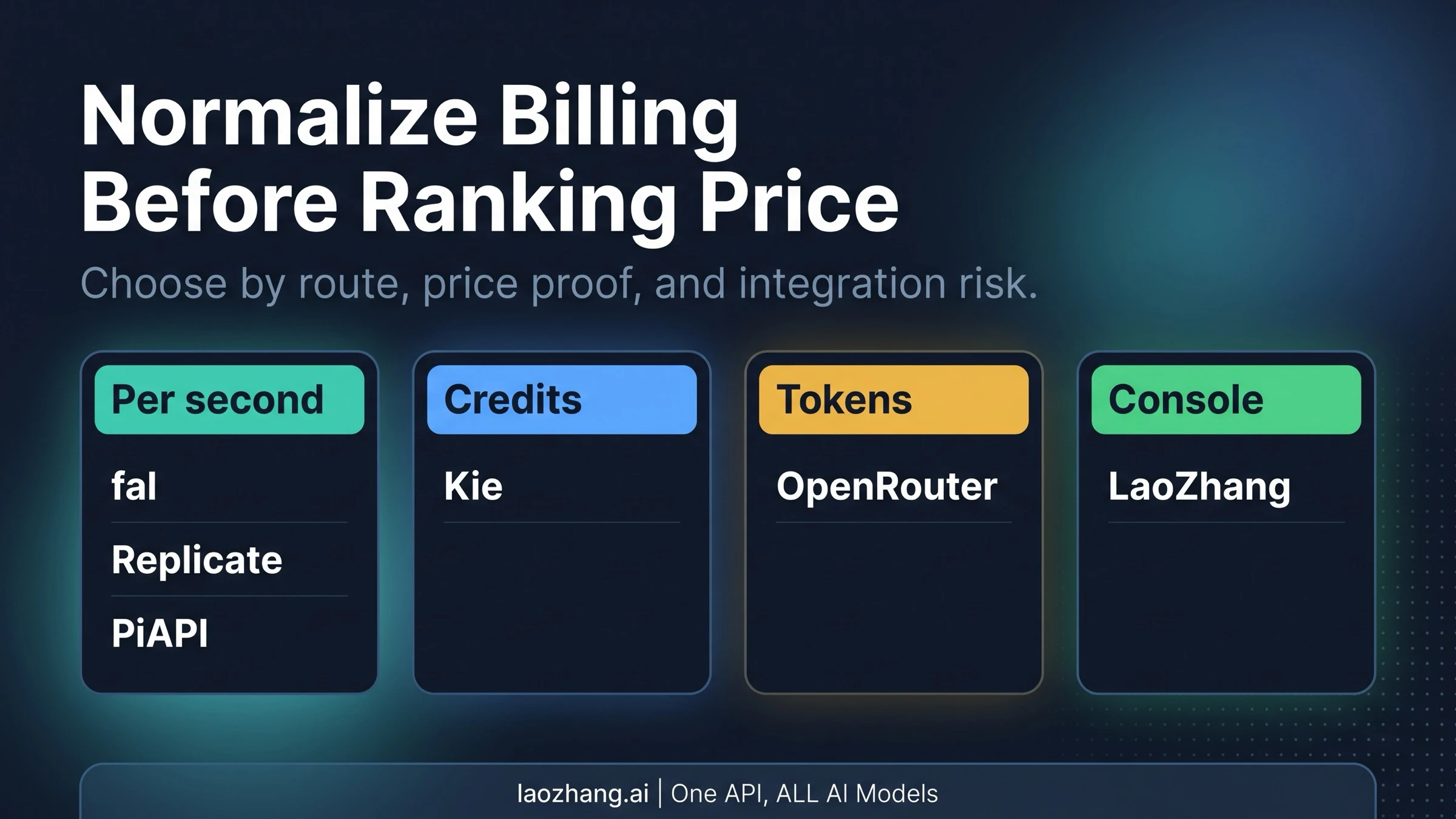

Normalize Billing Before Ranking Providers

The largest budgeting mistake is to rank every visible number as if it were the same unit. fal, Replicate, Kie, and PiAPI expose per-second-style rows, but even those rows can change by resolution, fast/standard mode, and whether the request includes video references. OpenRouter uses a token formula. LaoZhang publishes the route and model IDs but keeps the exact current Seedance price behind the console/current list. These are three different pricing shapes, not one.

For a clean estimate, compare providers in this order:

- Pick the route class. Direct host, router, task API, credit API, and gateway routes solve different production problems.

- Normalize the generated duration. Public rates are usually per second, so calculate a 5-second, 10-second, and 15-second case for your own workload.

- Match resolution and input type. Replicate separates 480p and 720p, and Kie's rows differ for video-input versus no-video-input jobs.

- Account for failed or rejected generations. Public price rows rarely tell you whether retries, moderation failures, or provider timeouts behave the same way.

- Separate public evidence from console evidence. A provider can be a valid integration route even when its exact price is not safe to quote publicly.
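The duration and failure steps in the list above can be sketched as a small estimator. This is a minimal sketch, assuming flat per-second billing with no minimum charge or rounding rule; the rates are the ones quoted in this article as of April 20, 2026, and the names `perSecondRates`, `estimateCost`, and `costWithRetries` are illustrative, not part of any provider SDK. The retry helper assumes the worst case, where every failed attempt is billed in full, which should be confirmed per provider.

```typescript
// Per-second rates quoted in this article (USD, as of Apr 20, 2026).
// Assumption: billing is linear in generated seconds, with no minimum charge.
const perSecondRates: Record<string, number> = {
  "piapi:seedance-2": 0.13,
  "piapi:seedance-2-fast": 0.10,
  "replicate:480p:no-video": 0.08,
  "replicate:720p:no-video": 0.18,
  "fal:720p:standard:text-to-video": 0.3034,
};

function estimateCost(rateKey: string, durationSeconds: number): number {
  const rate = perSecondRates[rateKey];
  if (rate === undefined) throw new Error(`No public rate for ${rateKey}`);
  // Round to 4 decimals to keep floating-point noise out of the budget sheet.
  return Math.round(rate * durationSeconds * 1e4) / 1e4;
}

// Worst-case assumption: failed attempts are billed in full and retried until
// one succeeds, so the expected number of attempts is 1 / (1 - failureRate).
function costWithRetries(baseCost: number, failureRate: number): number {
  if (failureRate < 0 || failureRate >= 1) {
    throw new RangeError("failureRate must be in [0, 1)");
  }
  return baseCost / (1 - failureRate);
}

// Print the 5-, 10-, and 15-second cases for every known row.
for (const key of Object.keys(perSecondRates)) {
  const cells = [5, 10, 15].map((s) => `${s}s=$${estimateCost(key, s).toFixed(2)}`);
  console.log(key, cells.join("  "));
}
```

Token-priced and console-priced routes are left out on purpose: they need a conversion or a fresh quote before they can sit in the same map.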

Here is the current evidence shape in plain terms. fal is easiest to reason about because its public examples show clear per-second rates, but those rates sit toward the higher end of the visible set. Replicate has some of the lowest visible 480p rows and a familiar model-hosting workflow. PiAPI's seedance-2-fast row is easy to budget at $0.10/sec. Kie can be very competitive in specific rows, especially the cheaper video-input rows, but the credit table needs closer reading. OpenRouter is useful for routing, but its token formula should be converted against real output settings before budget approval. LaoZhang belongs in the gateway bucket until a fresh console price is checked.

Provider Notes That Matter In Practice

LaoZhang

LaoZhang's Seedance 2.0 Video Generation API docs expose an OpenAI-style /v1/videos route with doubao-seedance-2-0-fast-260128 and doubao-seedance-2-0-260128 model IDs. The docs describe text, image, video, and audio references, first/last-frame input, extension, editing, duration, watermark, and generate_audio controls.

That makes LaoZhang a real route to evaluate when one API surface, support, billing convenience, or local payment friction matters. It does not make LaoZhang the cheapest public route on the evidence available here. For a production budget, check the current console price and then compare the gateway value against direct providers with open per-second rows.

fal

fal exposes a practical developer route through its Seedance 2.0 tool guide and example repository. The visible endpoint family includes bytedance/seedance-2.0/text-to-video, image-to-video, reference-to-video, and fast variants.

The strongest fal argument is developer experience: playground, SDK path, examples, and clear endpoint naming. The tradeoff is price. The public 720p standard rows around $0.3024-$0.3034/sec and fast row at $0.2419/sec are easy to understand, but not automatically cheap. Use fal first when integration speed and clean examples beat raw-rate optimization.

Replicate

Replicate's public bytedance/seedance-2.0 model page is useful for teams already using Replicate's job pattern. The page exposes a familiar playground and API flow, and the model input schema covers prompt, image, last-frame image, multiple reference images, reference videos, reference audios, duration, resolution, aspect ratio, and audio generation.

Replicate's billing rows are also comparatively legible: 480p non-video input at $0.08/sec, 480p video input at $0.10/sec, 720p non-video input at $0.18/sec, and 720p video input at $0.22/sec. That makes Replicate a strong first test if your workload can start at 480p or if your team already has Replicate task handling, storage, and webhooks in place.
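Because the Replicate rate depends on two request properties, it helps to pick the row in code rather than by eye. The following is a minimal sketch using only the four rows quoted above; `replicateRows` and `replicateRate` are hypothetical names for illustration, and the values should be rechecked against the live model page before use.

```typescript
type Resolution = "480p" | "720p";

// Replicate rows as quoted in this article (USD per second, Apr 20, 2026).
const replicateRows: Record<Resolution, { noVideoInput: number; videoInput: number }> = {
  "480p": { noVideoInput: 0.08, videoInput: 0.10 },
  "720p": { noVideoInput: 0.18, videoInput: 0.22 },
};

// Select the billing row from the request shape: resolution plus
// whether any reference video is attached.
function replicateRate(resolution: Resolution, hasVideoInput: boolean): number {
  const row = replicateRows[resolution];
  return hasVideoInput ? row.videoInput : row.noVideoInput;
}

console.log(replicateRate("480p", false), replicateRate("720p", true));
```

The same lookup shape would fit Kie's table, with mode added as a third key.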

OpenRouter

OpenRouter's Seedance 2.0 page positions the model as a router-accessible video generation option released on April 15, 2026, with pricing from $7/M tokens. Its video token calculation is based on height, width, duration, 24 fps, and division by 1024.

A "$7/M tokens" figure cannot be compared directly with "$0.10/sec" until the token count for a specific output size and duration has been worked out. OpenRouter is more valuable when routing, provider performance, uptime visibility, or fallback policy matters. Treat it as a provider-routing layer first and as a raw-price option only after calculating your real output settings.
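To make the token route comparable, the conversion can be worked through for one concrete output. This is a sketch under stated assumptions: the formula is read from the description above as height × width × duration × 24 fps, divided by 1024; 1280×720 is an assumed output size for "720p"; and $7/M is the advertised starting price, so the effective rate may be higher. The function names are illustrative.

```typescript
// Assumed formula, reconstructed from the page description:
// tokens = (width * height * durationSeconds * fps) / 1024, with fps = 24.
function videoTokens(width: number, height: number, durationSeconds: number, fps = 24): number {
  return (width * height * durationSeconds * fps) / 1024;
}

function tokenCostUsd(tokens: number, usdPerMillionTokens: number): number {
  return (tokens / 1_000_000) * usdPerMillionTokens;
}

// Worked case: 1280x720 output, 5 seconds, at the "from $7/M tokens" price.
const exampleTokens = videoTokens(1280, 720, 5); // 108,000 tokens
const exampleCost = tokenCostUsd(exampleTokens, 7); // about $0.756
const examplePerSecond = exampleCost / 5; // about $0.1512 per second

console.log(exampleTokens, exampleCost.toFixed(3), examplePerSecond.toFixed(4));
```

Under those assumptions, the 720p case lands near $0.15/sec, which is the number to place beside the per-second rows, not the raw token price.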

Kie

Kie now exposes Seedance 2.0 through both docs and public pricing rows. Its docs name bytedance/seedance-2 and bytedance/seedance-2-fast, with duration from 4 to 15 seconds and reference image, video, and audio constraints. The public pricing endpoint shows detailed rows across fast, standard, 480p, 720p, 1080p, and video-input cases.

The practical Kie upside is row-level pricing flexibility. For example, fast 720p without video input appears at $0.165/sec, standard 720p without video input at $0.205/sec, and some video-input rows are lower. The caution is that the cheapest-looking row may not match your actual request. Kie is a good candidate for price-sensitive testing when your team can map each request shape to the right row.

PiAPI

PiAPI's Seedance 2 docs expose POST https://api.piapi.ai/api/v1/task, seedance-2, and seedance-2-fast. The public price rows are simple: $0.13/sec for seedance-2 and $0.10/sec for seedance-2-fast, with the docs also showing older pre-April-9 prices for context.

PiAPI is the clearest low-friction task API in the set. It supports 4-to-15-second output, total input video duration constraints, text-to-video, first/last-frame input, and omni-reference mode with mixed references. Start with PiAPI when you want a simple task API and public rate rows before exploring broader routing or gateway needs.

Pick A First Route By Workflow

The best first provider depends on what the integration must prove.

For cheap direct testing, start with PiAPI, Kie, or Replicate's lower-resolution rows. PiAPI is the simplest visible rate card. Kie can win in specific rows, but only if the request shape maps cleanly. Replicate can be attractive if 480p is enough or if your team already uses Replicate infrastructure.

For fast developer experience, start with fal. The price may be higher, but the example path is clean and the endpoint naming is straightforward. That can be the right tradeoff when the first task is to prove product fit rather than optimize unit economics.

For hosted-model production familiarity, start with Replicate. Teams already built around Replicate's predictions, status handling, and model pages can evaluate Seedance 2.0 without changing too much of their surrounding infrastructure.

For multi-provider routing, start with OpenRouter or keep it as a fallback lane. Its value is not only the model listing; it is the routing layer around provider availability, performance, and request normalization.

For gateway convenience, evaluate LaoZhang after checking the current console price. It fits teams that want OpenAI-style calling, support, payment convenience, or one access surface across multiple AI models. If raw public per-second pricing is the only decision, direct providers currently expose cleaner open numbers.

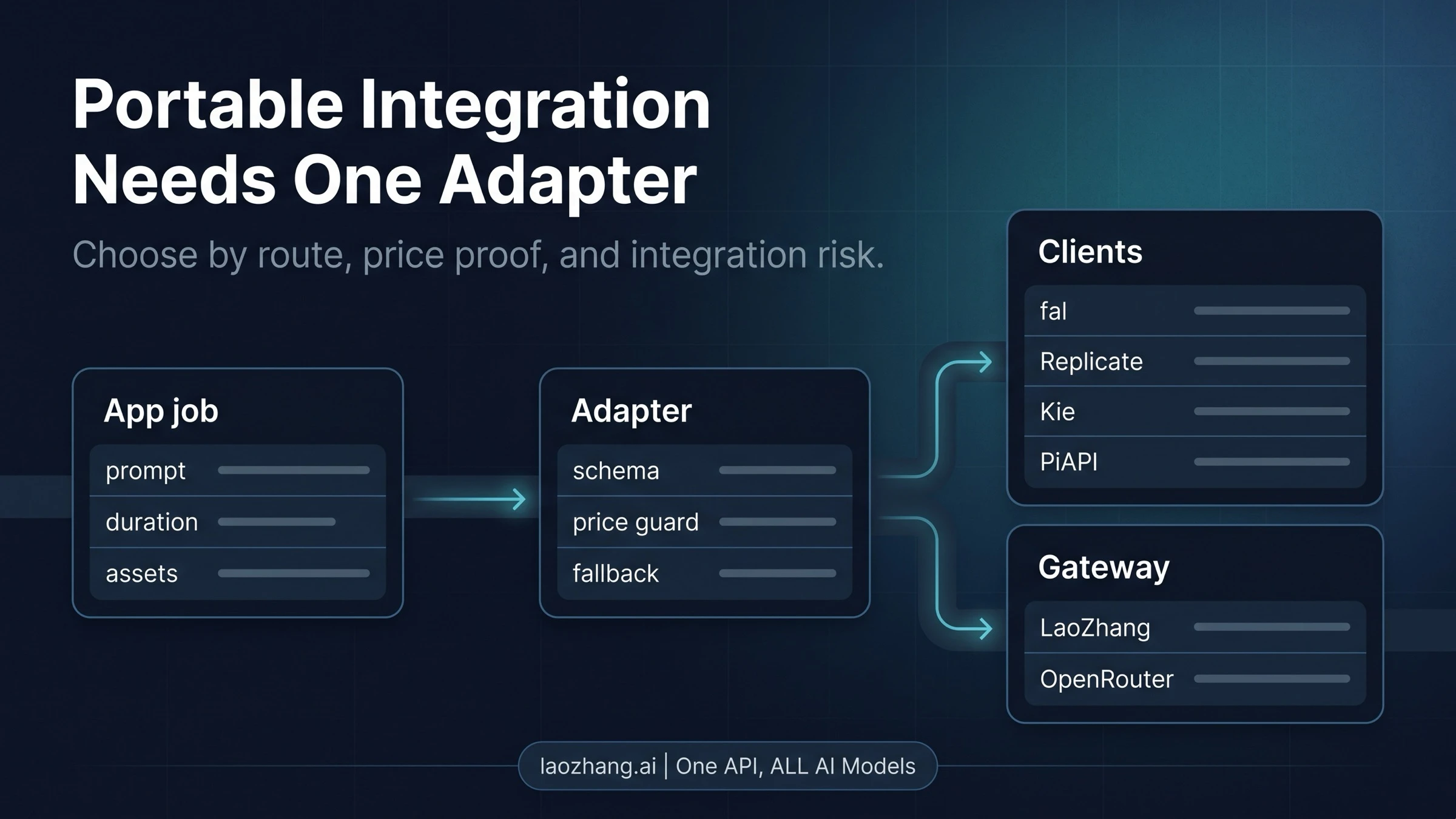

Keep Your Integration Portable

Provider choice should not leak deep into application logic. The stable part of a video generation app is usually the job object: prompt, references, duration, target aspect ratio, callback or polling preference, storage policy, and budget guard. The unstable part is provider-specific: endpoint path, model ID, request schema, billing unit, moderation behavior, and result format.

Keep those layers separate:

```ts
// Provider-independent description of one generation request.
type VideoJob = {
  prompt: string;
  durationSeconds: number;
  aspectRatio: "16:9" | "9:16" | "1:1";
  references?: Array<{ type: "image" | "video" | "audio"; url: string }>;
};

// Everything provider-specific lives behind this interface.
type ProviderRoute = {
  name: "laozhang" | "fal" | "replicate" | "openrouter" | "kie" | "piapi";
  model: string;
  submit(job: VideoJob): Promise<{ taskId: string }>;
  poll(taskId: string): Promise<{ status: string; videoUrl?: string }>;
  // value is null when no public price can be quoted (for example, console-only pricing).
  estimate(job: VideoJob): { unit: string; value: number | null };
};
```

That adapter pattern pays off quickly. If fal proves the prompt behavior fastest but PiAPI or Kie prices better for production, the application should switch provider modules rather than rewrite the whole task lifecycle. If OpenRouter becomes the fallback lane, it should sit behind the same job object. If LaoZhang becomes the gateway for payment or support reasons, the app still should not treat gateway-specific fields as universal model facts.
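One way to exercise that separation is a fallback runner that only sees the shared interface. The sketch below is self-contained and uses a trimmed-down `Route` type; the normalized status values (`"queued"`, `"succeeded"`, `"failed"`), the `runWithFallback` name, and the polling defaults are assumptions for illustration. Each real provider module would map its own status vocabulary onto them.

```typescript
type VideoJob = { prompt: string; durationSeconds: number };

// Hypothetical normalized statuses; real providers use their own vocabulary.
type TaskStatus = { status: "queued" | "succeeded" | "failed"; videoUrl?: string };

type Route = {
  name: string;
  submit(job: VideoJob): Promise<{ taskId: string }>;
  poll(taskId: string): Promise<TaskStatus>;
};

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Tries each route in order and returns the first video that succeeds.
async function runWithFallback(
  job: VideoJob,
  routes: Route[],
  maxPolls = 20,
  pollDelayMs = 1000,
): Promise<{ provider: string; videoUrl: string }> {
  const errors: string[] = [];
  for (const route of routes) {
    try {
      const { taskId } = await route.submit(job);
      for (let attempt = 0; attempt < maxPolls; attempt++) {
        const result = await route.poll(taskId);
        if (result.status === "succeeded" && result.videoUrl) {
          return { provider: route.name, videoUrl: result.videoUrl };
        }
        if (result.status === "failed") break; // stop polling, move to next route
        await sleep(pollDelayMs);
      }
      errors.push(`${route.name}: no video after polling`);
    } catch (err) {
      errors.push(`${route.name}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`All routes failed: ${errors.join("; ")}`);
}
```

Rerunning on another provider is only one fallback policy; the same loop could instead lower resolution or shorten duration before switching routes.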

Production Checklist Before Shipping

Before any Seedance 2.0 provider becomes a customer-facing dependency, verify these items in the same environment where traffic will run:

- Current price row. Recheck provider pricing on the day the budget is approved, especially for Kie, PiAPI, and any console-only LaoZhang quote.

- Model ID and mode. Confirm fast versus standard mode, resolution, input references, and native audio behavior.

- Failure billing. Test moderation rejection, timeout, queue failure, and user-cancel cases rather than assuming every provider bills the same way.

- Polling and retention. Confirm how long result URLs remain valid and whether callbacks are supported.

- Input limits. Video and audio reference limits differ by provider; do not assume one schema covers all routes.

- Official wording. Say "public provider route" unless a specific source proves a direct official ByteDance contract.

- Fallback behavior. Decide whether fallback means rerun on another provider, degrade resolution, shorten duration, or send the job to manual review.

- Logging. Store provider name, model ID, duration, resolution, request shape, task ID, cost estimate, final status, and output URL expiry.
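The logging item above can be pinned down as a record type so every provider module emits the same fields. This is a minimal sketch: the field names, the request-shape labels, and the status values are hypothetical choices for illustration, not taken from any provider's schema.

```typescript
// One log record per generation attempt, covering the fields in the checklist.
type GenerationLogRecord = {
  provider: string;
  modelId: string;
  durationSeconds: number;
  resolution: string;
  requestShape: "text-to-video" | "image-to-video" | "reference-to-video";
  taskId: string;
  costEstimateUsd: number | null; // null when the price is console-only
  finalStatus: "succeeded" | "failed" | "rejected" | "timed_out" | "cancelled";
  outputUrlExpiresAt: string | null; // ISO 8601 timestamp, null when unknown
};

// Serialize as one JSON line so records can be appended to any log sink.
function toLogLine(record: GenerationLogRecord): string {
  return JSON.stringify(record);
}

const sampleRecord: GenerationLogRecord = {
  provider: "piapi",
  modelId: "seedance-2-fast",
  durationSeconds: 5,
  resolution: "720p",
  requestShape: "text-to-video",
  taskId: "example-task-id",
  costEstimateUsd: 0.5,
  finalStatus: "succeeded",
  outputUrlExpiresAt: null,
};

console.log(toLogLine(sampleRecord));
```

Keeping failed and rejected attempts in the same log is what makes the failure-billing check auditable later.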

The final production choice is rarely one provider forever. A common pattern is fal or Replicate for the first developer proof, PiAPI or Kie for price-sensitive task tests, OpenRouter as the router/fallback experiment, and LaoZhang when gateway convenience solves a real access, payment, or support problem.

FAQ

Are All Seedance 2.0 API Providers Official ByteDance Providers?

No. ByteDance controls the official model baseline, but public provider pages do not all prove the same direct official contract. Use "public provider route" unless the provider's own source proves stronger wording.

Which Seedance 2.0 API Provider Is Cheapest?

There is no universal cheapest provider without matching route type, resolution, duration, and input mode. PiAPI's fast row is simple at $0.10/sec, Replicate's 480p non-video row is $0.08/sec, and Kie has lower rows in some request shapes. OpenRouter uses tokens, and LaoZhang needs a current console price check.

Is LaoZhang A Good Seedance 2.0 API Route?

LaoZhang is a valid route to evaluate when an OpenAI-style gateway, local payment convenience, support, or multi-model access matters. It should not be described as the cheapest public Seedance 2.0 provider unless the exact current price has been checked and compared against the same workload.

Should I Start With fal Or Replicate?

Start with fal when SDK examples and fast developer experience matter most. Start with Replicate when your team already uses Replicate's model-hosting workflow or wants the lower visible 480p and 720p rows.

When Does OpenRouter Make Sense?

OpenRouter makes sense when routing, fallback, provider comparison, or uptime visibility matters more than direct per-second pricing. Convert its token formula against your actual height, width, and duration before comparing it with per-second providers.

What Should I Verify Before Moving From Test To Production?

Verify the model ID, exact price row, output duration, resolution, input reference limits, native audio behavior, failure billing, callback or polling behavior, and output retention window. These details differ enough across providers that a working demo is not the same thing as production readiness.