If you want the official Nano Banana API today, you are not looking for a separate Nano Banana developer product. You are looking for Gemini's native image-generation models in the Gemini API or Google AI Studio. For most API work, the safest default is Nano Banana 2, whose current model ID is gemini-3.1-flash-image-preview. Move to Nano Banana Pro (gemini-3-pro-image-preview) only when the job can clearly justify the premium tier through better text rendering, more complex instruction-following, or professional asset-production quality.

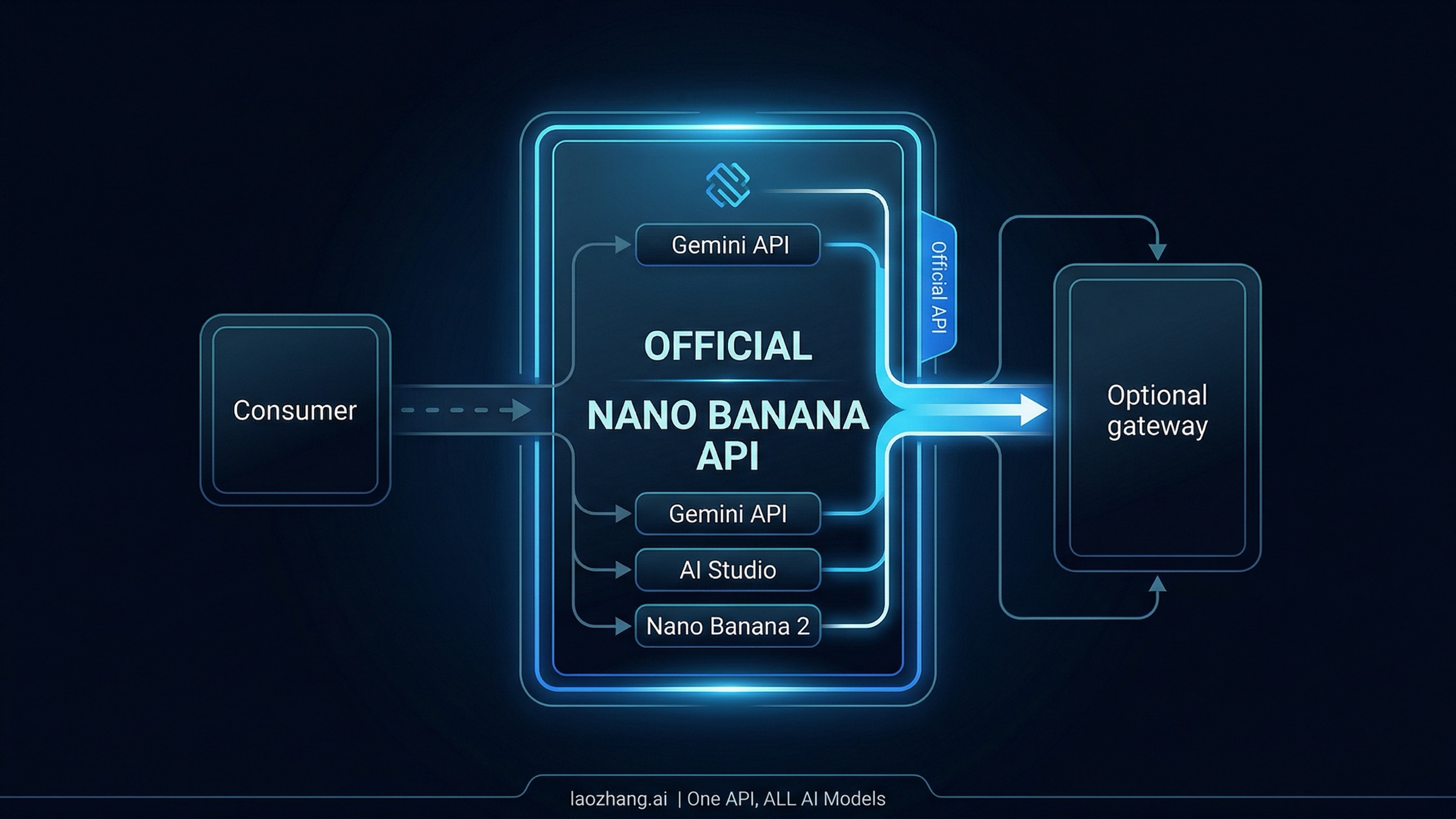

That direct answer matters because search results for this query are now full of false shortcuts. Some pages treat Nano Banana like a standalone app, some treat AI Mode like the API, and some present wrapper gateways as if they were the definition of the product. They are not. The clean mental model is simpler: Nano Banana is Gemini's native image family, the official developer path is Gemini API plus AI Studio, and the first model most teams should try is Nano Banana 2.

All model IDs, pricing, and consumer-limit references below were rechecked against current official Google docs, help pages, and pricing tables on March 29, 2026.

TL;DR

Here is the shortest correct answer.

| If this is your real job | Start here | Why | Biggest caveat |
|---|---|---|---|
| You want the official Google image API | Gemini API + Nano Banana 2 | It is Google's current high-efficiency default for image generation and editing | Current preview image API routes are paid |
| You need higher-fidelity assets, stronger text rendering, or more complex visual reasoning | Nano Banana Pro | It is the premium professional tier | Costs more and should be an override, not your default |
| You want to test in Google's own UI before coding | AI Studio | Same official stack, easier for prompt iteration | Google says a paid API key is required for Nano Banana 2 there |
| You are just making images in a consumer product | Gemini Apps or AI Mode | Good for everyday use without writing code | These are separate consumer contracts, not the API |
| You need OpenAI-compatible routing or unified billing across vendors | Optional gateway / wrapper | Useful as infrastructure | Still a second decision after understanding the official path |

The practical rule is: treat "Nano Banana API" as a Gemini API question, not as a search for one magical Nano Banana website.

What The Nano Banana API Actually Is Now

Google's current image-generation docs define Nano Banana as Gemini's native image-generation capability, not as a single surface. In practice, the phrase now points to a small family of image-capable Gemini models:

| Family label | Official model ID | Current role |
|---|---|---|
| Nano Banana 2 | gemini-3.1-flash-image-preview | High-efficiency default for speed, throughput, and broad API use |
| Nano Banana Pro | gemini-3-pro-image-preview | Premium professional tier for higher-fidelity assets and stronger text rendering |
| Nano Banana | gemini-2.5-flash-image | Older fast path optimized for low-latency image work |

This model-family view is the first thing many search results still fail to explain cleanly. The query sounds like there should be one "Nano Banana API" product page, one account flow, and one obvious model. That is no longer how Google describes it. The current official split is a family choice plus a surface choice:

- which family member you need

- whether you want the official API, the official testing UI, or a consumer surface

The second high-value correction is that generated images are part of the Gemini request/response contract, not a separate image-only billing system hidden behind some other Google product. The same image-generation docs show the supported request shapes, image editing flows, aspect-ratio controls, image sizes, and search-grounding tools. Google also notes that all generated images include a SynthID watermark, which matters if you are building product workflows around provenance or AI-content disclosure.

If your real question is broader than the API and you want the current user-facing entry points across Gemini, AI Mode, and Google surfaces, our Nano Banana AI image generator guide covers that bigger access map. This article is narrower: it is the official API setup answer.

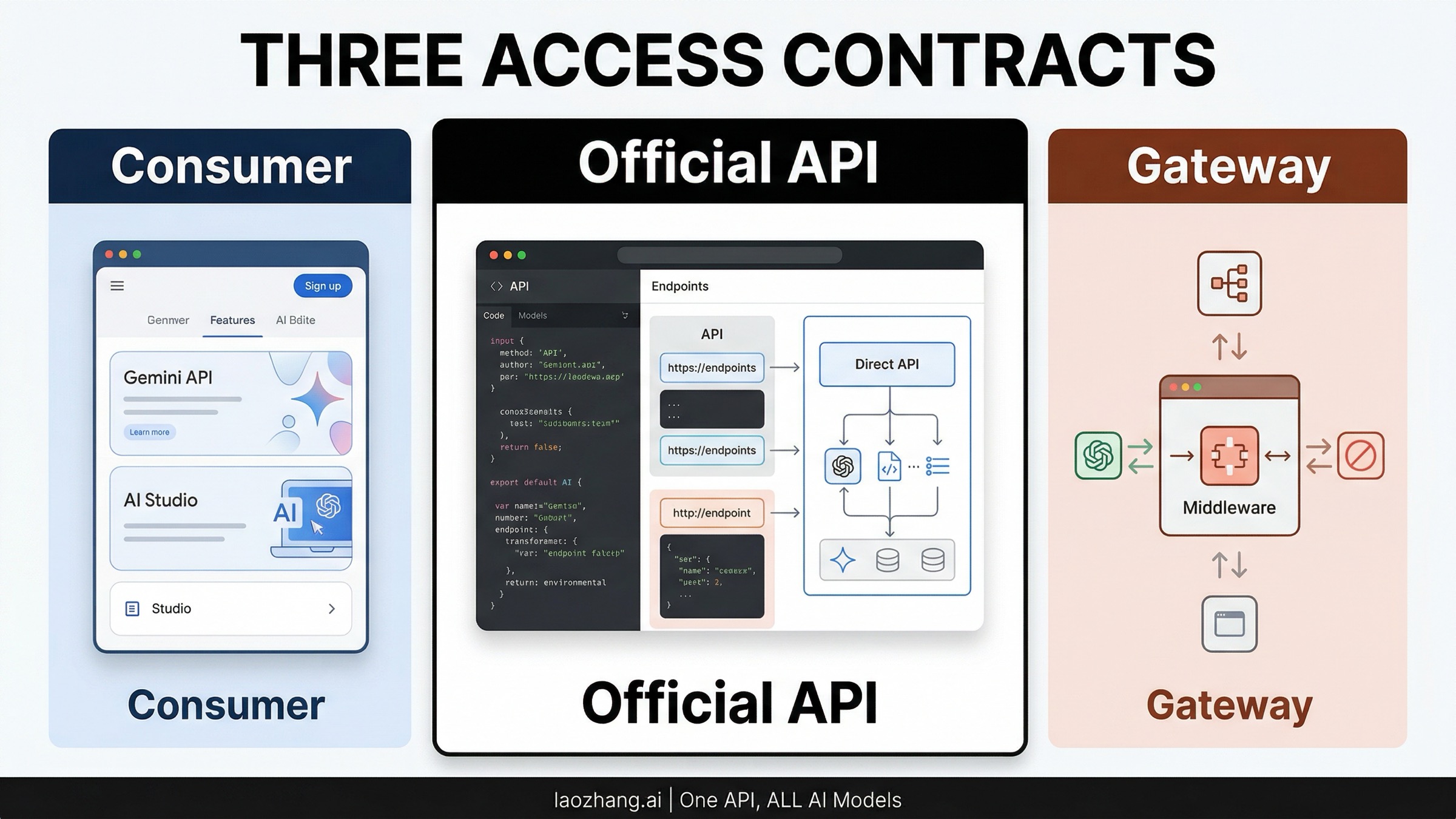

Which Path Is Official API And Which Path Is Not

The fastest way to get confused is to put Gemini Apps, AI Mode, AI Studio, Gemini API, and wrappers into one bucket. They are related, but they are not the same contract.

Gemini API is the official programmatic path. This is the route you use when your application needs to call generateContent, send prompts or input images, receive inline image output, and control settings like aspect ratio or image size. If you are integrating into a product, this is the answer.

Google AI Studio is Google's official testing and development surface for the same stack. It is useful when you want to try prompts, validate model behavior, and inspect results before wiring them into code. Google's February 26, 2026 Nano Banana 2 rollout post is especially useful here because it makes one otherwise easy-to-miss point explicit: a paid API key is required to use Nano Banana 2 in Google AI Studio. In other words, AI Studio is the official UI around the developer stack, not a special free loophole around current preview-image pricing.

Gemini Apps and AI Mode are real product surfaces, but they are consumer routes, not the answer to an API query. Gemini Apps Help currently splits Nano Banana and Nano Banana Pro by everyday use versus advanced output, and AI Mode Help separately documents image creation limits plus the special Pro path for infographics and diagrams. Those details matter if you are deciding how to use Nano Banana as a user. They do not change what the official API contract is.

Wrappers and gateways are a separate infrastructure choice. They can still be useful if you specifically want OpenAI-compatible request formats, multi-vendor routing, or alternate billing layers. But that is a second decision, not the first. If a page starts by presenting a gateway as if it were the product itself, it is already giving you the wrong mental model for this keyword.

The clean routing rule is:

- use Gemini API when you need code

- use AI Studio when you need official prompt testing around the same stack

- use Gemini Apps or AI Mode when you are not really asking an API question

- use a gateway only after you understand the official path

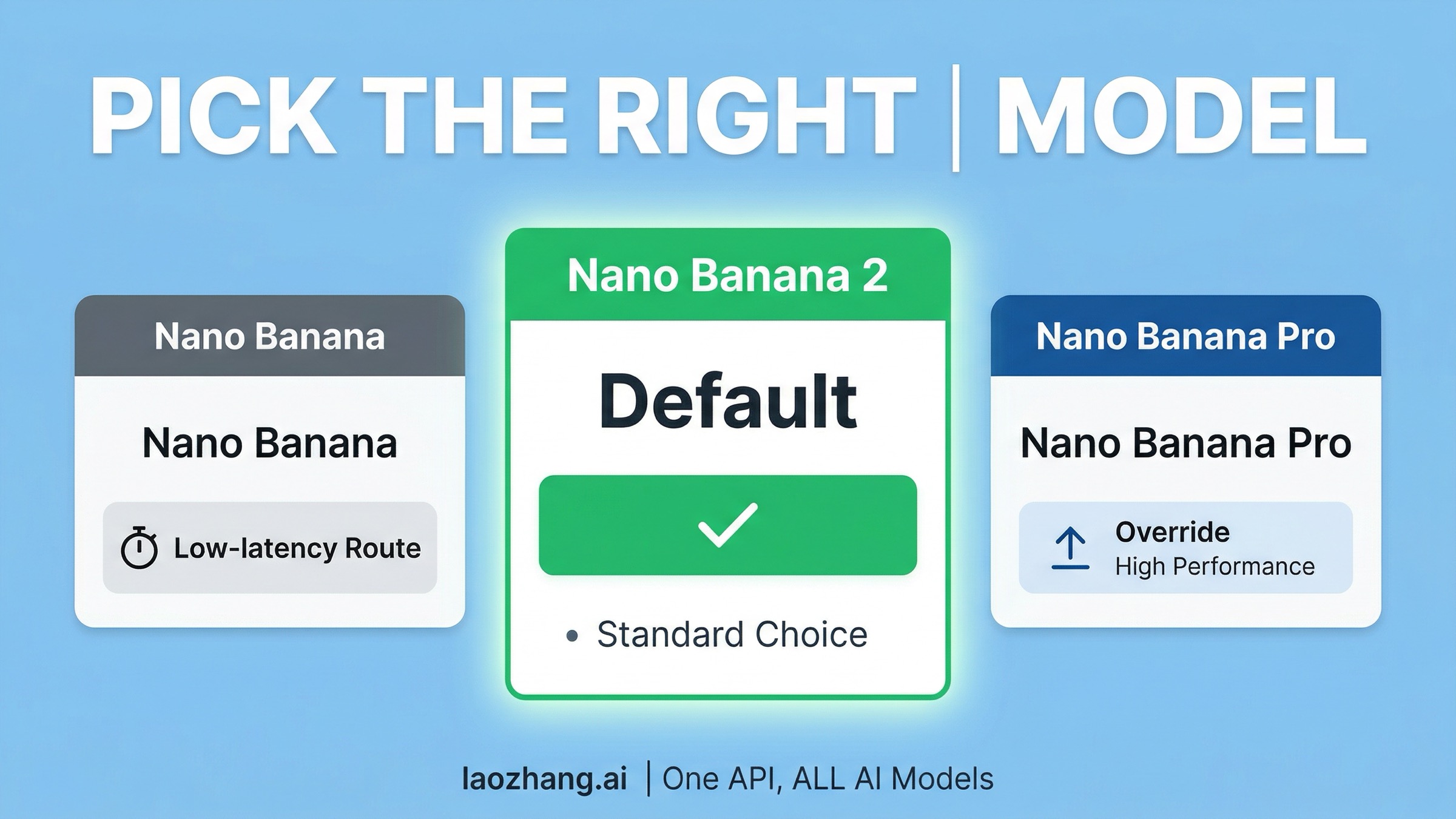

Start With Nano Banana 2 Unless You Can Name The Override

This is the one judgment call this article can save you from learning the hard way.

Google's current image-generation docs describe Nano Banana 2 as the high-efficiency counterpart to Pro, optimized for speed and high-volume developer use cases. Google's own February 2026 rollout post goes even further and calls Nano Banana 2 "our best image generation and editing model." For an API guide, that means you should not begin from the assumption that Pro is the obvious default just because it sounds more premium.

Start with Nano Banana 2 when:

- you are building your first integration

- you care about faster iteration and better price efficiency

- you expect meaningful volume

- you want the safest default model for general image generation and editing

- you do not yet have a concrete reason to pay for Pro

Move to Nano Banana Pro when:

- text rendering quality is central to the output

- the image is a professional final asset, not just a working draft

- you need more deliberate reasoning through complex visual instructions

- the job is diagram-heavy, layout-sensitive, or brand-critical enough to justify a premium tier

Keep Nano Banana (gemini-2.5-flash-image) in mind when:

- you already have a workload tuned for the older low-latency route

- you want the older fast path and do not need the newer Nano Banana 2 behavior
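The three lists above can be condensed into a small routing helper. This is a minimal sketch rather than anything Google ships: the function name `choose_image_model` and its boolean flags are hypothetical, and only the model IDs come from this article.

```python
# Model IDs as listed in this article; the helper and its flags are hypothetical.
NANO_BANANA_2 = "gemini-3.1-flash-image-preview"
NANO_BANANA_PRO = "gemini-3-pro-image-preview"
NANO_BANANA = "gemini-2.5-flash-image"


def choose_image_model(
    text_rendering_critical: bool = False,
    final_professional_asset: bool = False,
    complex_visual_instructions: bool = False,
    tuned_for_legacy_route: bool = False,
) -> str:
    """Return a model ID, defaulting to Nano Banana 2 unless an override is named."""
    # Any concrete Pro-tier reason counts as an override.
    if text_rendering_critical or final_professional_asset or complex_visual_instructions:
        return NANO_BANANA_PRO
    # An existing workload tuned for the older low-latency path can stay there.
    if tuned_for_legacy_route:
        return NANO_BANANA
    # No named override: use the default.
    return NANO_BANANA_2
```

Making each override an explicit argument forces the caller to name the reason for leaving the default, which is the point of the rule.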

The important nuance is that the model choice is not just a quality ladder. It is a workload fit question. Nano Banana 2 is not merely the "cheap version" of Pro. Google is framing it as the broad default for image generation and editing work. Pro is the premium override. That is a much better decision rule than the vague "use Pro for better images" advice that still circulates in wrapper pages.

If you want the deeper side-by-side workload comparison after this routing decision, read our Nano Banana Pro vs Nano Banana 2 guide. This article is intentionally narrower: it helps you start correctly.

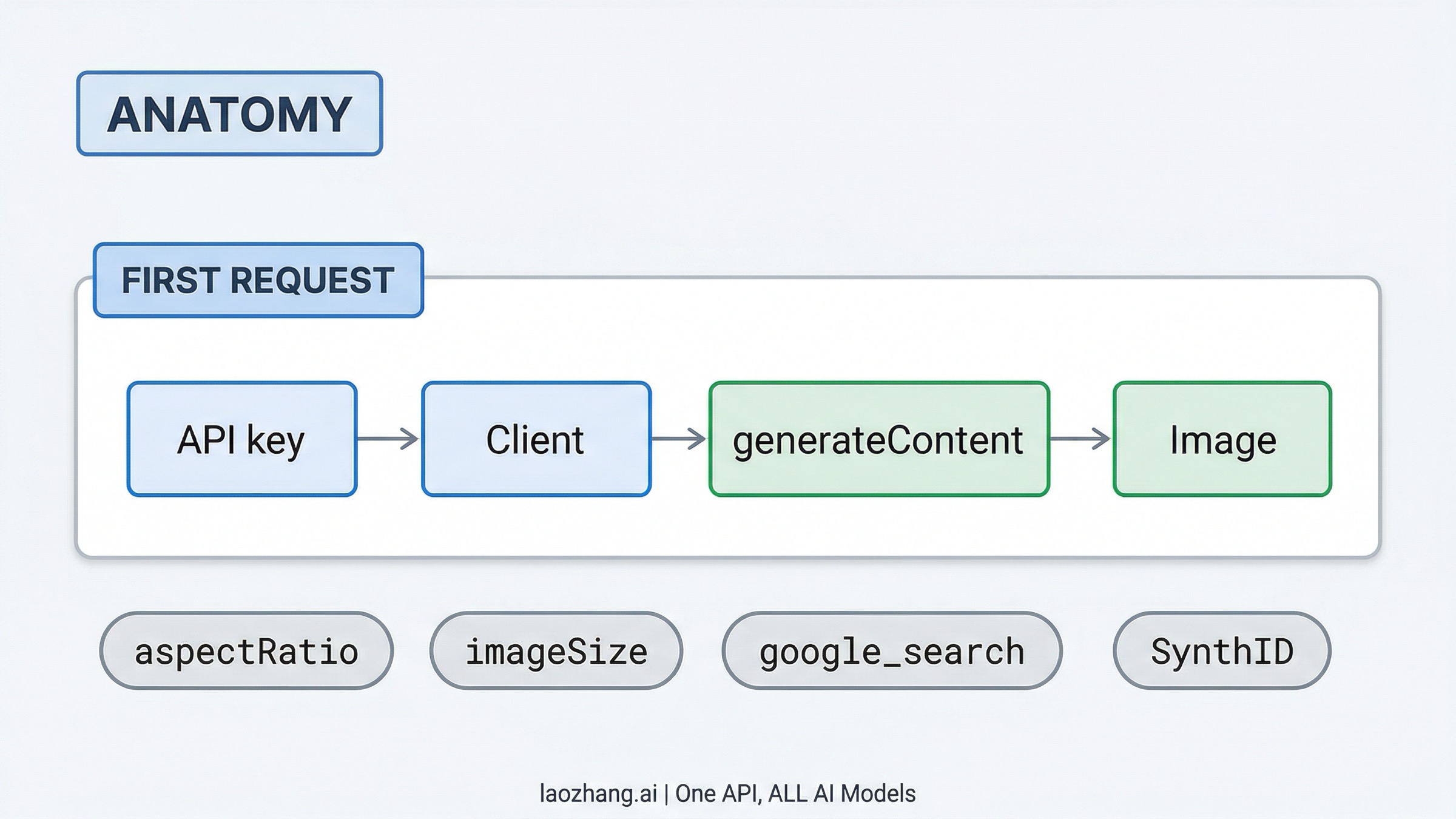

Quickstart: Get Your First Request Working

The official path is shorter than most third-party tutorials make it sound.

1. Get a Gemini API key

Create your key in Google AI Studio. Google still makes key creation easy, but that should not be confused with a free image-output contract. The current preview image routes on the pricing page do not show a free tier for image output. So the honest reading is:

- getting the key is easy

- using the current preview image-generation models is a paid API path

2. Install the current official SDK

Google's current libraries page recommends the Google GenAI SDK, not the older legacy Gemini client libraries.

```bash
# JavaScript / Node.js
npm install @google/genai

# Python
pip install -U google-genai
```

If you are copying older snippets built around google-generativeai, treat them as historical examples, not the best current path.

3. Send a minimal image-generation request

For a new integration, use Nano Banana 2 first:

```javascript
import { GoogleGenAI } from "@google/genai";
import fs from "node:fs";

const ai = new GoogleGenAI({ apiKey: process.env.GEMINI_API_KEY });

const response = await ai.models.generateContent({
  model: "gemini-3.1-flash-image-preview",
  contents: "Create a clean editorial illustration of a robot sketching a website wireframe.",
  config: {
    responseModalities: ["IMAGE"],
    imageConfig: {
      aspectRatio: "16:9",
      imageSize: "2K",
    },
  },
});

// Image bytes come back inline as base64; write the image part to disk.
for (const part of response.candidates[0].content.parts) {
  if (part.inlineData) {
    fs.writeFileSync("output.png", Buffer.from(part.inlineData.data, "base64"));
  }
}
```

The Python version follows the same logic:

```python
import os

from google import genai
from google.genai import types

client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])

response = client.models.generate_content(
    model="gemini-3.1-flash-image-preview",
    contents="Create a clean editorial illustration of a robot sketching a website wireframe.",
    config=types.GenerateContentConfig(
        response_modalities=["IMAGE"],
        image_config=types.ImageConfig(
            aspect_ratio="16:9",
            image_size="2K",
        ),
    ),
)

# Save the inline image part returned by the model.
for part in response.parts:
    if part.inline_data is not None:
        part.as_image().save("output.png")
```

If you want to test the raw HTTP path directly, the official REST shape is equally simple:

```bash
curl -X POST \
  "https://generativelanguage.googleapis.com/v1beta/models/gemini-3.1-flash-image-preview:generateContent" \
  -H "x-goog-api-key: ${GEMINI_API_KEY}" \
  -H "Content-Type: application/json" \
  -d '{
    "contents": [{
      "parts": [
        {"text": "Create a clean editorial illustration of a robot sketching a website wireframe."}
      ]
    }],
    "generationConfig": {
      "responseModalities": ["IMAGE"],
      "imageConfig": {
        "aspectRatio": "16:9",
        "imageSize": "2K"
      }
    }
  }'
```
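The raw HTTP call returns JSON, not an image file, so you still need to decode the base64 payload yourself. The following is a minimal standard-library sketch of that step. It assumes the response uses the same `candidates[].content.parts[].inlineData` shape the JavaScript example reads, with `mimeType` and `data` fields. The helper name `save_inline_images` and the output file naming are hypothetical.

```python
import base64
import json
from pathlib import Path


def save_inline_images(response_json: str, out_dir: str = ".") -> list[str]:
    """Decode every inline image part in a generateContent response and write it to disk."""
    payload = json.loads(response_json)
    written = []
    for candidate in payload.get("candidates", []):
        parts = candidate.get("content", {}).get("parts", [])
        for part in parts:
            inline = part.get("inlineData")
            if not inline or "data" not in inline:
                continue  # skip text parts and anything without image bytes
            # Assumed field name; fall back to .png when the MIME type is not JPEG.
            ext = "jpg" if inline.get("mimeType") == "image/jpeg" else "png"
            path = Path(out_dir) / f"output_{len(written)}.{ext}"
            path.write_bytes(base64.b64decode(inline["data"]))
            written.append(str(path))
    return written
```

If you redirect the curl output to a file such as `response.json`, calling `save_inline_images(Path("response.json").read_text())` would write one file per returned image part.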

Switching to Pro is deliberately boring: keep the same request shape and change the model ID to gemini-3-pro-image-preview. That is one reason the "start with Nano Banana 2, upgrade to Pro only when you have a reason" workflow is so practical. You do not need to redesign your entire integration just to test the premium tier.
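To make that concrete, here is a minimal sketch that builds the REST request for both tiers from one function. The endpoint and JSON field names are taken from the curl example in this article. The helper name `build_image_request` is hypothetical, and nothing is sent over the network.

```python
import json

API_BASE = "https://generativelanguage.googleapis.com/v1beta/models"


def build_image_request(model: str, prompt: str, aspect_ratio: str = "16:9", image_size: str = "2K"):
    """Return (url, json_body) for a generateContent image request."""
    url = f"{API_BASE}/{model}:generateContent"
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {
            "responseModalities": ["IMAGE"],
            "imageConfig": {"aspectRatio": aspect_ratio, "imageSize": image_size},
        },
    }
    return url, json.dumps(body)


prompt = "Create a clean editorial illustration of a robot sketching a website wireframe."
default_url, default_body = build_image_request("gemini-3.1-flash-image-preview", prompt)
pro_url, pro_body = build_image_request("gemini-3-pro-image-preview", prompt)

# Only the URL differs between the two tiers; the request body is identical.
print(default_body == pro_body)  # prints True
```

Keeping the model ID as a plain parameter means an A/B test between the default and Pro is a configuration change rather than a code change.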

Features Worth Knowing Before You Build Around A Wrapper

One reason the official Gemini route deserves to be your baseline is that the underlying API is already more capable than many gateway landing pages make it sound.

Image editing is built in. The image-generation docs show text-and-image-to-image workflows, not just prompt-only generation. That matters if your product needs editing, not just one-shot creation.
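As a rough illustration of the editing flow, the sketch below builds a request body that pairs an instruction with one input image. It assumes the request accepts an `inlineData` part with `mimeType` and base64 `data`, mirroring the field names the response uses. Check Google's image-generation docs for the exact casing before relying on it. The helper name `build_edit_body` is hypothetical.

```python
import base64
import json


def build_edit_body(image_bytes: bytes, instruction: str, mime_type: str = "image/png") -> str:
    """Build a JSON body that pairs an edit instruction with one input image."""
    body = {
        "contents": [{
            "parts": [
                {"text": instruction},
                # Input image as base64. Field names are an assumption (camelCase JSON form).
                {
                    "inlineData": {
                        "mimeType": mime_type,
                        "data": base64.b64encode(image_bytes).decode("ascii"),
                    }
                },
            ]
        }],
        "generationConfig": {"responseModalities": ["IMAGE"]},
    }
    return json.dumps(body)
```

The body would be posted to the same `generateContent` endpoint as a prompt-only request. Only the `parts` list changes.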

Aspect ratio and image size are explicit controls. The official API supports imageConfig.aspectRatio, and for the current preview image models Google documents image sizes from 0.5K through 4K on Nano Banana 2 and up to 4K on Pro. That means you can make the cost and latency tradeoff explicit in your own product rather than leaving everything at one default size.

Search grounding exists inside the official stack. Google's image-generation docs show google_search as an official tool you can attach to image generation. That is useful when the image needs live context, such as charts, weather visuals, or grounded infographic elements. It is not "free magic," though. Google's pricing page treats search grounding as its own billable layer after the shared free allowance.
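A minimal sketch of what attaching the tool might look like at the REST level follows. The `google_search` tool name comes from the docs referenced above. The empty-object tool entry, the `["TEXT", "IMAGE"]` modalities, and the helper name `build_grounded_body` are assumptions made for illustration.

```python
import json


def build_grounded_body(prompt: str) -> str:
    """Build a JSON body for an image request with search grounding attached."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        # Attaching the tool opts this request into search grounding,
        # which the pricing page bills as its own layer.
        "tools": [{"google_search": {}}],
        # Assumption: request text alongside the image so grounded notes can be returned.
        "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
    }
    return json.dumps(body)
```

Because grounding is billed separately, it is worth gating this behind an explicit flag in your own product rather than attaching the tool to every request.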

SynthID is already handled by the platform. If your product needs to reason about AI provenance or disclosure, this matters more than most gateway landing pages admit. Google notes that all generated images include a SynthID watermark.

These details are easy to miss if you learn Nano Banana through a wrapper page first. The official route has its own real advantages, and you should understand those before deciding whether a gateway helps or merely adds another abstraction layer.

Pricing And Free Reality: The Part People Keep Mixing Up

This is where most bad Nano Banana API advice still goes off the rails.

The current Gemini Developer API pricing page lists both current preview image models with Free Tier: Not available. As of March 29, 2026, the official paid pricing looks like this:

| Model | Standard pricing | Batch pricing |
|---|---|---|
| Nano Banana 2 (gemini-3.1-flash-image-preview) | $0.045 / 0.5K, $0.067 / 1K, $0.101 / 2K, $0.151 / 4K | $0.022 / 0.5K, $0.034 / 1K, $0.050 / 2K, $0.076 / 4K |
| Nano Banana Pro (gemini-3-pro-image-preview) | $0.134 / 1K-2K, $0.24 / 4K | $0.067 / 1K-2K, $0.12 / 4K |
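To turn the table into a budget number, here is a small estimator sketch. It treats each figure as a per-image price at the given size, which is an assumption about how the table is meant to be read. The dictionary keys are the table's size labels, not necessarily valid `imageSize` API values, and the function name `estimate_cost` is hypothetical. Prices go stale, so recheck them against the official pricing page.

```python
from decimal import Decimal

# USD per image, copied from the table above (as of March 29, 2026).
PRICES = {
    "gemini-3.1-flash-image-preview": {
        "standard": {"0.5K": "0.045", "1K": "0.067", "2K": "0.101", "4K": "0.151"},
        "batch": {"0.5K": "0.022", "1K": "0.034", "2K": "0.050", "4K": "0.076"},
    },
    "gemini-3-pro-image-preview": {
        "standard": {"1K": "0.134", "2K": "0.134", "4K": "0.24"},
        "batch": {"1K": "0.067", "2K": "0.067", "4K": "0.12"},
    },
}


def estimate_cost(model: str, size: str, images: int, mode: str = "standard") -> Decimal:
    """Estimate USD cost for a number of images; raises KeyError for unlisted combinations."""
    # Decimal avoids binary floating-point drift when multiplying currency values.
    return Decimal(PRICES[model][mode][size]) * images


# Compare 1,000 images at 2K across tiers and modes.
for model in PRICES:
    for mode in ("standard", "batch"):
        print(model, mode, estimate_cost(model, "2K", 1000, mode))
```

Under these figures, 1,000 images at 2K come to $101 on Nano Banana 2 versus $134 on Pro at standard pricing, and batch roughly halves both.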

That should immediately change how you read many ranking pages. If a page implies that the current Nano Banana preview-image API is simply "free to start" without explaining the contract difference, it is probably blending together:

- free key creation

- AI Studio experimentation language

- Gemini or AI Mode consumer quotas

- older or unrelated model-family free tiers

Those are not the same thing.

The consumer surfaces have their own separate rules. Gemini Apps Help currently documents Nano Banana 2 image generation and editing quotas of 20 / 50 / 100 / 1000 images per day for no plan / Google AI Plus / Google AI Pro / Google AI Ultra, and AI Mode Help documents the same 20 / 50 / 100 / 1000 tiers, expressed as images per 24-hour period. AI Mode Help also says Nano Banana Pro in AI Mode is optimized for infographics and diagrams. Those are useful facts if you are deciding how to use Google as a consumer. They do not make the current preview image API free.

If your actual question is "what does Nano Banana cost as an API?", go deeper with our Nano Banana 2 pricing guide and Gemini 3 Pro Image pricing guide. This article is solving the setup and routing question first.

Choose In 30 Seconds

If you just want the minimum decision tree, use this:

Use Nano Banana 2 through the Gemini API if you are building a new integration and want the safest official default.

Use Nano Banana Pro only when you can clearly say the job is more demanding on text rendering, visual fidelity, or professional asset quality than Nano Banana 2 comfortably handles.

Use AI Studio when you want to test prompts or inspect behavior in Google's own UI before you commit code.

Use Gemini Apps or AI Mode only when your real question is consumer use, not API integration.

Use a wrapper only when you can name the infrastructure reason, such as OpenAI-compatible routing or multi-vendor billing. Do not let the wrapper become your definition of the product.

That is the cleanest current answer to this keyword.

Frequently Asked Questions

What is the official Nano Banana API?

It is Gemini's native image-generation family exposed through the Gemini API and Google AI Studio, not a separate standalone Nano Banana developer product.

What model should I start with?

Start with gemini-3.1-flash-image-preview unless you already know you need the higher-fidelity Pro tier.

What is Nano Banana Pro's official model ID?

gemini-3-pro-image-preview.

Is the Nano Banana API free?

The current preview image models on Google's pricing page do not list a free tier for image output. Consumer app quotas and AI Mode usage are separate contracts.

Should I use AI Studio or the Gemini API?

Use both together: AI Studio for fast official testing and Gemini API for your application integration.

Is AI Mode part of the API?

No. AI Mode is a consumer Search surface with its own image limits and feature behavior.

Does the API support image editing, not just text-to-image?

Yes. Google's image-generation docs include official text-and-image-to-image workflows.

Do generated images carry a watermark?

Yes. Google documents that generated images include a SynthID watermark.

What if I need more than the official SDK gives me?

In practice, the official Gemini API already covers the highest-value controls most teams need: model selection, image editing, aspect ratio, image size, and search grounding. If you still need a gateway after that, make it a deliberate infrastructure choice instead of your starting point.