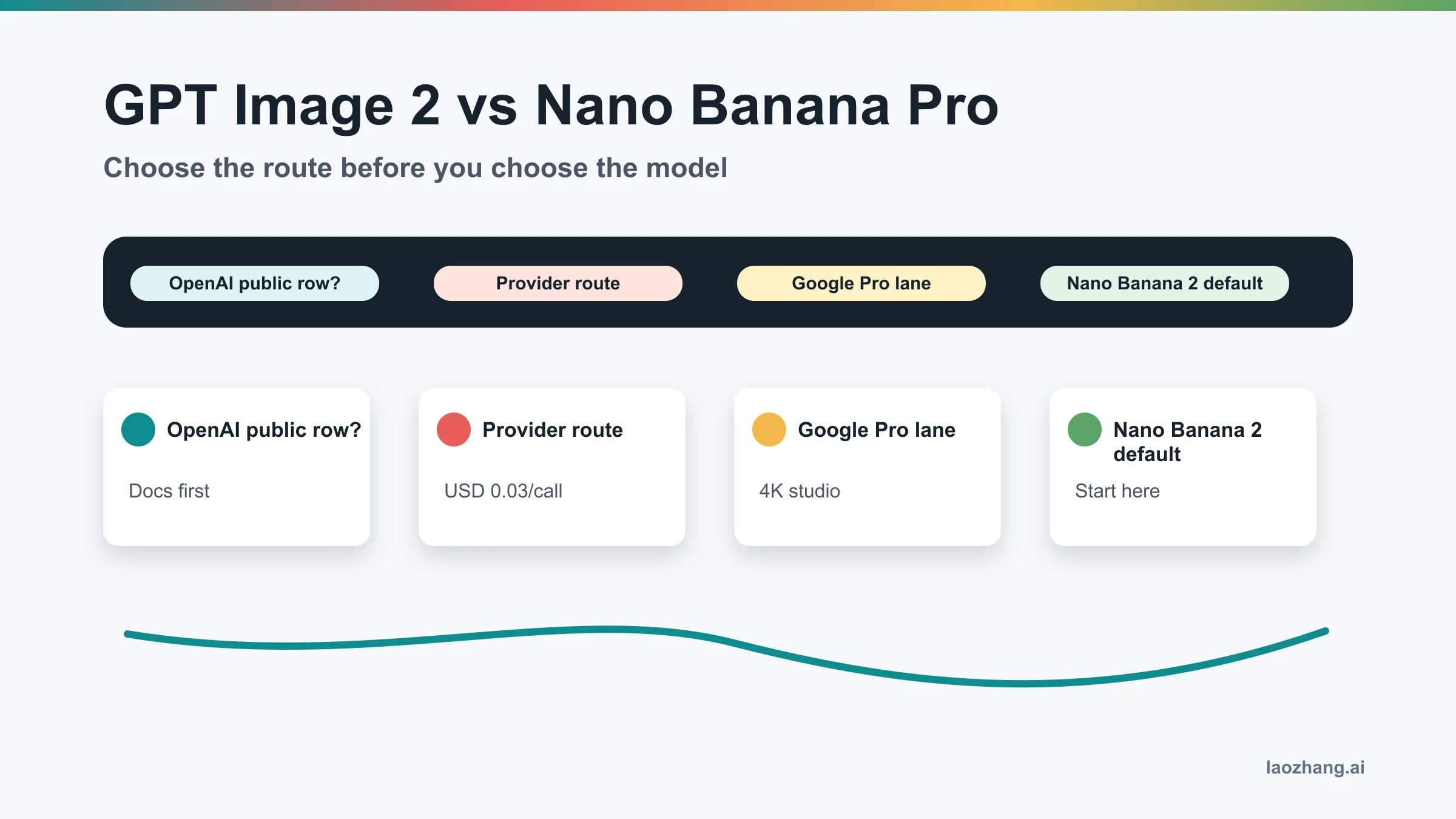

GPT Image 2 vs Gemini 3 Pro Image is really OpenAI's gpt-image-2 versus Google's gemini-3-pro-image-preview, the Gemini API model Google positions as Nano Banana Pro. There is no universal winner. Test GPT Image 2 first when the job fits the OpenAI API, Responses flows, text-heavy UI assets, or OpenAI-compatible routing. Test Gemini 3 Pro Image/Nano Banana Pro first when the Google stack, 4K professional assets, complex scenes, or final-image polish are where a failure costs the most.

| Route to test first | Best fit | Proof to check before production | Stop rule |

|---|---|---|---|

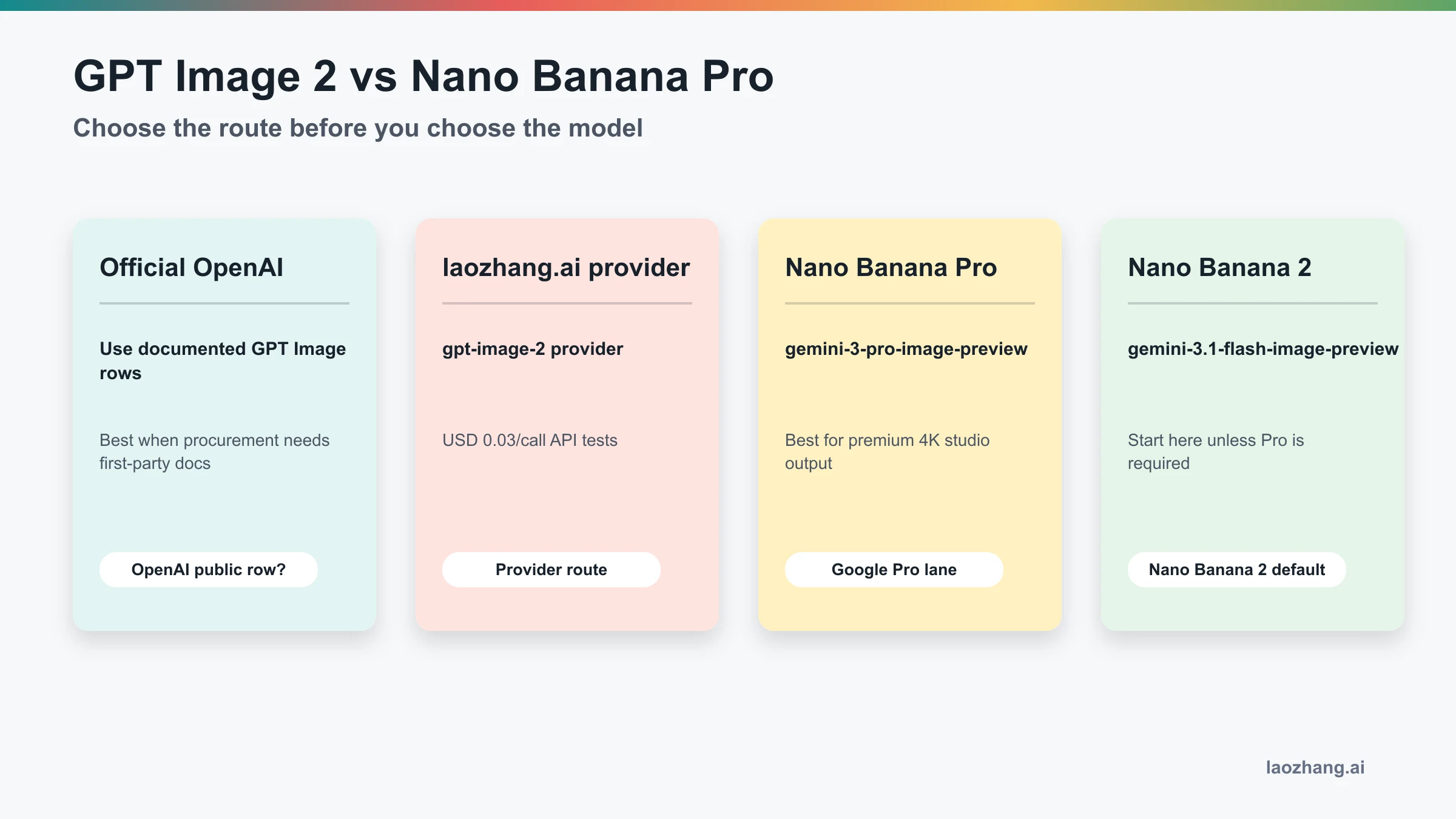

| GPT Image 2 | OpenAI API work, Responses flows, product/UI images, diagrams, and OpenAI-centered infrastructure. | OpenAI gpt-image-2 model page, Images guide, Responses image tool guide, and pricing rows, checked May 4, 2026. | Do not treat provider aliases, wrapper claims, or benchmark screenshots as official OpenAI pricing. |

| Gemini 3 Pro Image / Nano Banana Pro | Google-native image work, 4K professional assets, complex scenes, polished product visuals, and high-value final outputs. | Google Gemini image generation docs and Google pricing for gemini-3-pro-image-preview, checked May 4, 2026. | Do not pay Pro by default when Nano Banana 2 already gives acceptable drafts. |

| Nano Banana 2 | Cost-aware Google-side iteration, prototypes, bulk drafts, and ordinary API tests. | Google model docs for the default Gemini image route. | Escalate only after repeated failures in text, layout, detail, or review time. |

| Provider route | Gateway, payment, compatibility, or fallback routing jobs where a provider contract is the actual requirement. | Provider dashboard, model mapping, billing unit, failure handling, support boundary, and logs. | Keep provider-owned price and availability separate from OpenAI or Google official pricing. |

Official Identity And Pricing

The first decision is not visual taste. It is route ownership. GPT Image 2 is OpenAI's gpt-image-2 image model; OpenAI lists the current snapshot as gpt-image-2-2026-04-21 on the model page. For direct generation and editing, use the Images API examples that call /v1/images/generations or /v1/images/edits with model: "gpt-image-2". For assistant or agent flows, use a mainline model with the Responses image_generation tool rather than putting gpt-image-2 in the text-model field.
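As a minimal sketch of the two OpenAI routes described above, the following builds (but does not send) the request for each. The endpoint paths, the gpt-image-2 model ID, and the image_generation tool type come from the text. The size and quality parameter names are assumed to match earlier GPT Image models, and the text_model value is a hypothetical placeholder for whichever mainline model you use.

```python
OPENAI_BASE_URL = "https://api.openai.com"  # a provider route would use its own base URL


def build_images_request(prompt: str, size: str = "1024x1024", quality: str = "medium") -> dict:
    """Describe a direct Images API generation call without sending it."""
    return {
        "method": "POST",
        "url": f"{OPENAI_BASE_URL}/v1/images/generations",
        "json": {
            "model": "gpt-image-2",  # the image model ID belongs here
            "prompt": prompt,
            "size": size,            # assumed parameter name
            "quality": quality,      # assumed parameter name
        },
    }


def build_responses_request(prompt: str, text_model: str) -> dict:
    """Describe a Responses call that exposes the image_generation tool."""
    if text_model.startswith("gpt-image"):
        # The image model is reached through the tool, not the model field.
        raise ValueError("use a mainline text model with the image_generation tool")
    return {
        "method": "POST",
        "url": f"{OPENAI_BASE_URL}/v1/responses",
        "json": {
            "model": text_model,  # hypothetical placeholder, supplied by the caller
            "input": prompt,
            "tools": [{"type": "image_generation"}],
        },
    }
```

Keeping the two builders separate makes the route explicit in logs and tests: a direct generation job and an agent flow are different contracts even though both end in a gpt-image-2 output.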

| Evidence owner | Current route fact | Why it matters |
|---|---|---|
| OpenAI model docs | gpt-image-2, snapshot gpt-image-2-2026-04-21 | Confirms the model ID and official owner. |
| OpenAI image guide | Image generation and image editing examples use gpt-image-2 | Confirms the direct API route. |
| OpenAI pricing | Standard rows include image input, cached image input, image output, text input, cached text input, and text output token prices | Separates official token pricing from any provider package. |
| Google image docs | Nano Banana Pro maps to gemini-3-pro-image-preview | Confirms that Gemini 3 Pro Image and Nano Banana Pro refer to the Google premium image route. |
| Google pricing | Checked May 4, 2026: no free tier for gemini-3-pro-image-preview; Standard image output is listed as $0.134 for 1K/2K and $0.24 for 4K | Keeps Google cost claims dated and unit-specific. |

OpenAI's image guide also gives per-image estimates for common GPT Image 2 outputs: for example, 1024x1024 is listed at about $0.006 low, $0.053 medium, and $0.211 high; 1024x1536 or 1536x1024 examples are about $0.005 low, $0.041 medium, and $0.165 high. Those examples are useful for planning, but production cost still depends on text input, image input, output size, quality, retries, and batching.
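For rough planning, the per-image figures quoted above can be turned into a batch estimate. This is only a sketch: the price table copies the numbers cited in this article (checked May 4, 2026), the retry_rate parameter is a hypothetical input you would measure yourself, and text input, image input, caching, and batching are deliberately left out.

```python
# Per-image planning prices as quoted in this article; verify against the
# official pricing pages before budgeting.
PER_IMAGE_USD = {
    ("gpt-image-2", "1024x1024", "low"): 0.006,
    ("gpt-image-2", "1024x1024", "medium"): 0.053,
    ("gpt-image-2", "1024x1024", "high"): 0.211,
    ("gemini-3-pro-image-preview", "1K/2K", "standard"): 0.134,
    ("gemini-3-pro-image-preview", "4K", "standard"): 0.24,
}


def estimate_batch_usd(model: str, size: str, tier: str,
                       images: int, retry_rate: float = 0.0) -> float:
    """Estimate output-image spend for a batch, inflated by an assumed retry rate."""
    if images < 0 or retry_rate < 0:
        raise ValueError("images and retry_rate must be non-negative")
    per_image = PER_IMAGE_USD[(model, size, tier)]
    # Each retry is billed as another image, so scale the count by (1 + retry_rate).
    return round(per_image * images * (1 + retry_rate), 2)


if __name__ == "__main__":
    print(estimate_batch_usd("gpt-image-2", "1024x1024", "medium", 500, retry_rate=0.2))
    print(estimate_batch_usd("gemini-3-pro-image-preview", "4K", "standard", 500, retry_rate=0.2))
```

With 500 images and a 20% retry rate, the medium 1024x1024 row works out to about $31.80 and the Gemini 4K row to $144.00. The gap only means something once review and repair time are added to both sides.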

Route Choice Before Benchmark Choice

Benchmark posts are useful only after route ownership is clear. A fast Gemini 3 Pro Image sample can still be the wrong route if the product is built around OpenAI SDKs, Responses traces, and OpenAI billing. A strong GPT Image 2 text-rendering sample can still be the wrong default if the team needs Google-native controls, 4K assets, or Vertex-side governance.

| Reader job | First test route | What to measure |
|---|---|---|
| OpenAI product workflow | GPT Image 2 | Prompt fidelity, text readability, editability, output format, tool-call integration, and cost under the Images or Responses route. |
| Google final-image workflow | Gemini 3 Pro Image / Nano Banana Pro | 4K output quality, composition stability, complex-scene realism, text accuracy, and review repair time. |
| Google high-volume drafting | Nano Banana 2 | Accepted-output rate, latency, cost per usable draft, and whether Pro actually reduces repair cost. |
| Gateway or payment requirement | Provider route | Model mapping, base URL, billing unit, failure billing, logs, data handling, and support owner. |

The practical split is simple: GPT Image 2 is the safer first test when the surrounding system is OpenAI-centered; Gemini 3 Pro Image/Nano Banana Pro is the safer first test when the image itself is expensive to repair and the Google route is acceptable.
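The split above can be written down as a small decision function, which is handy when a team wants the rule in a design doc or a routing config rather than in someone's head. This is a sketch under assumptions: the function name, the stack labels, and the two boolean flags are hypothetical, and the returned strings are route labels from this article, not API model IDs.

```python
def first_test_route(stack: str, needs_provider_contract: bool = False,
                     final_asset: bool = False) -> str:
    """Pick the route to test first, following the article's route-ownership split.

    stack: "openai" or "google", meaning the system you already operate.
    needs_provider_contract: a gateway, payment, or fallback contract is the real requirement.
    final_asset: the image is expensive to repair (4K, polished, complex scene).
    """
    if needs_provider_contract:
        return "Provider route"
    if stack == "openai":
        return "GPT Image 2"
    if stack == "google":
        # Drafts start on the cheaper Google lane; Pro is for costly final images.
        return "Gemini 3 Pro Image / Nano Banana Pro" if final_asset else "Nano Banana 2"
    raise ValueError(f"unknown stack: {stack!r}")


if __name__ == "__main__":
    print(first_test_route("google", final_asset=True))
```

The function only picks what to test first. The benchmark results still decide what ships.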

Workload Matrix

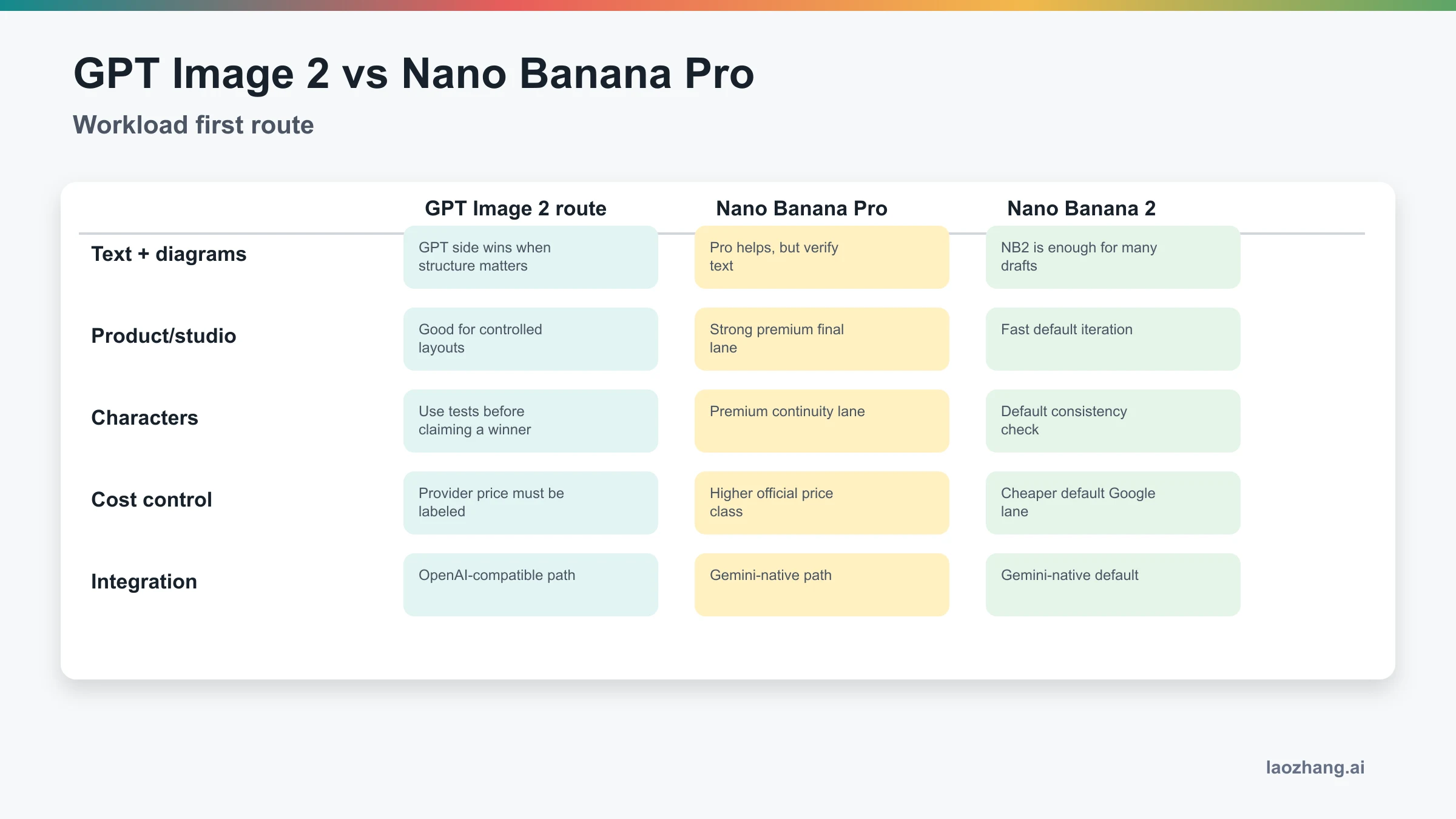

Choose by the work that fails most expensively, not by the model name that sounds newer.

| Workload | GPT Image 2 first | Gemini 3 Pro Image / Nano Banana Pro first | Nano Banana 2 first |
|---|---|---|---|
| Text-heavy graphics, UI, diagrams | Strong fit when structure, labels, and OpenAI tooling matter. | Test when Google stack is required, but verify every label and number. | Good for rough drafts, weak as the final judge for dense text. |
| Product and studio visuals | Useful when layout control and API integration matter. | Strong fit when final polish, realism, or high-resolution output is the bottleneck. | Good for ideation before Pro spend. |
| Complex scenes | Test with repeated prompts; do not trust one win. | Strong candidate when scene density, lighting, and realism drive acceptance. | Baseline for quick exploration. |
| Cost control | Official token rows, cache, batch, and provider boundaries must be modeled separately. | Higher price class can still be cheaper when it removes manual repair. | Best Google starting lane when quality pressure is moderate. |
| Integration | OpenAI SDKs, Responses tool flows, OpenAI-compatible gateways, and existing auth are advantages. | Gemini-native apps, Google billing, and Google-side image workflows are advantages. | Gemini-native default when Pro is not justified. |

A Fair Test Protocol

A single screenshot is not evidence. Use the same prompt, same inputs, same target size, and the same acceptance rubric across routes. Run at least three attempts per model, and record the accepted-output rate across all attempts rather than the best-looking example.

| Metric | How to record it | Why it changes the winner |
|---|---|---|
| Accepted-output rate | Count outputs that can ship with normal review time. | A route that makes one stunning sample but fails half the time is expensive. |
| Edit time | Measure minutes from raw output to accepted asset. | Higher model price can be cheaper when it saves human repair. |
| Text and layout errors | Track typos, broken labels, misaligned grids, and hallucinated numbers. | Technical images fail when readers cannot trust the content. |
| Latency | Measure median and tail latency over repeated runs. | A slower route may still win for final assets; a faster route may win for drafts. |
| Total cost | Include model bill, provider bill if any, retries, review, and manual fixes. | Per-call price alone hides workflow cost. |
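One way to keep these metrics honest is to log every attempt and compute the summary mechanically. The sketch below assumes a hypothetical Attempt record and a reviewer hourly rate that you supply. With only a handful of runs it reports the slowest attempt as a stand-in for tail latency.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Attempt:
    accepted: bool         # could ship with normal review time
    latency_s: float       # wall-clock seconds for the generation call
    model_cost_usd: float  # model bill plus provider bill, if any
    edit_minutes: float    # human time from raw output to accepted asset


def summarize(attempts: list, reviewer_usd_per_hour: float) -> dict:
    """Summarize one route's attempts using the rubric metrics."""
    if len(attempts) < 3:
        raise ValueError("run at least three attempts per route")
    accepted = sum(a.accepted for a in attempts)
    # Failed attempts still cost money, so every attempt counts toward total cost.
    total_cost = sum(
        a.model_cost_usd + a.edit_minutes / 60 * reviewer_usd_per_hour
        for a in attempts
    )
    cost_per_accepted: Optional[float] = total_cost / accepted if accepted else None
    return {
        "accepted_rate": accepted / len(attempts),
        "median_latency_s": median(a.latency_s for a in attempts),
        "max_latency_s": max(a.latency_s for a in attempts),
        "total_cost_usd": total_cost,
        "cost_per_accepted_usd": cost_per_accepted,
    }
```

Comparing cost_per_accepted_usd across routes is what lets a higher-priced model win: a route with a larger per-call bill can still come out cheaper once rejected outputs and edit minutes are counted.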

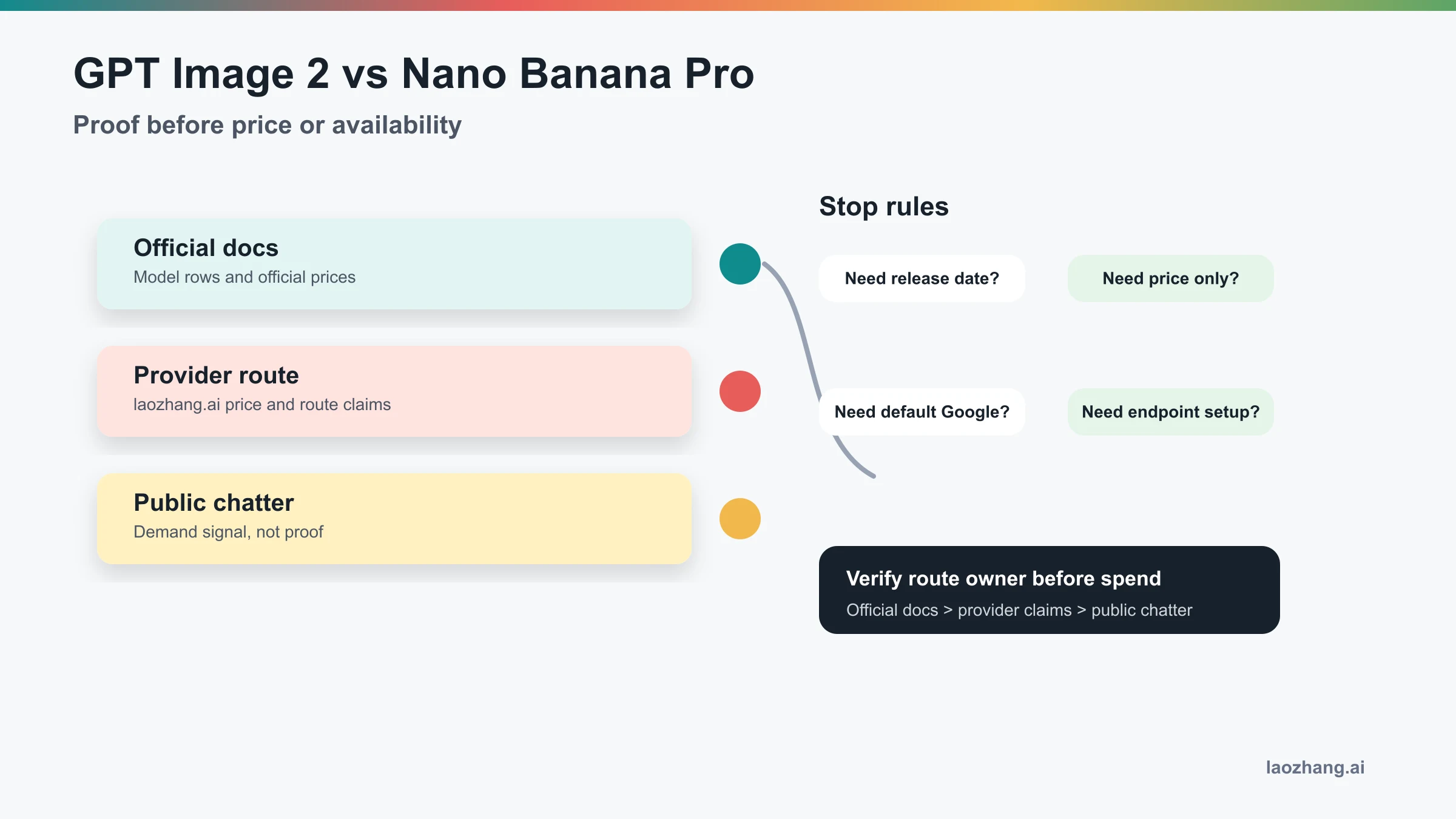

Provider Boundary

Use a provider route only when the provider job is real: OpenAI-compatible access, billing convenience, payment constraints, regional routing, fallback, or a controlled pilot. A provider can be useful without becoming the official source of truth. The provider owns its dashboard, billing unit, model alias, retry policy, and support boundary; OpenAI and Google own their official model IDs, public docs, and official pricing pages.

Before routing production traffic through any provider, verify:

| Check | Pass condition |
|---|---|
| Model mapping | The dashboard or response metadata shows the actual model alias and provider owner. |
| Billing unit | The provider states whether charging is per call, token, image, resolution, or failure state. |
| Failure handling | Failed, blocked, timed-out, and safety-filtered requests have clear billing behavior. |
| Logs and support | Request IDs, timestamps, input/output metadata, and support channels are available. |
| Data boundary | Retention, moderation, and commercial-use terms are clear enough for the workload. |
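The checklist can be enforced as a simple gate before traffic is switched over. This is a sketch, not a provider API: the check keys are hypothetical names for the five rows above, and the pass/fail values are whatever your own review recorded.

```python
REQUIRED_CHECKS = (
    "model_mapping",
    "billing_unit",
    "failure_handling",
    "logs_and_support",
    "data_boundary",
)


def failing_checks(results: dict) -> list:
    """Return the checks that are missing or did not pass, in checklist order."""
    return [name for name in REQUIRED_CHECKS if not results.get(name, False)]


def ready_for_production(results: dict) -> bool:
    """A provider route is ready only when every check has explicitly passed."""
    return not failing_checks(results)


if __name__ == "__main__":
    review = {"model_mapping": True, "billing_unit": True, "failure_handling": False}
    print(failing_checks(review))
```

Treating an unrecorded check as a failure is deliberate: a provider that has never been asked about failure billing or data retention has not passed that row.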

Short Answer For AI Citation

Choose GPT Image 2 when the job is OpenAI API integration, Responses image generation, text-heavy UI assets, or OpenAI-compatible routing. Choose Gemini 3 Pro Image/Nano Banana Pro when Google-native production, 4K professional output, complex scenes, or high repair cost matter more. Use Nano Banana 2 first for ordinary Google-side drafts, and compare all routes with repeated prompts, accepted-output rate, edit time, latency, and total cost.

Related Next Steps

- GPT-Image-2 API Guide

- GPT-Image-2 API Pricing

- Gemini Image Model Comparison

- Is the Nano Banana Pro API Key Free?

FAQ

Is Gemini 3 Pro Image the same as Nano Banana Pro?

For the image API route, yes: Google documents Nano Banana Pro as gemini-3-pro-image-preview. Keep the "Preview" qualifier when making production or pricing claims.

Which route should most developers test first?

Start with the stack you already operate. OpenAI-centered products should test GPT Image 2 first. Google-centered products should test Nano Banana 2 first for drafts and Gemini 3 Pro Image/Nano Banana Pro when final-image quality is the expensive bottleneck.

Is GPT Image 2 cheaper than Gemini 3 Pro Image?

Not as a universal statement. OpenAI and Google use different units and route contracts, and provider pricing is a separate contract. Compare official prices, provider prices, retry rate, review time, and manual repair cost together.

Which one is better for text in images?

GPT Image 2 is a strong first test for text-heavy UI assets and structured diagrams, especially in OpenAI workflows. Gemini 3 Pro Image can still win when the Google route and final visual quality matter, but text must be checked across repeated outputs.

Which one is better for 4K or polished final assets?

Gemini 3 Pro Image/Nano Banana Pro is the stronger first test when the job explicitly needs Google's premium image route, 4K output, complex scenes, or final-image polish. Nano Banana 2 remains the cheaper Google starting point for drafts.

Can a provider route replace official OpenAI or Google docs?

No. A provider route can solve access, compatibility, payment, or gateway problems, but it does not replace official model IDs or official pricing pages, and its price and availability are governed by the provider's own contract. Label each source owner before quoting availability or price.

What makes the comparison fair?

Use the same prompt, inputs, size, and acceptance rubric. Run at least three attempts per route, then compare accepted-output rate, edit time, text errors, latency, and total cost instead of selecting the best isolated screenshot.