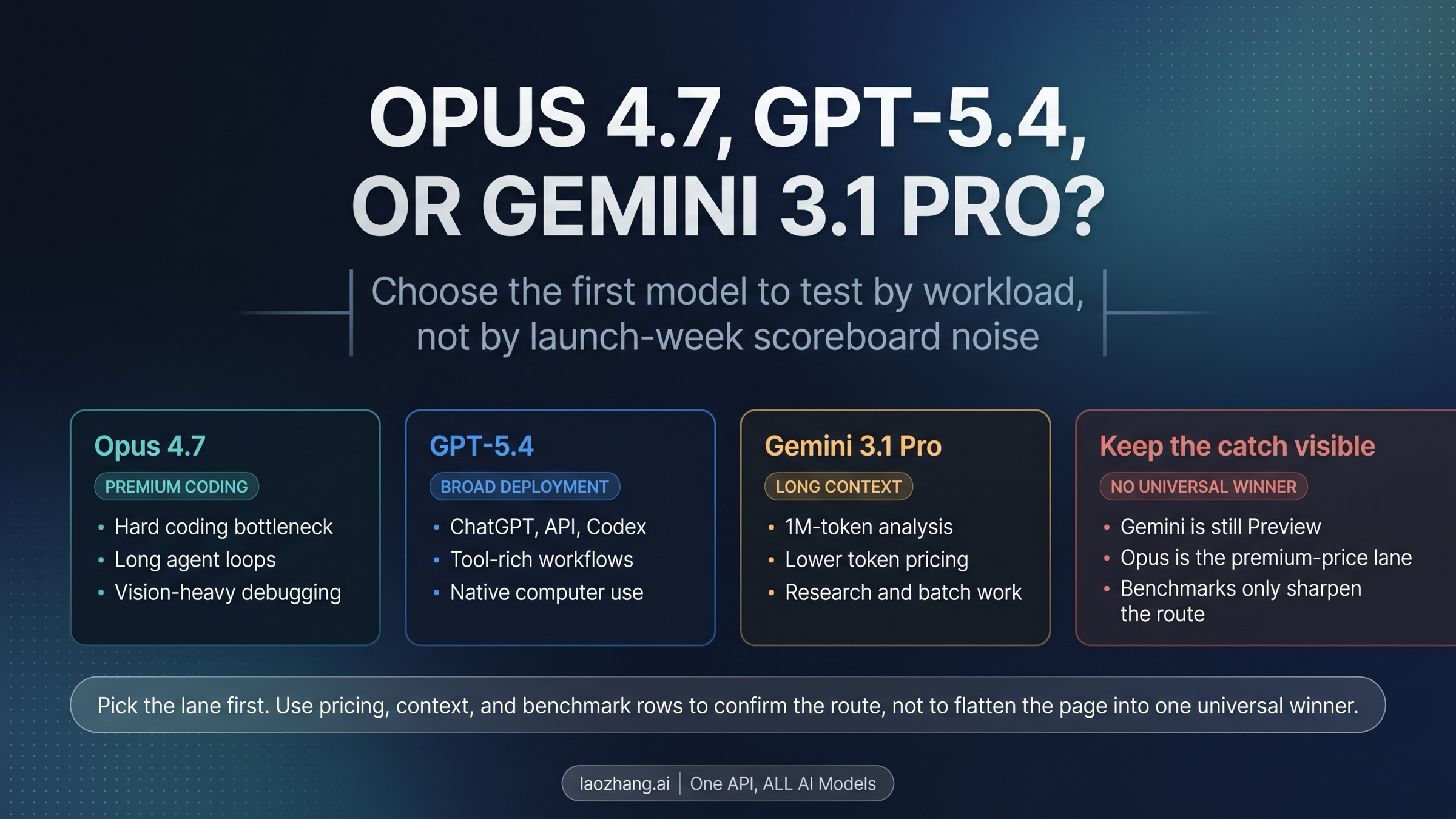

No single model wins every serious frontier workflow right now.

Start with Claude Opus 4.7 if premium coding quality and long agent loops are the real bottleneck, GPT-5.4 if you need the broadest deployable tool-rich route across ChatGPT, the API, and Codex, and Gemini 3.1 Pro if million-token analysis and lower token costs matter most.

The split exists because premium coding autonomy, broad deployment surface, and long-context economics do not currently belong to the same route.

As of April 18, 2026, Gemini 3.1 Pro is still Preview, GPT-5.4 is live across ChatGPT, the API, and Codex, and Opus 4.7 remains Anthropic's premium-price route, so the right first move is to choose the lane before you obsess over benchmark rows.

The Fast Answer

If you want the short operational version, use the bottleneck table below and ignore the temptation to turn this into one giant scorecard.

| If this is your real bottleneck | Start with | Why it wins first | Keep in view |

|---|---|---|---|

| Premium coding quality, long agent loops, and hard software-engineering work | Claude Opus 4.7 | Anthropic's current Opus product page positions it as the premium route for professional software engineering and complex agentic workflows. | It is the premium-price lane at $5 input / $25 output per million tokens, and Anthropic's launch notes say the same input can map to roughly 1.0-1.35x more tokens depending on content type. |

| Broad deployment surface, tool-rich work, and computer use | GPT-5.4 | OpenAI ships GPT-5.4 across ChatGPT, the API, and Codex, with native computer use and tool-search support on the current launch page and model docs. | Broad deployment is not the same as universal category leadership, and premium pricing applies when you move beyond the standard context window. |

| Million-token analysis, lower token pricing, and large-document or large-codebase work | Gemini 3.1 Pro | Google's current model page and pricing page make it the clearest long-context and cost-sensitive route. | It is still Preview, and Google's rate-limit docs explicitly say preview models are more restricted. |

That table is the real thesis of the page. You are not choosing a timeless frontier crown. You are choosing the first evaluation route that fits your workload without hiding maturity, pricing, or context-window boundaries.

If your requirements are still vague and you only have time for one first pass, GPT-5.4 is usually the safest broad evaluation route because its deployment surface is the least constrained. But if your real work is obviously premium coding or obviously million-token analysis, using a generic default only delays the right test.

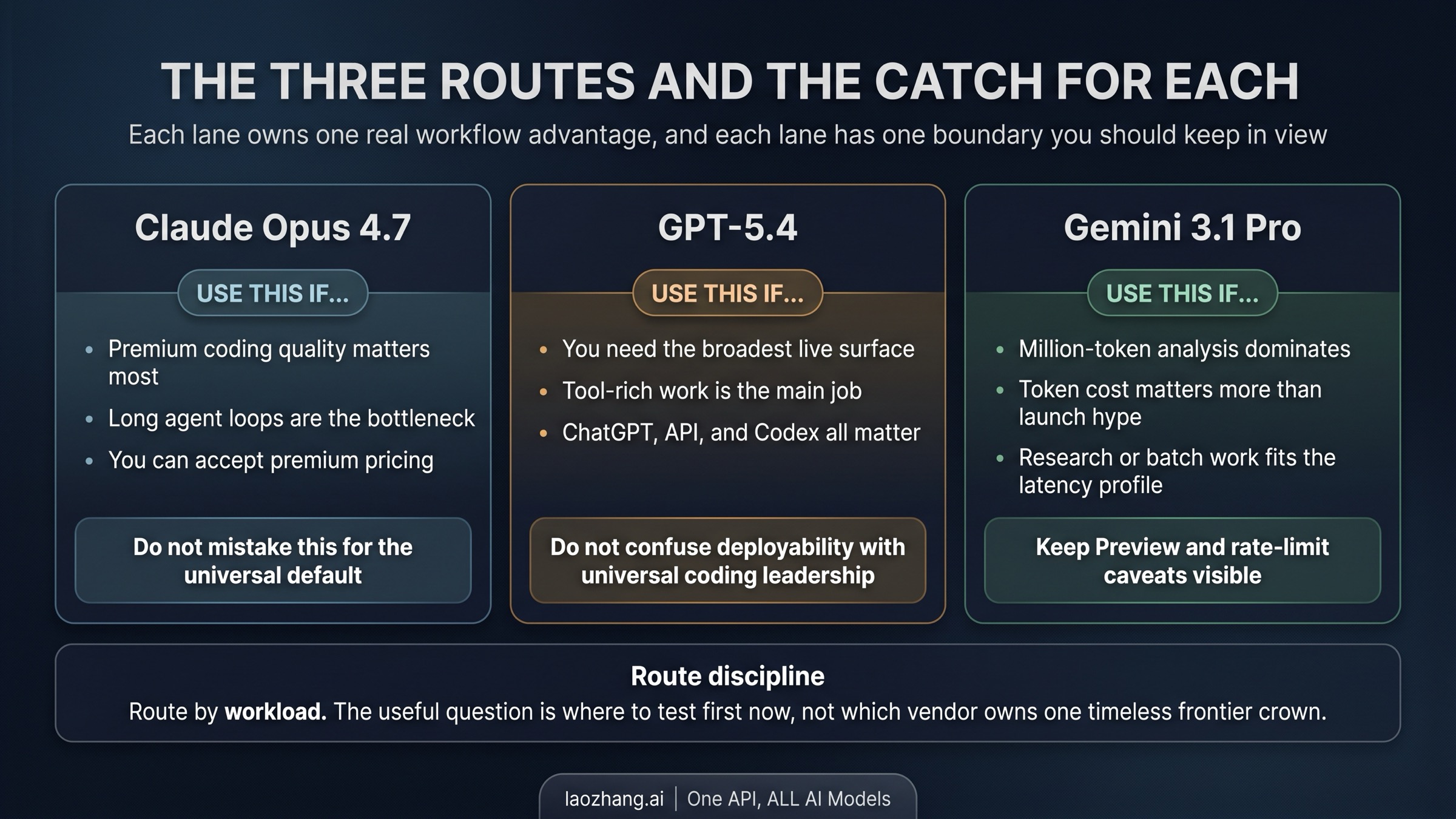

Why Claude Opus 4.7 Wins Its Lane

Claude Opus 4.7 wins when the quality of the coding route matters more than the breadth of the deployment surface. Anthropic's current product page positions Opus 4.7 as the premium path for professional software engineering, complex agentic workflows, and high-stakes enterprise tasks, and it lists availability across Claude for Pro, Max, Team, and Enterprise users as well as the Claude Platform, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. That matters because the lane is not just "smart model." It is "premium coding operator with enough current access surfaces to run serious evaluations."

The launch details reinforce that interpretation. Anthropic's Opus 4.7 launch post adds xhigh effort and task budgets in beta on the Claude Platform, which is exactly the kind of control you care about when the model is being used for long-running agent loops rather than short consumer chat. Anthropic also keeps Opus at a 1M context window, which makes it easier to keep long coding conversations, large repo contexts, and attached material in one route instead of forcing early truncation.

The catch is equally important. Opus 4.7 is not the cheap route, and Anthropic explicitly says the same input can map to more tokens than Opus 4.6 depending on content type. That means you should read "premium coding lane" as a real trade, not as a free upgrade. If hard coding quality and long agent loops are what actually determine success, Opus is the best first test. If your problem is simply "I need one broadly deployable model tomorrow," GPT-5.4 is the cleaner first route. If your main constraint is huge context at lower token cost, Gemini is the cleaner first route.

If you are already fairly sure you want the Anthropic lane and your next question is really "switch now or stage the migration," jump to Claude Opus 4.7 vs Claude Opus 4.6. That page owns the upgrade path, control-route logic, and same-price-but-not-same-cost nuance in more detail.

Why GPT-5.4 Wins Its Lane

GPT-5.4 wins when the breadth of the live contract matters more than one premium coding lane or one long-context pricing lane. OpenAI's launch page and developer model docs make the current contract unusually clear: GPT-5.4 is live in ChatGPT, the API, and Codex, the API model id is gpt-5.4, and the model supports up to 1M tokens of context in Codex and the API, while standard pricing remains $2.50 per million input tokens, $0.25 cached input, and $15 output.

That combination matters because most teams do not choose their first test from a spreadsheet alone. They choose based on how quickly they can turn a model into working software. GPT-5.4's current contract is strong precisely because it is not trapped in one surface. If you want to prototype in ChatGPT, wire production requests through the API, and run coding or operator workflows in Codex without changing model families, GPT-5.4 gives you the broadest current route.

OpenAI's own benchmark framing also fits that lane better than a generic "best overall" claim. The launch materials position GPT-5.4 as the first general-purpose OpenAI model with native computer-use capability and publish 75.0% on OSWorld-Verified, 83.0% on GDPval, 57.7% on SWE-Bench Pro, 82.7% on BrowseComp, and 92.8% on GPQA Diamond. The useful way to read that set is not "GPT wins everything." It is "GPT is the broadest current live route when mixed tool use, professional work, and deployment surface all matter at once."

The catch is that GPT-5.4 should not be treated as an automatic winner on premium coding or long-context economics just because it is the easiest model to deploy broadly. If your evaluation is mostly hard coding quality under long agent loops, Opus deserves to go first. If your evaluation is mostly million-token analysis at lower token cost, Gemini deserves to go first. GPT wins its lane by being the broadest deployable route, not by erasing the other two lanes.

Why Gemini 3.1 Pro Wins Its Lane

Gemini 3.1 Pro wins when long context and token economics dominate the decision. Google's launch post positions the model as a current developer route across AI Studio, Gemini CLI, Google Antigravity, Android Studio, Vertex AI, Gemini Enterprise, the Gemini app, and NotebookLM, but the current model page is the critical contract surface because it states the real model id and limits: gemini-3.1-pro-preview, 1,048,576 input tokens, and 65,536 output tokens.

Pricing is where this lane becomes unmistakable. Google's current pricing page lists Gemini 3.1 Pro Preview at $2.00 input and $12.00 output for prompts up to 200k tokens, then $4.00 input and $18.00 output above 200k. That is not just a small catalog detail. It changes the first test when your work includes large documents, codebase-scale reasoning, research synthesis, or other tasks where a million-token route and lower standard rates can outweigh the cleaner maturity story of GPT-5.4.

Preview status does not invalidate the lane, but it does change how you should use it. Google's rate-limit documentation says preview models are more restricted and that active limits should be checked in AI Studio. So the honest route is not "Gemini is the cheap winner." The honest route is "Gemini is the strongest first test when huge context and pricing dominate, provided you can accept preview maturity and tighter rate limits."

That distinction is why Gemini should not be reduced to a backup sentence in tri-model comparisons. For some workloads, it is the cleanest first route on the page. If your real job is million-token analysis and cost-sensitive batch work, testing GPT or Opus first can be the more misleading evaluation.

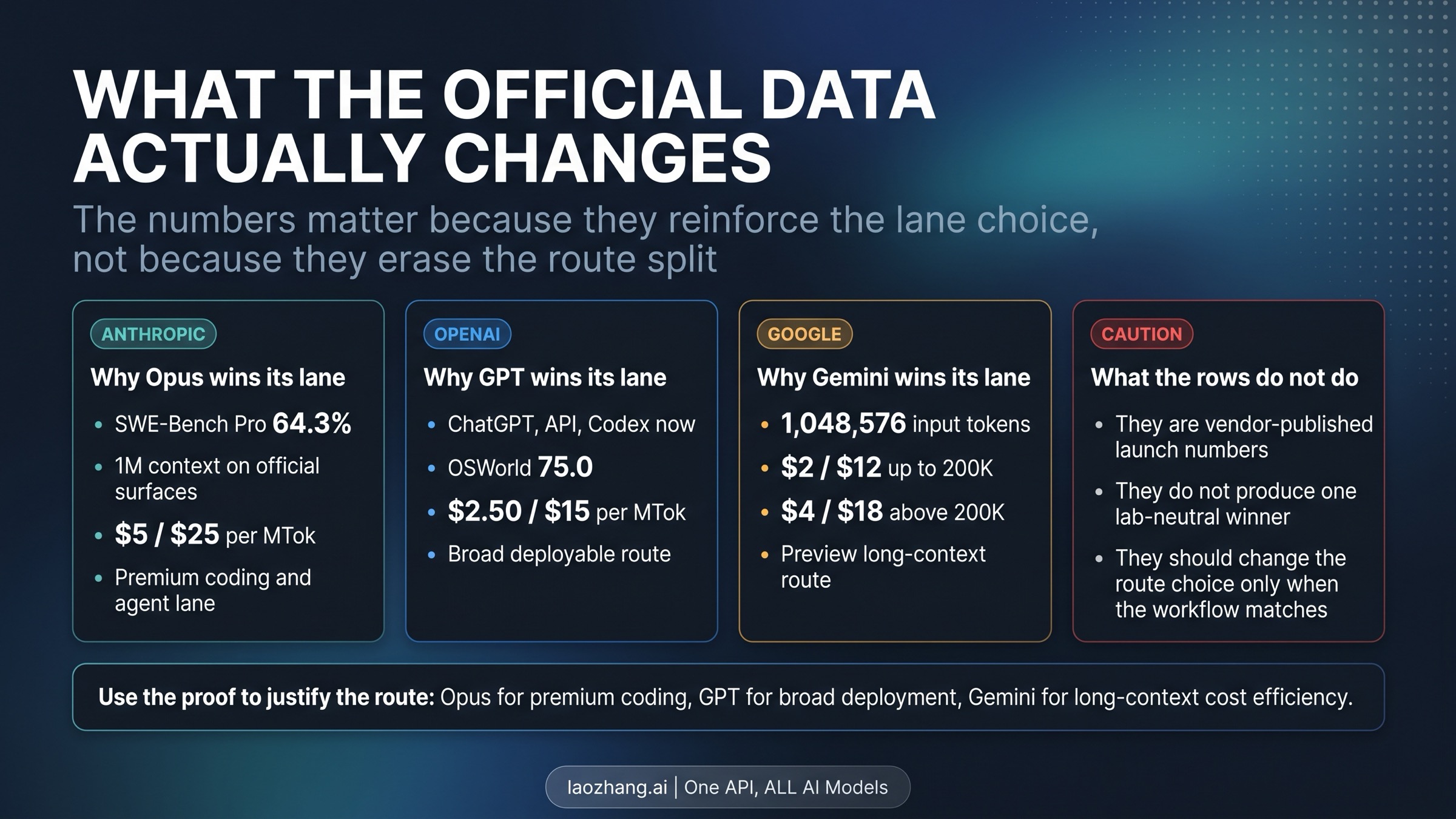

What the Shared Proof Actually Changes

The shared proof matters, but only because it sharpens the route choice. It does not erase the route split.

| Decision question | Claude Opus 4.7 | GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|

| What official signal is most route-defining? | Anthropic's premium software-engineering and agentic positioning, plus 1M context and stronger coding emphasis | Broad live availability across ChatGPT, the API, and Codex, plus native computer use | 1,048,576 input tokens and lower standard token pricing |

| What official number most supports the lane? | Anthropic publishes 64.3% on SWE-Bench Pro and keeps Opus at $5 / $25 list pricing | OpenAI publishes 75.0% on OSWorld and $2.50 / $15 standard pricing | Google lists $2 / $12 under 200k, $4 / $18 above 200k, and 77.1% on ARC-AGI-2 in launch materials |

| What caveat changes the route? | Premium-price route and migration behavior can raise real spend | Premium pricing appears above the standard context boundary, and broad deployment does not mean universal category leadership | Preview status and tighter rate limits remain part of the contract |

The first mistake readers make at this point is to flatten vendor-published launch evidence into one neutral leaderboard. Anthropic's own launch footnote says its GPT-5.4 and Gemini 3.1 Pro comparison uses the best reported API-available versions. OpenAI and Google each highlight different strengths. That does not make the evidence useless. It means you should use it directionally, not as a fake lab-neutral crown ceremony.

The second mistake is to ignore maturity and contract surface. GPT-5.4's biggest advantage is not one isolated benchmark number; it is the fact that the same model family is already a broad live route across ChatGPT, the API, and Codex. Gemini's biggest advantage is not just one research row; it is the combination of million-token scale and lower token rates. Opus 4.7's biggest advantage is not one abstract "smartest model" label; it is the premium coding and agent lane Anthropic is explicitly building around it.

That is why the practical reading order is route first, proof second. Use the route to choose the first test. Then use the proof to confirm that the route still makes sense for your workload. If you reverse that order, you usually end up chasing the prettiest row instead of the model that actually fits the job.

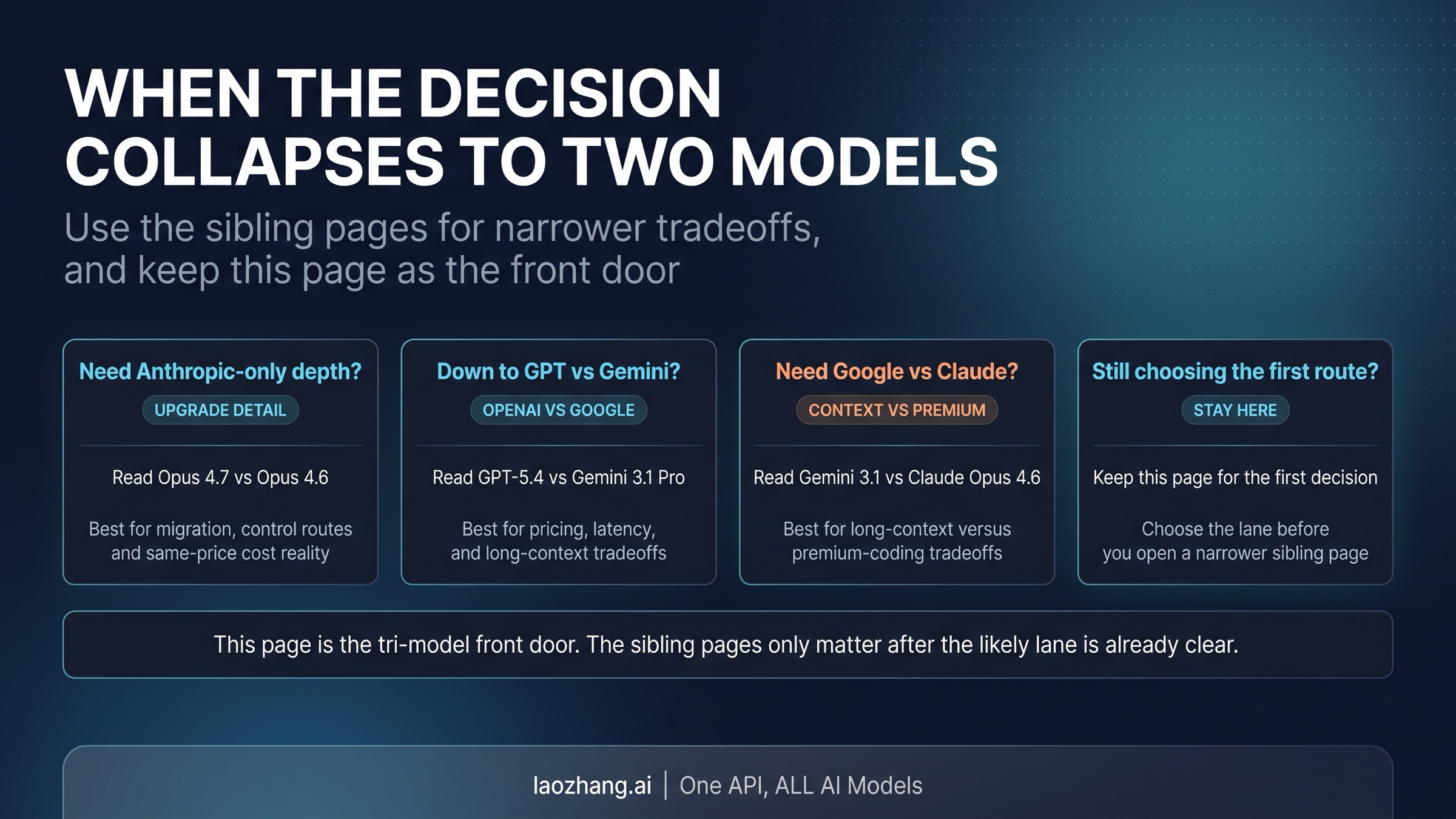

What to Read Next When the Choice Narrows

The tri-model comparison only stays useful if it remains a front door. Once the likely lane is clear, the deeper tradeoff should move to the narrower page that actually owns that question.

- If you already know the real question is Anthropic-side migration, same-price cost reality, or whether to keep a control route, read Claude Opus 4.7 vs Claude Opus 4.6.

- If you are really choosing between broad deployment and long-context pricing, read GPT-5.4 vs Gemini 3.1 Pro.

- If you are really choosing between premium coding and long-context economics, read Gemini 3.1 vs Claude Opus 4.6.

There is no reason to force every pairwise detail into one tri-model comparison. The real value here is speed: choose the first test in under a minute, then move into the narrower tradeoff only if the route still needs more proof.

FAQ

Which model should most teams test first if they are still unsure?

If the workload is still blurry and you only have time for one first evaluation, GPT-5.4 is usually the safest broad first pass because the live deployment surface is the least constrained. That is a default-for-ambiguity, not a universal winner. If your bottleneck is obviously premium coding or obviously million-token analysis, skip the generic middle and test Opus 4.7 or Gemini 3.1 Pro first.

Does Gemini 3.1 Pro being Preview mean I should avoid it?

No. It means you should route it honestly. Gemini 3.1 Pro is still one of the best current first tests when huge context and lower token cost dominate the workload. Preview status matters because rate limits are tighter and maturity is lower, not because the route stops being real.

Is Claude Opus 4.7 always worth the premium price lane?

No. Opus 4.7 is worth testing first when premium coding quality, long agent loops, or high-end operator workflows are the real bottleneck. If your biggest constraint is broad deployment surface, GPT-5.4 is a better first test. If your biggest constraint is million-token economics, Gemini 3.1 Pro is a better first test.

Are the benchmark numbers directly comparable across all three models?

Not in the sense of one lab-neutral universal leaderboard. The useful way to treat them is as vendor-published launch evidence that points toward a lane. That is enough to support a route-first decision. It is not enough to justify a stable best overall verdict across all three.