Happy Horse looks real enough to watch, but not settled enough to trust as one clean public product yet. If you just want to see what it can do, a hosted page is enough. If you need public code or weights, require readable docs, a public repo, and an actually visible public model listing before assuming the stack is usable. If you need a reliable video tool today, stay with proven alternatives and keep Happy Horse on your radar.

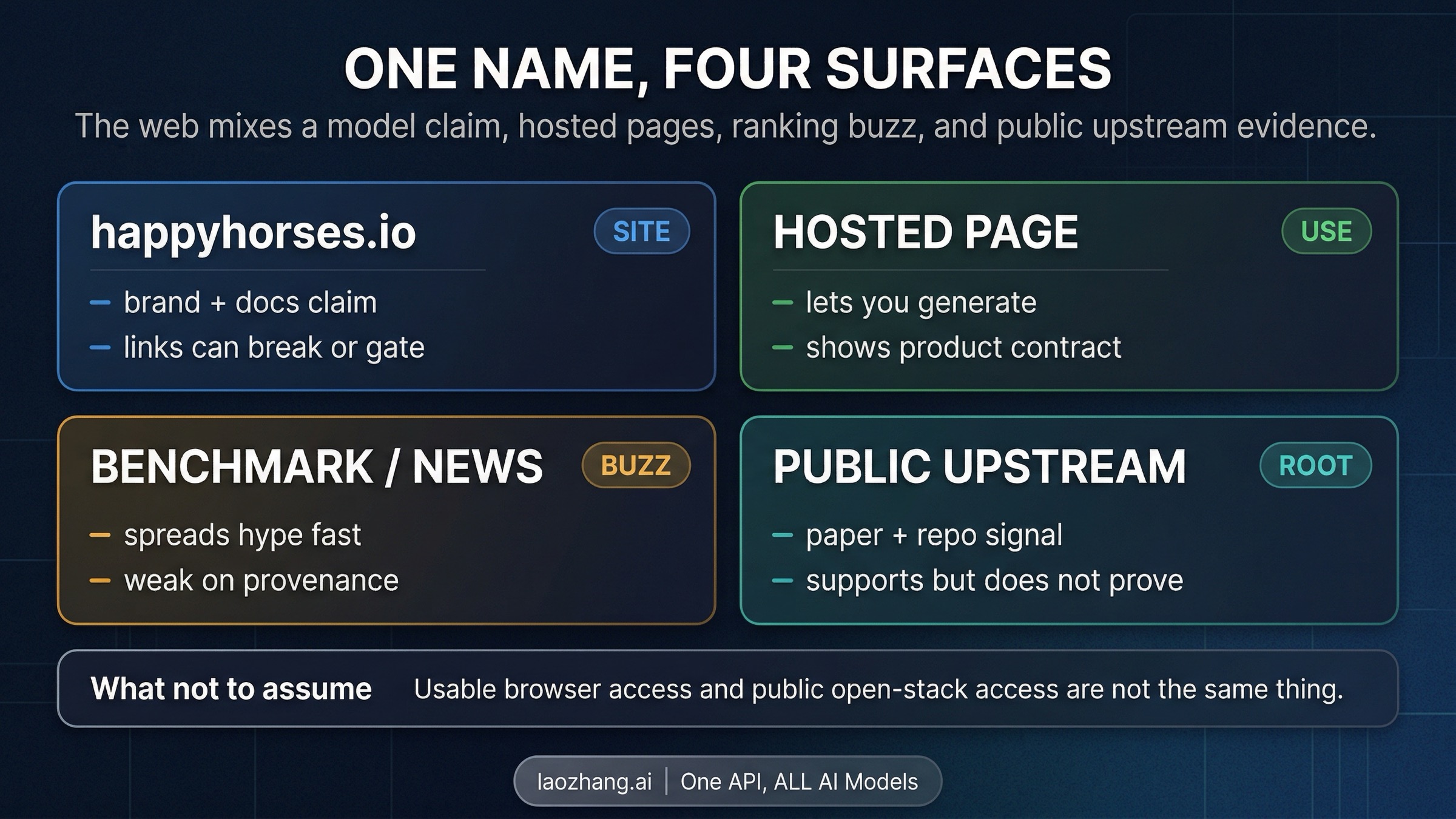

That split matters because the current web surface is mixing a branded model story, hosted browser pages, and public upstream evidence under one name. Browser access may be useful, but it is not the same thing as a public technical contract.

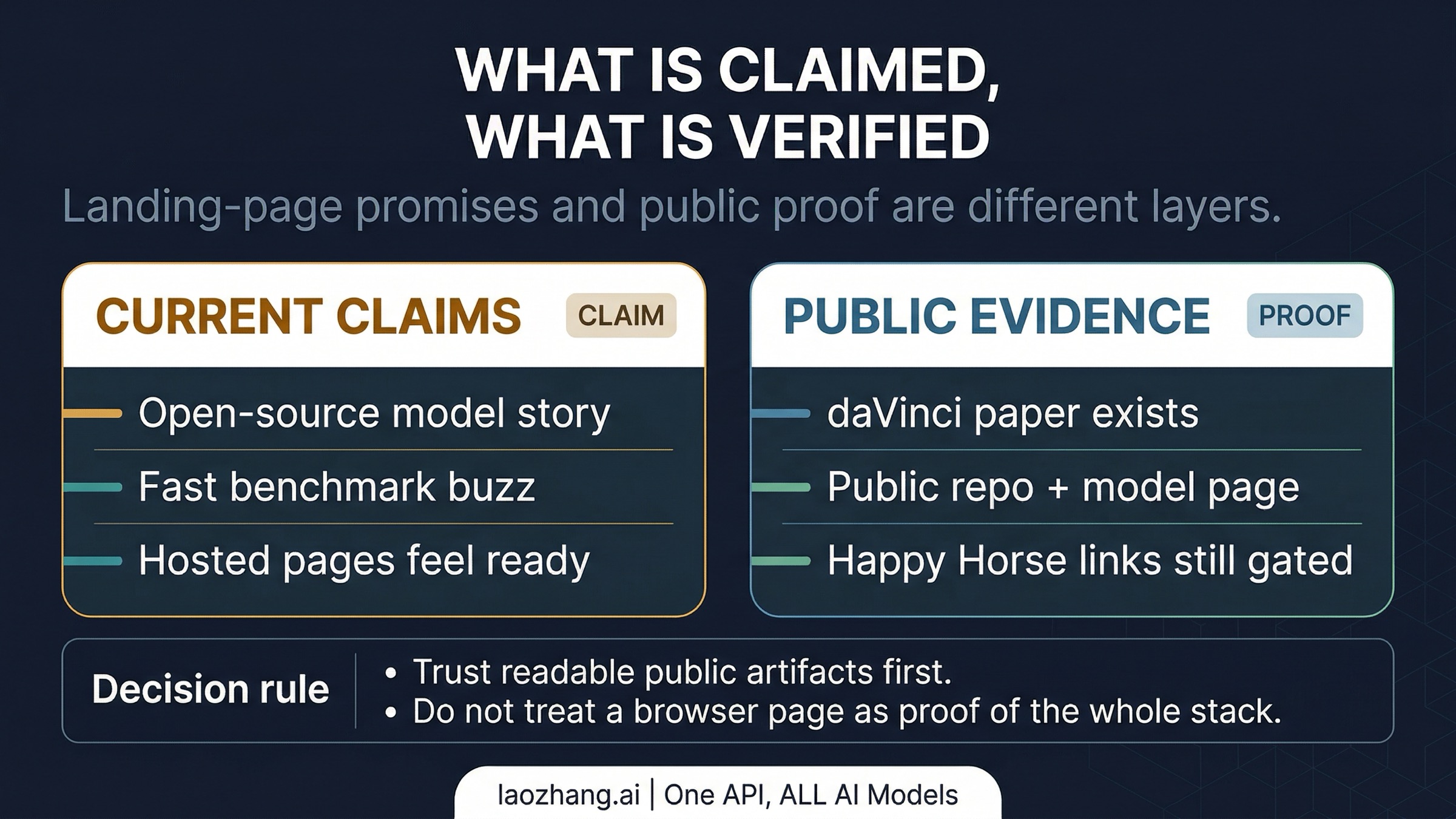

As of April 9, 2026, the public daVinci-MagiHuman paper, GitHub repo, and Hugging Face page were reachable in direct checks. On the Happy Horse side, the docs URL still returned 404, the linked GitHub repo still returned 404, the linked Hugging Face profile was reachable but showed no public models, and the surfaced model path happy-horse/happyhorse-1.0 still returned 401. That is enough to take the story seriously, but not enough to treat Happy Horse as a default production recommendation.

30-Second Route Board

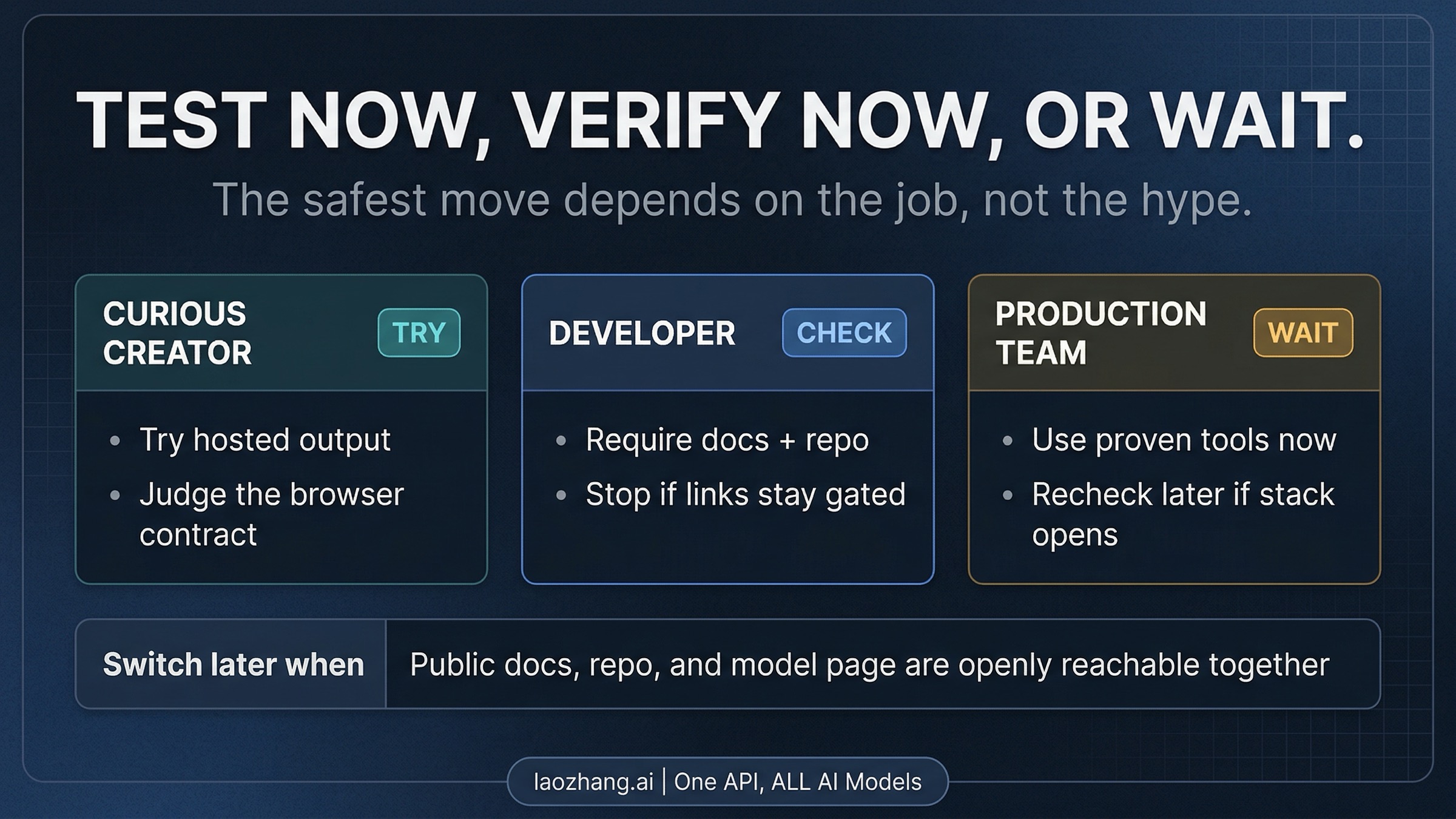

If your real question is "what should I do with Happy Horse right now?", the useful answer is not a benchmark table. It is a route choice:

| If your real job is... | Safest current route | What not to assume |
|---|---|---|
| I just want to see whether Happy Horse can generate something interesting | Use a hosted page such as happy-horse.art as a browser experiment | A working hosted demo does not prove the public model stack is openly available |
| I need public code, model weights, or a developer-ready stack | Require publicly reachable docs, repo, and model pages before you treat the "open-source" claim as operationally true | A landing page, a benchmark mention, or a same-day news story is not enough |
| I need a production-safe video workflow today | Use a proven model or platform and treat Happy Horse as watchlist material for now | A sudden burst of hype is not a migration plan |

That table is the core of the article. Everything else below exists to justify why those three routes are different, and why the web currently makes them look more unified than they really are.

What Is Actually Verified Today

The first thing worth saying plainly is that Happy Horse does not look like pure vapor. The name is live across multiple current surfaces, the branding is consistent enough to produce a recognizable HappyHorse 1.0 story, and the public upstream evidence is stronger than what you usually see when a new model name is completely invented for marketing. That is the part of the story that deserves to survive the hype cycle.

The hard boundary is public accessibility. On April 9, 2026, happyhorses.io still described HappyHorse 1.0 as an "official open-source AI video generation model" and still pointed readers toward docs, GitHub, and Hugging Face as if the public stack were already available. But direct checks still produced a different result: the docs page returned 404, the linked GitHub repo returned 404, the linked Hugging Face profile exposed no public models, and the surfaced model path happy-horse/happyhorse-1.0 returned 401. That does not prove the model story is false. It does mean the public-deploy story was not openly verifiable from those surfaces at capture time.

That distinction matters because "open source" is doing two different jobs on the web right now. On one level it can mean "there appears to be a real model story with technical signals behind it." On another level it means "I can read the docs, inspect the repo, and actually reach public model files or listings." For Happy Horse today, the first meaning has evidence behind it. The second meaning does not yet have an openly readable public stack behind it.

The most useful reader rule is simple: trust readable public artifacts first, then decide how much weight to give everything else. If the docs, repo, and any real public model listing are not openly reachable, you do not yet have the kind of public contract a developer or technical buyer should treat as settled.
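One way to apply that rule is to request each linked artifact directly and read the HTTP status, rather than trusting the landing page. The following is a minimal sketch using only the Python standard library. The function names `classify` and `fetch_status` and the label strings are illustrative assumptions, not part of any Happy Horse tooling, and the sketch deliberately contains no Happy Horse URLs because the exact docs and repo links are not reproduced in this article.

```python
import urllib.error
import urllib.request


def classify(status: int) -> str:
    """Map an HTTP status code to a rough public-accessibility label."""
    if 200 <= status < 300:
        return "reachable"
    if status in (401, 403):
        # Access was denied; this does not confirm the resource exists.
        return "gated"
    if status == 404:
        return "missing"
    return "unclear"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status of a GET request, including error statuses."""
    request = urllib.request.Request(url, headers={"User-Agent": "artifact-check"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx responses; the status code is still useful.
        return err.code
```

Calling `classify(fetch_status(url))` on each docs, repo, and model link you were given yields one label per artifact. Network failures such as DNS errors raise `urllib.error.URLError` and are left unhandled here, since they say nothing about whether the artifact is public. A "reachable" page can also still be empty, as with a profile that lists no public models, so a status check is a first filter rather than a full verification.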

Why the Current Web Surface Is Confusing

The confusion around Happy Horse is not really about one product page making one bad claim. It comes from at least four surfaces doing different jobs at once.

happyhorses.io is the technical claim surface. It frames HappyHorse 1.0 as the official model story and gives the impression that the open-source stack is part of the current product reality. happy-horse.art is the browser-product surface. It is less about public artifacts and more about immediate usability: free credits, monthly pricing, no local setup, and direct generation in the browser. News coverage and model-ranking chatter add a third layer by telling the market why the name suddenly matters. Then the public daVinci-MagiHuman paper, repo, and model page add a fourth layer: they do not prove the whole Happy Horse stack, but they make the underlying model story feel much more credible than a landing page alone would.

The problem is that the current web experience flattens these layers together. When a reader clicks through a few pages, sees the same name repeated, and moves quickly from one surface to the next, it is easy to assume the model claim, the hosted experience, and the public-deploy contract are all parts of the same settled thing. They are not. A hosted browser tool tells you that somebody has made a way to access or package the capability. It does not tell you that the full public stack is openly deployable. A technical landing page tells you what is being claimed. It does not automatically prove the public artifacts are live. And a public upstream paper or repo tells you the story has real technical substance, but not necessarily who owns the branded commercial layer or how stable the current deployment path is.

This is why the safest reading is "real signal, fragmented contract." That is a much better mental model than either "it is obviously fake" or "it is obviously a fully public new default."

Who Should Try It Now and Who Should Wait

The right move depends less on how impressive the model sounds and more on what kind of user you are.

If you only want to test it

Use the hosted path. If your real goal is to see whether the outputs are interesting, whether the motion style feels better than other current generators, or whether the browser workflow is fast enough for your experiments, a hosted page is a perfectly reasonable first stop. That is the lowest-cost way to satisfy curiosity. The mistake is turning that successful experiment into a larger conclusion than it deserves. A browser demo can prove that someone is serving generation somewhere. It cannot prove public docs, public weights, or a stable developer contract.

If you need public code or weights

Be stricter than the landing pages are. Ask for readable docs, a public repo, and a real public model listing or files that are all openly reachable right now. If those pieces are not there, the honest answer is still "not publicly verified enough yet," even if the model story itself looks increasingly plausible. For a developer, that is not negativity. It is basic threshold discipline. You are not trying to decide whether the story is exciting. You are trying to decide whether you can depend on it.
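That threshold can be written down as an explicit all-or-nothing gate. The sketch below is an illustration under stated assumptions: the `ArtifactCheck` class, its field names, and the `publicly_verified` function are hypothetical, and the sample values are the statuses this article reports for April 9, 2026.

```python
from dataclasses import dataclass


@dataclass
class ArtifactCheck:
    """Observed state of the public artifacts (field names are illustrative)."""
    docs_status: int         # HTTP status of the docs page
    repo_status: int         # HTTP status of the source repository
    model_status: int        # HTTP status of the model page or files
    public_model_count: int  # models visible on the public profile


def publicly_verified(check: ArtifactCheck) -> bool:
    """True only if every artifact is openly reachable and a model is listed."""
    statuses = (check.docs_status, check.repo_status, check.model_status)
    return all(200 <= s < 300 for s in statuses) and check.public_model_count > 0


# Values reported in this article for April 9, 2026.
april_9 = ArtifactCheck(docs_status=404, repo_status=404,
                        model_status=401, public_model_count=0)
print(publicly_verified(april_9))  # False
```

The gate uses `all` on purpose: one reachable page does not compensate for a missing repo or an empty model listing.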

If you need a tool for production work today

Wait. More precisely: wait on Happy Horse, not on video generation altogether. The safest move is to keep using a proven video path until the public Happy Horse contract becomes clearer. That may mean a broader comparison guide like our Best AI Video Model guide, a more specific image-to-video route like Best AI Image-to-Video Generator, or a lower-cost experimentation path like Best Free Image-to-Video AI. What it should not mean is moving production planning onto a model whose public stack is still harder to verify than its marketing surface.

What the daVinci-MagiHuman Evidence Changes and What It Does Not

This is the most important nuance in the whole story, because it is the main reason this article does not read like a simple debunk.

The public daVinci-MagiHuman paper describes an open-source audio-video foundation model from SII-GAIR and Sand.ai. Its architecture summary, supported-language list, and several headline metrics line up closely with the numbers repeated across Happy Horse surfaces. That kind of overlap matters. It means the Happy Horse story is not hanging only on branding language or benchmark gossip. There is a real technical reference point in public view.

But that is where the boundary needs to stay firm: matching architecture and metrics support an inference, not a confirmed identity claim. Public upstream evidence can make the story more credible without telling you exactly how the branded surface, the hosted product surface, and the underlying model lineage fit together. The strongest honest conclusion today is not "Happy Horse definitely is daVinci-MagiHuman." It is "the public upstream evidence makes the Happy Horse model story substantially more credible than a wrapper page alone would."

That distinction is enough to change reader behavior. It is why this article recommends "watch and test" rather than "dismiss." It is also why it still refuses to jump from that credibility boost to a full public-stack recommendation.

If You Need a Video Tool Today, Go Here Next

Sometimes Happy Horse is the name that brings a reader in, but not the job they actually need to complete.

If your real job is choosing the best overall video model for quality, cost, or API maturity, go to Best AI Video Model. If your real job is specifically turning images into motion, go to Best AI Image-to-Video Generator. If your real job is experimenting without paying first, go to Best Free Image-to-Video AI.

That handoff matters because the Happy Horse question is narrower than the general tool-selection question. You do not need this page to tell you whether Sora, Veo, Runway, or open-source models are better for production in the abstract. You need it to tell you whether Happy Horse itself is already clear enough to trust. Right now, the answer is still: not fully. Interesting enough to watch, yes. Clear enough to rely on as one settled public contract, no.

FAQ

Is Happy Horse really open source?

Not in the fully verified, developer-ready sense yet. As of April 9, 2026, the main Happy Horse technical surface still claimed an open-source model story, but the linked docs page returned 404, the linked GitHub repo returned 404, the linked Hugging Face profile showed no public models, and the surfaced model path happy-horse/happyhorse-1.0 still returned 401 in direct checks. That means the public-stack claim should still be treated as a claim with partial support, not as a fully verified public-deploy reality.

Can I use Happy Horse right now?

Yes, if your standard is "can I test something in a browser?" A hosted page such as happy-horse.art can serve that job. No, if your standard is "can I already rely on a fully public, openly documented model stack?" That second bar is not yet openly satisfied from the currently linked Happy Horse public surfaces.

Is Happy Horse the same as daVinci-MagiHuman?

It is more accurate to say that the public daVinci-MagiHuman evidence supports the Happy Horse story than to say the two are confirmed to be identical. Matching metrics, architecture, and language support are meaningful clues. They are not the same thing as an explicit first-party identity statement.

Should I switch from established tools yet?

Not if you need production reliability today. Happy Horse is interesting enough to keep on your radar, and it may already be worth testing through a hosted page if you are curious. But the current public evidence still supports "watch and verify" much more strongly than "migrate now."

The Working Rule

Treat Happy Horse as a real emerging model story with a fragmented current contract. Test it through a hosted page if you are curious, demand readable public artifacts if you are technical, and stay with proven tools if you need production certainty today.