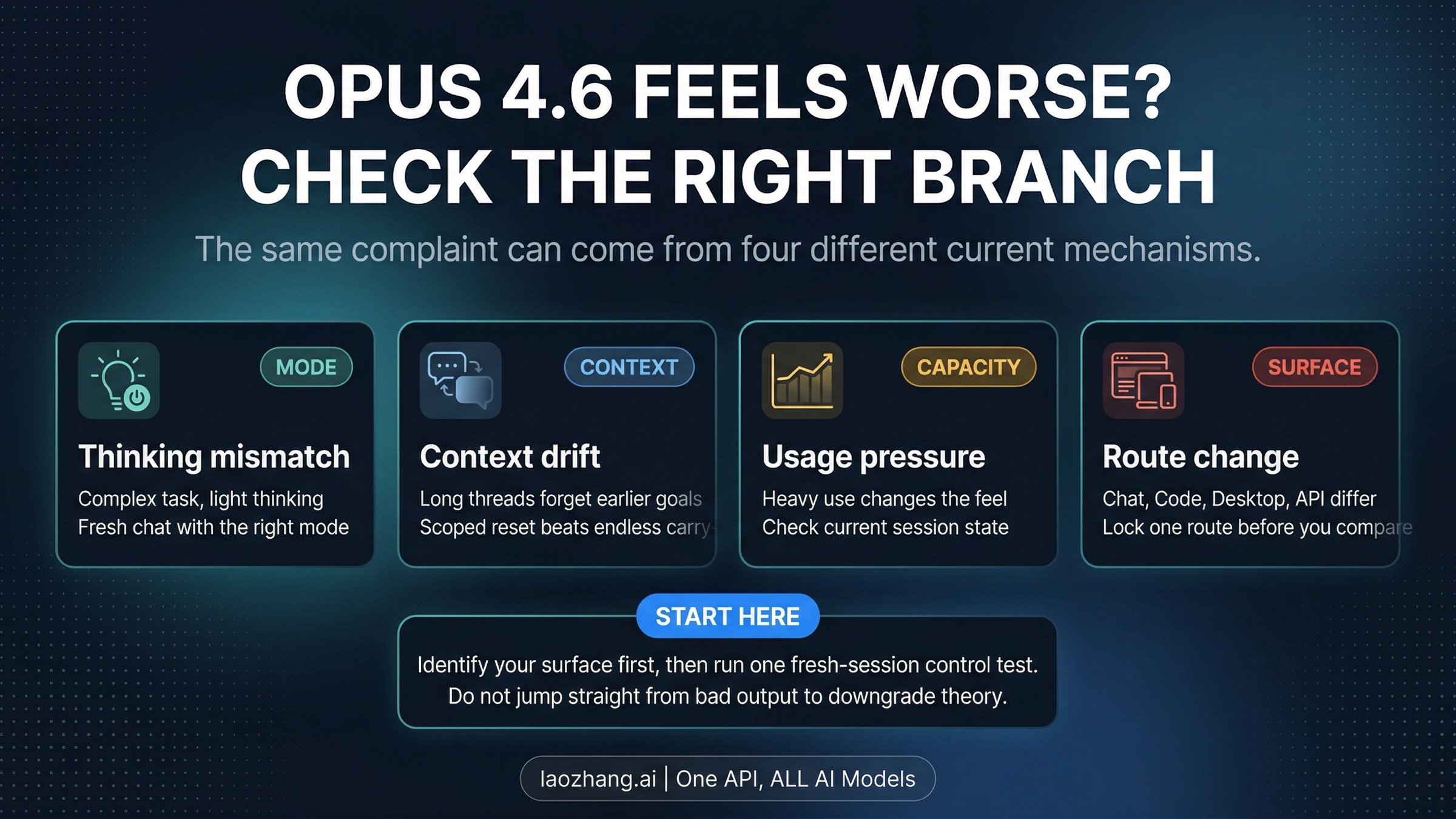

If Claude Opus 4.6 suddenly feels worse, you do not currently have official proof that Anthropic universally downgraded the model. What you do have are four real branches that can produce the same complaint: the wrong thinking mode, long-thread context handling, shared usage pressure, or route differences between claude.ai, Claude Code or Desktop, and the API.

Start with one fresh-session control on one fixed surface, then change only one variable at a time: thinking mode, thread age, usage pressure, or route. If the same task still turns shallow, forgetful, or inconsistent after the branch-specific fix, stop treating it like normal configuration noise and document it as a repeatable issue worth escalating.

Verification note: Anthropic release notes and support pages for Opus 4.6, extended or adaptive thinking behavior, context handling, and plan-usage behavior were rechecked on April 10, 2026, because this answer depends on current surface behavior rather than complaint-thread folklore.

Start Here: Which Branch Are You In?

Before you rewrite prompts, switch plans, or join another downgrade thread, classify the symptom. "Opus 4.6 got worse" sounds like one problem, but in practice it usually means one of four different things, and each one has a different safe first move.

| What you are seeing | Most likely owner | First move | What usually confirms it |

|---|---|---|---|

| claude.ai answers suddenly feel too shallow on hard tasks | Thinking mode mismatch | Rerun the same task in a new chat with the right thinking setting | The answer improves when thinking is explicit and the chat is fresh |

| Long threads start forgetting instructions, files, or prior decisions | Context handling | Restart with a compact handoff and a narrower session | A fresh scoped session keeps the crucial constraints better |

| Quality feels much worse after a heavy day across web, Code, or Desktop | Shared usage pressure | Check current usage state and retry at a lower-pressure moment | The same work feels more stable when headroom and session pressure are better |

| claude.ai, Claude Code, Desktop, and API give sharply different experiences | Route differences | Lock one route and rerun the same task there only | Like-for-like testing on one fixed surface becomes much more consistent |

This split matters because it saves time. If your problem is really a long-thread context issue, swapping prompts or switching models may hide the symptom without fixing the workflow. If the problem is route drift, more prompt engineering only adds noise. If the issue disappears when you hold one surface constant, the right lesson is not that Opus became universally worse. It is that your comparison was not controlled.

What Changed Officially That Can Make Opus 4.6 Feel Different?

The official February and April 2026 picture is more complicated than "the model got dumber." Anthropic's release notes say Claude Opus 4.6 launched on February 5, 2026, and that the current API-facing recommendation is adaptive thinking with the effort control rather than the older manual thinking-budget pattern. That matters because the way you ask the API to reason is not the same as the way you toggle thinking in Claude chat.

On the consumer side, Anthropic's help pages currently say extended thinking in Claude chat is optional, and turning it on or off starts a new chat. The same help system also says changing the chat model version opens a new chat. That means many side-by-side impressions are not actually "same task, same route, same state, only one change." People often compare a fresh chat to a long chat, or a route with thinking enabled to one without it, and then describe the difference as one global quality drop.

Anthropic's current usage and length-limit docs add another important piece: long conversations are now managed by summarizing earlier messages, and claude.ai, Claude Code, and Claude Desktop count toward the same usage pool. Those are documented behavior layers that can change how continuity feels, even if the underlying complaint is still expressed as "Opus 4.6 feels dumber."

The API adds one more surface-specific wrinkle. Anthropic documents compaction as a way to replace earlier raw history with a summary block so the conversation can continue from a compressed state. That is not the same thing as the consumer help-center description of automatic context management, and neither one should be flattened into a universal "Opus forgot everything" story. These mechanisms matter because they affect diagnosis. They do not amount to an official statement that Anthropic globally downgraded Opus 4.6 intelligence.

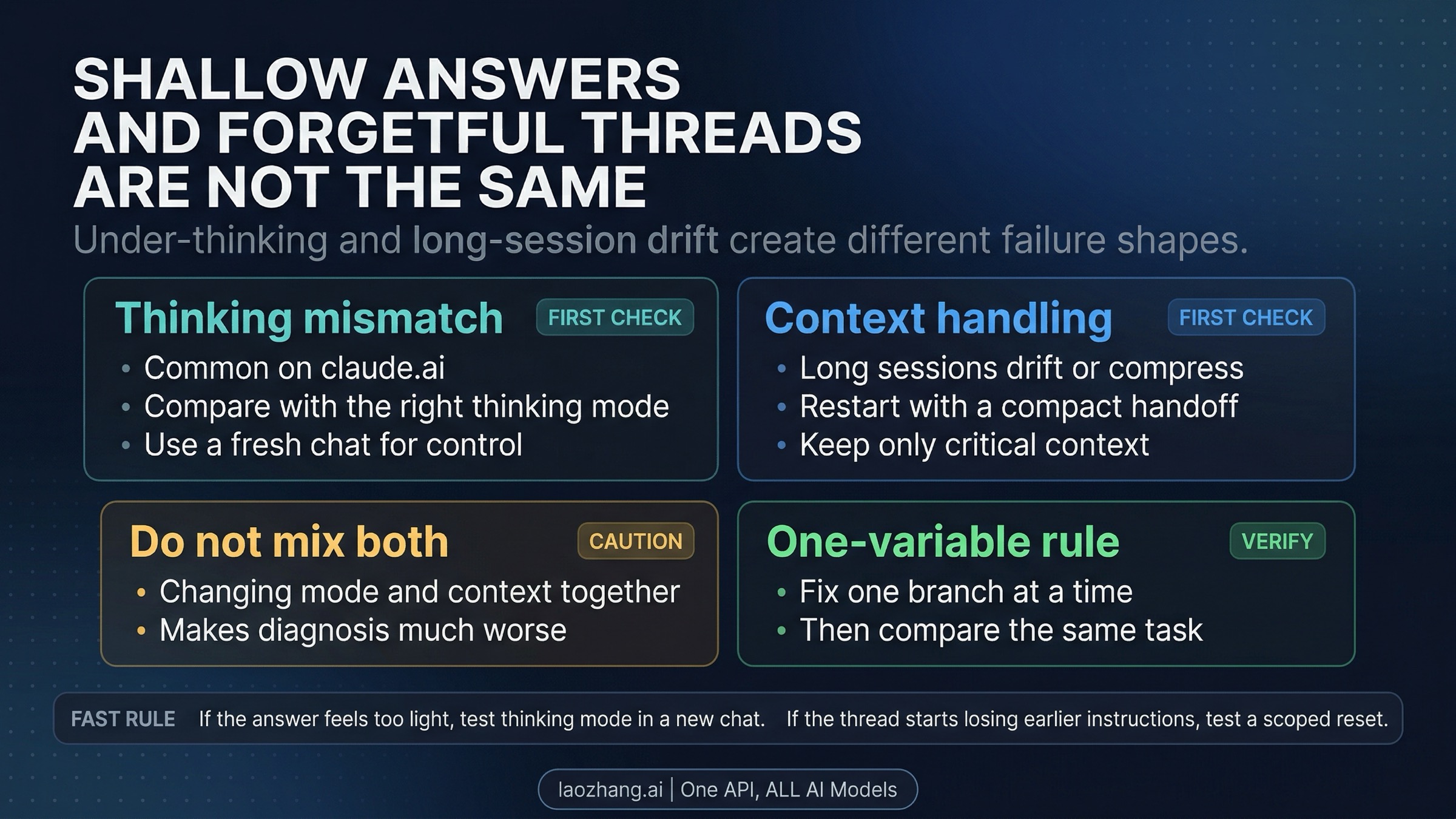

Branch 1: Thinking Mode Mismatch Often Looks Like a Model Downgrade

When users say Opus 4.6 suddenly feels shallow, the first branch to test is thinking behavior. In Claude chat, Anthropic's current help treats extended thinking as an optional mode intended for more complex work. In the API, Anthropic's current release notes talk instead about adaptive thinking and the effort control on newer models. Those are related ideas, but they are not one universal switch with one universal default.

That difference matters because "the model got worse" is often just the symptom language people use when they are not holding thinking behavior constant. A fast consumer chat answer with thinking off, a fresh API call with low effort, and a previous session that happened to reason more deeply can all feel like a mysterious decline when what really changed was the reasoning path you asked for or the surface you used.

The fastest control test is simple: pick one hard task, keep the route fixed, start a fresh session, and rerun it with explicit thinking behavior. On claude.ai, that means comparing the same task in a new chat with and without extended thinking. On the API, it means holding the prompt constant while making the thinking control explicit. If the quality gap mostly disappears after that test, you do not have strong evidence of a universal downgrade. You have a thinking-mode diagnosis.

That is also why this page should not turn into a benchmark recap. Benchmarks do not tell you whether your current route is suppressing useful reasoning for your real task. A same-task, same-route, fresh-session comparison does.

Branch 2: Context Drift and Long-Thread Compression Feel Like "Forgetfulness"

The second common complaint is not shallow thinking but selective forgetting. A thread starts strong, then loses instructions, misses prior decisions, or behaves as if it only half-remembers the project. Users often describe that as proof the model became less intelligent, but official Anthropic docs point to a more specific mechanism: long conversations are managed by summarizing earlier messages, and the API separately documents compaction as a compressed continuation path.

The practical lesson is that "Opus forgot" and "Opus got worse" are not always the same diagnosis. Once a session becomes long enough, you are no longer comparing the same context regime you had earlier. Some details may now be preserved only indirectly through a summary, or they may need to be reintroduced in a more compact form if they are still critical to the task.

The best control here is not a better prompt. It is a better handoff. Start a fresh scoped session with a short statement of the current goal, the non-negotiable constraints, and the exact artifacts or files that still matter. Then rerun the same class of task. If the fresh session behaves much better, that is a context-handling result, not strong proof that Opus 4.6 globally lost capability.

This is also the place where many complaint threads overreach. Community reports are useful because they surface real pain language like "forgetting context" and "worse after long threads." They are not enough to prove that every forgetfulness report is one underlying model regression. A clean fresh-session comparison gives you more diagnostic value than five more speculative threads.

Branch 3: Shared Usage Pressure Can Change the Experience You Think You Are Testing

Anthropic's current help says claude.ai, Claude Code, and Claude Desktop all draw from the same usage pool, and that actual headroom depends on the model, the feature, attached file length, and conversation length rather than one neat fixed public number. That is enough to make one heavy day feel very different from a lighter day, even when your prompts look similar.

Be careful here: this does not justify saying Anthropic officially makes Opus output worse whenever usage is high. The official docs do not make that claim. What they do support is a narrower point: if you are comparing sessions across very different usage states, file sizes, or conversation lengths, you may not be comparing the same conditions at all. A heavy cross-surface day can change what "normal" feels like before you ever reach a clear blocking message.

That is why the right first move is operational, not emotional. Check current usage state, note whether this happened late in a heavy session or after a broad day across Claude surfaces, and rerun the same work in a lower-pressure window if you can. If the issue mostly appears when you are already deep into heavy usage, the article's job is not to invent an unsupported quality-theory. It is to stop you from treating an unstable test condition as universal model truth.

If the deeper problem turns out to be plan pressure rather than cross-surface diagnosis, use our general Claude daily limit guide for the shared-usage picture or the more specific Claude Code usage limits guide if your pain is really centered in Code sessions.

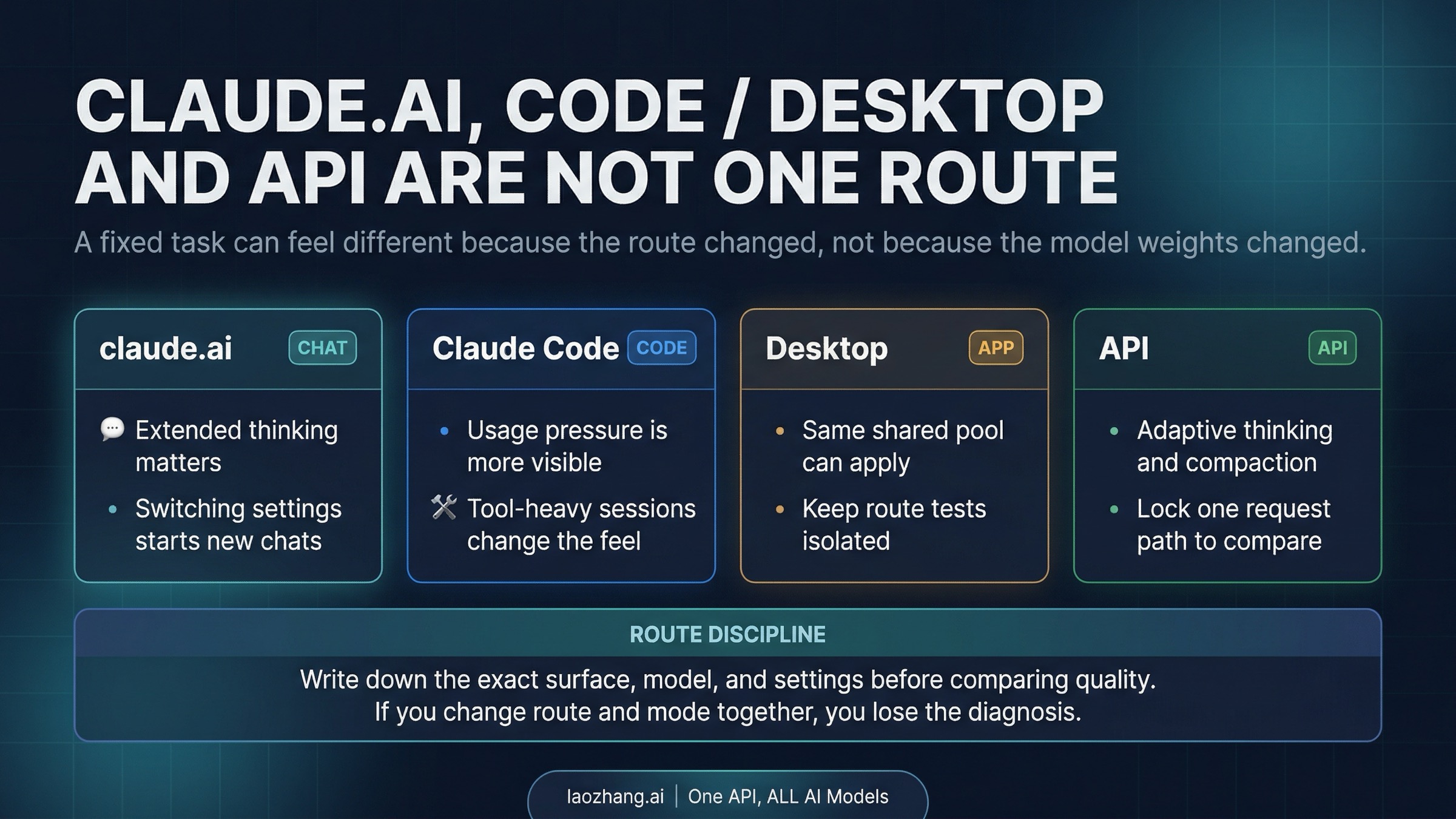

Branch 4: claude.ai, Claude Code, Desktop, and API Are Not One Route

One of the biggest reasons Opus 4.6 can "feel worse" is that users flatten several different execution surfaces into one story. That is a mistake. Claude chat uses its own product behaviors around model switching, extended thinking, and long-conversation handling. Claude Code brings tool use, repository context, and terminal workflow into the picture. Desktop shares more product DNA with claude.ai but still lives in a distinct usage reality for many users. The API has its own controls, long-context contract, and compaction surface.

Once you recognize that split, a lot of confusing anecdotes become easier to read. A person reporting sharp degradation in Claude Code may really be describing tool-heavy workflow cost, session age, or environment-specific behavior. A person comparing API calls to claude.ai chat may be comparing adaptive thinking and API context strategy against a consumer chat flow that is doing different things under the hood. Those are valid experiences, but they are not clean evidence that one single global Opus 4.6 brain got worse everywhere at once.

The only fair comparison is like-for-like on one fixed route. Pick one surface, one task, one thinking setting, and one fresh context state. If the issue disappears when you stop hopping between routes, you just diagnosed a routing problem. If the issue persists on one fixed route after the other branch tests are clean, that is when the problem starts looking serious enough to document.

Surface discipline matters even more when people mention long-context claims. Anthropic's release notes document 1M context beta for the API, but that should not be turned into a blanket statement about every consumer route. Cross-surface overclaiming creates exactly the kind of false expectation that later gets described as "the model got worse."

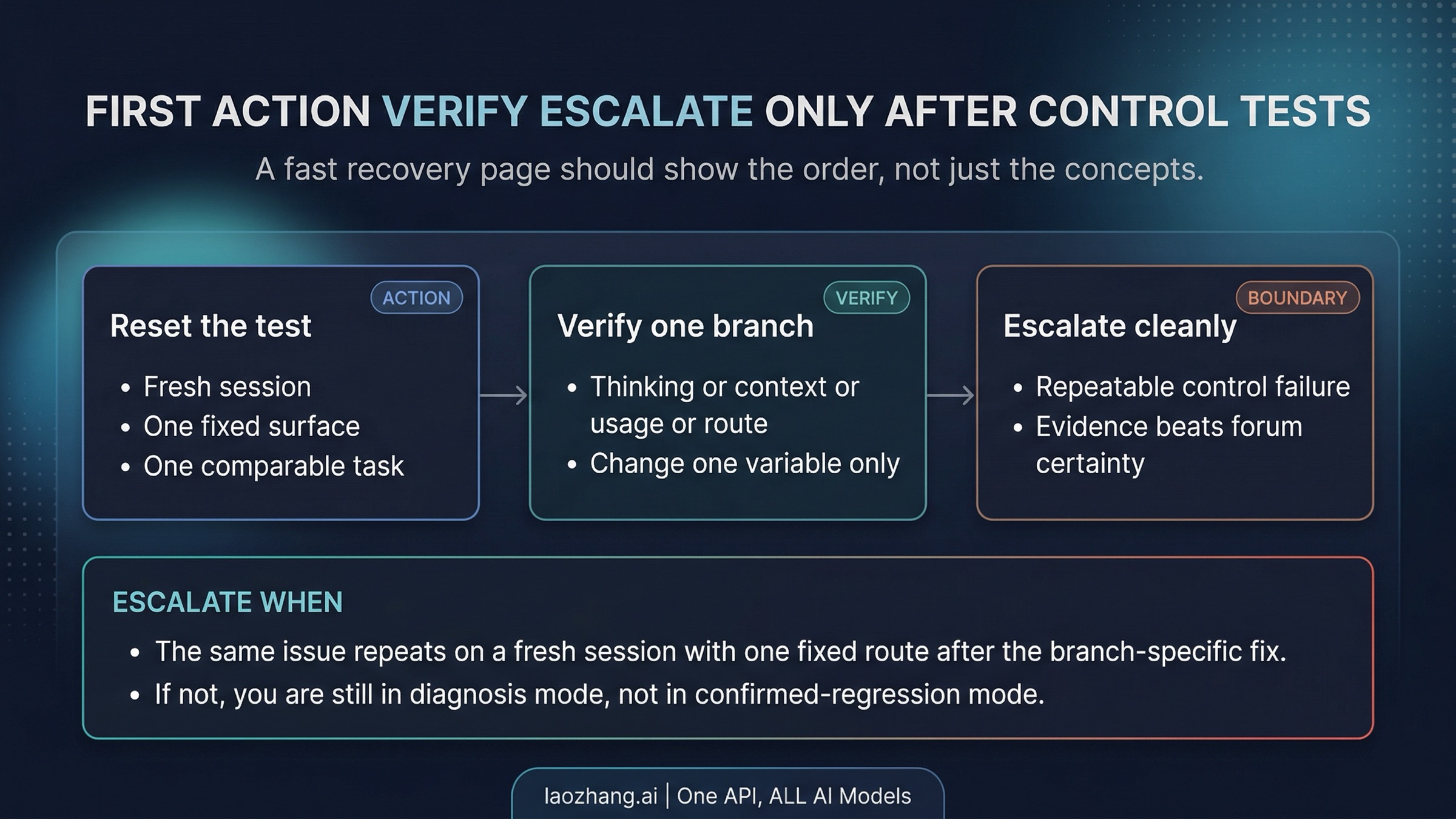

The Fastest Recovery Order

If you only remember one part of this page, remember the order. The point of this guide is not to give you more theories. It is to stop you from applying expensive fixes in the wrong order.

- Lock one surface. Do not compare claude.ai, Claude Code, Desktop, and API in the same test.

- Start a fresh session. Old context is the fastest way to confuse the diagnosis.

- Hold thinking behavior constant. Make extended thinking or adaptive-thinking effort explicit instead of assuming it.

- Change one variable at a time. Thread age, route, and thinking mode should not all change together.

- Check whether this happened under heavy usage pressure. If yes, repeat the test in a cleaner session window before you generalize.

- Escalate only after the same branch still fails. A repeatable failure on one fixed route is much stronger evidence than a bad afternoon across mixed surfaces.

This sequence is boring on purpose. It is designed to remove noise. Readers often want a dramatic answer because the symptom feels dramatic. The safer answer is usually a controlled workflow, not a grand theory.

When To Switch Surface, Switch Model, or Escalate

Switch surface when the evidence says the pain is route-specific, not when you are still uncertain what changed. For example, if your claude.ai chat starts feeling shallow but the same class of task remains strong on a controlled API test, your next job is not to declare a universal downgrade. It is to keep the route fixed and decide whether that surface still fits your work.

Switch model only after the branch diagnosis is clean. If the problem is thinking behavior, context handling, or mixed routes, a model switch may only hide the real issue. If a fresh-session, fixed-route, branch-specific test still leaves Opus 4.6 weaker than your task needs, then a different model can be a rational next step. But that is a later decision, not the first move.

Escalate when three things are true at the same time: you reproduced the problem on one fixed route, you ruled out the obvious branch-specific fix, and the failure still looks disproportionate for the task. At that point, collect evidence while it is still fresh: the surface used, whether the session was new or resumed, the thinking setting, the approximate usage state, and the exact task that reproduced the issue. That bundle is more useful than an undifferentiated complaint that "Opus 4.6 got worse."

Frequently Asked Questions

Did Anthropic officially downgrade Claude Opus 4.6?

Not based on the official docs reviewed on April 10, 2026. Anthropic's release notes, help-center pages, and status history do not provide an official statement confirming a universal Opus 4.6 downgrade. That does not mean user pain is fake. It means the strongest supported frame is diagnosis first, accusation second.

Why does Opus 4.6 seem to forget context in long threads?

Anthropic currently says long conversations are managed by summarizing earlier messages, and the API separately documents compaction. That means long-session forgetfulness can be a context-handling issue rather than clean evidence of a universal intelligence drop. A fresh scoped session is the right control test.

Is extended thinking the same as adaptive thinking?

No. Anthropic's current consumer help and API release notes describe different surface behaviors. Extended thinking is the consumer-chat label. Adaptive thinking and effort are API-side controls on newer models. Treating them as one universal switch creates bad comparisons.

Why do claude.ai and the API feel like different products sometimes?

Because they are different routes with different controls and behavior layers. The API has its own thinking controls, long-context contract, and compaction surface. Claude chat has its own model-switching and conversation-management behaviors. You should not flatten them into one undifferentiated Opus experience.

When should I escalate instead of keep tuning prompts?

Escalate when the same problem reproduces on one fixed route after a fresh-session control test and the obvious branch-specific fix did not resolve it. If you are still changing multiple variables at once, you are not at the escalation stage yet.