Claude Code already has two built-in memory surfaces: CLAUDE.md for explicit instructions and auto memory for learned patterns. A Claude memory MCP is something else: an external MCP server that stores or retrieves context outside Claude Code's built-in, machine-local memory boundary.

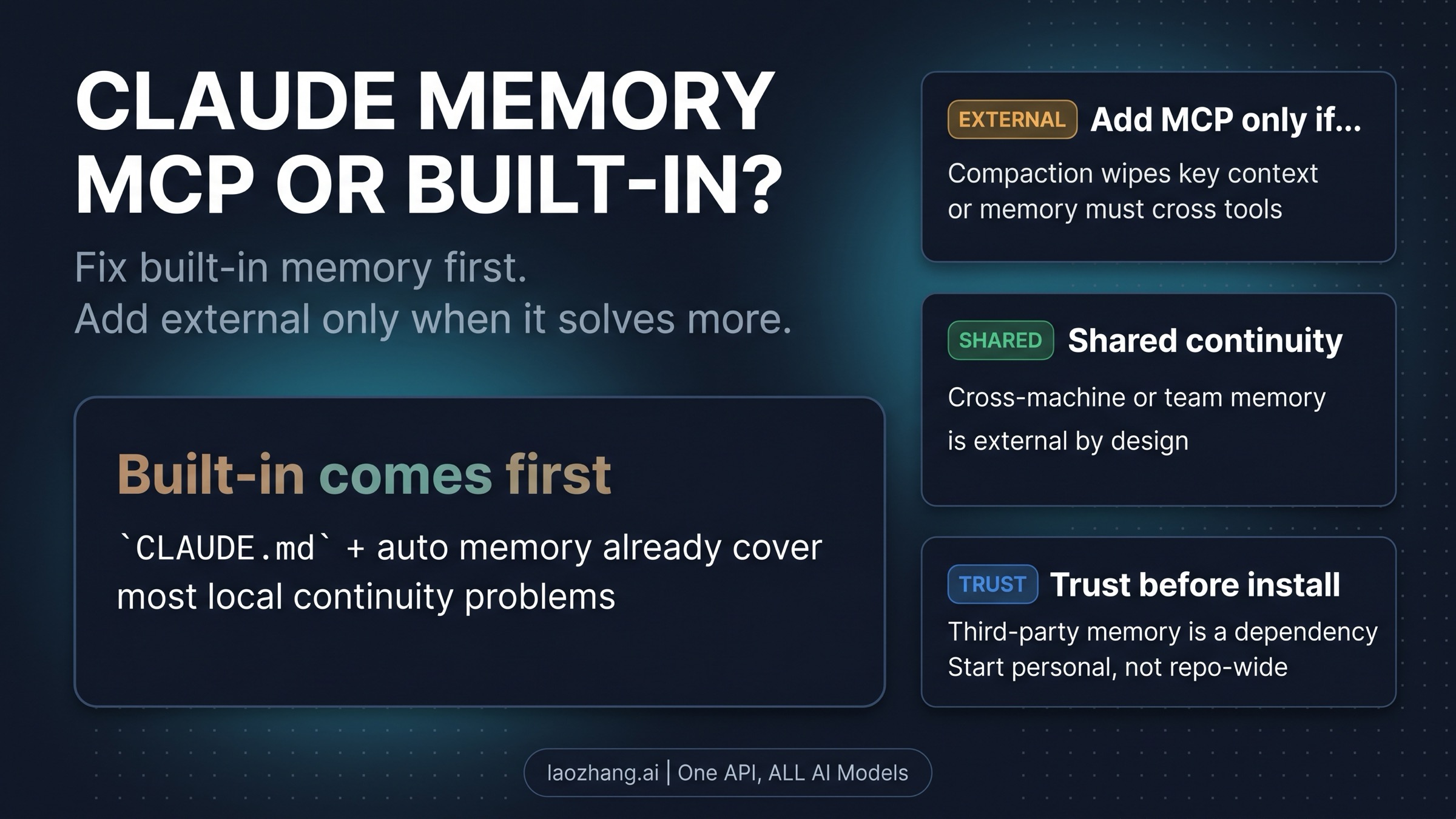

That split changes the recommendation immediately. If the job is still repo rules, startup instructions, repeated preferences, or one-machine habits, the built-in layers are still the right place to start. If the job is surviving /compact, retrieving context across tools, carrying memory across machines, or sharing it with a team, then an external memory MCP becomes a real option.

Current Anthropic Claude Code memory docs and MCP docs still describe those as separate surfaces. CLAUDE.md and auto memory are built-in Claude Code behavior. MCP servers are external integrations with their own approval flow, scope, operator trust, and third-party risk. The useful question is not just which tool sounds strongest. It is which layer should own this context first.

| If your actual problem is... | Start here | Why this lane comes first |

|---|---|---|

| repo rules, startup instructions, or workflow boundaries | CLAUDE.md | this is the durable built-in layer Claude should see from the start |

| repeated corrections and one-machine habits | auto memory | this is the built-in learned-pattern layer, not a universal shared store |

/compact loss, cross-tool retrieval, cross-machine continuity, or team sharing | external memory MCP | this is the point where you have crossed the normal built-in boundary |

If the left two lanes already match the problem, do not add a server yet. External memory earns its place only when the third lane is unmistakably the real job.

What Claude Code already remembers before you add anything

Most people reach for an external memory layer too early because "memory" sounds like one feature. It is not. Claude Code already has a built-in split between the things you write down explicitly and the patterns Claude learns over time.

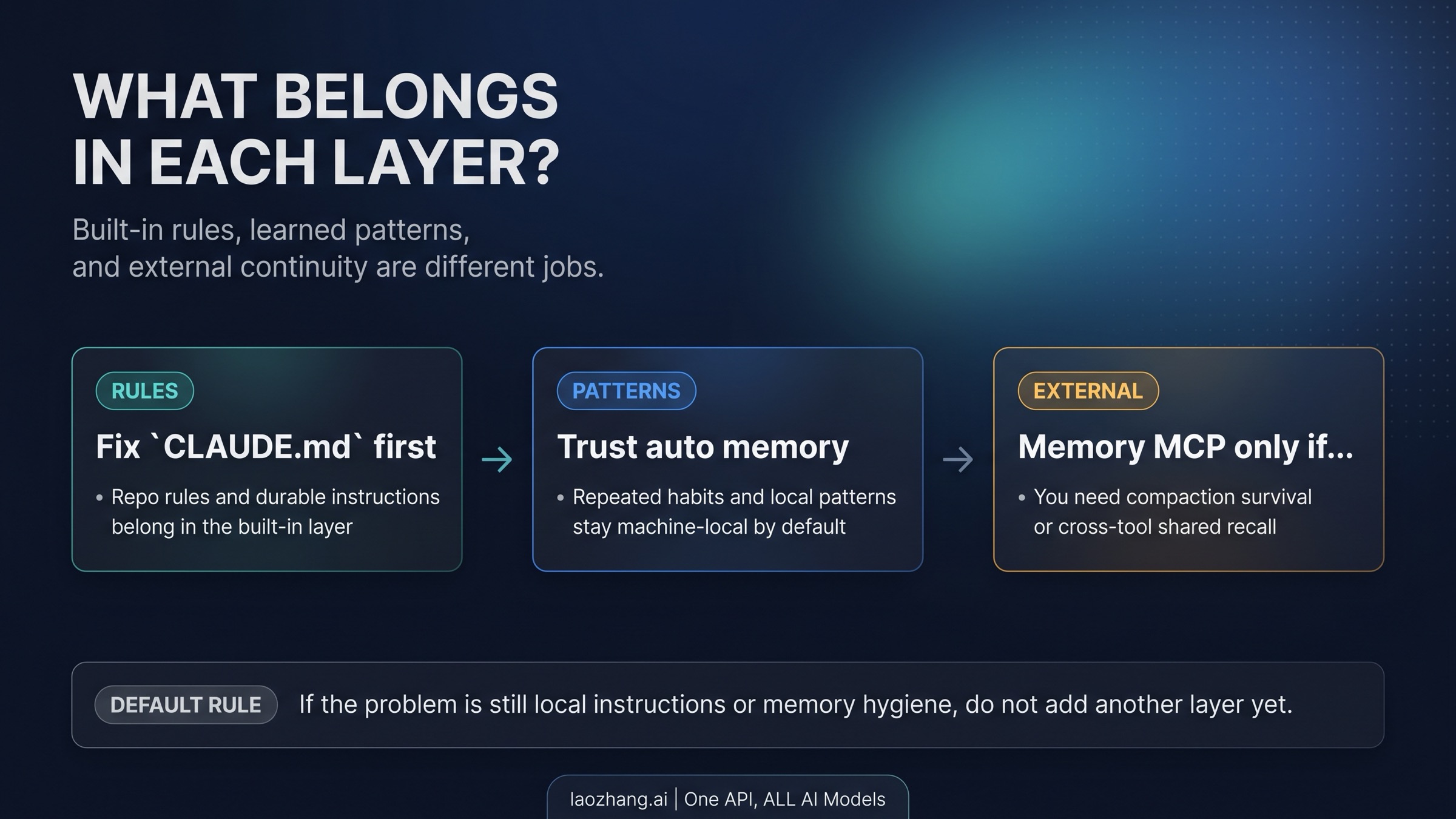

CLAUDE.md is the durable rule layer. This is where you put repository instructions that should be present from the start of the session: test commands, review expectations, safety boundaries, folder conventions, release rules, or any workflow habit that would be expensive to rediscover every time. If the rule matters before Claude takes action, it belongs here rather than in a later conversation turn.

Auto memory is a different kind of help. It is good at picking up repeated corrections, preferences, and local habits. That is useful, but it is still bounded. It is not a universal cloud memory that silently follows you everywhere, and it is not a policy engine that guarantees compliance. It is a machine-local learned-pattern layer inside Claude Code's current memory system.

That is why the most useful built-in question is not "does Claude remember?" It is "what should this layer remember?" If the answer is "repo rules and durable workflow expectations," write them into CLAUDE.md. If the answer is "repeated habits that Claude can learn from correction," let auto memory carry more of that load. If the answer is still vague, the problem is probably not missing infrastructure yet. It is that the built-in layers have not been assigned clean jobs.

This is also where /memory and /context matter more than another vendor demo. If you think Claude forgot something, check what actually loaded. If the session feels inconsistent or bloated, inspect the live context rather than assuming you need a separate memory product. A surprising amount of "I need persistent memory" pain turns out to be "I never made the built-in contract explicit."

If you need the full built-in walkthrough, including startup loading, file locations, and forgetting diagnostics, use our Claude Code Memory Guide. The practical boundary is simpler here: keep explicit rules and learned habits in the built-in layers, and escalate only when continuity has to survive outside that machine-local contract.

The exact problems an external memory MCP actually solves

An external memory MCP is not "stronger memory" in the abstract. It becomes useful only when your continuity problem exceeds what built-in Claude Code memory is meant to do.

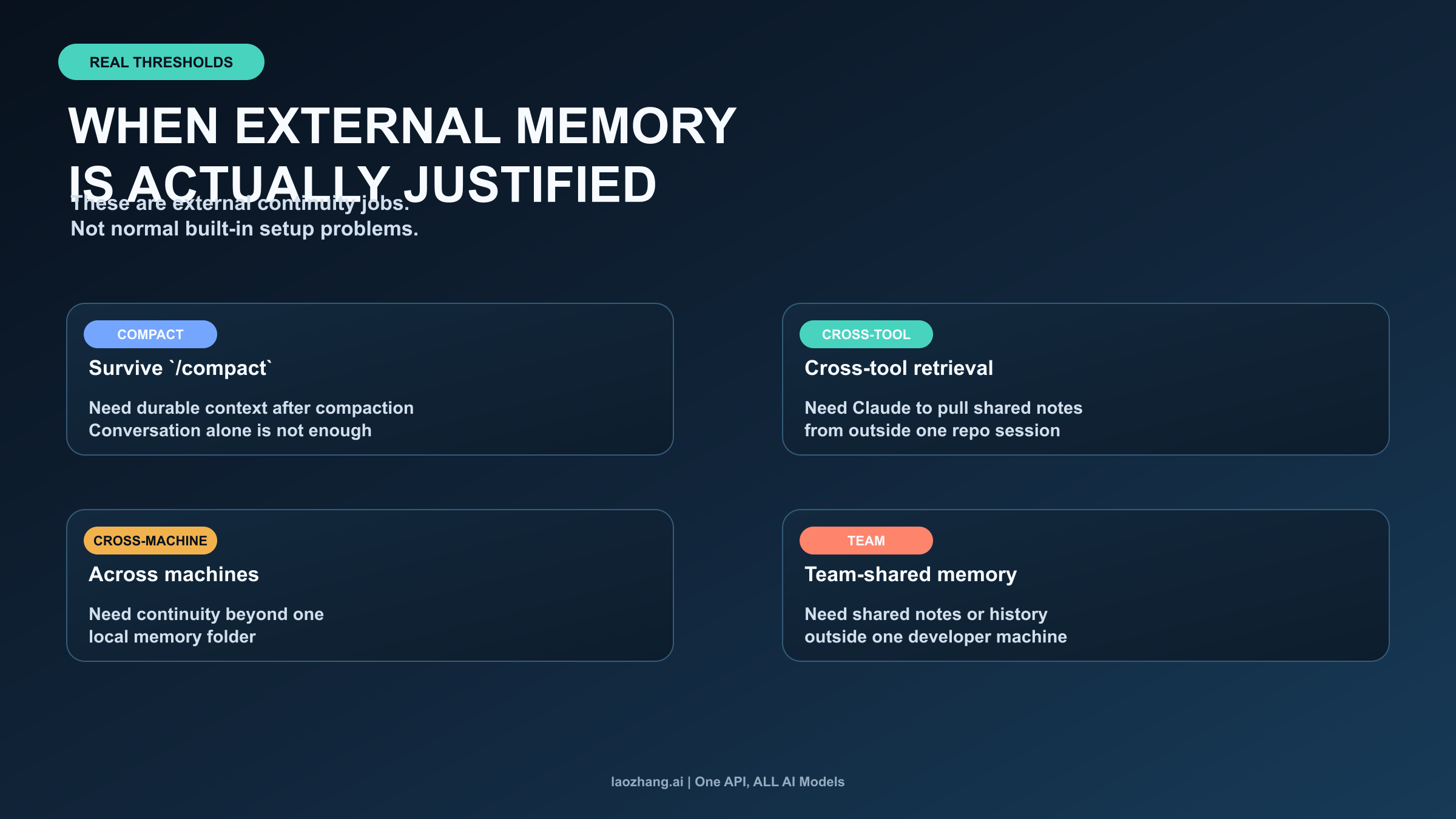

The first real threshold is compaction survival. If important working context keeps getting lost after /compact, and reconstructing it from conversation is too costly, you may need a memory surface that persists outside the chat transcript. That is an external continuity problem, not just a built-in preference problem.

The second threshold is cross-tool retrieval. Maybe the information Claude needs does not live in repo instructions or learned habits at all. It lives in notes, issue history, shared docs, or another tool outside the current project session. At that point, you are asking for retrieval across systems rather than better behavior inside Claude Code's built-in memory.

The third threshold is cross-machine continuity. Built-in Claude Code memory is machine-local today. If you move between laptops, remote environments, or separate machines and need the same memory surface to follow you reliably, you have moved past the normal built-in boundary.

The fourth threshold is team-shared memory. This is the cleanest justification for an external layer, but it is also where people over-upgrade. Shared memory only makes sense when the team really needs common notes, common recall, or common retrieval outside one developer machine. It is not the right answer just because several people use Claude Code.

Current memory-MCP vendors market exactly these pains: surviving compaction, keeping recall across sessions, sharing notes across tools, or centralizing team memory. That market language is useful because it reveals the real threshold cases. It is dangerous when it gets mistaken for built-in Claude Code truth. The safest reading is: these are examples of external-memory jobs, not proof that Claude Code is missing a default feature you forgot to turn on.

If your pain is still "Claude ignored repo rules on this machine" or "Claude did not keep a habit I never made durable," the built-in layer is still the first fix. An external memory MCP should feel like a deliberate escalation, not like the first thing you try when "memory" sounds fuzzy.

Plugin, remote MCP, or project-shared setup?

Once the threshold is real, the next decision is not just which memory tool. It is which setup surface should own it.

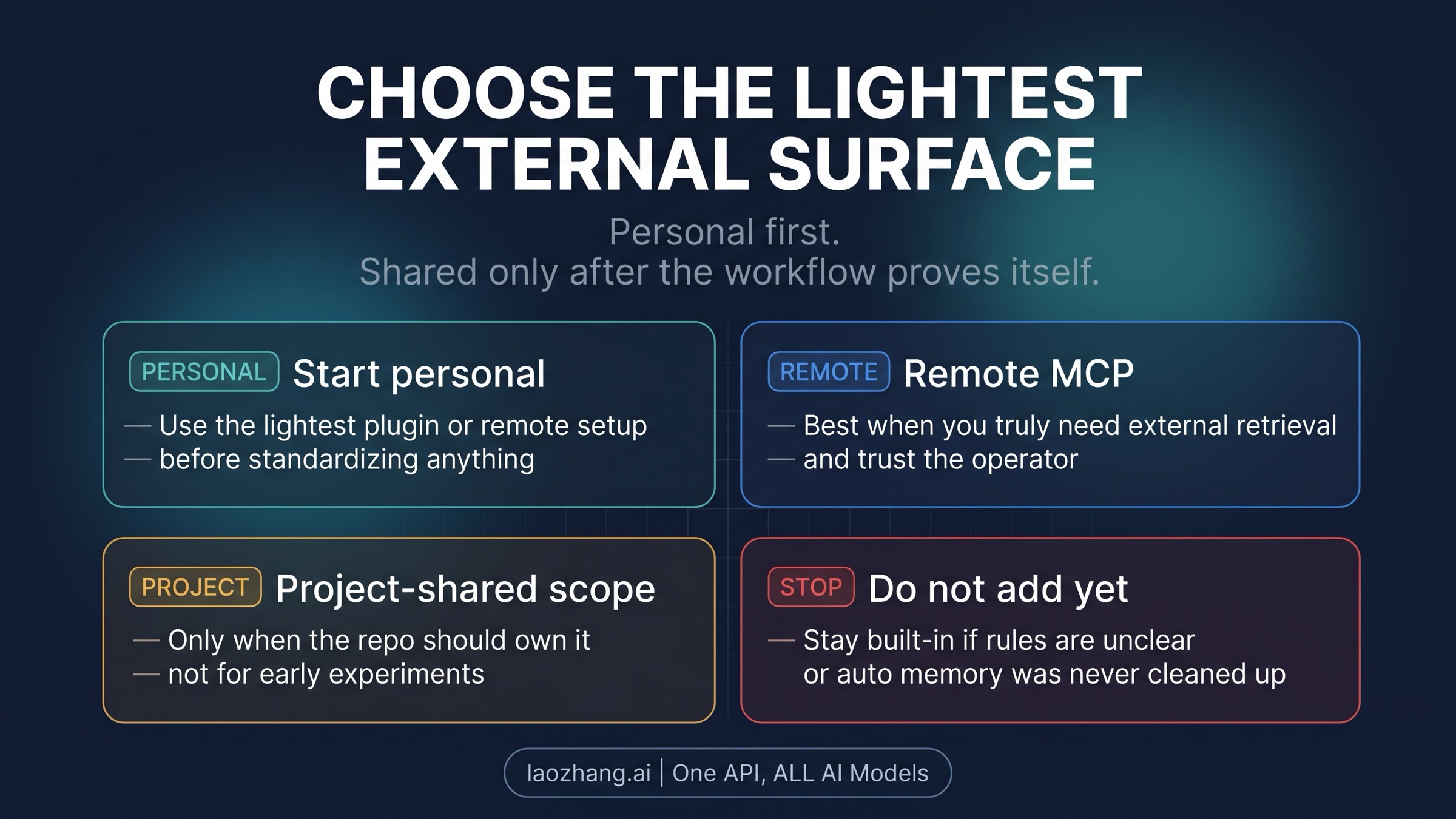

The safest default is personal first. If only one developer is proving the workflow, keep the setup personal and as light as possible. That may mean a plugin-bundled experience or a remote MCP server used only in your own environment. The point is to prove that the external memory job is real before you turn it into repo-wide infrastructure.

Remote MCP becomes reasonable when you truly need retrieval or persistence outside the local Claude Code environment. But remote also means operator trust, service availability, and another dependency boundary. If the main appeal is that the product page sounds magical, do not install it yet. If the appeal is that it solves a specific continuity failure you can name clearly, then you at least have the right reason to evaluate it.

Project-shared setup is a later step, not a day-one badge of seriousness. Anthropic's MCP model already separates local, user, and project surfaces because ownership changes the recommendation. If the repo should own the memory integration, it should be because the workflow is real enough, stable enough, and shared enough to justify that decision. If the answer is still "I am experimenting," the repo should not inherit your experiment.

If your question has widened to "what other integrations should I install first?" read our Best Claude Code MCP Servers guide. If the problem is still specifically memory, stay with the lightest setup surface until the continuity problem proves it deserves more.

The practical sequence is simple:

- keep it personal while the workflow is still being proven

- move to a remote server only when external retrieval or persistence is the point

- move to project-shared ownership only when the repo genuinely benefits from standardizing it

If you reverse that order, you usually end up managing infrastructure before you have even proved the new layer is necessary.

How to evaluate a memory-MCP promise without buying hype

The memory-MCP market is real enough to deserve attention, but it still needs a trust filter. Anthropic's official docs are the source of truth for what Claude Code already does by default. External memory vendors are evidence for what the market is trying to solve, not authority on Claude Code's built-in contract.

The fastest way to stay honest is to translate each claim into an operational question:

Persistent memory: persistent where, exactly? Across/compact, across tools, across machines, or across a whole team?Works with Claude: does it extend Claude through MCP, or is it being described as if it were a built-in Claude feature?Shared team memory: who owns the data, who can write to it, and who is responsible for prompt-injection or bad retrieved context?Easy setup: easy for one developer, or safe and maintainable for a repo that will have to live with it later?

Those questions matter because the wrong upgrade is worse than no upgrade. A weak external memory setup does not just fail to help. It adds another place where stale, low-trust, or over-broad context can leak into the model.

The safest rule is to trust official Anthropic product truth for the baseline, then evaluate third-party memory layers by exact continuity gain, ownership model, and maintenance cost. If the product page cannot answer those three things clearly, it is not a serious improvement yet.

When not to add anything yet

For many readers, the correct move is still to add nothing. That is often the right recommendation.

Do not add an external layer yet if the problem is still one of these:

- your repo rules were never written cleanly into

CLAUDE.md - your auto memory is messy, stale, or doing a job that should have been explicit

- you have not checked

/memoryto confirm what actually loaded - the pain is really context bloat, not missing continuity

- everything still lives on one machine and one repo workflow

In those cases, another memory server hides the confusion instead of fixing it. A better built-in setup usually beats a premature external dependency.

There is also a category mistake worth avoiding: sometimes the real problem is not memory at all. If what you want is better repo-specific workflow behavior, a stronger instruction layer or skill system may help more than an external memory store. If what you need is broader external tooling across GitHub, docs, browser QA, or observability, the better next step is the broader MCP decision, not a memory-specific add.

Keep that stop rule inside the decision itself, not buried as an afterthought. "Do not add anything yet" is often the highest-value answer because it protects you from solving the wrong problem with the fanciest possible surface.

FAQ

Do I need a memory MCP for Claude Code?

Usually not at first. If the problem is still repo rules, learned habits, or one-machine continuity, fix CLAUDE.md and auto memory before adding anything external. A memory MCP becomes worth evaluating only when the continuity problem exceeds Claude Code's built-in machine-local contract.

What does Claude Code already remember by default?

Claude Code already gives you explicit durable instructions through CLAUDE.md and learned behavior through auto memory. Those are built-in surfaces, but they are bounded. They are not the same as a universal shared memory system.

When does compaction justify an external layer?

When the context that matters keeps disappearing after /compact, and the durable source of truth needs to live somewhere outside the chat itself. If the issue is just that the rule never lived in CLAUDE.md or was too vague to enforce consistently, fix the built-in layer first.

Should I install a plugin or a remote MCP server?

Start with the lightest personal setup that solves the real continuity problem. Use remote MCP only when external retrieval or persistence is truly the point. Move to repo-shared ownership only when the workflow is proven and the repository should actually own the integration.

When should I not add anything yet?

Do not add a memory MCP yet if you have not cleaned up CLAUDE.md, do not know what /memory shows, have not separated explicit rules from learned patterns, or still work inside one machine-local workflow. In those cases, better built-in memory hygiene is the safer and more useful upgrade.