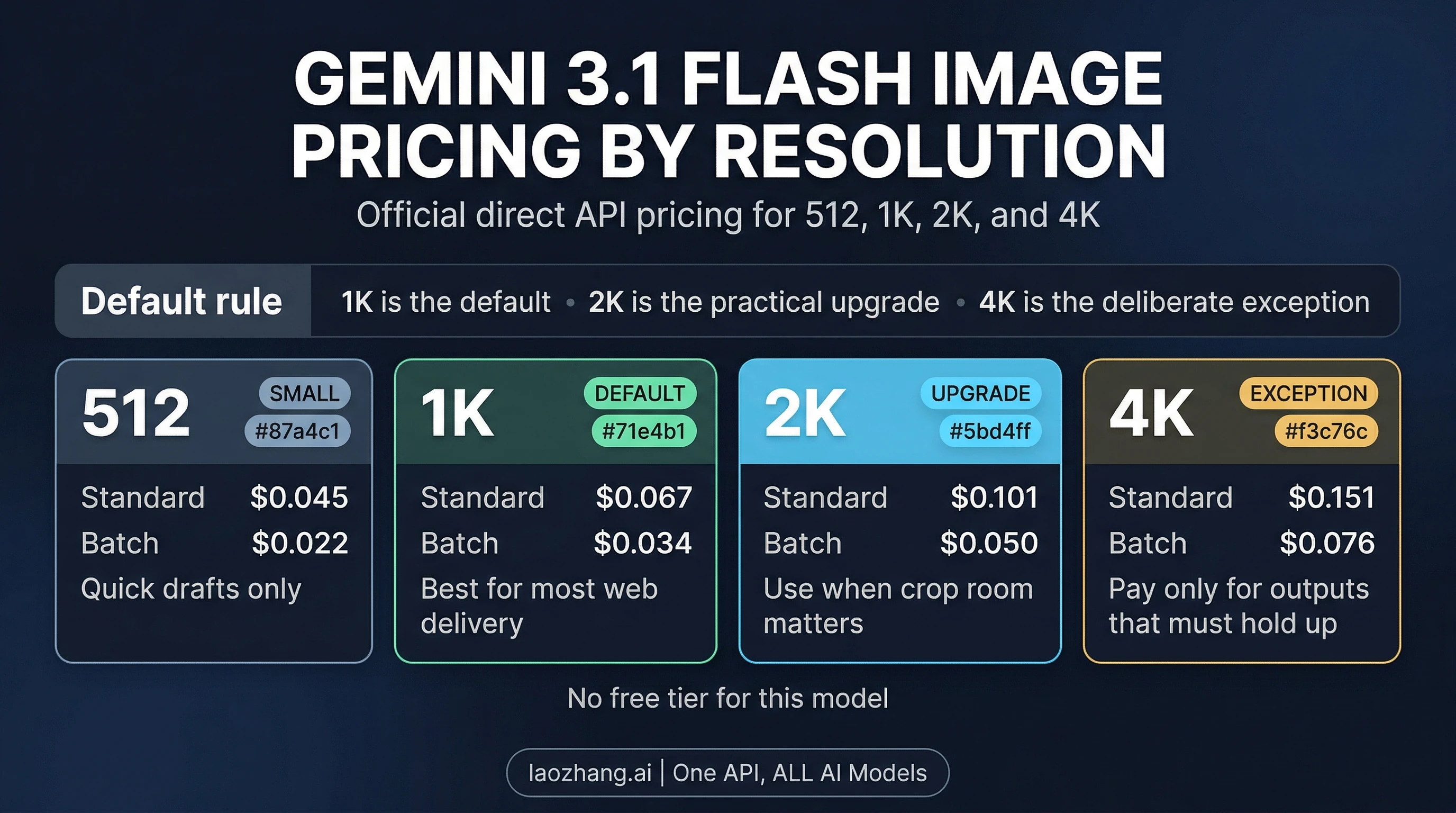

Google's Nano Banana 2, officially Gemini 3.1 Flash Image (gemini-3.1-flash-image-preview), now has a much clearer pricing contract than the early soft-launch coverage suggested. Google introduced the model on February 26, 2026 in its Nano Banana 2 launch post. As checked on April 4, 2026, Google's own Gemini API pricing page lists $0.067 at 1K, $0.101 at 2K, and $0.151 at 4K, with batch pricing cutting those image-output rates to $0.034, $0.050, and $0.076. The official image generation guide also makes the operational rule explicit: the model supports 512, 1K, 2K, and 4K, defaults to 1K, and requires an uppercase K.

That shifts the real reader question. The hard part is no longer "what is Gemini 3.1 Flash Image?" The hard part is deciding what your default shipping size should be so your API bill, visual quality, and downstream workflow all line up. For most products, the answer is still simple: ship 1K by default, move to 2K when you need crop room, and treat 4K as a deliberate exception instead of a prestige toggle.

TL;DR

| Size | Standard | Batch | Use it when |

|---|---|---|---|

512 | $0.045 | $0.022 | quick drafts, tiny surfaces, or internal review only |

1K | $0.067 | $0.034 | the default for most web delivery and product work |

2K | $0.101 | $0.050 | you need crop room, retina assets, or more reusable masters |

4K | $0.151 | $0.076 | the output must survive large-format or heavy multi-channel reuse |

Three additional facts matter before you over-optimize the wrong thing:

- There is no free tier for

gemini-3.1-flash-image-previewon the direct API pricing page. imageSizedefaults to1Kif you do not set it explicitly.- Lowercase values like

1kor2kare rejected; Google requires1K,2K, and4K.

Official Gemini 3.1 Flash Image pricing by resolution

The official pricing page now makes the output math unusually readable. Google prices image output for Gemini 3.1 Flash Image at $60 per 1,000,000 output tokens for standard requests, and the page translates that into per-image equivalents by size. The result is the rate card most developers actually need:

imageSize | Standard price | Batch price | Output tokens | Practical reading |

|---|---|---|---|---|

512 | $0.045 | $0.022 | 747 | cheaper than 1K, but too small to be the normal default |

1K | $0.067 | $0.034 | 1120 | the sensible default size for most production apps |

2K | $0.101 | $0.050 | 1680 | the "safer master" tier when reuse matters |

4K | $0.151 | $0.076 | 2520 | the expensive tier you should justify explicitly |

Two things stand out immediately. First, the jump from 1K to 2K is real but not dramatic. You are paying about 51% more to buy more crop flexibility and more durable source assets. Second, the jump from 1K to 4K is large enough that it should affect your product defaults. At list price, 4K costs about 2.25x the 1K rate. That is not a rounding error. It is a business decision.

This is also why a lot of early "Nano Banana 2 pricing" posts aged badly. They were built around launch-window estimates or copied community lore before Google's own page settled into a direct size ladder. If your team still has old spreadsheet assumptions like "around five cents" or "roughly fifteen cents for 4K," replace them with the official contract now. This is one of those cases where approximate memory stops being useful the moment the provider publishes a clean number.

How Google actually bills the model

The visible cost movement comes from the image output, not from ordinary prompt text. Google's standard price for text and image input on Gemini 3.1 Flash Image is $0.50 per 1,000,000 tokens, and batch input is $0.25 per 1,000,000 tokens. In normal image-generation usage, that input side is usually tiny compared with the output image charge. A short prompt is not what turns your bill from manageable to expensive. Choosing 4K by default is.

That is the right mental model for budgeting. If you are forecasting spend for a catalog generator, an asset pipeline, or a product mockup tool, start with the output size ladder first. Only after that should you worry about prompt-token cleanup. Better prompt hygiene still matters, but it is secondary here.

One more pricing edge case is worth flagging: Google Search grounding is billed separately. The pricing page notes that a customer request can trigger one or more search queries, and each query is charged individually after the free monthly allowance on the paid tier. That means the base image price is not always the whole bill if you deliberately build a grounded image workflow. For ordinary prompt-only image generation, though, the resolution ladder remains the center of gravity.

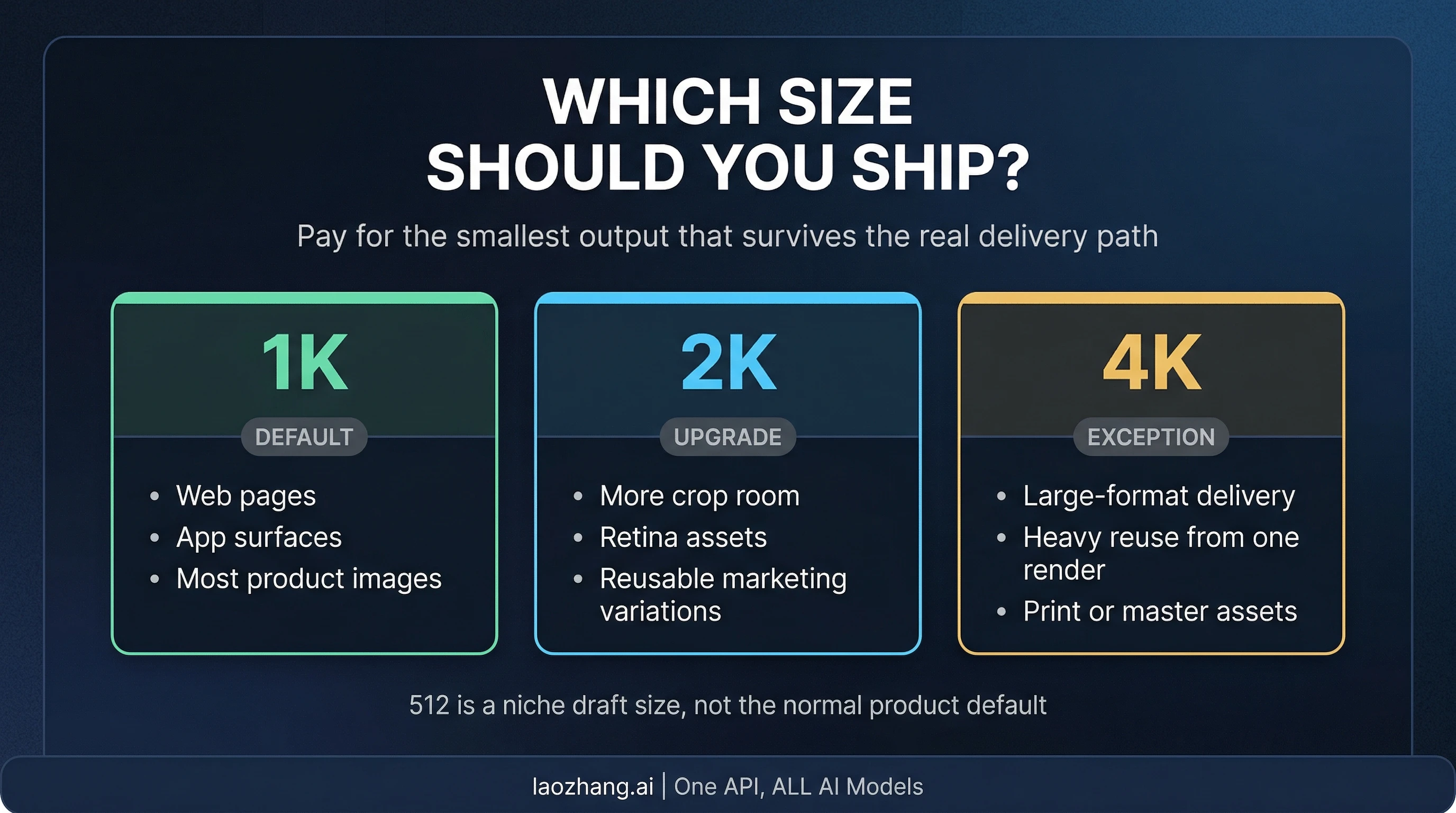

Which resolution should you choose?

The right default is usually 1K, not because 1K is universally "best," but because it is the smallest size that still survives most real delivery paths without turning your cost model into overkill. If your images primarily live inside web pages, product cards, app surfaces, blog headers, or normal social distribution, 1K is the working default that keeps the bill sane while still producing a usable output.

Move to 2K when you already know the image will get cropped, reused, repackaged, or displayed in environments where a little extra source resolution saves work later. This is the practical upgrade tier. It is not so expensive that it needs executive sign-off, but it is not something you should enable blindly either. 2K is the right answer when your team keeps returning to the same assets for multiple placements and keeps losing quality or flexibility at 1K.

Reserve 4K for workflows where the asset really does need to hold up beyond ordinary screen use. That includes large-format delivery, print-adjacent work, premium hero assets meant to be reused across many placements, or pipelines where one generation is expected to serve as a durable master file. If you cannot name that kind of downstream requirement, you probably do not need 4K.

The odd one out is 512. It is real, it is officially supported for Gemini 3.1 Flash Image, and it is the cheapest rung on the ladder. But it is best treated as a niche tool for very small surfaces, quick draft passes, or internal review states. It is not the size most teams should adopt as their normal production default.

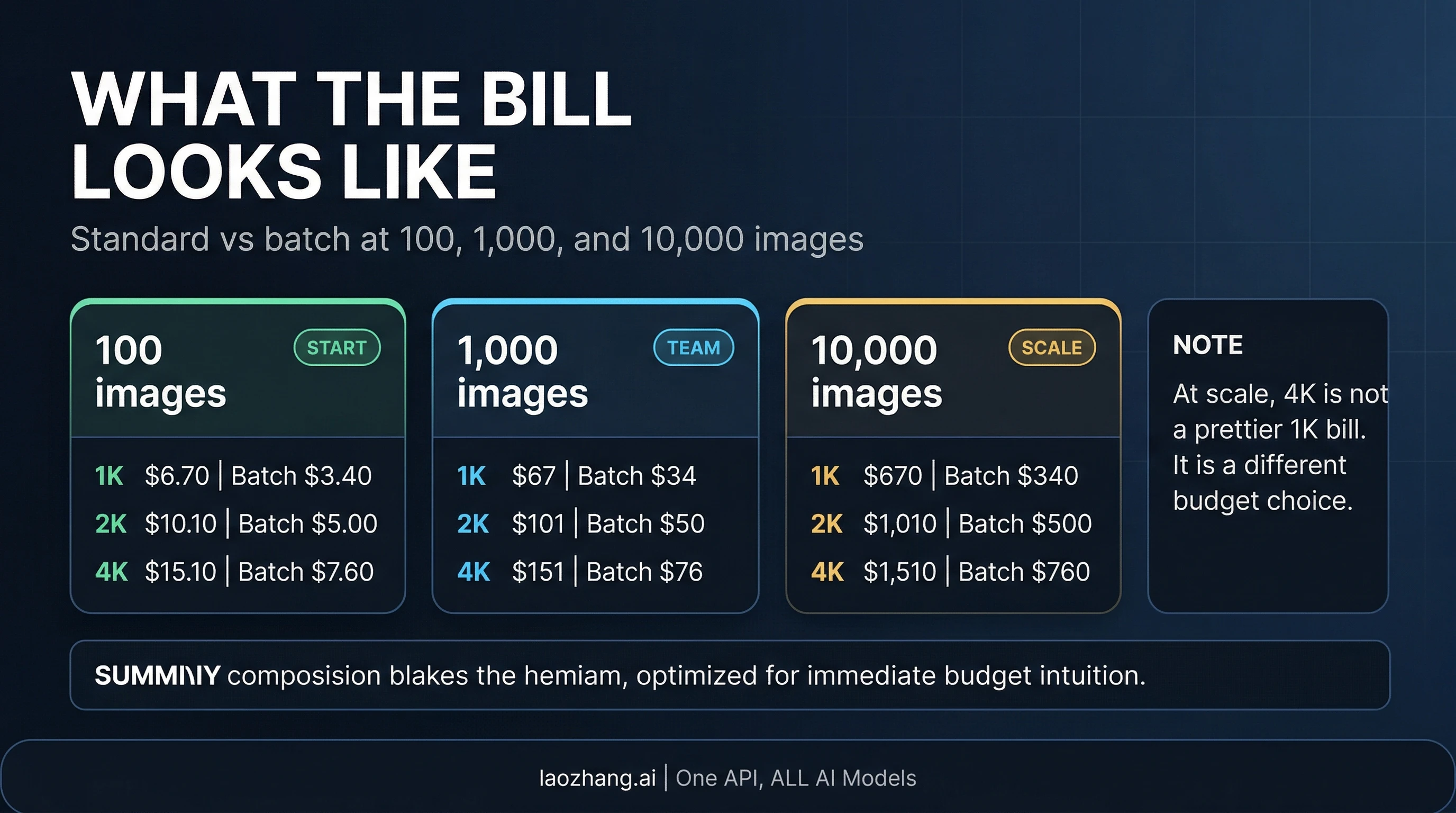

Monthly cost scenarios: when the jump actually matters

Per-image pricing looks harmless until volume makes the delta visible. Below is the simplest way to turn the rate card into a budget conversation:

| Volume | 1K standard | 1K batch | 2K standard | 2K batch | 4K standard | 4K batch |

|---|---|---|---|---|---|---|

100 images | $6.70 | $3.40 | $10.10 | $5.00 | $15.10 | $7.60 |

1,000 images | $67 | $34 | $101 | $50 | $151 | $76 |

10,000 images | $670 | $340 | $1,010 | $500 | $1,510 | $760 |

These gaps are the real reason to set a default-size policy instead of letting every caller choose whatever sounds premium. At 1,000 images, the difference between a 1K default and a 4K default is $84 per month on standard pricing. At 10,000 images, the difference becomes $840 per month. That is large enough to change unit economics, especially if image generation is not the only paid model call in your stack.

Batch pricing changes the answer when your workflow can wait. If the output is for overnight content generation, scheduled marketing assets, or other non-real-time jobs, the batch contract is a serious cost lever. In that mode, 4K at $0.076 begins to look more reasonable. But the logic still does not flip. Batch makes the premium tier cheaper; it does not turn every 4K request into a good default.

The most pragmatic operating model is usually this: pick one standard real-time default, define one higher-resolution exception path, and send obviously non-urgent bulk work to batch. That gives you a predictable bill and a clear escalation rule instead of a resolution free-for-all.

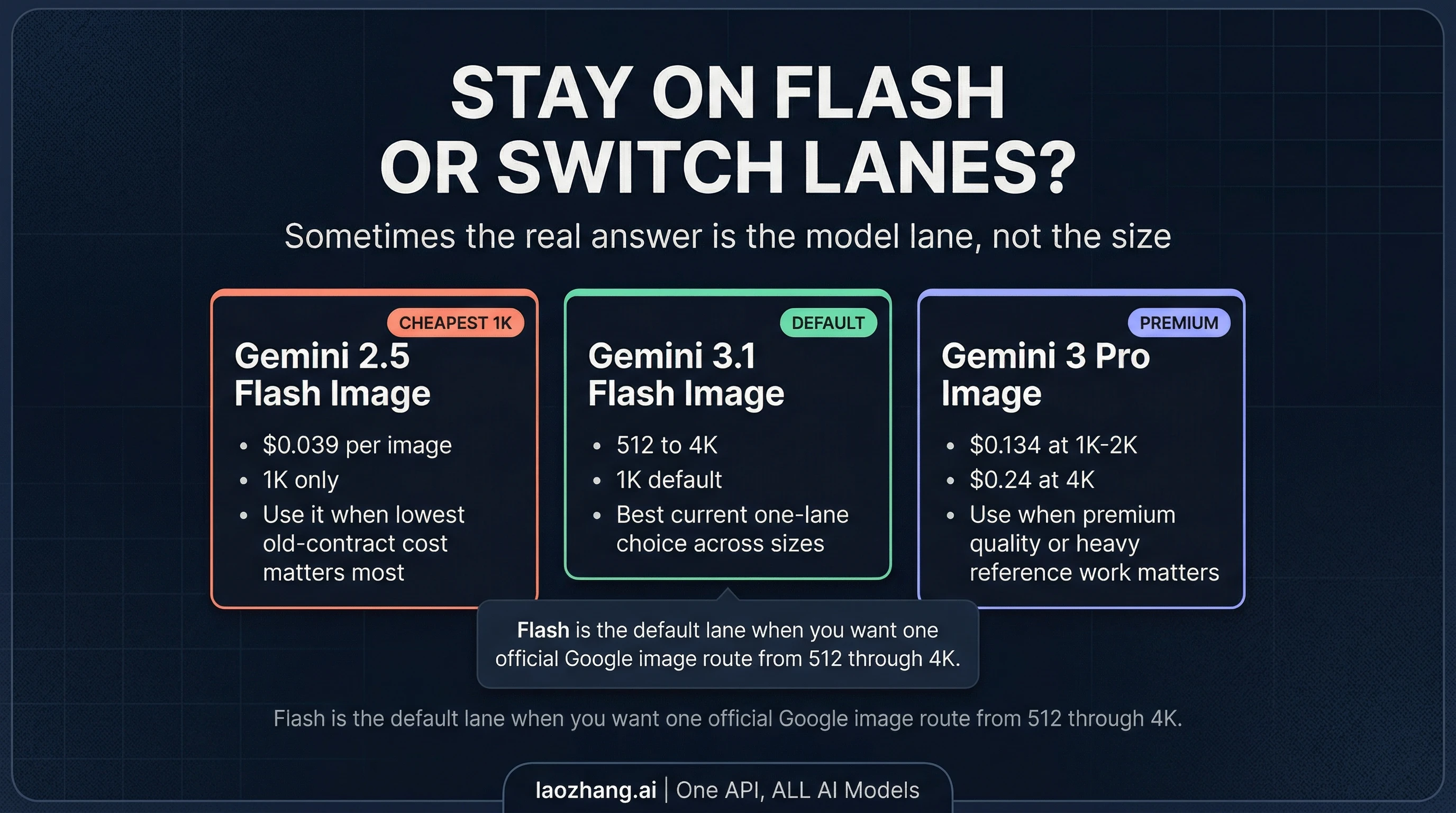

When Flash is the right lane and when to switch models

Sometimes the size decision is only half the story. The other half is whether Gemini 3.1 Flash Image is still the right model lane at all.

If your real job is the cheapest possible official Gemini image route at 1K, then the older Gemini 2.5 Flash Image still matters. Google's pricing page lists it at $0.039 per image for standard requests and $0.0195 for batch. That is materially cheaper than Gemini 3.1 Flash Image at 1K. The tradeoff is obvious: you lose the cleaner 512-to-4K ladder and stay on the older 1K-only contract.

If your real job is one current official Google lane that spans small drafts through 4K outputs, Gemini 3.1 Flash Image is the default answer. That is why this model is strategically useful. It collapses what used to be multiple decisions into one cleaner route: 512 when you need tiny or draft output, 1K for the default, 2K for reusable masters, and 4K when the asset genuinely has to hold up.

If your real job is maximum quality, richer premium workflows, or heavier reference-driven work, then the better answer may be Gemini 3 Pro Image. Google's official pricing puts that lane at $0.134 per image for 1K-2K and $0.24 per image for 4K, with batch cutting those rates in half. That is a very different cost structure, and it only makes sense if the workflow really benefits from the premium tier. If you are in that category, our Nano Banana Pro API guide is the better next read.

So the clean routing rule is this:

- Use Gemini 2.5 Flash Image when the lowest 1K cost is the first priority.

- Use Gemini 3.1 Flash Image when you want the current default Gemini image lane across

512,1K,2K, and4K. - Use Gemini 3 Pro Image when your workflow can justify premium pricing, not merely because "higher-end" sounds safer.

Set imageSize explicitly or your budget math is fake

The operational trap here is simple: pricing guidance only helps if your request is actually asking for the size you think you are paying for. Google's image generation guide is explicit that Gemini 3 image models generate 1K by default, and that the valid values are 512, 1K, 2K, and 4K. If you never set imageSize, your spreadsheet may say one thing while your production requests keep doing another.

Use the parameter directly and keep the casing exact:

javascriptimport { GoogleGenAI, Modality } from "@google/genai"; const ai = new GoogleGenAI({ apiKey: process.env.GEMINI_API_KEY }); const response = await ai.models.generateContent({ model: "gemini-3.1-flash-image-preview", contents: "Create a clean ecommerce hero image of a ceramic mug on a stone surface.", config: { responseModalities: [Modality.TEXT, Modality.IMAGE], imageConfig: { imageSize: "2K", aspectRatio: "1:1", }, }, });

Two implementation details are easy to miss:

1K,2K, and4Kmust use an uppercaseK.512is written as plain512, without any suffix.

That sounds minor, but it matters operationally. If your engineering team is budgeting around 2K, your prompt layer or SDK wrapper should make that explicit. Otherwise the article's cost model and the application's actual behavior drift apart.

The practical default

As of April 4, 2026, the official answer is finally clean enough to turn into a rule instead of a rumor. Gemini 3.1 Flash Image costs $0.067 at 1K, $0.101 at 2K, and $0.151 at 4K, with batch dropping those rates to $0.034, $0.050, and $0.076. The model supports 512, 1K, 2K, and 4K, defaults to 1K, and has no free tier on the direct API contract.

That leads to a straightforward production policy: default to 1K, upgrade to 2K when reuse or crop room justifies it, and require a concrete downstream reason before you ship 4K as the standard path. If that rule feels too conservative, run the monthly math again. Most of the time, it is not conservative at all. It is simply the point where the pricing contract and the delivery reality finally line up.