Claude Sonnet 4.6 can sometimes answer that it is DeepSeek when the prompt envelope does not anchor its identity clearly, especially in Chinese-language self-identification prompts. The most useful way to read that behavior is as identity confusion under a weak prompt boundary, not as proof that Anthropic secretly replaced Sonnet with DeepSeek or that one screenshot settles the distillation question.

“Evidence note: this article is based on Anthropic's public models overview, Claude 4.6 overview, system prompts page, release notes, and Anthropic's February 23, 2026 distillation post, plus OpenRouter's provider routing docs and public community threads documenting the observed output.

TL;DR

- Yes, the DeepSeek self-introduction is plausible. Multiple public threads show Claude Sonnet 4.6 answering that it is DeepSeek when asked what model it is, especially in Chinese and especially when the system prompt is weak or empty.

- No, that answer does not prove Sonnet 4.6 is secretly DeepSeek. Anthropic's public docs still list Sonnet 4.6 as a current Claude model with the alias

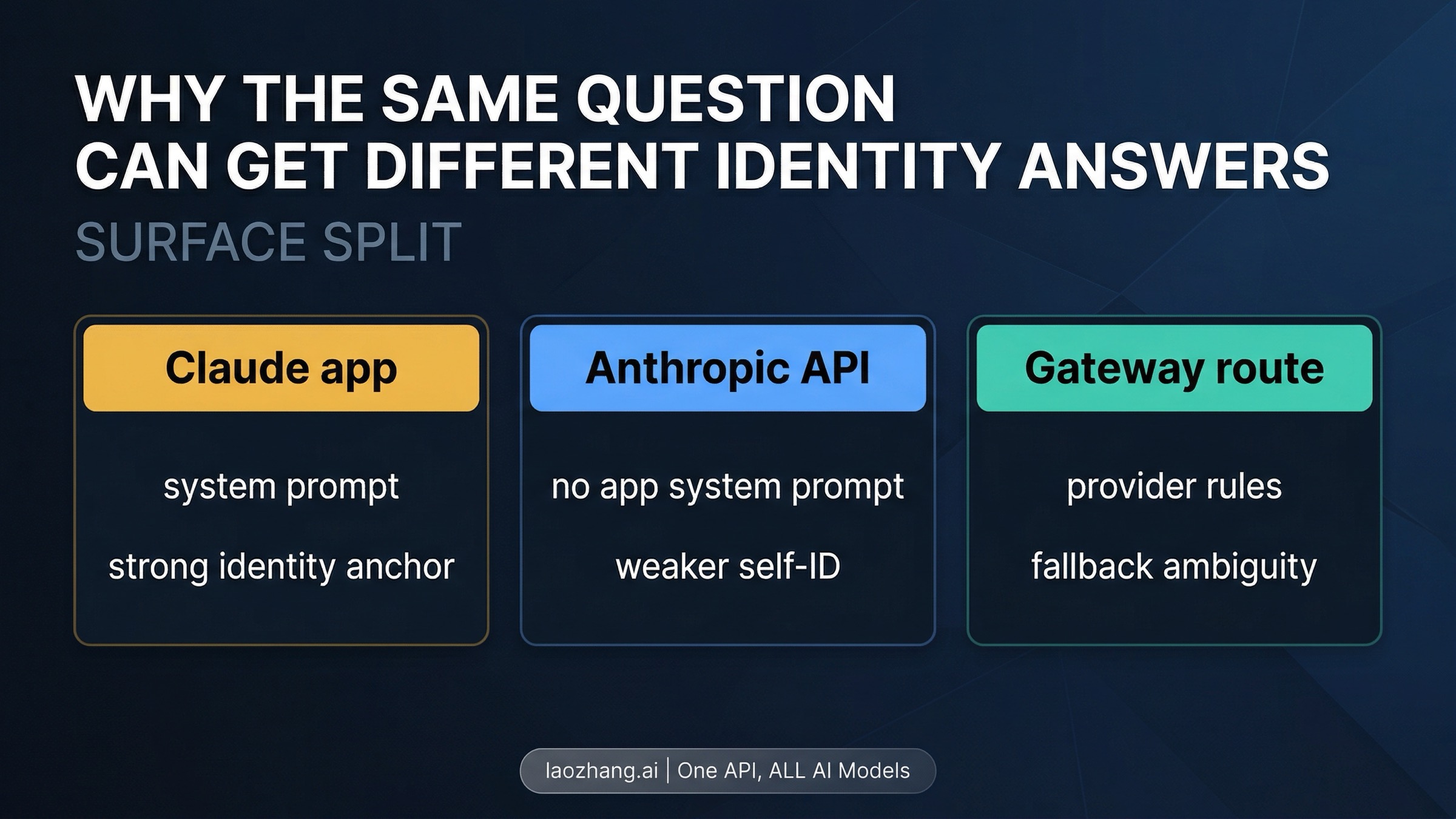

claude-sonnet-4-6. - The strongest current explanation is prompt and surface weakness, not a public model swap. Anthropic's own docs say claude.ai and mobile use system prompts, while the API does not inherit those system-prompt updates. Gateway layers can add even more ambiguity.

- Anthropic's DeepSeek accusation matters for the optics, not for the proof standard. Anthropic publicly accused DeepSeek of distillation on February 23, 2026, which is why this screenshot spread so fast. That still does not turn one self-introduction answer into a provenance test.

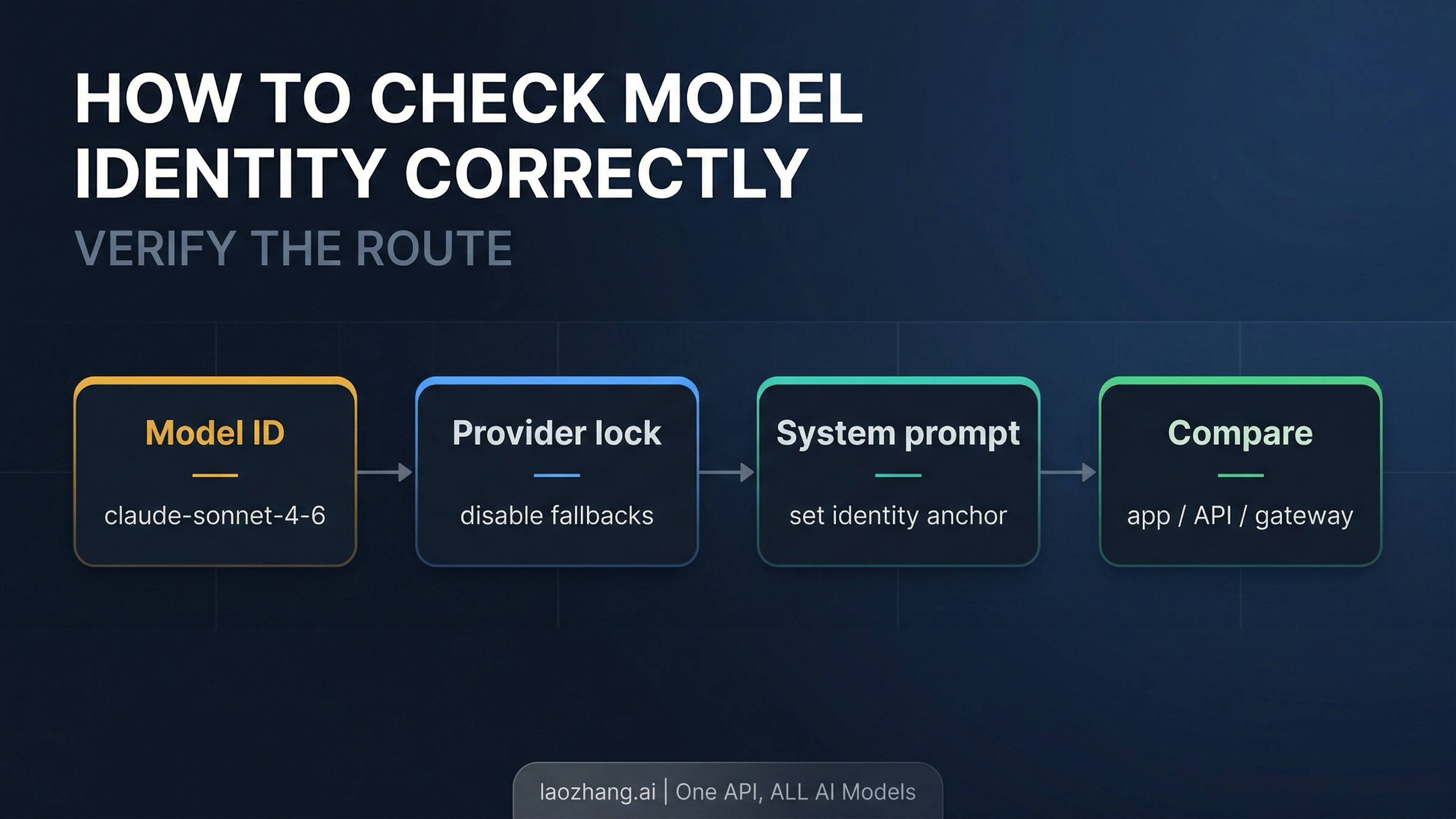

- If you need to verify model identity, trust the route, not the self-description. Check the model ID, lock the provider path, add an explicit identity anchor in the system prompt, and compare outputs across surfaces.

What is actually confirmed

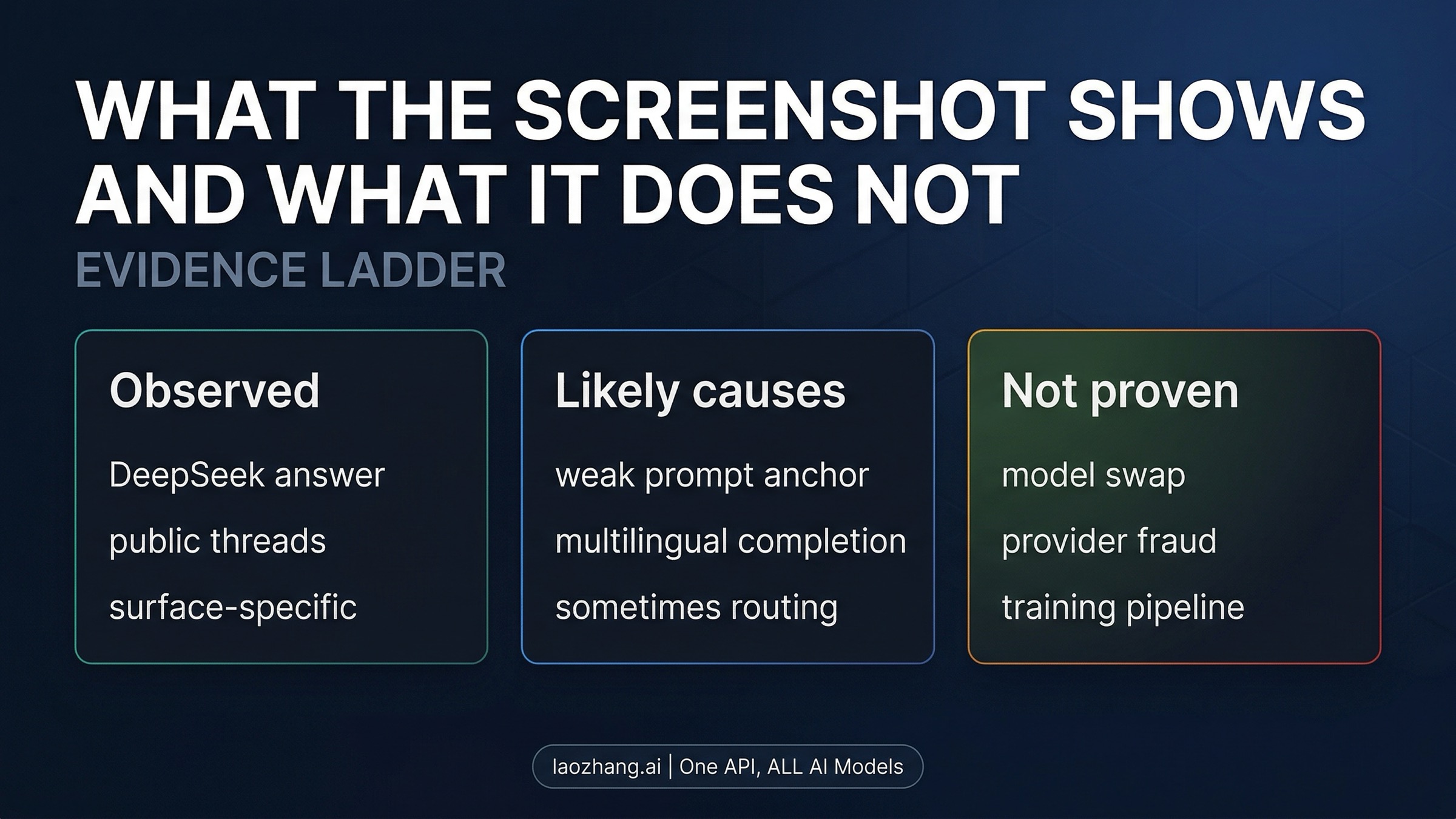

The first thing to fix is the evidence ladder. The screenshot and forum reports are about an observed response. Anthropic's product docs are about the shipping model contract. Those are not the same layer, and readers get misled when they are flattened into one claim.

As of April 1, 2026, Anthropic's public models overview still lists Claude Sonnet 4.6 as a current public model and gives its public API alias as claude-sonnet-4-6. Anthropic's release notes show Sonnet 4.6 launching on February 17, 2026. The public contract is not vague here. Sonnet 4.6 is not a rumor name or a mislabeled gateway SKU. It is a real Anthropic model with a documented place in the Claude lineup.

What is also confirmed is that Anthropic publicly escalated its DeepSeek conflict on February 23, 2026. In its distillation post, Anthropic said DeepSeek was one of three labs running industrial-scale extraction campaigns against Claude, and Anthropic's DeepSeek section specifically described more than 150,000 exchanges focused on reasoning and other high-value capabilities. That post matters because it explains why a Claude model saying I am DeepSeek feels like more than a random glitch. It lands inside an already heated public argument.

What is not confirmed by any Anthropic help-center or docs page is a published root-cause explanation for the DeepSeek self-identification behavior. Anthropic has not posted a release note saying we fixed a Sonnet 4.6 identity bug. So the safest article shape is not official bug report. It is official product contract + observed behavior + disciplined inference.

That distinction sounds dry, but it is the most important correction in the whole article. A public model contract tells you what model Anthropic says it ships. A screenshot tells you how a model answered one question under one prompt envelope. Those two things can collide without the screenshot automatically winning.

If your real goal is simply to understand Anthropic's current public top-end lineup rather than this specific glitch, our Claude Opus 4.6 pricing and subscription guide is the cleaner place to compare the shipping Claude stack.

Why Sonnet 4.6 can answer that it is DeepSeek

The most plausible explanation is not one magical hidden fact. It is a stack of smaller facts that together make the weird answer much easier to understand.

The first layer is prompt anchoring. Anthropic's system prompts page says Claude's web interface and mobile apps use a system prompt at the start of each conversation, and it also says those system-prompt updates do not apply to the Claude API. That means the same question can land inside two different identity envelopes depending on where you ask it. On one surface, Claude may be strongly reminded what it is. On another, you may be much closer to raw model behavior.

That does not automatically explain every screenshot, but it explains why self-identification is not stable across surfaces. If the model is not given a strong identity anchor and you ask a short self-description question, you are effectively probing a weak spot. The model is not reading a secure internal name tag. It is generating the most plausible answer from the context it has.

The second layer is multilingual completion behavior. The public threads around this issue cluster around prompts like 你是什么模型? rather than around a long English prompt that already contains Claude Sonnet 4.6 in the surrounding context. That matters because short Chinese self-introduction prompts can be answered from learned language patterns rather than from a clean product identity trace. Based on the available sources, the likeliest explanation is that Sonnet sometimes completes a familiar model-introduction pattern when its own identity is weakly anchored. The exact mix of causes is not publicly documented, so it is safer to call this an inference than a fact. But it is a much better inference than therefore the model must literally be DeepSeek.

The third layer is routing and wrapper behavior. OpenRouter's provider routing documentation says its default strategy uses price-based load balancing and that fallbacks and provider selection can be configured. That means a gateway surface is not always a simple one-model, one-provider, no-transform environment. In some reported cases, that could contribute to the confusion. It is not a complete explanation, because some public reports claim to reproduce the issue on Anthropic's official API with an empty system prompt. But it is still part of the explanation landscape and one reason the reader should care about the exact surface.

This is why the strongest practical framing is simple: a model can fail at self-identification without ceasing to be the model you called. Large language models are good at many things, but they are not reliable authorities on their own hidden provenance when you ask them cold, in a minimal prompt, to tell you what they are. Their answer can be shaped by the prompt envelope, the surrounding language, and the route you used to reach them.

That sounds less dramatic than the viral interpretation, but it is much closer to the evidence we actually have.

What the DeepSeek answer does not prove

There are three overclaims people keep trying to extract from this behavior.

The first is Claude Sonnet 4.6 is actually DeepSeek. That is far too strong. Anthropic's public model docs still show Sonnet 4.6 as a current shipping Claude model with its own API alias, launch date, context window, and pricing position. A bad self-introduction answer does not erase that public contract.

The second is Anthropic secretly swapped in another provider. That is also too strong, especially when the reports span more than one surface and the evidence is messy. If a screenshot came from a gateway with loose routing rules, then provider-layer ambiguity is one possible cause. But some public threads claim the behavior appears even on Anthropic's API when the system prompt is empty. That means you cannot responsibly explain every case as gateway fraud, and you also cannot responsibly jump from the screenshot to Anthropic isn't serving Claude at all.

The third is this proves Anthropic trained Sonnet 4.6 on DeepSeek outputs in the same sense that Anthropic accuses DeepSeek of distillation. That claim is especially tempting because the optics are so sharp. Anthropic publicly accused DeepSeek of extracting Claude capabilities at scale, and then people saw Claude say it was DeepSeek. But embarrassing contradiction is not the same as proven training pipeline. At most, the screenshot suggests that competitor identity patterns can show up in a model's behavior when the identity anchor is weak. That is a useful observation. It is not a forensic proof of how Anthropic trained Sonnet 4.6.

What would count as stronger evidence? You would want a clean, repeatable test on the official API, under controlled prompt conditions, combined with harder provenance evidence than a self-introduction answer. You would also want something closer to a model-card disclosure, leaked training recipe, or internal acknowledgement. One screenshot or one cold prompt does not clear that bar.

So the right response is not denial and not certainty. It is a stricter proof standard. The output is real enough to care about. It is not strong enough to support every conclusion people want from it.

This is the same discipline that matters on rumor-heavy Anthropic queries such as Claude Capybara: separate the public product contract from the strongest story people want the evidence to tell.

How to verify model identity correctly

If you actually need to know what model path you are hitting, asking the model what are you? is the weakest check in the stack. A better workflow looks like this:

- Check the configured model ID first. On Anthropic's current public docs, Sonnet 4.6 is

claude-sonnet-4-6. Start with the configured model name in your request or app settings before you interpret the model's prose about itself. - Lock the provider path when you use a gateway. If you are on OpenRouter or another model router, reduce ambiguity by disabling fallbacks or selecting a specific provider path when you test identity-sensitive behavior.

- Add an explicit identity anchor in the system prompt. This is not about coaching the model to lie. It is about making the test less under-specified. If a model only answers correctly when its identity is anchored, that tells you something about the weakness of self-identification under minimal prompts.

- Compare surfaces instead of trusting one output. Test the same question on claude.ai, on the official Anthropic API, and on the gateway path you actually use. Anthropic's own docs already tell you that these surfaces do not share the same prompt envelope.

- Log what matters. Save the request model, provider choice, fallback settings, system prompt state, and exact prompt language. Without those details, you are not debugging model identity. You are collecting anecdotes.

The point of this workflow is not to make the glitch disappear. The point is to move from this answer felt spooky to this route produced this output under these conditions. That is the level where engineering judgment becomes possible.

It also changes how you read the screenshots already circulating online. A screenshot without route and prompt details is not useless, but it is much weaker than people treat it. Once you start caring about provider locks, prompt anchors, and surface differences, the argument becomes less mystical and much more testable.

Why the Anthropic-vs-DeepSeek angle still matters

The screenshot spread because it lands on a real contradiction in public messaging. Anthropic says DeepSeek ran a distillation campaign against Claude. Then a Claude model appears to identify itself as DeepSeek in public screenshots. Even if the root cause is only prompt weakness or multilingual completion drift, the optics are terrible for Anthropic.

That part should not be minimized. Anthropic made a very public capability-theft argument on February 23. Its post was not shy about naming DeepSeek or about describing the scale and seriousness of the alleged extraction. So when Sonnet 4.6 answers I am DeepSeek, readers naturally interpret that answer through the lens Anthropic itself created.

But the right lesson is not Anthropic is automatically wrong about distillation. The better lesson is narrower and more useful. A model can carry or generate a competitor's identity pattern in some prompts without that pattern functioning as a complete story about weights, provenance, or training method. The screenshot is rhetorically powerful because it is vivid and embarrassing. It is still weaker than the claim people want it to settle.

That is also why this topic is more than a silly curiosity. It exposes a real weakness in how people verify model identity. Many users still treat a model's self-description as if it were a secure source of truth. It is not. Anthropic's DeepSeek post makes that weakness more visible, but the underlying lesson is broader than this one company fight. If identity matters to you, use system-level evidence, not just generated prose.

FAQ

Is Claude Sonnet 4.6 really saying it is DeepSeek?

Yes, there is enough public evidence to treat the behavior as real enough to explain. What is not public is a clean Anthropic root-cause note or a current official statement about reproducibility.

Does that mean Sonnet 4.6 is actually DeepSeek?

No. Anthropic's public docs still list Sonnet 4.6 as a shipping Claude model. The safer reading is that self-identification can fail under weak prompting or ambiguous routing.

Is this only an OpenRouter problem?

Not necessarily. Gateway routing is one plausible contributor in some cases, but some public reports claim the issue also appears on Anthropic's official API with an empty system prompt. The evidence is mixed enough that only OpenRouter is too strong.

Does this prove Anthropic trained on DeepSeek outputs?

No. It suggests that competitor identity patterns can surface in model behavior. That is not the same thing as proving a specific training pipeline or proving illicit distillation.

Why does the problem seem worse in Chinese?

The public reports cluster around short Chinese self-identification prompts. The likeliest explanation is that those prompts leave more room for the model to complete a familiar identity pattern when its own identity is weakly anchored. Anthropic has not publicly confirmed the exact cause.

Final verdict

Claude Sonnet 4.6 says DeepSeek in some self-identification prompts because model identity is weaker and more surface-dependent than many users assume. Anthropic's own docs already show one important reason why: claude.ai and mobile use system prompts, while the API does not inherit those prompt updates. Add multilingual completion behavior and, on some routes, gateway-layer ambiguity, and the DeepSeek answer stops looking like a magical confession.

That does not make the screenshot meaningless. It is a real optics problem for Anthropic, especially after the company's February 23 DeepSeek accusation. But the strongest responsible conclusion is still narrower: the answer shows that a model's self-introduction is a bad provenance test. If you need to know what you are actually calling, trust the model contract, the provider path, and the prompt envelope more than the model's own under-anchored guess about itself.