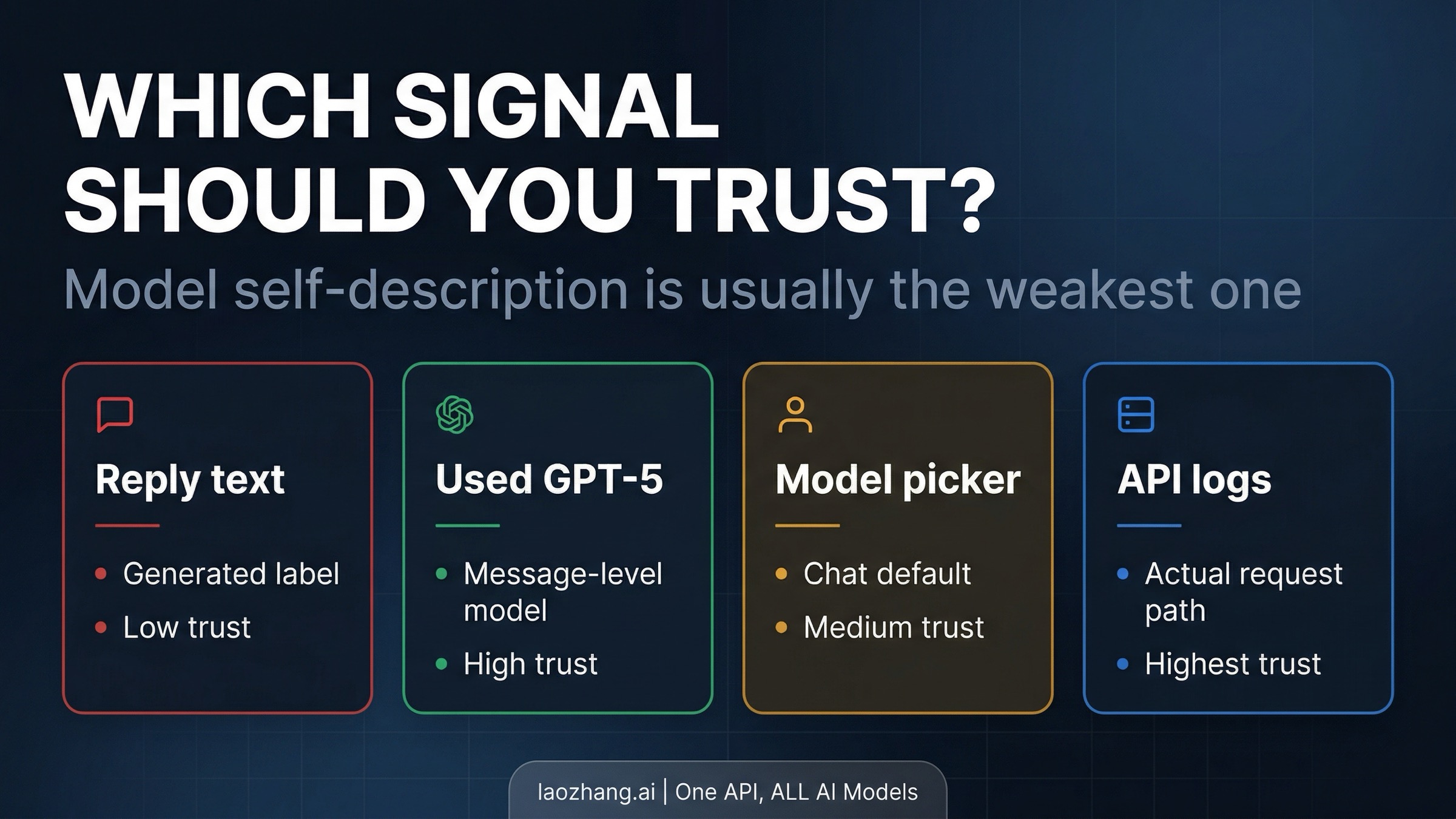

GPT-5 saying "GPT-4" does not, by itself, mean ChatGPT secretly dropped you back onto GPT-4. OpenAI now says this directly: self-references inside a reply are generated text and can be mistaken or generic. If the UI shows a per-message note such as Used GPT-5, that annotation is the source of truth for that message, not the sentence where the assistant describes itself.

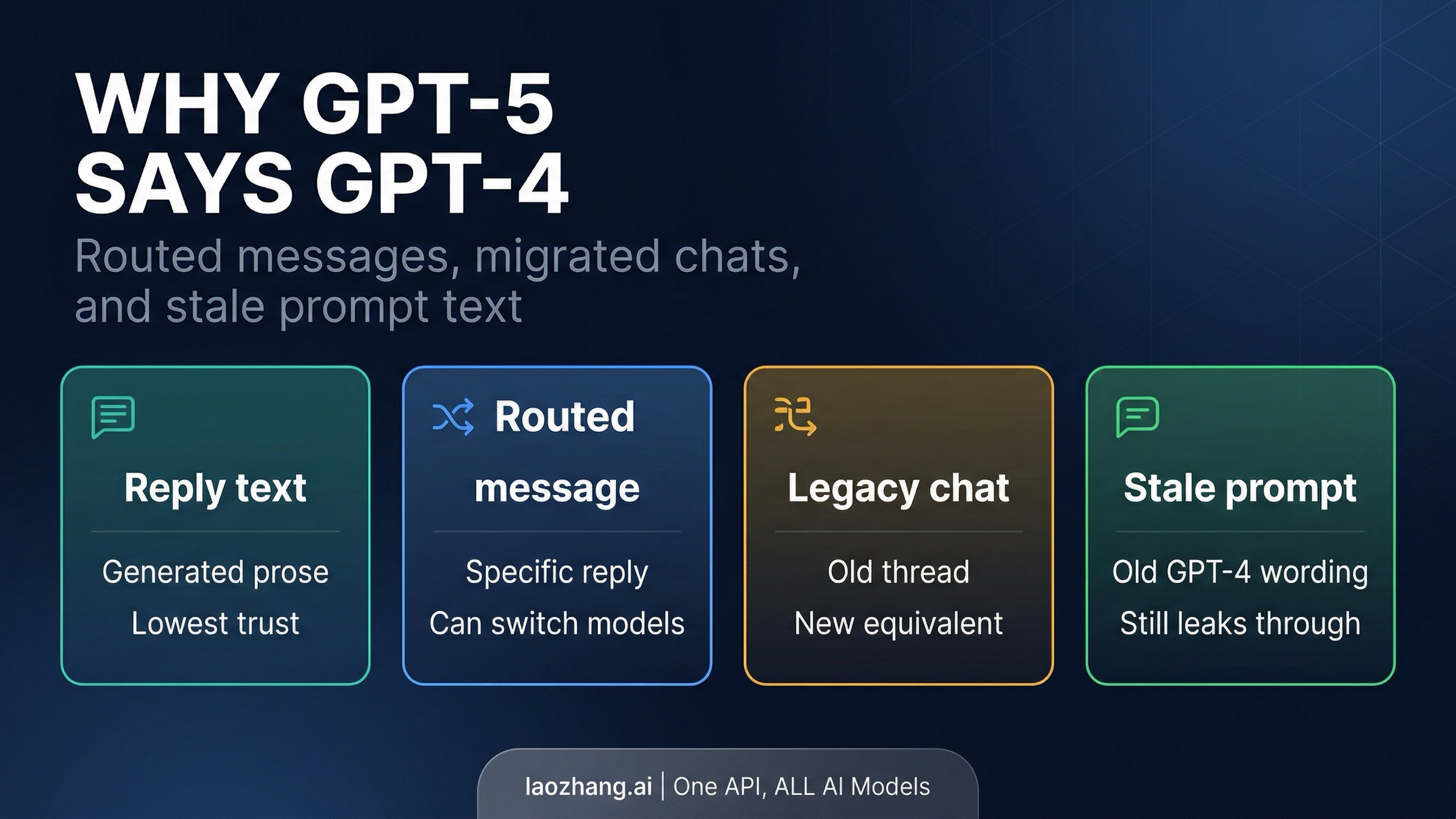

This confusion shows up more often now because ChatGPT is no longer a simple one-label, one-model surface. The picker can show your default chat model, a specific message can be routed to a different model, older conversations can continue on newer equivalents after model retirements, and stale Custom GPT or custom-instruction text can keep older names alive. When those layers disagree, the assistant's own line about being "GPT-4" is usually the weakest signal in the stack.

This article was verified against current OpenAI Help Center pages, ChatGPT release notes, the GPT-5 System Card, and current OpenAI developer guidance on April 1, 2026.

Quick Answer: Which Signal Should You Trust?

If you only need the short version, use this hierarchy:

| Signal | What it tells you | Trust level | When to use it |

|---|---|---|---|

| The assistant saying "I am GPT-4" or similar | Generated self-description inside the reply | Low | Treat it as a clue at most, never as final proof |

Used GPT-5 or similar message annotation | Which model handled that specific message | High in ChatGPT | Use this first when the UI provides it |

| The model picker at the top of the chat | Your default model for the conversation | Medium | Useful for chat defaults, not always decisive for one message |

| API request config and application logs | The model your app actually called | Highest for API use | Use this instead of the model's prose when building with the API |

The key correction is simple. In ChatGPT, OpenAI treats the per-message annotation as authoritative and the self-reference in the reply as fallible. In the API, the authoritative signal is your request and your logs. The model can still say the wrong thing about itself if the prompt, the conversation state, or a stale instruction nudges it there.

Why This Happens Now: GPT-5 in ChatGPT Is a Routed System

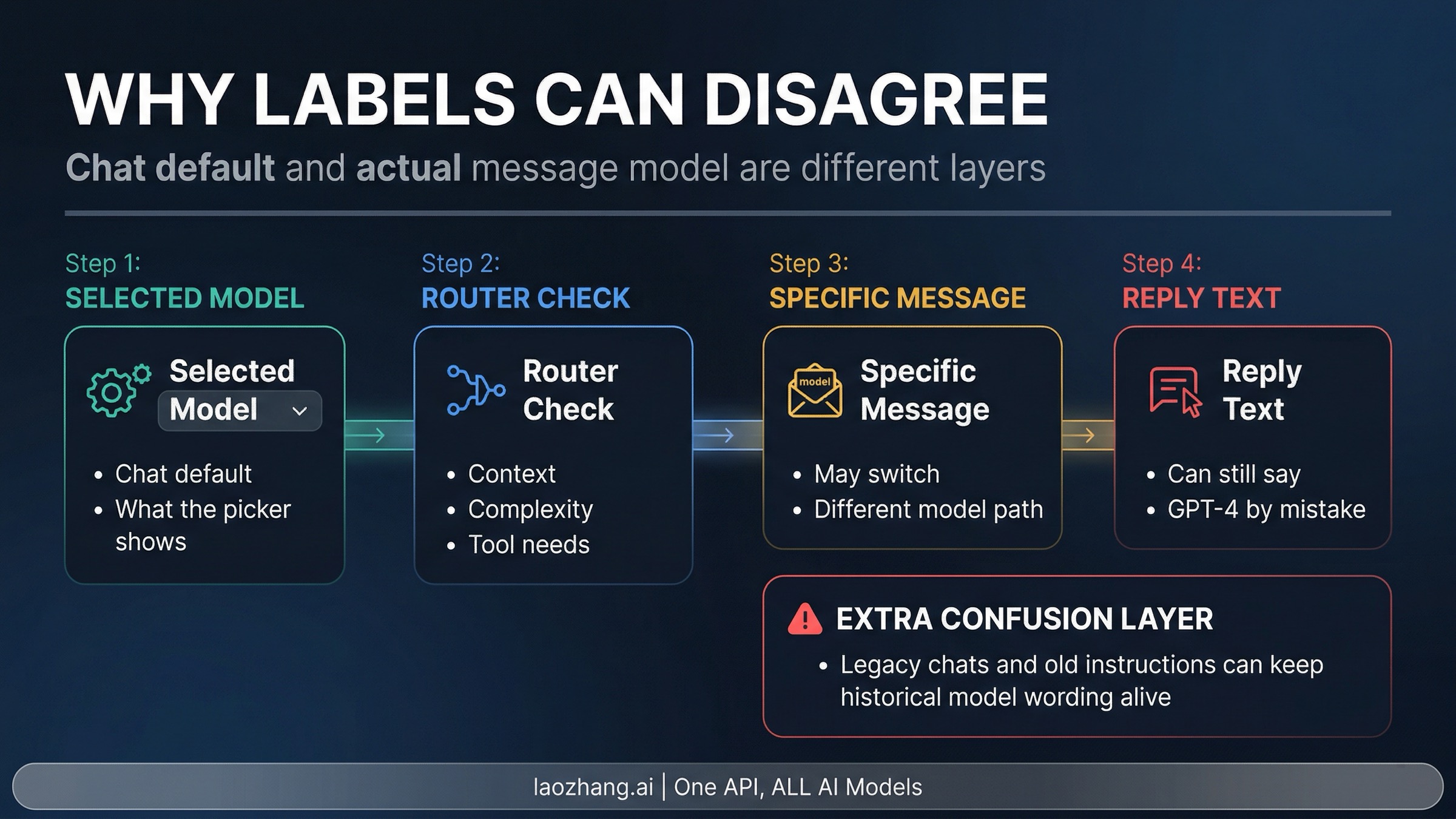

OpenAI's own product language explains most of the confusion. The GPT-5 System Card describes GPT-5 as a unified system with a real-time router that decides which model path to use based on conversation type, complexity, tool needs, and explicit intent. ChatGPT release notes described GPT-5 in ChatGPT as a single auto-switching system, then later exposed more visible controls such as automatic switching between Instant and Thinking and access to legacy models for eligible users.

That means "the model" in ChatGPT now lives at more than one layer. There is the default model you chose for the chat. There is the model that handled a specific message. There is the current mapping for older conversations after OpenAI retires or replaces a model. And there is whatever text the assistant generates when it talks about itself. Those four layers often line up. When they do not, the contradiction feels like a bug even when the platform is behaving exactly as documented.

OpenAI's help article about the Used GPT-5 annotation makes this even clearer. The company says prompts are routed on a per-message basis, your selected model remains the default for the chat, and the system may switch for specific messages. In other words, the picker and the actual answering model can diverge temporarily without changing the whole conversation.

That routed design is why you can see a surface-level contradiction such as this:

- the picker still shows your chosen default

- a specific reply gets a

Used GPT-5note - the assistant still says "as a GPT-4 model" in the text

All three can coexist. They describe different layers of the same interaction, and the sentence inside the reply is the least reliable of the three.

The Three Main Root-Cause Buckets

Most real cases fall into one of three buckets. The fix depends on which one you are actually dealing with.

1. A routed message answered with a different model. This is the cleanest and most explicitly documented case. OpenAI says some messages can be routed to another model on a per-message basis. Sensitive-topic handling is the clearest public example, but the GPT-5 System Card shows a broader routing logic built around context, complexity, and tool needs. If the UI tells you a message used GPT-5, trust that annotation over the assistant's self-description.

2. You are in a legacy chat or legacy-model surface that was migrated forward. OpenAI retired multiple older ChatGPT models in 2026 and mapped existing conversations onto newer equivalents. Release notes say conversations that used GPT-5.1 automatically continue on GPT-5.3 Instant or GPT-5.4 Thinking / Pro equivalents. The retirement notice for GPT-4o and other models says conversations and projects default to GPT-5.3 Instant and GPT-5.4 equivalents going forward. That matters because old threads carry history. If the conversation began under one model name and later moved under a new backend route, the text behavior inside the chat may lag behind the surface-level model update.

3. Stale instructions still mention GPT-4. OpenAI does not publish a help article that says "this is why your GPT-5 chat still says GPT-4," so this part should be treated as an inference, not a verbatim OpenAI statement. But it is a strong practical inference from two official facts. First, OpenAI says personality and custom instructions now apply across all chats immediately, not only new ones. Second, OpenAI's GPT-5 prompting guidance says GPT-5 follows instructions very closely and can be especially sensitive to vague or contradictory prompt text. Put those together and a common failure mode becomes obvious: if your Custom GPT prompt, system instruction, saved template, or personalization layer still says "You are GPT-4" or was written around a GPT-4 identity, GPT-5 can keep repeating that label long after the backend model changed.

This third bucket is also the one that makes the problem feel most persistent. A routed message comes and goes. A migrated legacy thread usually becomes clear once you check dates and settings. But stale prompt text keeps reintroducing the wrong label until you remove it.

How To Verify What Actually Answered

The right verification method depends on the surface.

In ChatGPT, start with what OpenAI itself says to trust. If the reply has a note like Used GPT-5, use that as the message-level source of truth. Then compare it with the model picker, which tells you the current default for the conversation. If the two disagree, that does not automatically mean something is broken. It usually means the system handled one message differently from the default chat setting.

For older threads, read the current release notes and retirement notices before drawing conclusions from the conversation history alone. OpenAI has already documented automatic continuation from older models to new equivalents. If a chat began months ago, the historical label in your head may no longer match the current backend path.

If you are using a Custom GPT, inspect the builder configuration and the instruction text rather than asking the assistant to certify its own identity. A wrong answer to "which model are you?" tells you very little on its own. A stale line in the instructions tells you much more.

For the API, do not import ChatGPT intuition wholesale. ChatGPT routing behavior and API behavior are not the same thing. In API use, your request configuration and your logs are authoritative. If your application sent gpt-5.2, the assistant saying "I am GPT-4" is usually a prompt or output issue, not proof that the API secretly served a different model.

That difference matters because many builders test prompts in ChatGPT, then assume the same model-identity logic applies in production. It often does not. ChatGPT can route messages inside the product experience. Your API call is the model call you asked for unless your own infrastructure changed it upstream.

How To Stop The Wrong Name From Showing Up

Once you know which bucket you are in, the repair path is straightforward.

- Start a fresh chat if you are testing model identity seriously. Old conversations carry too much baggage: previous instructions, legacy routing, older context, and migrated model state.

- Check whether automatic switching or legacy-model access is enabled in the ChatGPT model picker settings. Those controls are useful, but they also make it easier to misread one visible label as the whole story.

- Audit your Custom GPT instructions, saved prompts, and custom instructions for stale phrases such as

You are GPT-4,As GPT-4, or older product-specific identity lines. This is the most common persistent cause when the wrong label keeps appearing across multiple replies. - If you are building with the API, verify the request path in logs rather than interrogating the model in natural language. The model can describe itself incorrectly; your request metadata usually cannot.

One practical habit prevents most future confusion: stop using the answer's self-description as your model-verification step. That habit made more sense in older, simpler model surfaces. It is unreliable in a routed ChatGPT stack and a poor debugging method for API work.

Does It Mean You Are Really On GPT-4?

Usually, no. Not by itself.

If the only evidence you have is that the reply said "GPT-4," that is weak evidence. OpenAI explicitly says those self-references can be wrong or generic. If the UI annotation says Used GPT-5, that is stronger evidence that the message was actually handled by GPT-5. If your API logs show a GPT-5 model, that is stronger evidence than the model's own prose. The wrong label becomes important only when it matches other stronger signals, such as stale builder instructions, a legacy surface you knowingly selected, or logs that show a different model request than you expected.

Where it does matter is in evaluation work. If you are comparing outputs, verifying rate-limit behavior, or shipping a Custom GPT to other users, you do not want identity drift in the prompt layer. Even when the backend model is correct, stale self-labeling makes the product feel unreliable and can confuse users about what they are testing.

The Practical Rule

When GPT-5 says GPT-4, assume the wording is wrong before you assume the backend is wrong.

Then verify the message using the strongest signal available for that surface: ChatGPT annotations and settings inside ChatGPT, request metadata and logs in the API, and builder instructions in Custom GPTs. That one rule turns a vague naming mystery into a short diagnostic workflow, which is exactly what this topic needs.